Zehui Xiong

Sherman

Edge Intelligence Optimization for Large Language Model Inference with Batching and Quantization

May 12, 2024

Generative Artificial Intelligence (GAI) is taking the world by storm with its unparalleled content creation ability. Large Language Models (LLMs) are at the forefront of this movement. However, the significant resource demands of LLMs often require cloud hosting, which raises issues regarding privacy, latency, and usage limitations. Although edge intelligence has long been utilized to solve these challenges by enabling real-time AI computation on ubiquitous edge resources close to data sources, most research has focused on traditional AI models and has left a gap in addressing the unique characteristics of LLM inference, such as considerable model size, auto-regressive processes, and self-attention mechanisms. In this paper, we present an edge intelligence optimization problem tailored for LLM inference. Specifically, with the deployment of the batching technique and model quantization on resource-limited edge devices, we formulate an inference model for transformer decoder-based LLMs. Furthermore, our approach aims to maximize the inference throughput via batch scheduling and joint allocation of communication and computation resources, while also considering edge resource constraints and varying user requirements of latency and accuracy. To address this NP-hard problem, we develop an optimal Depth-First Tree-Searching algorithm with online tree-Pruning (DFTSP) that operates within a feasible time complexity. Simulation results indicate that DFTSP surpasses other batching benchmarks in throughput across diverse user settings and quantization techniques, and it reduces time complexity by over 45% compared to the brute-force searching method.
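As a rough illustration of depth-first tree search with pruning applied to batch selection, the toy sketch below picks the largest feasible batch of requests. It is not the paper's DFTSP: the request tuple, the `batch_latency` model, its constants, and the function names are all assumptions made for this example, and the objective (batch size) is only a stand-in for throughput.

```python
from typing import List, Tuple

# Hypothetical request model: (memory units needed, latency limit in seconds).
Request = Tuple[int, float]


def batch_latency(size: int) -> float:
    """Assumed latency model: a fixed cost plus a per-request cost."""
    return 0.5 + 0.25 * size


def largest_feasible_batch(requests: List[Request], memory_budget: int) -> List[int]:
    """Return indices of a largest batch that fits memory and every member's latency limit."""
    best: List[int] = []

    def feasible(chosen: List[int]) -> bool:
        memory = sum(requests[i][0] for i in chosen)
        tightest_limit = min(requests[i][1] for i in chosen)
        return memory <= memory_budget and batch_latency(len(chosen)) <= tightest_limit

    def dfs(idx: int, chosen: List[int]) -> None:
        nonlocal best
        # Bound pruning: even taking every remaining request cannot beat the best batch.
        if len(chosen) + (len(requests) - idx) <= len(best):
            return
        if idx == len(requests):
            best = list(chosen)  # strictly larger than the previous best, by the bound above
            return
        # Include branch. Feasibility pruning is safe because adding requests
        # only raises memory use and latency and only tightens the limit.
        chosen.append(idx)
        if feasible(chosen):
            dfs(idx + 1, chosen)
        chosen.pop()
        # Exclude branch.
        dfs(idx + 1, chosen)

    dfs(0, [])
    return best


example_requests = [(4, 1.0), (3, 2.0), (2, 1.5), (5, 0.6)]
example_batch = largest_feasible_batch(example_requests, memory_budget=9)
```

The two pruning rules cut subtrees that are either already infeasible or provably no better than the incumbent, which is the general reason such a search can stay exact while visiting fewer nodes than brute force.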

Integration of Mixture of Experts and Multimodal Generative AI in Internet of Vehicles: A Survey

Apr 25, 2024

Generative AI (GAI) can enhance the cognitive, reasoning, and planning capabilities of intelligent modules in the Internet of Vehicles (IoV) by synthesizing augmented datasets, completing sensor data, and making sequential decisions. In addition, the mixture of experts (MoE) can enable the distributed and collaborative execution of AI models without performance degradation between connected vehicles. In this survey, we explore the integration of MoE and GAI to enable Artificial General Intelligence in IoV, which can enable the realization of full autonomy for IoV with minimal human supervision and applicability in a wide range of mobility scenarios, including environment monitoring, traffic management, and autonomous driving. In particular, we present the fundamentals of GAI, MoE, and their interplay applications in IoV. Furthermore, we discuss the potential integration of MoE and GAI in IoV, including distributed perception and monitoring, collaborative decision-making and planning, and generative modeling and simulation. Finally, we present several potential research directions for facilitating the integration.

Generative Artificial Intelligence Assisted Wireless Sensing: Human Flow Detection in Practical Communication Environments

Apr 22, 2024

Groundbreaking applications such as ChatGPT have heightened research interest in generative artificial intelligence (GAI). Essentially, GAI excels not only in content generation but also in signal processing, offering support for wireless sensing. Hence, we introduce a novel GAI-assisted human flow detection system (G-HFD). Specifically, G-HFD first uses channel state information (CSI) to estimate the velocity and acceleration of the propagation path length change of the human-induced reflection (HIR). Then, given the strong inference ability of the diffusion model, we propose a unified weighted conditional diffusion model (UW-CDM) to denoise the estimation results, enabling the detection of the number of targets. Next, we use the CSI obtained by a uniform linear array with wavelength spacing to estimate the HIR's time of flight and direction of arrival (DoA). In this process, UW-CDM solves the problem of the ambiguous DoA spectrum, ensuring accurate DoA estimation. Finally, through clustering, G-HFD determines the number of subflows and the number of targets in each subflow, i.e., the subflow size. The evaluation based on practical downlink communication signals shows that G-HFD's subflow size detection accuracy can reach 91%. This validates its effectiveness and underscores the significant potential of GAI in the context of wireless sensing.

Interactive Generative AI Agents for Satellite Networks through a Mixture of Experts Transmission

Apr 14, 2024

In response to the needs of 6G global communications, satellite communication networks have emerged as a key solution. However, the large-scale development of satellite communication networks is constrained by complex system models that are challenging to formulate for massive numbers of users. Moreover, transmission interference between satellites and users seriously affects communication performance. To solve these problems, this paper develops generative artificial intelligence (AI) agents for model formulation and then applies a mixture of experts (MoE) approach to design transmission strategies. Specifically, we leverage large language models (LLMs) to build an interactive modeling paradigm and utilize retrieval-augmented generation (RAG) to extract satellite expert knowledge that supports mathematical modeling. Afterward, by integrating the expertise of multiple specialized components, we propose an MoE-proximal policy optimization (PPO) approach to solve the formulated problem. Each expert optimizes the variables at which it excels through specialized training on its own network, and the gating network then aggregates the experts' outputs to perform joint optimization. The simulation results validate the accuracy and effectiveness of employing a generative agent for problem formulation. Furthermore, the superiority of the proposed MoE-PPO approach over other benchmarks is confirmed in solving the formulated problem. The adaptability of MoE-PPO to various customized modeling problems has also been demonstrated.

Agent-driven Generative Semantic Communication for Remote Surveillance

Apr 10, 2024

In the era of 6G, featuring compelling visions of intelligent transportation systems and digital twins, remote surveillance is poised to become a ubiquitous practice. The substantial data volume and frequent updates present challenges in wireless networks. To address this, we propose a novel agent-driven generative semantic communication (A-GSC) framework based on reinforcement learning. In contrast to the existing research on semantic communication (SemCom), which mainly focuses on semantic compression or semantic sampling, we seamlessly cascade both together by jointly considering the intrinsic attributes of source information and the contextual information regarding the task. Notably, the introduction of generative artificial intelligence (GAI) enables the independent design of semantic encoders and decoders. In this work, we develop an agent-assisted semantic encoder leveraging the knowledge-based soft actor-critic algorithm, which can track the semantic changes, channel condition, and sampling intervals, so as to perform adaptive semantic sampling. Accordingly, we design a semantic decoder with both predictive and generative capabilities, which consists of two tailored modules. Moreover, the effectiveness of the designed models has been verified based on the dataset generated from CDNet2014, and the performance gain of the overall A-GSC framework in both energy saving and reconstruction accuracy has been demonstrated.

Harnessing the Power of AI-Generated Content for Semantic Communication

Apr 10, 2024

Semantic Communication (SemCom) is envisaged as the next-generation paradigm to address challenges stemming from the conflicts between the increasing volume of transmission data and the scarcity of spectrum resources. However, existing SemCom systems face drawbacks, such as low explainability, modality rigidity, and inadequate reconstruction functionality. Recognizing the transformative capabilities of AI-generated content (AIGC) technologies in content generation, this paper explores a pioneering approach by integrating them into SemCom to address the aforementioned challenges. We employ a three-layer model to illustrate the proposed AIGC-assisted SemCom (AIGC-SCM) architecture, emphasizing its clear deviation from existing SemCom. Grounded in this model, we investigate various AIGC technologies with the potential to augment SemCom's performance. In alignment with SemCom's goal of conveying semantic meanings, we also introduce new evaluation methods for our AIGC-SCM system. Subsequently, we explore communication scenarios where our proposed AIGC-SCM can realize its potential. For practical implementation, we construct a detailed integration workflow and conduct a case study in a virtual reality image transmission scenario. The results demonstrate our ability to maintain a high degree of alignment between the reconstructed content and the original source information, while substantially minimizing the data volume required for transmission. These findings pave the way for further enhancements in communication efficiency and the improvement of Quality of Service. Finally, we present future directions for AIGC-SCM studies.

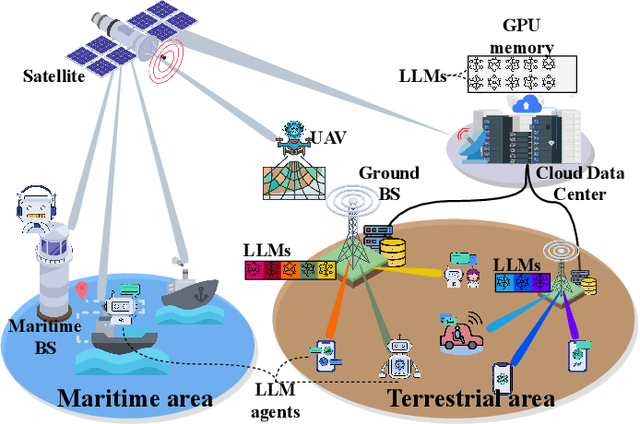

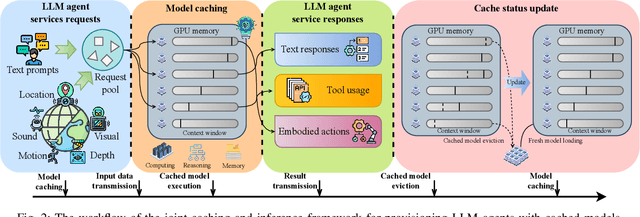

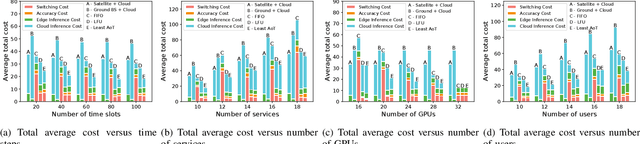

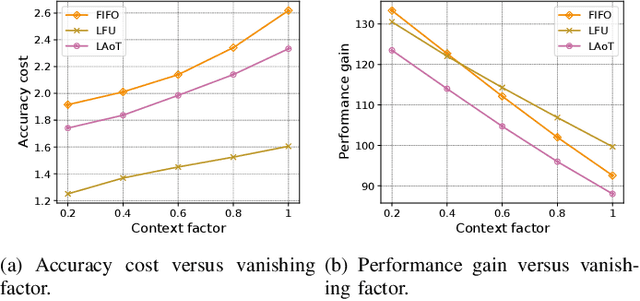

Cached Model-as-a-Resource: Provisioning Large Language Model Agents for Edge Intelligence in Space-air-ground Integrated Networks

Mar 09, 2024

Space-air-ground integrated networks (SAGINs) enable worldwide network coverage beyond geographical limitations for users to access ubiquitous intelligence services. Facing global coverage and complex environments in SAGINs, edge intelligence can provision AI agents based on large language models (LLMs) for users via edge servers at ground base stations (BSs) or cloud data centers relayed by satellites. As LLMs with billions of parameters are pre-trained on vast datasets, LLM agents have few-shot learning capabilities, e.g., chain-of-thought (CoT) prompting for complex tasks, which are challenged by limited resources in SAGINs. In this paper, we propose a joint caching and inference framework for edge intelligence to provision sustainable and ubiquitous LLM agents in SAGINs. We introduce "cached model-as-a-resource" for offering LLMs with limited context windows and propose a novel optimization framework, i.e., joint model caching and inference, to utilize cached model resources for provisioning LLM agent services along with communication, computing, and storage resources. We design "age of thought" (AoT) considering the CoT prompting of LLMs, and propose the least AoT cached model replacement algorithm for optimizing the provisioning cost. We propose a deep Q-network-based modified second-bid (DQMSB) auction to incentivize these network operators, which can enhance allocation efficiency while guaranteeing strategy-proofness and freedom from adverse selection.

Generative AI for Unmanned Vehicle Swarms: Challenges, Applications and Opportunities

Feb 28, 2024

With recent advances in artificial intelligence (AI) and robotics, unmanned vehicle swarms have received great attention from both academia and industry due to their potential to provide services that are difficult and dangerous for humans to perform. However, learning and coordinating movements and actions for a large number of unmanned vehicles in complex and dynamic environments introduce significant challenges to conventional AI methods. Generative AI (GAI), with its capabilities in complex data feature extraction, transformation, and enhancement, offers great potential in solving these challenges of unmanned vehicle swarms. To that end, this paper aims to provide a comprehensive survey on applications, challenges, and opportunities of GAI in unmanned vehicle swarms. Specifically, we first present an overview of unmanned vehicles and unmanned vehicle swarms as well as their use cases and existing issues. Then, an in-depth background of various GAI techniques, together with their capabilities in enhancing unmanned vehicle swarms, is provided. After that, we present a comprehensive review on the applications and challenges of GAI in unmanned vehicle swarms with various insights and discussions. Finally, we highlight open issues of GAI in unmanned vehicle swarms and discuss potential research directions.

Mixture of Experts for Network Optimization: A Large Language Model-enabled Approach

Feb 15, 2024

Optimizing various wireless user tasks poses a significant challenge for networking systems because of the expanding range of user requirements. Despite advancements in Deep Reinforcement Learning (DRL), the need for customized optimization tasks for individual users complicates developing and applying numerous DRL models, which leads to substantial computation resource and energy consumption and can produce inconsistent outcomes. To address this issue, we propose a novel approach utilizing a Mixture of Experts (MoE) framework, augmented with Large Language Models (LLMs), to analyze user objectives and constraints effectively, select specialized DRL experts, and weigh each decision from the participating experts. Specifically, we develop a gate network to oversee the expert models, allowing a collective of experts to tackle a wide array of new tasks. Furthermore, we innovatively substitute the traditional gate network with an LLM, leveraging its advanced reasoning capabilities to manage expert model selection for joint decisions. Our proposed method reduces the need to train new DRL models for each unique optimization problem, decreasing energy consumption and AI model implementation costs. The LLM-enabled MoE approach is validated through a general maze navigation task and a specific network service provider utility maximization task, demonstrating its effectiveness and practical applicability in optimizing complex networking systems.
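To make the idea of a gate that selects experts and weighs their decisions concrete, here is a minimal sketch of generic top-k soft gating. It is an assumption-laden toy, not the paper's method: the gate scores are plain numbers standing in for whatever a gate network or an LLM would produce, the experts are simple functions rather than DRL models, and the names `softmax` and `moe_decision` are made up for this example.

```python
import math
from typing import Callable, List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over raw gate scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_decision(
    state: float,
    experts: Sequence[Callable[[float], float]],
    gate_scores: Sequence[float],
    top_k: int,
) -> float:
    """Combine the outputs of the top_k highest-weighted experts.

    gate_scores is a hypothetical stand-in for the gate's output; in an
    LLM-enabled setup these preferences would come from the model instead.
    """
    weights = softmax(gate_scores)
    # Select the experts with the largest gate weights.
    selected = sorted(range(len(experts)), key=lambda i: weights[i], reverse=True)[:top_k]
    # Renormalize so the selected experts' weights sum to one.
    norm = sum(weights[i] for i in selected)
    return sum(weights[i] / norm * experts[i](state) for i in selected)


toy_experts = [lambda s: s + 1.0, lambda s: 2.0 * s, lambda s: -s]
joint_action = moe_decision(3.0, toy_experts, gate_scores=[0.0, 0.0, -100.0], top_k=2)
```

Because only the selected experts are evaluated and combined, supporting a new task in this scheme means changing the gate's scores rather than training another expert.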

Deep Reinforcement Learning Empowered Activity-Aware Dynamic Health Monitoring Systems

Jan 19, 2024

In smart healthcare, health monitoring utilizes diverse tools and technologies to analyze patients' real-time biosignal data, enabling immediate actions and interventions. Existing monitoring approaches were designed on the premise that medical devices track several health metrics concurrently, tailored to their designated functional scope. This means that they report all relevant health values within that scope, which can result in excess resource use and the gathering of extraneous data due to monitoring irrelevant health metrics. In this context, we propose the Dynamic Activity-Aware Health Monitoring strategy (DActAHM), a novel framework based on Deep Reinforcement Learning (DRL) and the SlowFast Model that strikes a balance between optimal monitoring performance and cost efficiency and ensures precise monitoring based on users' activities. Specifically, with the SlowFast Model, DActAHM efficiently identifies individual activities and captures these results for enhanced processing. Subsequently, DActAHM refines health metric monitoring in response to the identified activity by incorporating a DRL framework. Extensive experiments comparing DActAHM against three state-of-the-art approaches demonstrate that it achieves a 27.3% higher gain than the best-performing baseline, which fixes monitoring actions over the timeline.
