Solving clustering as ill-posed problem: experiments with K-Means algorithm

Nov 15, 2022 · Alberto Arturo Vergani

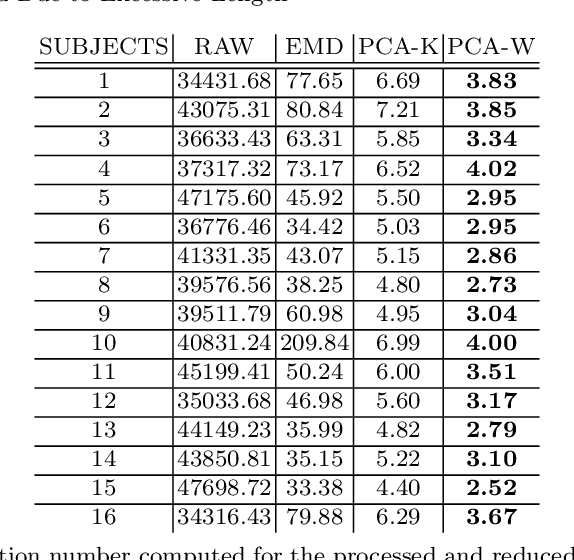

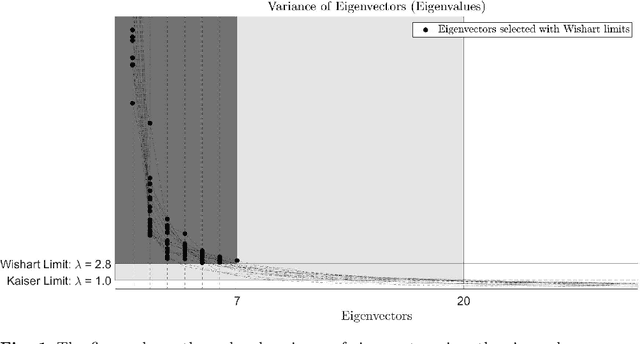

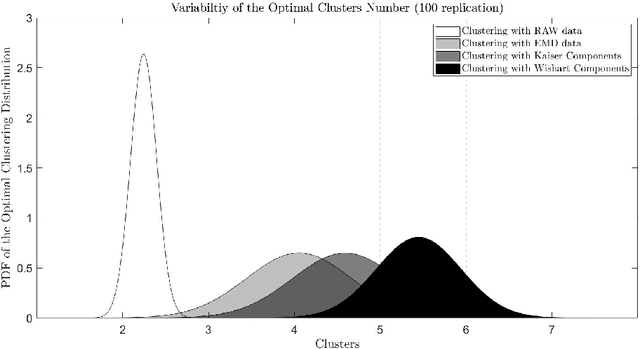

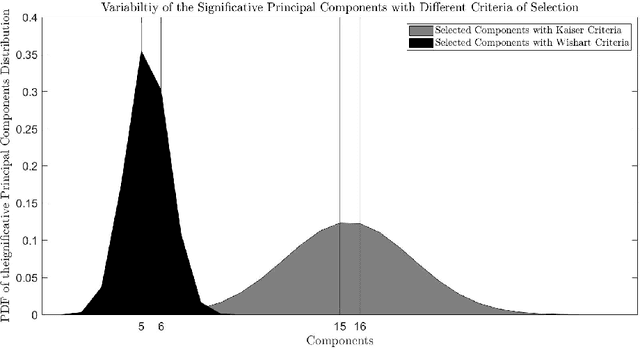

In this contribution, the clustering procedure based on the K-Means algorithm is studied as an inverse problem, which is a special case of ill-posed problems. Attempts to improve the quality of the clustering inverse problem lead to reducing the input data via Principal Component Analysis (PCA). Since a theorem by Ding and He links the cardinality of the optimal clusters found with K-Means to the cardinality of the selected informative PCA components, the computational experiments tested the theorem with two quantitative feature selection methods: the Kaiser criterion (based on imperative decision) versus the Wishart criterion (based on random matrix theory). The results suggested that PCA reduction with feature selection by the Wishart criterion leads to a low matrix condition number and satisfies the relation between clusters and components predicted by the theorem. The data used for the computations come from a neuroscientific repository and concern healthy young subjects who performed a task-oriented functional Magnetic Resonance Imaging (fMRI) paradigm.
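The pipeline described in this abstract can be illustrated with a minimal NumPy sketch. It is not the author's code and rests on several assumptions: the Kaiser criterion is taken as "keep correlation-matrix eigenvalues above 1", the Wishart criterion as "keep eigenvalues above the Marchenko-Pastur upper edge (1 + sqrt(p/n))^2", the Ding and He relation in its commonly cited form k = m + 1 (k clusters, m retained components), and the condition number is measured on the reduced data matrix. The synthetic data, the helper names and the simple K-Means with farthest-point initialisation are all hypothetical stand-ins for the fMRI data and the actual experiments.

```python
import numpy as np

def standardize(X):
    # Zero mean and unit variance per feature (column).
    return (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

def count_components(X, criterion):
    # Number of correlation-matrix eigenvalues above the chosen threshold.
    n, p = X.shape
    Z = standardize(X)
    eigvals = np.linalg.eigvalsh(Z.T @ Z / (n - 1))[::-1]
    if criterion == "kaiser":
        threshold = 1.0  # assumed form: eigenvalue greater than 1
    elif criterion == "wishart":
        # Assumed form: Marchenko-Pastur upper edge for unit-variance noise.
        threshold = (1.0 + np.sqrt(p / n)) ** 2
    else:
        raise ValueError(f"unknown criterion: {criterion}")
    return int(np.sum(eigvals > threshold))

def pca_scores(X, m):
    # Project the standardized data onto the first m principal axes.
    Z = standardize(X)
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    return Z @ Vt[:m].T

def kmeans(X, k, n_iter=50):
    # Plain Lloyd iterations with a deterministic farthest-point start.
    centroids = [X[0]]
    for _ in range(k - 1):
        d = np.min([np.sum((X - c) ** 2, axis=1) for c in centroids], axis=0)
        centroids.append(X[np.argmax(d)])
    centroids = np.array(centroids)
    for _ in range(n_iter):
        dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        new = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new, centroids):
            break
        centroids = new
    return labels

# Hypothetical data: 3 well-separated groups, 100 samples each, 12 features.
rng = np.random.default_rng(0)
a = 4 / np.sqrt(3)
patterns = np.array([[4.0, 0.0, -4.0], [a, -2 * a, a]])  # two orthogonal group profiles
centers = np.repeat(patterns.T, 6, axis=1)               # shape (3, 12)
X = np.repeat(centers, 100, axis=0) + rng.normal(size=(300, 12))

m_kaiser = count_components(X, "kaiser")
m_wishart = count_components(X, "wishart")
k = m_wishart + 1                       # assumed Ding and He relation
scores = pca_scores(X, m_wishart)
labels = kmeans(scores, k)

cond_full = np.linalg.cond(standardize(X))
cond_reduced = np.linalg.cond(scores)
print(m_kaiser, m_wishart, k, np.bincount(labels))
print(cond_full, cond_reduced)
```

On data this clean both criteria are expected to agree; the two rules differ mainly when noise eigenvalues sit just above 1, which the Kaiser rule keeps and the stricter random-matrix threshold discards. The reduced matrix can never be worse conditioned than the full standardized one, since dropping the smallest singular values only shrinks the ratio between the largest and smallest retained values.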

Critical Limits in a Bump Attractor Network of Spiking Neurons

Mar 30, 2020 · Alberto Arturo Vergani, Christian Robert Huyck

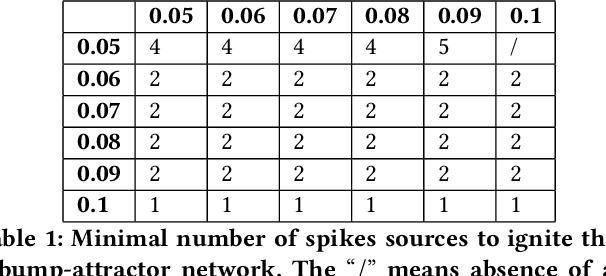

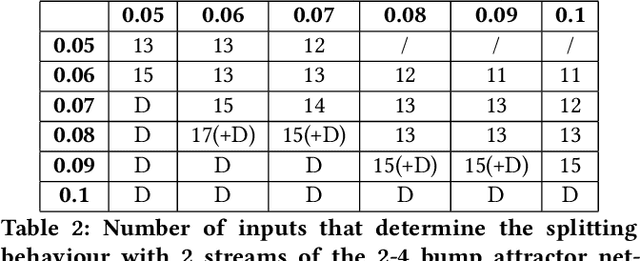

A bump attractor network is a model that implements a competitive neuronal process emerging from a spike pattern related to an input source. Since the bump network can behave in many ways, this paper explores some critical limits of the parameter space using various positive and negative weights and an increasing size of the input spike sources. The neuromorphic simulation of the bump attractor network shows that it exhibits a stationary, a splitting and a divergent spike pattern, depending on the set of weights and the input window. The balance between the values of the positive and negative weights is important in determining whether the spike train pattern splits or diverges and in defining the minimal firing conditions.
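To make the role of the positive and negative weights concrete, here is a minimal sketch of a one-dimensional network with excitation between near neighbours and inhibition between farther ones, driven by a window of input neurons. Everything in it is an assumption for illustration: the chain topology, the discrete-time leaky integrate-and-fire update, and every parameter name and value (`exc_radius`, `inh_radius`, `w_exc`, `w_inh`, `drive`, `leak`) are hypothetical and are not taken from the paper, which used a neuromorphic simulation.

```python
import numpy as np

def chain_weights(n, exc_radius, inh_radius, w_exc, w_inh):
    # Positive weights to near neighbours, negative weights to farther ones.
    idx = np.arange(n)
    dist = np.abs(idx[:, None] - idx[None, :])
    W = np.zeros((n, n))
    W[(dist >= 1) & (dist <= exc_radius)] = w_exc
    W[(dist > exc_radius) & (dist <= inh_radius)] = -w_inh
    return W

def simulate(W, input_neurons, steps=200, input_steps=20,
             drive=1.5, leak=0.9, threshold=1.0):
    # Discrete-time leaky integrate-and-fire units (an illustrative stand-in).
    n = W.shape[0]
    v = np.zeros(n)                      # membrane potentials
    prev = np.zeros(n)                   # spikes emitted at the previous step
    spikes = np.zeros((steps, n), dtype=bool)
    for t in range(steps):
        ext = np.zeros(n)
        if t < input_steps:
            ext[input_neurons] = drive   # external input window
        v = leak * v + W @ prev + ext
        fired = v >= threshold
        v[fired] = 0.0                   # reset after a spike
        spikes[t] = fired
        prev = fired.astype(float)
    return spikes

n = 60
W = chain_weights(n, exc_radius=2, inh_radius=5, w_exc=0.6, w_inh=0.4)
input_neurons = np.arange(28, 33)        # hypothetical input window of 5 neurons
spikes = simulate(W, input_neurons)
print(spikes.sum(axis=0))                # spike count per neuron
```

Sweeping `w_exc`, `w_inh` and the width of `input_neurons` and inspecting the resulting raster is one way to explore how the spike pattern changes with the weight balance; the sketch makes no claim about which regime any particular setting produces.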
