An Adaptive Weighted Deep Forest Classifier

Jan 04, 2019 | Lev V. Utkin, Andrei V. Konstantinov, Viacheslav S. Chukanov, Mikhail V. Kots, Anna A. Meldo

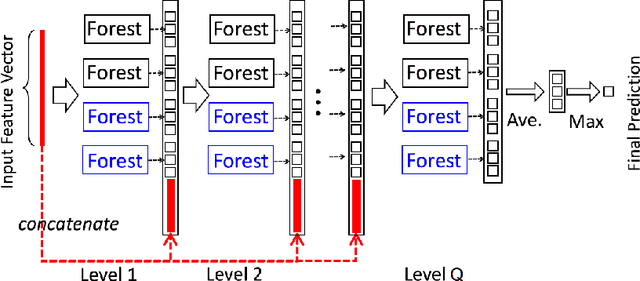

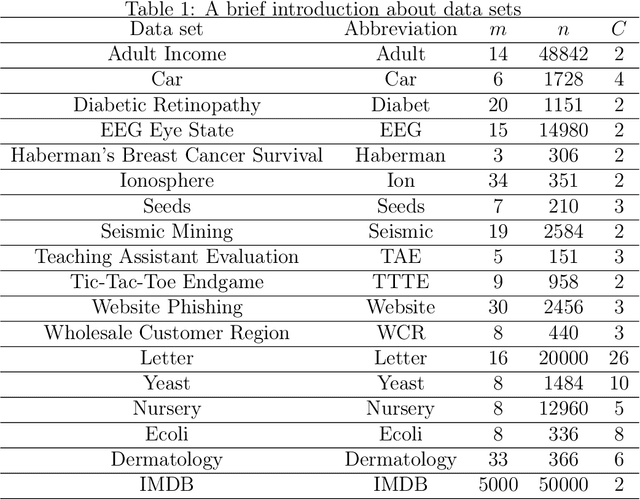

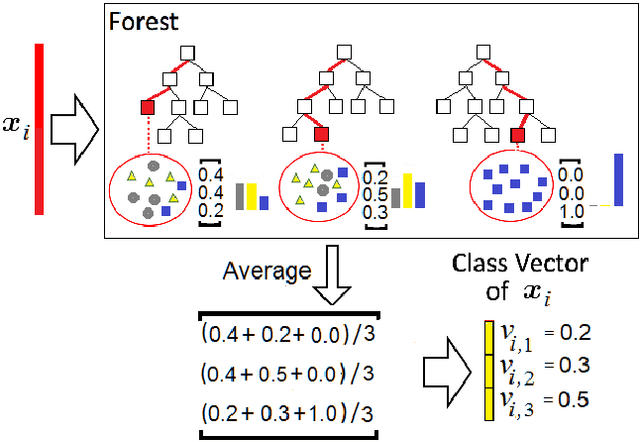

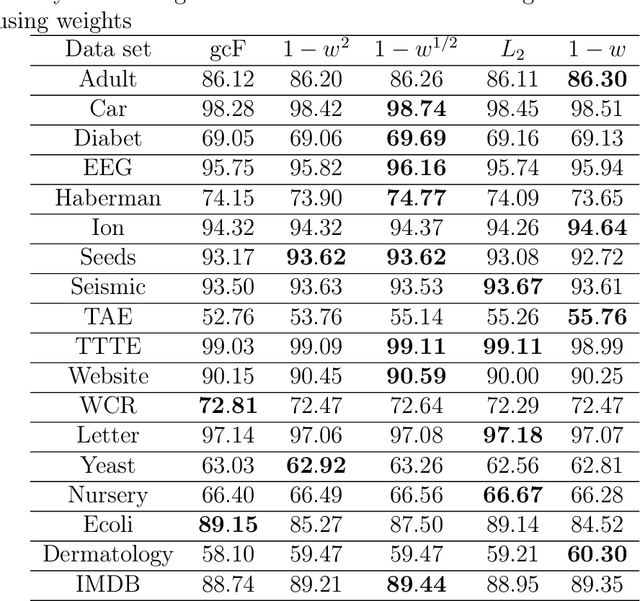

A modification of the confidence screening mechanism is proposed, based on adaptive weighting of every training instance at each cascade level of the Deep Forest. The idea underlying the modification is very simple and stems from the confidence screening mechanism proposed by Pang et al. to simplify the Deep Forest classifier by updating the training set at each level in accordance with the classification accuracy of every training instance. However, whereas the confidence screening mechanism simply removes instances from the training and testing processes, the proposed modification is more flexible: it assigns weights to instances by taking their classification accuracy into account. To some extent, the modification is similar to AdaBoost. Numerical experiments illustrate good performance of the proposed modification in comparison with the original Deep Forest proposed by Zhou and Feng.
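The abstract does not state the weight-update rule, so the sketch below is hypothetical. It contrasts confidence screening (instances whose maximum class probability at a level reaches a threshold leave the cascade) with an AdaBoost-like rule that weights each instance by one minus the probability its class vector assigns to the true class. The function names `screen_instances` and `adaptive_weights`, the threshold value, and the `1 - p[y]` rule are illustrative assumptions, not the authors' formulation.

```python
from typing import List, Sequence


def screen_instances(class_probs: Sequence[Sequence[float]], threshold: float) -> List[int]:
    """Confidence-screening style: keep only the indices of instances whose
    maximum class probability is below `threshold` (the rest leave the cascade)."""
    return [i for i, probs in enumerate(class_probs) if max(probs) < threshold]


def adaptive_weights(
    class_probs: Sequence[Sequence[float]],
    labels: Sequence[int],
    eps: float = 1e-12,
) -> List[float]:
    """Hypothetical AdaBoost-like rule (an assumption, not the paper's formula):
    w_i is proportional to 1 - p_i[y_i], so poorly classified instances get
    larger weights at the next level. Weights are normalized to sum to 1."""
    raw = [max(1.0 - probs[y], eps) for probs, y in zip(class_probs, labels)]
    total = sum(raw)
    return [r / total for r in raw]


if __name__ == "__main__":
    # Toy class-probability vectors produced by one cascade level.
    probs = [[0.9, 0.1], [0.6, 0.4], [0.2, 0.8]]
    labels = [0, 0, 0]
    print(screen_instances(probs, threshold=0.85))  # [1, 2]
    print([round(w, 3) for w in adaptive_weights(probs, labels)])  # [0.077, 0.308, 0.615]
```

Screening makes a hard keep-or-drop decision, while weighting keeps every instance and lets its influence vary; in the toy data the confidently misclassified third instance receives the largest weight.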

A weighted random survival forest

Jan 01, 2019 | Lev V. Utkin, Andrei V. Konstantinov, Viacheslav S. Chukanov, Mikhail V. Kots, Mikhail A. Ryabinin, Anna A. Meldo

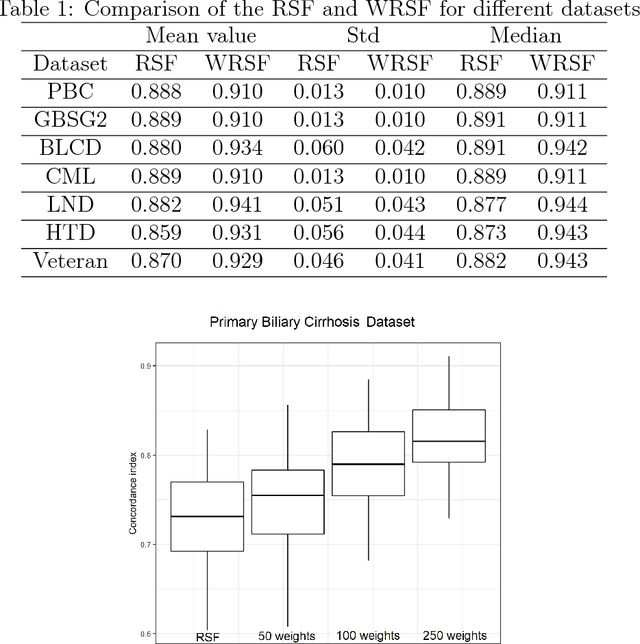

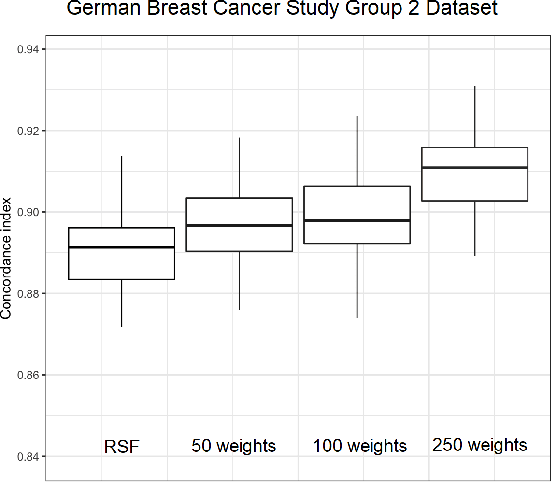

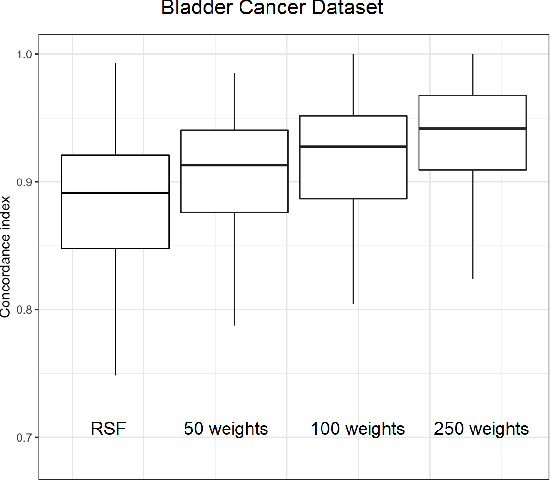

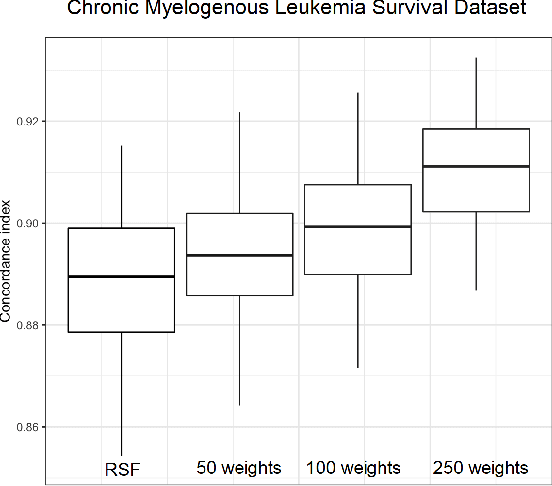

A weighted random survival forest is presented in the paper. It can be regarded as a modification of the random survival forest that improves its performance. The main idea underlying the proposed model is to replace the standard averaging procedure used to estimate the hazard function of the random survival forest with weighted averaging, where a weight is assigned to every tree. The weights can be viewed as training parameters, computed optimally by solving a standard quadratic optimization problem that maximizes Harrell's C-index. Numerical examples with real data illustrate that the proposed model outperforms the original random survival forest.
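A minimal sketch of the two ingredients named in the abstract: the weighted combination of per-tree estimates and Harrell's C-index used as the quality measure. It assumes each tree outputs a cumulative hazard curve on a common time grid and that the weights lie on the unit simplex (non-negative, summing to 1); both are assumptions, and the quadratic optimization that actually computes the weights is not reproduced here. The function names `weighted_chf` and `harrell_c_index` are hypothetical.

```python
from typing import List, Sequence


def weighted_chf(tree_chfs: Sequence[Sequence[float]], weights: Sequence[float]) -> List[float]:
    """Combine per-tree cumulative hazard curves (same time grid) with
    non-negative weights summing to 1 (assumed constraint). Uniform weights
    recover the ordinary averaging of a standard random survival forest."""
    if any(w < 0 for w in weights) or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must be non-negative and sum to 1")
    n_times = len(tree_chfs[0])
    return [sum(w * chf[j] for w, chf in zip(weights, tree_chfs)) for j in range(n_times)]


def harrell_c_index(times: Sequence[float], events: Sequence[int], risks: Sequence[float]) -> float:
    """Harrell's C-index: among comparable pairs (T_i < T_j and subject i had
    an event), the fraction where subject i has the higher predicted risk.
    Ties in predicted risk count as 1/2."""
    concordant = 0.0
    comparable = 0
    for i in range(len(times)):
        if not events[i]:
            continue  # a censored subject cannot anchor a comparable pair
        for j in range(len(times)):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


if __name__ == "__main__":
    chfs = [[0.1, 0.3, 0.6], [0.2, 0.4, 0.9]]  # two trees, three time points
    print(weighted_chf(chfs, [0.25, 0.75]))  # approximately [0.175, 0.375, 0.825]
    print(harrell_c_index([2, 5, 7, 9], [1, 1, 0, 1], [0.9, 0.6, 0.4, 0.1]))  # 1.0
```

In the weighted model, the weights would be chosen so that risk scores derived from the combined estimate rank subjects as concordantly as possible; plugging a C-index like this one into an optimizer is the idea, but the paper's exact quadratic formulation is not shown here.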
