Predicting the Intention to Interact with a Service Robot: the Role of Gaze Cues

Apr 02, 2024 · Simone Arreghini, Gabriele Abbate, Alessandro Giusti, Antonio Paolillo

For a service robot, it is crucial to perceive as early as possible that an approaching person intends to interact: in this case, it can proactively enact friendly behaviors that lead to an improved user experience. We solve this perception task with a sequence-to-sequence classifier of a potential user's intention to interact, which can be trained in a self-supervised way. Our main contribution is a study of the benefit of features representing the person's gaze in this context. Extensive experiments on a novel dataset show that including gaze cues significantly improves the classifier performance (AUROC increases from 84.5% to 91.2%), and the distance at which an accurate classification can be achieved improves from 2.4 m to 3.2 m. We also quantify the system's ability to adapt to new environments without external supervision. Qualitative experiments show practical applications with a waiter robot.
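The abstract does not say how gaze is encoded. As a minimal sketch, assuming a gaze direction from some off-the-shelf estimator and person and robot positions on a common ground plane, one hypothetical per-frame gaze feature is the angle between where the person looks and where the robot is. Stacked with the person-robot distance, it yields the kind of per-frame sequence a sequence-to-sequence classifier consumes. The names `gaze_to_robot_angle` and `build_feature_sequence` are illustrative, not the paper's.

```python
import math
from typing import List, Sequence, Tuple

Vec2 = Tuple[float, float]  # (x, y) on the ground plane, in metres


def gaze_to_robot_angle(person_xy: Vec2, gaze_dir: Vec2, robot_xy: Vec2) -> float:
    """Angle in [0, pi] between the gaze direction and the person-to-robot direction.

    Hypothetical feature: values near 0 mean the person is looking towards the robot.
    """
    to_robot = (robot_xy[0] - person_xy[0], robot_xy[1] - person_xy[1])
    norm_g = math.hypot(gaze_dir[0], gaze_dir[1])
    norm_r = math.hypot(to_robot[0], to_robot[1])
    if norm_g == 0.0 or norm_r == 0.0:
        raise ValueError("gaze direction and person-robot offset must be non-zero")
    cos_a = (gaze_dir[0] * to_robot[0] + gaze_dir[1] * to_robot[1]) / (norm_g * norm_r)
    # Clamp to [-1, 1] to guard against floating-point rounding before acos.
    return math.acos(max(-1.0, min(1.0, cos_a)))


def build_feature_sequence(
    person_track: Sequence[Vec2], gaze_track: Sequence[Vec2], robot_xy: Vec2
) -> List[Tuple[float, float]]:
    """One (distance to robot, gaze angle) pair per frame, ready for a sequence model."""
    features = []
    for person_xy, gaze_dir in zip(person_track, gaze_track):
        distance = math.hypot(robot_xy[0] - person_xy[0], robot_xy[1] - person_xy[1])
        features.append((distance, gaze_to_robot_angle(person_xy, gaze_dir, robot_xy)))
    return features


# A person walking towards a robot at the origin while turning their gaze towards it.
track = [(4.0, 0.0), (3.0, 0.0), (2.0, 0.0)]
gaze = [(0.0, 1.0), (-1.0, 0.5), (-1.0, 0.0)]
print(build_feature_sequence(track, gaze, (0.0, 0.0)))
```

Keeping distance and gaze angle as separate channels lets a classifier learn, for instance, that looking at the robot matters more when the person is still far away.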

Imitation Learning-based Visual Servoing for Tracking Moving Objects

Sep 14, 2023 · Rocco Felici, Matteo Saveriano, Loris Roveda, Antonio Paolillo

In everyday collaboration tasks between human operators and robots, the former need simple ways to program new skills, while the latter must show adaptive capabilities to cope with environmental changes. The joint use of visual servoing and imitation learning allows us to pursue the objective of realizing friendly robotic interfaces that (i) adapt to the environment thanks to visual perception and (ii) avoid explicit programming by emulating previous demonstrations. This work exploits imitation learning within the visual servoing paradigm to address the specific problem of tracking moving objects. In particular, we show that the compensation term required by the tracking controller can be inferred from data, avoiding the explicit implementation of estimators or observers. The effectiveness of the proposed method has been validated through simulations with a robotic manipulator.
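For context, the classic visual servoing tracking law, as given in standard visual servoing tutorials, commands the camera twist v = -λ L⁺ e - L⁺ ∂e/∂t. Here e = s - s* is the feature error, L is the interaction matrix, and ∂e/∂t is the error variation caused by the target's own motion. That last term is the compensation term the abstract proposes to learn. The sketch assumes a single normalized image point with known depth Z and replaces the learned model with a plain `compensation` callable. All function names are hypothetical.

```python
from typing import Callable, List, Sequence

Matrix = List[List[float]]


def point_interaction_matrix(x: float, y: float, Z: float) -> Matrix:
    """Standard 2x6 interaction matrix of a normalized image point (x, y) at depth Z.

    Columns correspond to the camera twist (vx, vy, vz, wx, wy, wz).
    """
    return [
        [-1.0 / Z, 0.0, x / Z, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / Z, y / Z, 1.0 + y * y, -x * y, -x],
    ]


def pinv_2x6(L: Matrix) -> Matrix:
    """Moore-Penrose pseudo-inverse L^T (L L^T)^-1 of a full-row-rank 2x6 matrix (6x2 result)."""
    g = [[sum(L[i][k] * L[j][k] for k in range(6)) for j in range(2)] for i in range(2)]
    det = g[0][0] * g[1][1] - g[0][1] * g[1][0]
    g_inv = [[g[1][1] / det, -g[0][1] / det], [-g[1][0] / det, g[0][0] / det]]
    return [[sum(L[i][k] * g_inv[i][j] for i in range(2)) for j in range(2)] for k in range(6)]


def tracking_velocity(
    s: Sequence[float],
    s_star: Sequence[float],
    Z: float,
    gain: float,
    compensation: Callable[[Sequence[float]], Sequence[float]],
) -> List[float]:
    """Camera twist v = -gain * L^+ e - L^+ de/dt for a single point feature.

    `compensation(s)` returns an estimate of de/dt, the error variation caused by the
    target's own motion; here it is a plain callable standing in for a learned model.
    """
    e = [s[0] - s_star[0], s[1] - s_star[1]]
    de_dt = compensation(s)
    L_pinv = pinv_2x6(point_interaction_matrix(s[0], s[1], Z))
    # Desired closed-loop error dynamics are -gain * e, so L v = -gain * e - de_dt.
    rhs = [-gain * e[0] - de_dt[0], -gain * e[1] - de_dt[1]]
    return [row[0] * rhs[0] + row[1] * rhs[1] for row in L_pinv]


# Hypothetical stand-in for the learned term: the target's projection drifts along +x.
v = tracking_velocity([0.1, -0.05], [0.0, 0.0], Z=1.0, gain=0.5, compensation=lambda s: [0.05, 0.0])
print(v)
```

Without the compensation term, the controller only reacts to the error it observes, so it lags behind a moving target. Feeding in an estimate of ∂e/∂t removes that steady lag.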

Self-Supervised Prediction of the Intention to Interact with a Service Robot

Sep 14, 2023 · Gabriele Abbate, Alessandro Giusti, Viktor Schmuck, Oya Celiktutan, Antonio Paolillo

A service robot can provide a smoother interaction experience if it can proactively detect whether a nearby user intends to interact, so that it can adapt its behavior, e.g., by explicitly showing that it is available to provide a service. In this work, we propose a learning-based approach to predict the probability that a human user will interact with a robot before the interaction actually begins. The approach is self-supervised because, after each encounter with a human, the robot can automatically label it depending on whether or not it resulted in an interaction. We explore different classification approaches, using different sets of features that consider the pose and the motion of the user. We validate and deploy the approach in three scenarios. The first collects 3442 natural sequences (both interacting and non-interacting) representing employees in an office break area: a real-world, challenging setting, where we consider a coffee machine in place of a service robot. The other two scenarios represent researchers interacting with service robots (200 and 72 sequences, respectively). Results show that, even in challenging real-world settings, our approach can learn without external supervision and can accurately classify (i.e., AUROC greater than 0.9) the user's intention to interact more than 3 s before the interaction actually occurs.
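The self-supervised labelling described above, and the AUROC metric used to report results, can be sketched in a few lines of plain Python. This assumes each encounter is stored as a list of frames and that every frame inherits the encounter's final outcome. The per-frame scores are made-up placeholders. The quadratic pairwise loop is chosen for readability; a library routine such as scikit-learn's `roc_auc_score` computes the same quantity more efficiently.

```python
from typing import List, Sequence, Tuple


def label_encounter(frames: Sequence[object], interacted: bool) -> List[Tuple[object, int]]:
    """Self-supervised labelling: every frame inherits the outcome observed at the end of the encounter."""
    label = 1 if interacted else 0
    return [(frame, label) for frame in frames]


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Area under the ROC curve, computed as the probability that a randomly chosen
    positive scores higher than a randomly chosen negative (ties count one half)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative sample")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Two encounters: the first ended in an interaction, the second did not.
dataset = label_encounter(["f0", "f1"], interacted=True) + label_encounter(["g0", "g1"], interacted=False)
labels = [y for _, y in dataset]
scores = [0.7, 0.9, 0.2, 0.8]  # hypothetical classifier outputs, one per frame
print(auroc(scores, labels))  # prints 0.75
```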

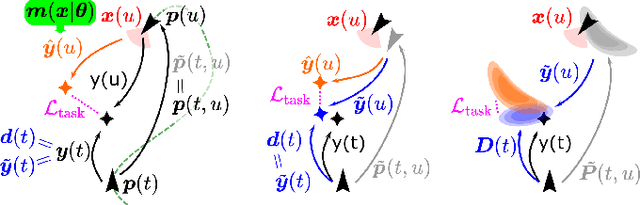

Visual Servoing with Geometrically Interpretable Neural Perception

Oct 19, 2022 · Antonio Paolillo, Mirko Nava, Dario Piga, Alessandro Giusti

An increasing number of non-specialist robot users demand easy-to-use machines. In the context of visual servoing, removing explicit image processing is becoming a trend, as it makes the technique easy to apply. This work presents a deep learning approach for solving the perception problem within the visual servoing scheme. An artificial neural network is trained using supervision derived from knowledge of the controller and of the visual feature motion model. In this way, the estimated visual features can be given a geometric interpretation and used in the analytical visual servoing law. The approach keeps perception and control decoupled, making the whole framework flexible and interpretable. Simulated and real experiments with a robotic manipulator validate our approach.
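The abstract does not give the training loss. One hypothetical way to turn knowledge of the controller and of the feature motion model into supervision is a consistency term. The controller knows the camera twist v it commanded, so the features estimated at step k, propagated through the motion model ṡ = L(s, Z) v, should match the features estimated at step k+1. A minimal sketch follows for one normalized image point with known depth Z, which is an assumption. It uses plain floats where a real pipeline would use network outputs and automatic differentiation.

```python
from typing import List, Sequence

Matrix = List[List[float]]


def point_interaction_matrix(x: float, y: float, Z: float) -> Matrix:
    """Standard 2x6 interaction matrix of a normalized image point (x, y) at depth Z."""
    return [
        [-1.0 / Z, 0.0, x / Z, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / Z, y / Z, 1.0 + y * y, -x * y, -x],
    ]


def predict_next_feature(s: Sequence[float], v: Sequence[float], Z: float, dt: float) -> List[float]:
    """First-order (Euler) step of the feature motion model s_dot = L(s, Z) v."""
    L = point_interaction_matrix(s[0], s[1], Z)
    return [s[i] + dt * sum(L[i][k] * v[k] for k in range(6)) for i in range(2)]


def motion_model_loss(s_k: Sequence[float], s_next: Sequence[float], v: Sequence[float], Z: float, dt: float) -> float:
    """Squared distance between the feature estimated at step k+1 and the motion-model
    prediction obtained from the estimate at step k and the known camera twist v."""
    target = predict_next_feature(s_k, v, Z, dt)
    return sum((a - b) ** 2 for a, b in zip(s_next, target))


# Camera moving forward along its optical axis at 1 unit/s: the point moves away from the image centre.
print(predict_next_feature([0.1, 0.2], [0.0, 0.0, 1.0, 0.0, 0.0, 0.0], Z=2.0, dt=0.1))
```

Because the loss only involves quantities the visual servoing law itself uses, the network's outputs stay geometrically meaningful feature coordinates rather than arbitrary embeddings.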

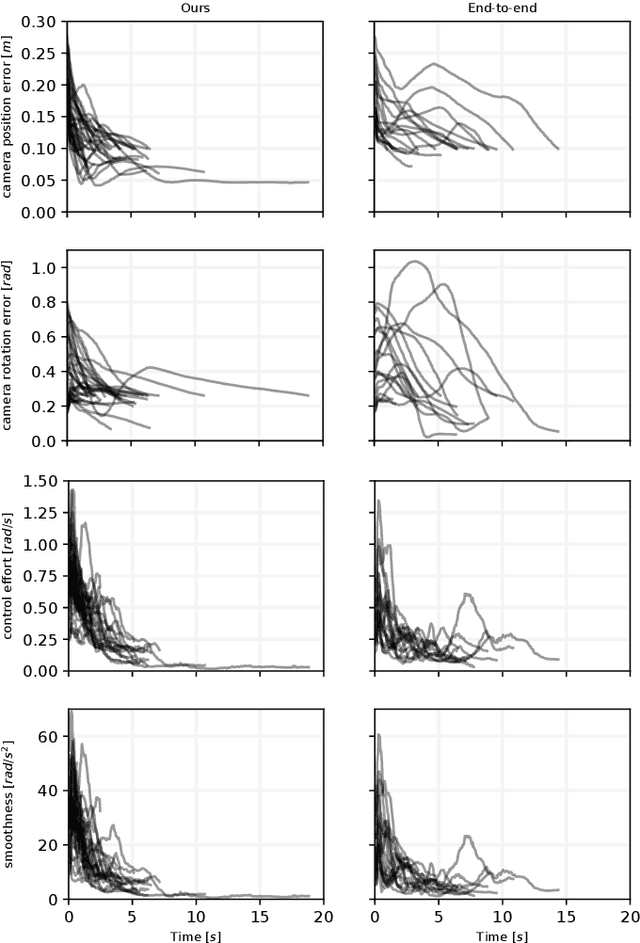

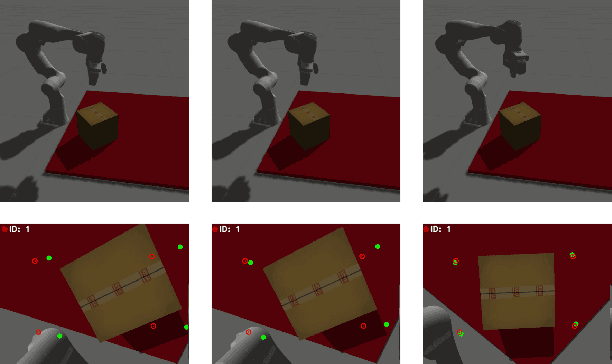

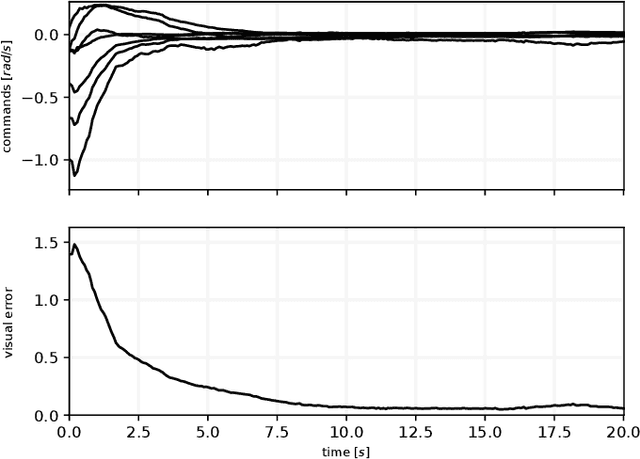

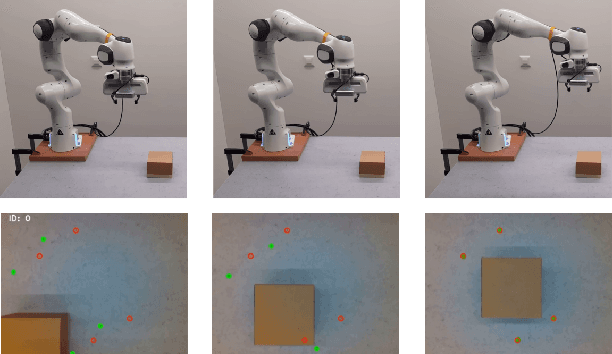

Learning Stable Dynamical Systems for Visual Servoing

Apr 12, 2022 · Antonio Paolillo, Matteo Saveriano

This work presents the dual benefit of integrating imitation learning techniques, based on the dynamical systems formalism, with the visual servoing paradigm. On the one hand, dynamical systems make it possible to program additional skills without explicitly coding them in the visual servoing law, by leveraging a few demonstrations of the full desired behavior. On the other hand, visual servoing brings exteroception into the dynamical system architecture, enabling adaptation to unexpected changes in the environment. The benefit of combining the two concepts is demonstrated by applying three existing dynamical systems methods to the visual servoing case. Simulations validate and compare the methods; experiments with a robot manipulator show the validity of the approach in a real-world scenario.
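A classic visual servoing law already makes the feature error follow a linear dynamical system, ė = -λ e. Learning from demonstrations can therefore be seen as replacing that fixed system with one fitted to data. The sketch below is deliberately much simpler than the three existing methods the paper applies, which the abstract does not name. It fits a single linear system ẋ = A (x - x*) by least squares on a hypothetical 2-D state. It then checks a sufficient stability condition: A + Aᵀ negative definite, which makes V = ‖x - x*‖² a Lyapunov function. Function names and data are illustrative.

```python
from typing import List, Sequence, Tuple

Vec2 = Tuple[float, float]
Mat2 = List[List[float]]


def fit_linear_ds(xs: Sequence[Vec2], xdots: Sequence[Vec2], target: Vec2) -> Mat2:
    """Least-squares A for x_dot = A (x - target): A = (sum x_dot xi^T)(sum xi xi^T)^-1, xi = x - target."""
    sxx = [[0.0, 0.0], [0.0, 0.0]]
    syx = [[0.0, 0.0], [0.0, 0.0]]
    for x, xd in zip(xs, xdots):
        xi = (x[0] - target[0], x[1] - target[1])
        for i in range(2):
            for j in range(2):
                sxx[i][j] += xi[i] * xi[j]
                syx[i][j] += xd[i] * xi[j]
    det = sxx[0][0] * sxx[1][1] - sxx[0][1] * sxx[1][0]
    inv = [[sxx[1][1] / det, -sxx[0][1] / det], [-sxx[1][0] / det, sxx[0][0] / det]]
    return [[sum(syx[i][k] * inv[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def passes_quadratic_lyapunov_check(A: Mat2) -> bool:
    """Sufficient condition: A + A^T negative definite, so V = |x - target|^2 strictly decreases."""
    a, b, d = 2.0 * A[0][0], A[0][1] + A[1][0], 2.0 * A[1][1]
    # A symmetric 2x2 matrix [[a, b], [b, d]] is negative definite iff a < 0 and a*d - b*b > 0.
    return a < 0.0 and a * d - b * b > 0.0


def rollout(A: Mat2, x0: Vec2, target: Vec2, dt: float, steps: int) -> Vec2:
    """Integrate x_dot = A (x - target) with explicit Euler steps."""
    x = (x0[0], x0[1])
    for _ in range(steps):
        xi = (x[0] - target[0], x[1] - target[1])
        x = (x[0] + dt * (A[0][0] * xi[0] + A[0][1] * xi[1]),
             x[1] + dt * (A[1][0] * xi[0] + A[1][1] * xi[1]))
    return x


# Synthetic "demonstrations" sampled from a known stable system (for illustration only).
A_true = [[-1.0, 0.5], [-0.5, -2.0]]
target = (1.0, 2.0)
xs = [(0.0, 0.0), (3.0, 1.0), (2.0, 5.0), (-1.0, 4.0)]
xdots = [tuple(A_true[i][0] * (x[0] - target[0]) + A_true[i][1] * (x[1] - target[1]) for i in range(2)) for x in xs]
A = fit_linear_ds(xs, xdots, target)
print(A, passes_quadratic_lyapunov_check(A), rollout(A, (5.0, -3.0), target, dt=0.01, steps=2000))
```

An unconstrained least-squares fit can return an unstable A when demonstrations are noisy, which is why stability-aware dynamical systems methods constrain the fit rather than merely checking it afterwards.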

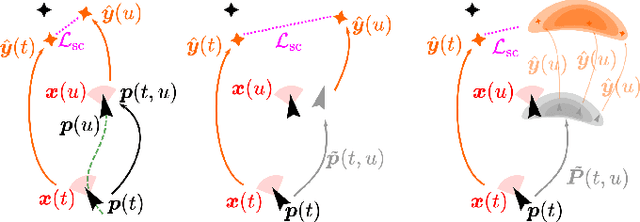

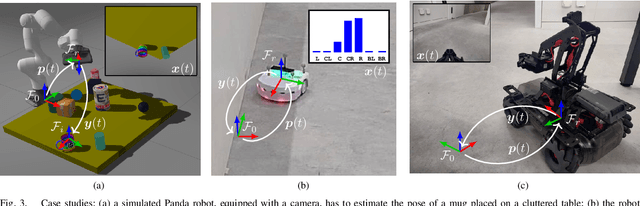

Uncertainty-Aware Self-Supervised Learning of Spatial Perception Tasks

Mar 22, 2021 · Mirko Nava, Antonio Paolillo, Jérôme Guzzi, Luca Maria Gambardella, Alessandro Giusti

We propose a general self-supervised approach to learn neural models that solve spatial perception tasks, such as estimating the pose of an object relative to the robot, from onboard sensor readings. The model is learned from training episodes by relying on two sources: a continuous state estimate, possibly inaccurate and affected by odometry drift, and a detector that sporadically provides supervision about the target pose. We demonstrate the general approach in three concrete scenarios: a simulated robot arm that visually estimates the pose of an object of interest; a small differential-drive robot using 7 infrared sensors to localize a nearby wall; and an omnidirectional mobile robot that localizes itself in an environment from camera images. Quantitative results show that the approach works well in all three scenarios and that explicitly accounting for uncertainty yields statistically significant performance improvements.
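A minimal sketch of how sporadic detections and a drifting state estimate can produce uncertainty-weighted labels follows. It rests on simplifying assumptions that the abstract does not state: a translation-only planar robot, a static target, and label variance growing linearly with the time elapsed since the detection. The helper names and the linear drift model are hypothetical. A Gaussian negative log-likelihood then down-weights errors on the least reliable labels.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Vec2 = Tuple[float, float]


def propagate_labels(
    odometry: Sequence[Vec2],
    detections: Dict[int, Vec2],
    sigma_det: float,
    drift_rate: float,
) -> List[Optional[Tuple[Vec2, float]]]:
    """Turn sporadic detections into a (label, variance) pair for every time step.

    Hypothetical model: translation-only robot and static target, so the target position
    relative to the robot at step t is d_k + r_k - r_t for a detection d_k taken at step k.
    The variance grows linearly with |t - k| to mimic accumulated odometry drift, and the
    detection giving the smallest variance is used. Steps stay None if there are no detections.
    """
    labels: List[Optional[Tuple[Vec2, float]]] = []
    for t, r_t in enumerate(odometry):
        best: Optional[Tuple[Vec2, float]] = None
        for k, d_k in detections.items():
            variance = sigma_det ** 2 + drift_rate * abs(t - k)
            if best is None or variance < best[1]:
                r_k = odometry[k]
                best = ((d_k[0] + r_k[0] - r_t[0], d_k[1] + r_k[1] - r_t[1]), variance)
        labels.append(best)
    return labels


def gaussian_nll(pred: Vec2, label: Vec2, variance: float) -> float:
    """Negative log-likelihood of a 2-D prediction under an isotropic Gaussian centred on the label;
    the squared error is divided by the variance, so uncertain labels are down-weighted."""
    sq_err = (pred[0] - label[0]) ** 2 + (pred[1] - label[1]) ** 2
    return math.log(2.0 * math.pi * variance) + 0.5 * sq_err / variance


odometry = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
detections = {0: (5.0, 1.0), 3: (2.1, 1.0)}  # hypothetical detector outputs at steps 0 and 3
for t, item in enumerate(propagate_labels(odometry, detections, sigma_det=0.1, drift_rate=0.05)):
    print(t, item)
```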

A memory of motion for visual predictive control tasks

Feb 28, 2020 · Antonio Paolillo, Teguh Santoso Lembono, Sylvain Calinon

This paper addresses the problem of efficiently achieving visual predictive control tasks. To this end, a memory of motion, containing a set of trajectories built off-line, is used to leverage precomputation and deal with difficult visual tasks. Standard regression techniques, such as k-nearest neighbors and Gaussian process regression, are used to query the memory and provide, online, a warm start and a waypoint to the control optimization process. The proposed technique allows the control scheme to achieve high performance while keeping the computational time limited. Simulation and experimental results, carried out with a 7-axis manipulator, show the effectiveness of the approach.
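A minimal sketch of querying a memory of motion with k-nearest neighbors follows. It assumes each stored entry pairs a numeric task descriptor, for instance a desired visual feature configuration, with a fixed-horizon trajectory, and that all trajectories share the same length. The warm start is the step-by-step average of the k closest trajectories. Taking its midpoint as the waypoint is a hypothetical choice, since the abstract does not say how the waypoint is selected.

```python
import math
from typing import List, Sequence, Tuple

Trajectory = List[List[float]]  # one list of joint values (or controls) per time step
Memory = Sequence[Tuple[Sequence[float], Trajectory]]  # (task descriptor, trajectory) pairs built off-line


def knn_warm_start(memory: Memory, query: Sequence[float], k: int) -> Trajectory:
    """Average, time step by time step, the trajectories of the k stored tasks closest to `query`."""
    if not 1 <= k <= len(memory):
        raise ValueError("k must be between 1 and the number of stored trajectories")
    ranked = sorted(memory, key=lambda item: math.dist(item[0], query))
    neighbours = [trajectory for _, trajectory in ranked[:k]]
    horizon, dim = len(neighbours[0]), len(neighbours[0][0])
    return [[sum(tr[t][d] for tr in neighbours) / k for d in range(dim)] for t in range(horizon)]


def waypoint(trajectory: Trajectory, fraction: float = 0.5) -> List[float]:
    """Hypothetical waypoint choice: the state at a given fraction of the warm-start horizon."""
    return trajectory[int(fraction * (len(trajectory) - 1))]


memory = [
    ((0.0,), [[0.0], [1.0], [2.0]]),
    ((1.0,), [[0.0], [2.0], [4.0]]),
    ((10.0,), [[9.0], [9.0], [9.0]]),
]
guess = knn_warm_start(memory, query=(0.4,), k=2)
print(guess, waypoint(guess))  # prints [[0.0], [1.5], [3.0]] [1.5]
```

All the expensive work (building the memory) happens off-line; the online query is a sort over stored tasks, which keeps computation time bounded as the abstract requires.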

Memory of Motion for Warm-starting Trajectory Optimization

Jul 02, 2019 · Teguh Santoso Lembono, Antonio Paolillo, Emmanuel Pignat, Sylvain Calinon

Trajectory optimization for motion planning requires a good initial guess to perform well. In our proposed approach, we build a memory of motion based on a database of robot paths to provide a good initial guess online. The memory of motion relies on function approximators and dimensionality reduction techniques to learn the mapping between the task and the robot paths. Three function approximators are compared: k-Nearest Neighbor, Gaussian Process Regression, and Bayesian Gaussian Mixture Regression. In addition, we show that the use of the memory of motion can be improved with an ensemble method, and that the memory can also be used as a metric to choose between several possible goals. We demonstrate the proposed approach on motion planning for a dual-arm PR2 robot.
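Of the three function approximators compared, Gaussian process regression is the easiest to sketch from scratch. The version below assumes each stored path is flattened into a fixed-length vector and uses a zero-mean GP with an RBF kernel shared by all outputs. It omits the dimensionality reduction and ensemble steps mentioned in the abstract. The posterior variance it returns is one hypothetical confidence signal for ranking candidate goals; the abstract does not define the paper's metric.

```python
import math
from typing import List, Sequence, Tuple


def rbf(a: Sequence[float], b: Sequence[float], length_scale: float) -> float:
    """Squared-exponential kernel with unit signal variance."""
    return math.exp(-0.5 * math.dist(a, b) ** 2 / length_scale ** 2)


def cholesky(K: List[List[float]]) -> List[List[float]]:
    """Lower-triangular Lc with Lc Lc^T = K for a symmetric positive-definite K."""
    n = len(K)
    Lc = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = K[i][j] - sum(Lc[i][m] * Lc[j][m] for m in range(j))
            Lc[i][j] = math.sqrt(s) if i == j else s / Lc[j][j]
    return Lc


def cho_solve(Lc: List[List[float]], b: Sequence[float]) -> List[float]:
    """Solve (Lc Lc^T) x = b by forward then backward substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(Lc[i][m] * y[m] for m in range(i))) / Lc[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(Lc[m][i] * x[m] for m in range(i + 1, n))) / Lc[i][i]
    return x


def gp_predict(
    tasks: Sequence[Sequence[float]],
    paths: Sequence[Sequence[float]],
    query: Sequence[float],
    length_scale: float = 1.0,
    noise: float = 1e-6,
) -> Tuple[List[float], float]:
    """Zero-mean GP regression from task to flattened path, one output per path entry.

    All outputs share the kernel, so a single Cholesky factor serves every output and
    the returned posterior variance is the same for every entry.
    """
    n = len(tasks)
    K = [[rbf(tasks[i], tasks[j], length_scale) + (noise if i == j else 0.0) for j in range(n)] for i in range(n)]
    Lc = cholesky(K)
    k_star = [rbf(query, t, length_scale) for t in tasks]
    mean = []
    for d in range(len(paths[0])):
        alpha = cho_solve(Lc, [path[d] for path in paths])
        mean.append(sum(ks * a for ks, a in zip(k_star, alpha)))
    v = cho_solve(Lc, k_star)
    variance = rbf(query, query, length_scale) - sum(ks * vi for ks, vi in zip(k_star, v))
    return mean, variance


tasks = [(0.0,), (1.0,), (2.0,)]
paths = [[0.0, 1.0], [1.0, 3.0], [2.0, 5.0]]  # e.g. two via-point coordinates per stored path
initial_guess, var = gp_predict(tasks, paths, query=(1.5,))
print(initial_guess, var)
```

Far from all stored tasks, the mean falls back to the zero prior and the variance approaches 1, signalling that the memory offers little guidance there.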
