Deep Imitation Learning for Humanoid Loco-manipulation through Human Teleoperation

Sep 05, 2023 · Mingyo Seo, Steve Han, Kyutae Sim, Seung Hyeon Bang, Carlos Gonzalez, Luis Sentis, Yuke Zhu

We tackle the problem of developing humanoid loco-manipulation skills with deep imitation learning. The difficulty of collecting task demonstrations and training policies for humanoids with a high degree of freedom presents substantial challenges. We introduce TRILL, a data-efficient framework for training humanoid loco-manipulation policies from human demonstrations. In this framework, we collect human demonstration data through an intuitive Virtual Reality (VR) interface. We employ the whole-body control formulation to transform task-space commands by human operators into the robot's joint-torque actuation while stabilizing its dynamics. By employing high-level action abstractions tailored for humanoid loco-manipulation, our method can efficiently learn complex sensorimotor skills. We demonstrate the effectiveness of TRILL in simulation and on a real-world robot for performing various loco-manipulation tasks. Videos and additional materials can be found on the project page: https://ut-austin-rpl.github.io/TRILL.

Control and Evaluation of a Humanoid Robot with Rolling Contact Knees

Oct 03, 2022 · Seung Hyeon Bang, Carlos Gonzalez, Junhyeok Ahn, Nicholas Paine, Luis Sentis

In this paper, we introduce the humanoid robot DRACO 3 by providing a high-level description of its design and control. This robot features proximal actuation and mechanical artifacts to provide a high range of hip, knee and ankle motion. Its versatile design brings interesting problems as it requires a more elaborate control system to perform its motions. For this reason, we introduce a whole body controller (WBC) with support for rolling contact joints and show how it can be easily integrated into our previously presented open-source Planning and Control (PnC) framework. We then validate our controller experimentally on DRACO 3 by showing preliminary results carrying out two postural tasks. Lastly, we analyze the impact of the proximal actuation design and show where it stands in comparison to other adult-size humanoids.

Dictionary Learning with Accumulator Neurons

May 30, 2022 · Gavin Parpart, Carlos Gonzalez, Terrence C. Stewart, Edward Kim, Jocelyn Rego, Andrew O'Brien, Steven Nesbit, Garrett T. Kenyon, Yijing Watkins

The Locally Competitive Algorithm (LCA) uses local competition between non-spiking leaky integrator neurons to infer sparse representations, allowing for potentially real-time execution on massively parallel neuromorphic architectures such as Intel's Loihi processor. Here, we focus on the problem of inferring sparse representations from streaming video using dictionaries of spatiotemporal features optimized in an unsupervised manner for sparse reconstruction. Non-spiking LCA has previously been used to achieve unsupervised learning of spatiotemporal dictionaries composed of convolutional kernels from raw, unlabeled video. We demonstrate how unsupervised dictionary learning with spiking LCA (S-LCA) can be efficiently implemented using accumulator neurons, which combine a conventional leaky-integrate-and-fire (LIF) spike generator with an additional state variable that is used to minimize the difference between the integrated input and the spiking output. We demonstrate dictionary learning across a wide range of dynamical regimes, from graded to intermittent spiking, for inferring sparse representations of both static images drawn from the CIFAR database and video frames captured from a DVS camera. On a classification task that requires identifying the suit of cards from a deck being rapidly flipped through as viewed by a DVS camera, we find essentially no degradation in performance as the LCA model used to infer sparse spatiotemporal representations migrates from graded to spiking. We conclude that accumulator neurons are likely to provide a powerful enabling component of future neuromorphic hardware for implementing online unsupervised learning of spatiotemporal dictionaries optimized for sparse reconstruction of streaming video from event-based DVS cameras.

Data-Driven Safety Verification for Legged Robots

Feb 24, 2022 · Junhyeok Ahn, Seung Hyeon Bang, Carlos Gonzalez, Yuanchen Yuan, Luis Sentis

Planning safe motions for legged robots requires sophisticated safety verification tools. However, designing such tools is challenging due to the nonlinear and high-dimensional nature of these systems' dynamics. In this letter, we present a probabilistic verification framework for legged systems, which evaluates the safety of planned trajectories by learning an assessment function from trajectories collected from a closed-loop system. Our approach does not require an analytic expression of the closed-loop dynamics, thus enabling safety verification of systems with complex models and controllers. Our framework consists of an offline stage that initializes a safety assessment function by simulating a nominal model and an online stage that adapts the function to address the sim-to-real gap. The performance of the proposed approach for safety verification is demonstrated using a quadruped balancing task and a humanoid reaching task. The results demonstrate that our framework accurately predicts the systems' safety both at the planning phase, to generate robust trajectories, and at the execution phase, to detect unexpected external disturbances.

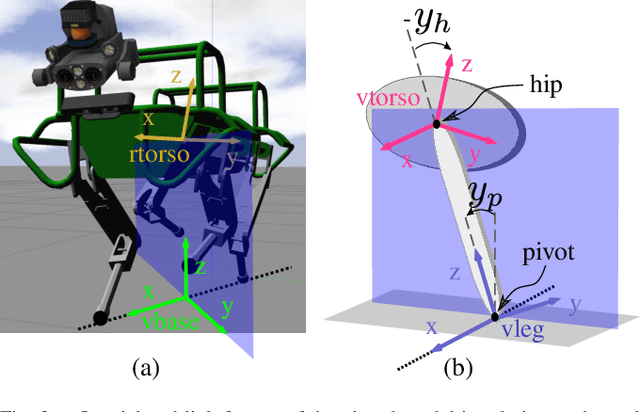

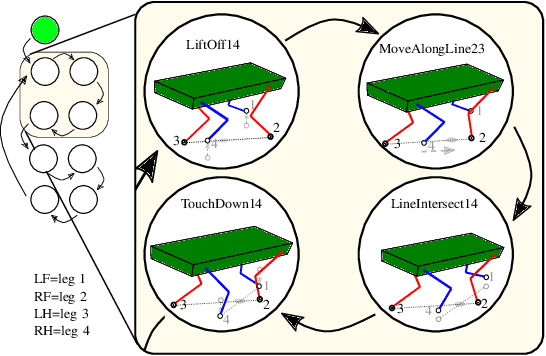

Line Walking and Balancing for Legged Robots with Point Feet

Jul 02, 2020 · Carlos Gonzalez, Victor Barasuol, Marco Frigerio, Roy Featherstone, Darwin G. Caldwell, Claudio Semini

The ability of legged systems to traverse highly constrained environments depends by and large on the performance of their motion and balance controllers. This paper presents a controller that excels in a scenario that most state-of-the-art balance controllers have not yet addressed: line walking, or walking on nearly null support regions. Our approach uses a low-dimensional virtual model (2-DoF) to generate balancing actions through a previously derived four-term balance controller and transforms them to the robot through a derived kinematic mapping. The capabilities of this controller are tested in simulation, where we show the 90 kg quadruped robot HyQ crossing a bridge only 6 cm wide (compared to its 4 cm diameter foot sphere), balancing on two feet at any time while moving along a line. Additional simulations are carried out to test the performance of the controller and the effect of external disturbances. The same controller is then used on the real robot to present, for the first time, a legged robot balancing on a contact line of nearly null support area.
