ODSmoothGrad: Generating Saliency Maps for Object Detectors

Apr 15, 2023 · Chul Gwon, Steven C. Howell

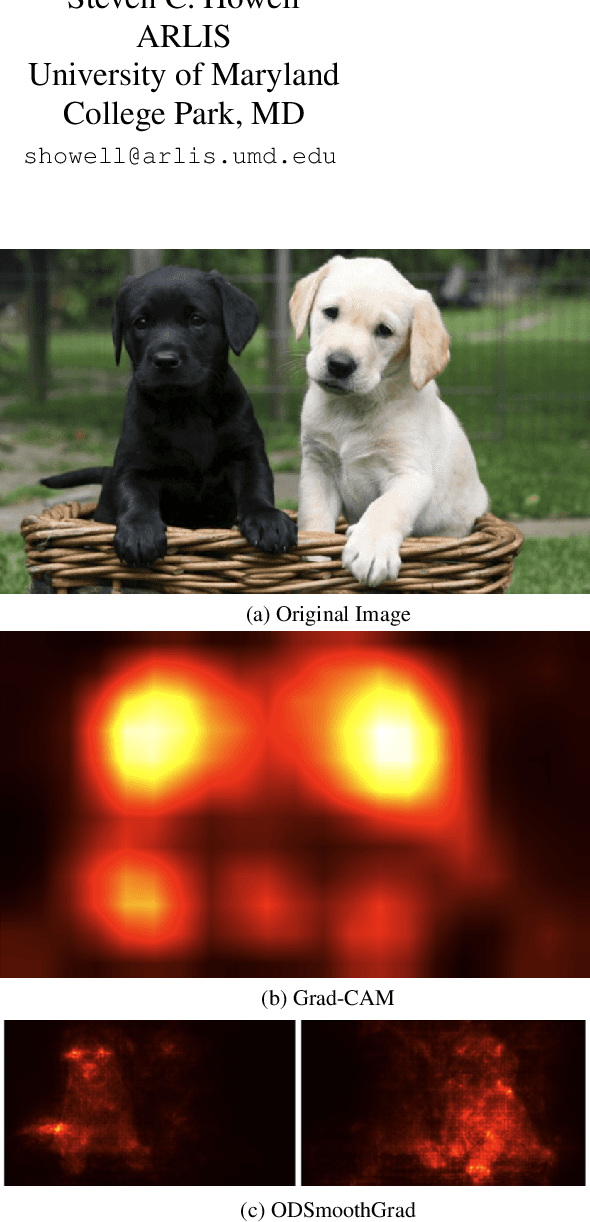

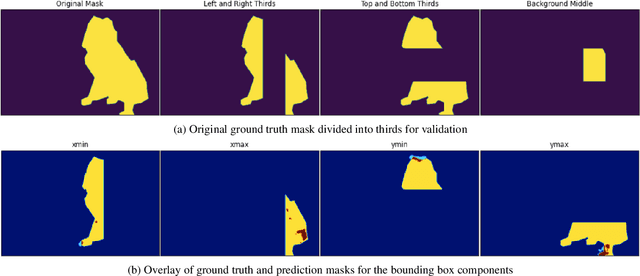

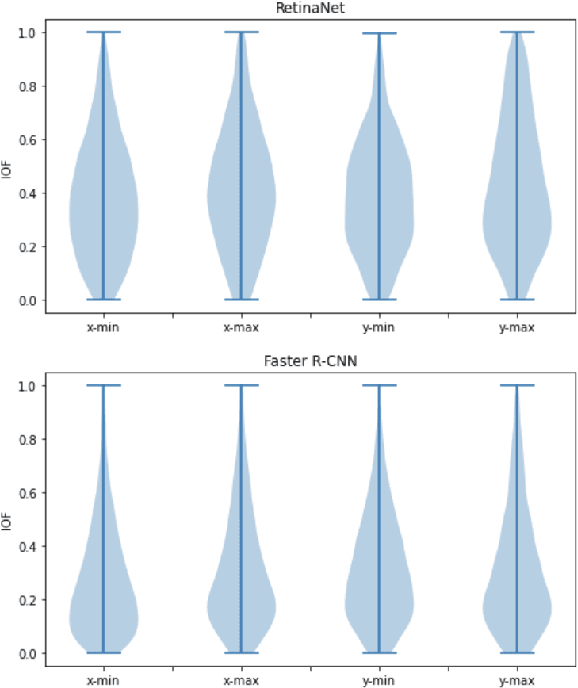

Saliency-map techniques remain widely used for explaining deep learning models, but most efforts target the image classification task. The same techniques can be applied to object detectors, both to the classification scores and to the bounding box parameters: because these parameters are regressed values, the pixels that contribute to each of them can be identified as well. In this paper, we present ODSmoothGrad, a tool for generating saliency maps for the classification scores and the bounding box parameters of object detectors. Because saliency maps are noisy, we also apply the SmoothGrad algorithm to visually enhance the pixels of interest. We demonstrate these capabilities on one-stage and two-stage object detectors, with comparisons against classifier-based techniques.
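As a rough illustration of the SmoothGrad step mentioned in the abstract, the sketch below averages gradients over several noisy copies of an input. It is not the ODSmoothGrad implementation: the names `smoothgrad`, `toy_grad`, and `weights` are hypothetical, and the "detector output" is a toy quadratic function with a hand-written gradient standing in for a class score or a single bounding box parameter.

```python
import numpy as np

def smoothgrad(grad_fn, image, n_samples=25, noise_level=0.15, seed=0):
    """Average grad_fn over noisy copies of image (SmoothGrad-style)."""
    rng = np.random.default_rng(seed)
    # Noise scale is a fraction of the input's value range.
    sigma = noise_level * (image.max() - image.min())
    total = np.zeros_like(image, dtype=float)
    for _ in range(n_samples):
        noisy = image + rng.normal(0.0, sigma, size=image.shape)
        total += grad_fn(noisy)
    return total / n_samples

# Assumption: a toy scalar output f(x) = sum(weights * x**2) replaces a real
# detector output. Only the pixels inside the 3x3 window influence it.
weights = np.zeros((8, 8))
weights[2:5, 3:6] = 1.0

def toy_grad(x):
    # Analytic gradient of f with respect to each pixel.
    return 2.0 * weights * x

image = np.linspace(0.0, 1.0, 64).reshape(8, 8)
saliency = np.abs(smoothgrad(toy_grad, image))
```

With a real detector, `grad_fn` would instead backpropagate one chosen output (a class score or one box coordinate of a selected detection) to the input pixels, and the loop would stay the same.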

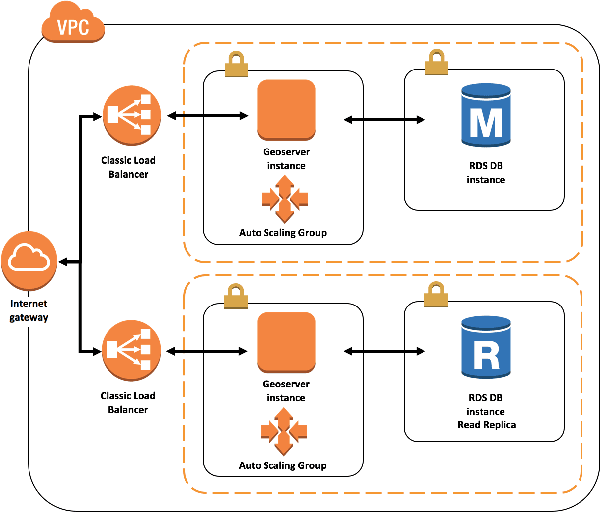

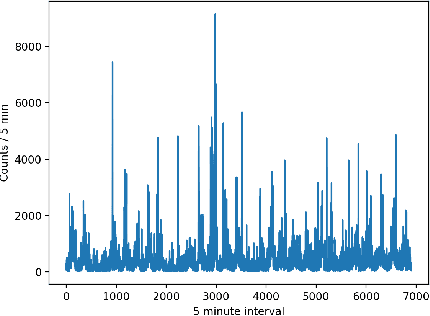

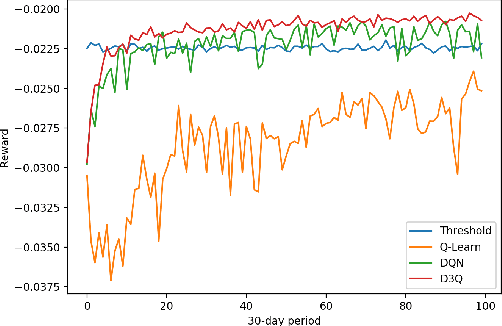

Automated Cloud Provisioning on AWS using Deep Reinforcement Learning

Sep 19, 2017 · Zhiguang Wang, Chul Gwon, Tim Oates, Adam Iezzi

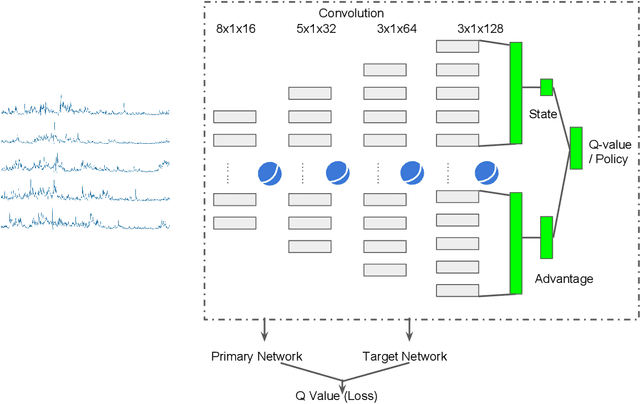

As the use of cloud computing continues to rise, controlling cost becomes increasingly important. Yet there is evidence that 30% to 45% of cloud spend is wasted. Existing tools for cloud provisioning typically rely on highly trained human experts to specify what to monitor, the thresholds that trigger action, and the actions to take. In this paper we explore the use of reinforcement learning (RL) to acquire policies that balance performance and spend, allowing humans to specify what they want rather than how to achieve it and minimizing the need for cloud expertise. Empirical results with tabular, deep, and dueling double deep Q-learning on the CloudSim simulator show the utility of RL and the relative merits of the approaches. We also demonstrate effective policy transfer learning from an extremely simple simulator to CloudSim, with the next step being transfer from CloudSim to a physical Amazon Web Services environment.
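To make the tabular Q-learning idea concrete, here is a minimal sketch on a made-up provisioning problem. Everything in it is an assumption for illustration, not the paper's setup: the five-state VM count, the constant demand of three VMs, the cost and penalty values, and the names `step` and `train` are all hypothetical, and the environment is far simpler than CloudSim.

```python
import random

N_STATES = 5              # state s means s + 1 running VMs (1..5)
ACTIONS = (-1, 0, 1)      # remove a VM, hold, add a VM
DEMAND = 3                # VMs needed to serve the constant toy load
VM_COST = 1.0             # cost per running VM per step
SLA_PENALTY = 5.0         # penalty per missing VM per step

def step(state, action_idx):
    """Apply an action and return (next_state, reward)."""
    next_state = min(max(state + ACTIONS[action_idx], 0), N_STATES - 1)
    vms = next_state + 1
    reward = -VM_COST * vms - SLA_PENALTY * max(0, DEMAND - vms)
    return next_state, reward

def train(episodes=2000, steps=20, alpha=0.1, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(N_STATES)]
    for _ in range(episodes):
        state = rng.randrange(N_STATES)  # random start covers all states
        for _ in range(steps):
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])
            nxt, r = step(state, a)
            # Standard Q-learning update toward the bootstrapped target.
            q[state][a] += alpha * (r + gamma * max(q[nxt]) - q[state][a])
            state = nxt
    return q

q_table = train()
# Greedy action per state, expressed as a change in VM count.
policy = [ACTIONS[max(range(len(ACTIONS)), key=lambda i: row[i])]
          for row in q_table]
```

The reward encodes what the operator wants (low spend, no unmet demand) and leaves the scaling rule itself to be learned, which is the division of labor the abstract describes. In this toy setting the learned policy scales toward the demand level and then holds.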
