Exceeding Computational Complexity Trial-and-Error Dynamic Action and Intelligence

Dec 22, 2022 Chuyu Xiong

Computational complexity is a core theory of computer science that characterizes how difficult a computation is. Many of the problems we have to deal with have high complexity, and this is especially true in AI. This raises a big question: is there a better way to deal with these highly complex problems than remaining bounded by computational complexity? We believe that ideas and methods from intelligence science can be applied to these problems and can help us exceed computational complexity. In this paper, we try to clarify concepts, and we propose definitions such as unparticularized computing, particularized computing, computing agents, and dynamic search. We also propose and discuss a framework, namely trial-and-error + dynamic search. The number partition problem is a well-known NP-complete problem, and we use it as an example to illustrate the ideas discussed.
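For readers unfamiliar with the number partition problem mentioned above, the following is a minimal sketch of its decision version: given a list of positive integers, decide whether it can be split into two parts with equal sums. This is a plain exhaustive search, not the trial-and-error + dynamic search framework proposed in the paper; the function name `find_equal_partition` is hypothetical and chosen only for illustration.

```python
from itertools import combinations


def find_equal_partition(numbers):
    """Return one subset summing to half the total, or None if none exists.

    Assumes `numbers` is a list of positive integers. The search tries every
    subset, so its running time grows exponentially with len(numbers).
    """
    total = sum(numbers)
    if total % 2 != 0:
        # An odd total can never be split into two equal integer sums.
        return None
    target = total // 2
    indices = range(len(numbers))
    for size in range(len(numbers) + 1):
        for chosen in combinations(indices, size):
            if sum(numbers[i] for i in chosen) == target:
                return [numbers[i] for i in chosen]
    return None


if __name__ == "__main__":
    print(find_equal_partition([3, 1, 1, 2, 2, 1]))
    print(find_equal_partition([1, 1, 4]))
```

The exhaustive loop makes the difficulty concrete: each additional number doubles the count of candidate subsets that may need to be examined.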

Sampling and Complexity of Partition Function

Feb 01, 2021 Chuyu Xiong

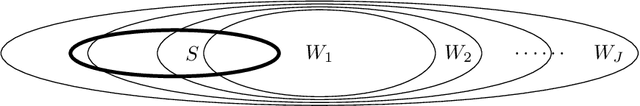

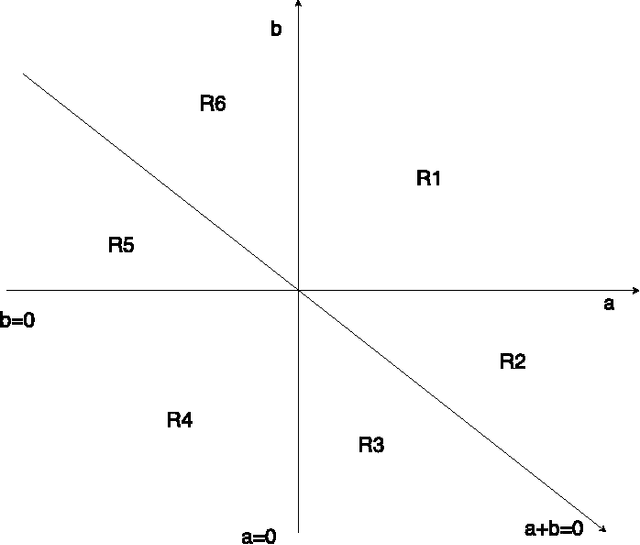

The number partition problem is a well-known problem and one of Karp's 21 NP-complete problems \cite{karp}. The partition function is a boolean function that is equivalent to the number partition problem with the number range restricted. Understanding the computational complexity of the number partition problem and of the partition function is both important and hard. People speculate that new tools and methods are needed for such problems \cite{aaronson}. In our recent research on the universal learning machine \cite{paper5, paper8}, we developed some tools, namely fitting extremum, proper sampling set, and boolean function with parameters (used in a trial-and-error fashion). We found that these tools could be applied to the partition function. In this article, we discuss the setup of the partition function, its properties, and the tools to be used. This approach leads us to prove that the lower bound of the computational complexity of the partition function, as well as the lower bound of the computational complexity of the number partition problem, is exponential in the size of the problem. This implies: {\bf P} $\ne$ {\bf NP} \cite{cook}.

Sampling and Learning for Boolean Function

Jan 21, 2020 Chuyu Xiong

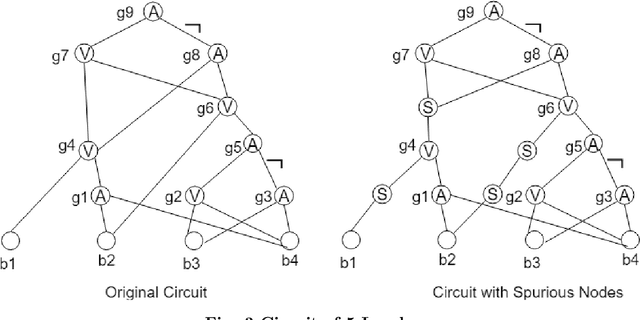

In this article, we continue our study of the universal learning machine by introducing new tools. We first discuss boolean functions and boolean circuits, and we establish one set of tools, namely fitting extremum and proper sampling set. We prove the fundamental relationship between the proper sampling set and the complexity of a boolean circuit. Armed with this set of tools, we then introduce much more effective learning strategies. We show that with such learning strategies and learning dynamics, universal learning can be achieved and requires much less data.

Algebraic Expression of Subjective Spatial and Temporal Patterns

May 31, 2018 Chuyu Xiong

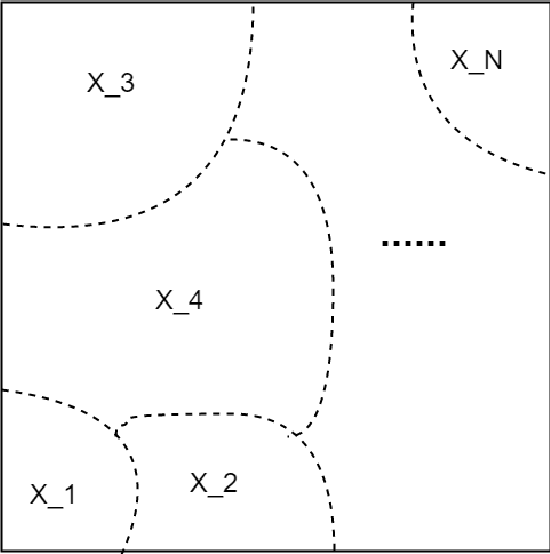

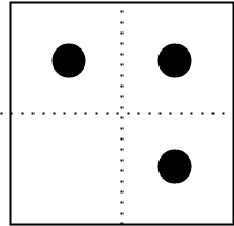

The universal learning machine is a theory that tries to study machine learning from a mathematical point of view. The outside world is reflected inside a universal learning machine according to the patterns of the incoming data. This is the subjective pattern of the learning machine. In [2,4], we discussed the subjective spatial pattern and established a powerful tool, the X-form, which is an algebraic expression for a subjective spatial pattern. However, as that was the initial stage of the study, we discussed only spatial patterns there. Here, we discuss spatial and temporal patterns, and algebraic expressions for them.

What Really is Deep Learning Doing?

Nov 06, 2017 Chuyu Xiong

Deep learning has achieved great success in many areas, from computer vision to natural language processing to game playing, and much more. Yet what deep learning is really doing is still an open question. There is a lot of work in this direction. For example, [5] tried to explain deep learning by the renormalization group, and [6] tried to explain deep learning from the viewpoint of functional approximation. To address this crucial question, here we look at deep learning from the perspective of mechanical learning and the learning machine (see [1], [2]). From this particular angle, we can see deep learning much better and answer with confidence: what deep learning is really doing, why it works well, how it works, and how much data is necessary for learning. We also discuss the advantages and disadvantages of deep learning at the end of this work.

Descriptions of Objectives and Processes of Mechanical Learning

May 31, 2017 Chuyu Xiong

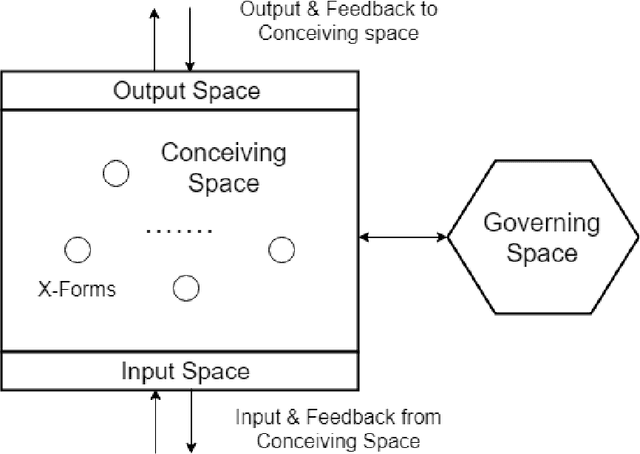

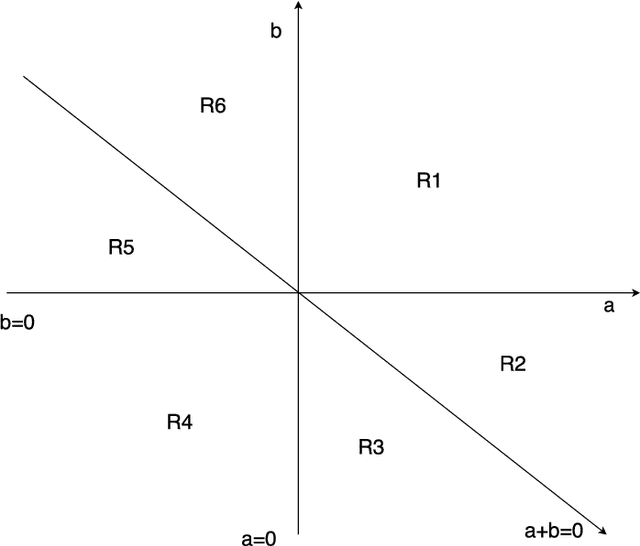

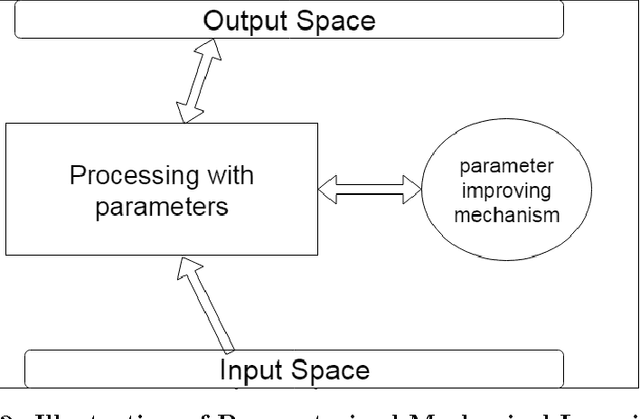

In [1], we introduced mechanical learning and proposed 2 approaches to it. Here, we follow one of these approaches to describe the objects and the processes of learning well. We discuss 2 kinds of patterns: objective patterns and subjective patterns. The subjective pattern is crucial for a learning machine. We prove that for any objective pattern we can find a proper subjective pattern, based upon least base patterns, that expresses the objective pattern well. The X-form is an algebraic expression for a subjective pattern. The collection of X-forms forms the internal representation space, which is the center of a learning machine. We discuss learning by teaching and learning without teaching. We define data sufficiency by X-form. We then discuss some learning strategies. We show that, in each strategy, with sufficient data and with certain capabilities, a learning machine can indeed learn any pattern (universal learning machine). In the appendix, with the knowledge of the learning machine, we try to view deep learning from a different angle, i.e. its internal representation space and its learning dynamics.

Discussion on Mechanical Learning and Learning Machine

Jan 31, 2016 Chuyu Xiong

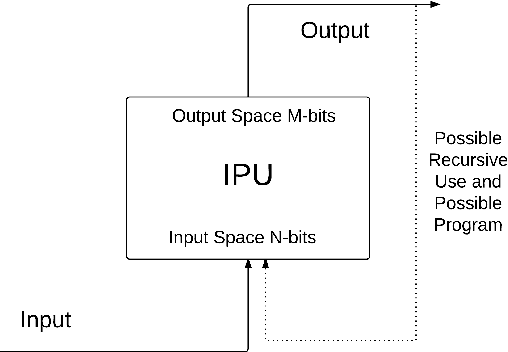

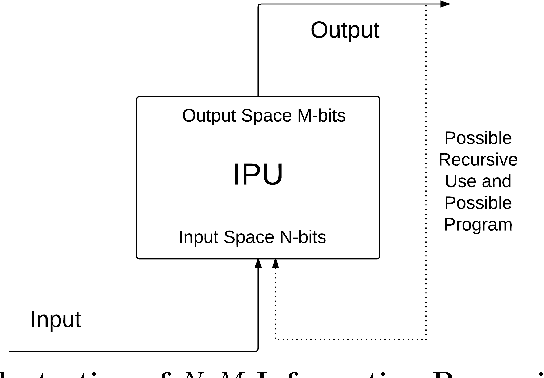

Mechanical learning is a computing system that is based on a set of simple and fixed rules and can learn from incoming data. A learning machine is a system that realizes mechanical learning. Importantly, we emphasize that it is based on a set of simple and fixed rules, in contrast to what is usually called machine learning, which is sophisticated software based on very complicated mathematical theory and often needs human intervention for fine-tuning and manual adjustments. Here, we discuss some basic facts and principles of such a system and try to lay down a framework for further study. We propose 2 directions to approach mechanical learning, like the Church-Turing pair: one is to realize a learning machine, the other is to describe mechanical learning well.
