Enhancing Spatiotemporal Disease Progression Models via Latent Diffusion and Prior Knowledge

May 06, 2024 · Lemuel Puglisi, Daniel C. Alexander, Daniele Ravì

In this work, we introduce Brain Latent Progression (BrLP), a novel spatiotemporal disease progression model based on latent diffusion. BrLP is designed to predict the evolution of diseases at the individual level on 3D brain MRIs. Existing deep generative models developed for this task are primarily data-driven and face challenges in learning disease progressions. BrLP addresses these challenges by incorporating prior knowledge from disease models to enhance the accuracy of predictions. To implement this, we propose to integrate an auxiliary model that infers volumetric changes in various brain regions. Additionally, we introduce Latent Average Stabilization (LAS), a novel technique to improve spatiotemporal consistency of the predicted progression. BrLP is trained and evaluated on a large dataset comprising 11,730 T1-weighted brain MRIs from 2,805 subjects, collected from three publicly available, longitudinal Alzheimer's Disease (AD) studies. In our experiments, we compare the MRI scans generated by BrLP with the actual follow-up MRIs available from the subjects, in both cross-sectional and longitudinal settings. BrLP demonstrates significant improvements over existing methods, with an increase of 22% in volumetric accuracy across AD-related brain regions and 43% in image similarity to the ground-truth scans. The ability of BrLP to generate conditioned 3D scans at the subject level, along with the novelty of integrating prior knowledge to enhance accuracy, represents a significant advancement in disease progression modeling, opening new avenues for precision medicine. The code of BrLP is available at the following link: https://github.com/LemuelPuglisi/BrLP.
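The abstract names Latent Average Stabilization but does not describe its mechanics. As a minimal sketch of one reading suggested by the name, the snippet below runs a stochastic latent predictor several times and averages the resulting latents element-wise, which reduces the variance of the prediction. The `noisy_predictor` function, the latent size, the noise level, and the number of runs `m` are hypothetical stand-ins for illustration, not BrLP components.

```python
import random
from statistics import mean


def noisy_predictor(true_latent, rng, noise_std=0.5):
    """Hypothetical stand-in for one stochastic inference run.

    Returns the target latent corrupted by Gaussian noise, mimicking the
    run-to-run variability of a sampling-based generative model.
    """
    return [v + rng.gauss(0.0, noise_std) for v in true_latent]


def latent_average(predict, m):
    """Average m independent latent predictions element-wise."""
    runs = [predict() for _ in range(m)]
    return [mean(values) for values in zip(*runs)]


def squared_error(a, b):
    """Sum of squared differences between two latent vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


rng = random.Random(0)
true_latent = [rng.uniform(-1.0, 1.0) for _ in range(256)]  # toy latent code

# One run versus the average of m = 16 runs (m is an assumed value).
single = noisy_predictor(true_latent, rng)
averaged = latent_average(lambda: noisy_predictor(true_latent, rng), m=16)

err_single = squared_error(single, true_latent)
err_averaged = squared_error(averaged, true_latent)
print(f"single-run error:   {err_single:.2f}")
print(f"averaged-run error: {err_averaged:.2f}")
```

In this toy setting the averaged latent sits much closer to the target than any single run, at the cost of `m` times more inference.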

DeepBrainPrint: A Novel Contrastive Framework for Brain MRI Re-Identification

Feb 25, 2023 · Lemuel Puglisi, Frederik Barkhof, Daniel C. Alexander, Geoffrey JM Parker, Arman Eshaghi, Daniele Ravì

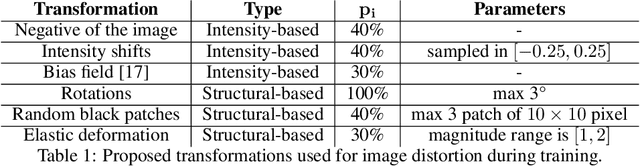

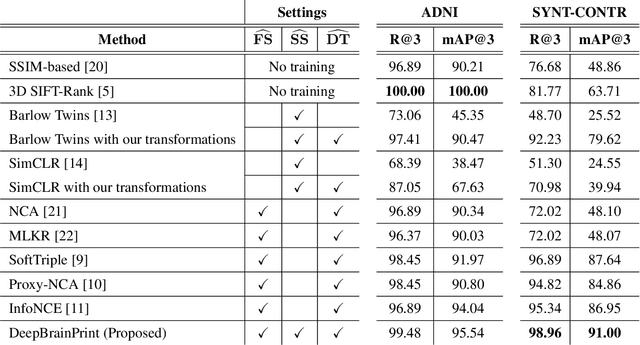

Recent advances in MRI have led to the creation of large datasets. With the increase in data volume, it has become difficult to locate previous scans of the same patient within these datasets (a process known as re-identification). To address this issue, we propose an AI-powered medical imaging retrieval framework called DeepBrainPrint, which is designed to retrieve brain MRI scans of the same patient. Our framework is a semi-self-supervised contrastive deep learning approach with three main innovations. First, we use a combination of self-supervised and supervised paradigms to create an effective brain fingerprint from MRI scans that can be used for real-time image retrieval. Second, we use a special weighting function to guide the training and improve model convergence. Third, we introduce new imaging transformations to improve retrieval robustness in the presence of intensity variations (i.e. different scan contrasts), and to account for age and disease progression in patients. We tested DeepBrainPrint on a large dataset of T1-weighted brain MRIs from the Alzheimer's Disease Neuroimaging Initiative (ADNI) and on a synthetic dataset designed to evaluate retrieval performance with different image modalities. Our results show that DeepBrainPrint outperforms previous methods, including simple similarity metrics and more advanced contrastive deep learning frameworks.
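Once each scan has been mapped to a fingerprint vector, retrieval reduces to a nearest-neighbour search in that embedding space. The sketch below illustrates the idea with cosine similarity over a small in-memory gallery. The similarity measure, the three-dimensional toy fingerprints, and the scan identifiers are assumptions made for illustration; the abstract does not specify how DeepBrainPrint ranks candidates.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query, gallery, top_k=1):
    """Return the top_k (scan_id, similarity) pairs, most similar first."""
    scored = [
        (scan_id, cosine_similarity(query, embedding))
        for scan_id, embedding in gallery.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy fingerprints; a real system would obtain these from a trained encoder.
gallery = {
    "patient_A_scan_1": [0.9, 0.1, 0.0],
    "patient_B_scan_1": [0.1, 0.8, 0.3],
    "patient_C_scan_1": [0.0, 0.2, 0.9],
}
query = [0.85, 0.15, 0.05]  # hypothetical follow-up scan of patient A

best_id, best_score = retrieve(query, gallery)[0]
print(best_id, round(best_score, 3))
```

A linear scan like this is enough for small galleries; larger collections would typically precompute normalised fingerprints or use an approximate nearest-neighbour index.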

Adversarial training with cycle consistency for unsupervised super-resolution in endomicroscopy

Jan 21, 2019 · Daniele Ravì, Agnieszka Barbara Szczotka, Stephen P Pereira, Tom Vercauteren

In recent years, endomicroscopy has become increasingly used for diagnostic purposes and interventional guidance. It can provide intraoperative aids for real-time tissue characterization and can help to perform visual investigations aimed, for example, at discovering epithelial cancers. Due to physical constraints on the acquisition process, endomicroscopy images still have a low number of informative pixels, which hampers their quality. Post-processing techniques, such as Super-Resolution (SR), are a potential solution to increase the quality of these images. SR techniques are often supervised, requiring aligned pairs of low-resolution (LR) and high-resolution (HR) image patches to train a model. However, in our domain, the lack of HR images hinders the collection of such pairs and makes supervised training unsuitable. For this reason, we propose an unsupervised SR framework based on an adversarial deep neural network with a physically-inspired cycle consistency, designed to impose some acquisition properties on the super-resolved images. Our framework can exploit HR images, regardless of the domain they come from, to transfer the quality of the HR images to the initial LR images. This property can be particularly useful in all situations where LR/HR pairs are not available during training. Our quantitative analysis, validated using a database of 238 endomicroscopy video sequences from 143 patients, shows the ability of the pipeline to produce convincing super-resolved images. A Mean Opinion Score (MOS) study also confirms this quantitative image quality assessment.
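A cycle-consistency term of this kind can be illustrated by degrading the super-resolved image back to the LR grid and comparing it with the original LR input. The sketch below assumes block averaging as the degradation and a mean absolute difference as the distance; both are simplifications chosen for illustration, since the abstract does not detail the physically-inspired acquisition model.

```python
def downsample(image, factor):
    """Block-average a 2D image (list of rows) by an integer factor.

    Assumes the image height and width are divisible by factor.
    """
    height, width = len(image), len(image[0])
    out = []
    for r in range(0, height, factor):
        row = []
        for c in range(0, width, factor):
            block = [image[r + i][c + j]
                     for i in range(factor) for j in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def cycle_consistency_loss(sr_image, lr_image, factor):
    """Mean absolute difference between the degraded SR image and the LR input."""
    degraded = downsample(sr_image, factor)
    diffs = [abs(d - l)
             for d_row, l_row in zip(degraded, lr_image)
             for d, l in zip(d_row, l_row)]
    return sum(diffs) / len(diffs)


lr = [[0.2, 0.8],
      [0.6, 0.4]]

# A consistent SR candidate: every 2x2 block averages to the matching LR pixel.
sr_consistent = [[0.1, 0.3, 0.7, 0.9],
                 [0.3, 0.1, 0.9, 0.7],
                 [0.5, 0.7, 0.3, 0.5],
                 [0.7, 0.5, 0.5, 0.3]]

# An inconsistent candidate that brightens every pixel by 0.2.
sr_shifted = [[v + 0.2 for v in row] for row in sr_consistent]

loss_consistent = cycle_consistency_loss(sr_consistent, lr, factor=2)
loss_shifted = cycle_consistency_loss(sr_shifted, lr, factor=2)
print(loss_consistent, loss_shifted)
```

The consistent candidate incurs essentially zero penalty while the brightened one is penalised by the size of the shift. In training, a term like this would be combined with the adversarial loss so that the generator adds detail without drifting away from the acquired data.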

Effective deep learning training for single-image super-resolution in endomicroscopy exploiting video-registration-based reconstruction

Mar 23, 2018 · Daniele Ravì, Agnieszka Barbara Szczotka, Dzhoshkun Ismail Shakir, Stephen P Pereira, Tom Vercauteren

Purpose: Probe-based Confocal Laser Endomicroscopy (pCLE) is a recent imaging modality that allows performing in vivo optical biopsies. The design of pCLE hardware, and its reliance on an optical fibre bundle, fundamentally limits the image quality with a few tens of thousands of fibres, each acting as the equivalent of a single-pixel detector, assembled into a single fibre bundle. Video-registration techniques can be used to estimate high-resolution (HR) images by exploiting the temporal information contained in a sequence of low-resolution (LR) images. However, the alignment of LR frames, required for the fusion, is computationally demanding and prone to artefacts. Methods: In this work, we propose a novel synthetic data generation approach to train exemplar-based Deep Neural Networks (DNNs). HR pCLE images with enhanced quality are recovered by the models trained on pairs of estimated HR images (generated by the video-registration algorithm) and realistic synthetic LR images. The performance of three different state-of-the-art DNN techniques was analysed on a Smart Atlas database of 8806 images from 238 pCLE video sequences. The results were validated through an extensive Image Quality Assessment (IQA) that takes into account different quality scores, including a Mean Opinion Score (MOS). Results: Results indicate that the proposed solution produces an effective improvement in the quality of the obtained reconstructed image. Conclusion: The proposed training strategy and associated DNNs allow us to perform convincing super-resolution of pCLE images.
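The pairing of estimated HR images with synthetic LR counterparts can be sketched as follows. The degradation used here (block averaging followed by additive Gaussian noise and clipping to [0, 1]) is a deliberately simple stand-in, and the function names, scale factor, and noise level are hypothetical; the abstract does not describe how the realistic LR images are actually simulated.

```python
import random


def synthesize_lr(hr_image, factor, noise_std, rng):
    """Block-average an HR image, add Gaussian noise, and clip to [0, 1].

    Assumes the image height and width are divisible by factor.
    """
    lr = []
    for r in range(0, len(hr_image), factor):
        row = []
        for c in range(0, len(hr_image[0]), factor):
            block = [hr_image[r + i][c + j]
                     for i in range(factor) for j in range(factor)]
            value = sum(block) / len(block) + rng.gauss(0.0, noise_std)
            row.append(min(1.0, max(0.0, value)))
        lr.append(row)
    return lr


def make_training_pairs(hr_images, factor=2, noise_std=0.05, seed=0):
    """Build (synthetic LR, HR) pairs for supervised super-resolution training."""
    rng = random.Random(seed)
    return [(synthesize_lr(hr, factor, noise_std, rng), hr) for hr in hr_images]


# Toy 8x8 "HR" images standing in for video-registration reconstructions.
data_rng = random.Random(1)
hr_images = [[[data_rng.random() for _ in range(8)] for _ in range(8)]
             for _ in range(3)]

pairs = make_training_pairs(hr_images)
lr_example, hr_example = pairs[0]
print(len(pairs), len(lr_example), len(lr_example[0]))
```

Because each LR image is derived from its HR target, the pairs are aligned by construction, which sidesteps the need to register real LR and HR acquisitions.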
