Stochastic Configuration Machines: FPGA Implementation

Oct 30, 2023 · Matthew J. Felicetti, Dianhui Wang

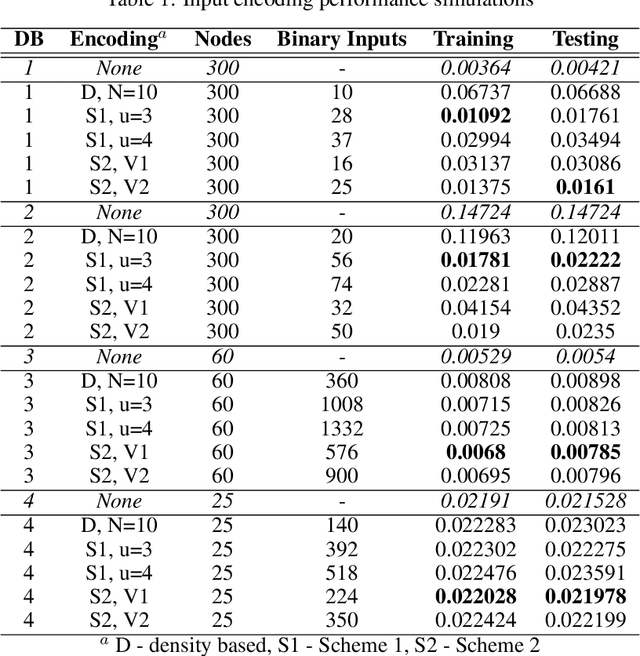

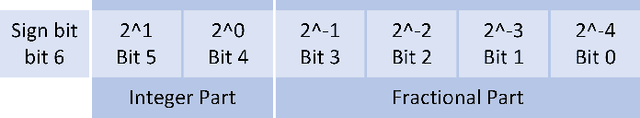

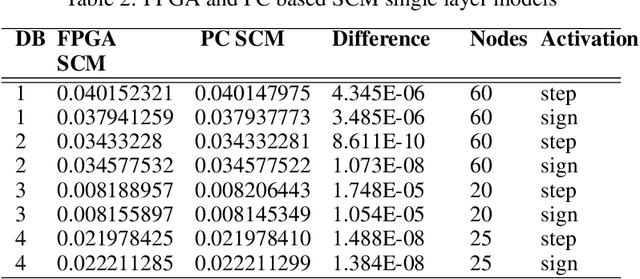

Neural networks for industrial applications generally face additional constraints such as response speed, memory size and power usage. Randomized learners can address some of these issues. However, hardware solutions can provide better resource reduction whilst maintaining the model's performance. Stochastic configuration networks (SCNs) are a prime choice in industrial applications due to their merits and feasibility for data modelling. Stochastic Configuration Machines (SCMs) extend SCNs to reduce memory requirements by limiting the randomized weights to binary values with a single scalar for each node, and by using a mechanism model to improve learning performance and result interpretability. This paper aims to implement SCM models on a field programmable gate array (FPGA) and introduce binary-coded inputs to the algorithm. Results are reported for two benchmark and two industrial datasets, including SCMs with single-layer and deep architectures.
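The binary-weight idea in this abstract can be illustrated with a minimal sketch. It is not the authors' SCM formulation or their FPGA design: the sigmoid activation, the {-1, +1} encoding, the 32-bit scalar width and all function names are assumptions made for illustration only.

```python
import math
import random

def make_binary_node(n_inputs, scale, rng):
    """Draw one hypothetical hidden node: signs in {-1, +1} plus one real scalar."""
    signs = [rng.choice((-1, 1)) for _ in range(n_inputs)]
    return signs, scale

def node_output(x, signs, scale, bias=0.0):
    """Evaluate the node; the sigmoid activation is an assumption."""
    # With +/-1 weights the dot product needs only additions and subtractions.
    acc = sum(xi if s > 0 else -xi for xi, s in zip(x, signs))
    return 1.0 / (1.0 + math.exp(-(scale * acc + bias)))

def storage_bits(n_inputs, n_nodes, float_bits=32):
    """Return (binary-weight storage, full-precision storage) in bits.

    Biases are ignored in both counts to keep the comparison simple.
    """
    binary = n_nodes * (n_inputs + float_bits)  # 1 bit per weight + 1 scalar per node
    dense = n_nodes * n_inputs * float_bits     # one float per weight
    return binary, dense

if __name__ == "__main__":
    rng = random.Random(0)
    signs, scale = make_binary_node(8, 0.5, rng)
    print(node_output([0.1] * 8, signs, scale))
    print(storage_bits(64, 100))
```

Under these assumptions, a layer of 100 nodes with 64 inputs would need 9,600 bits for its hidden weights instead of 204,800, which is the kind of saving that makes on-chip storage attractive.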

Stochastic Configuration Machines for Industrial Artificial Intelligence

Sep 11, 2023 · Dianhui Wang, Matthew J. Felicetti

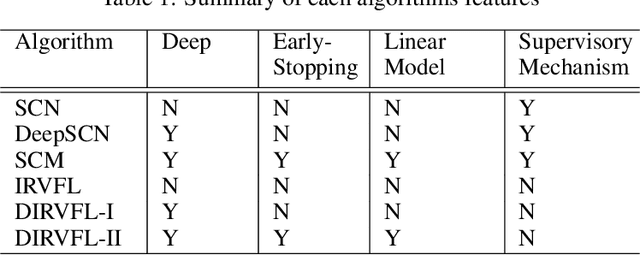

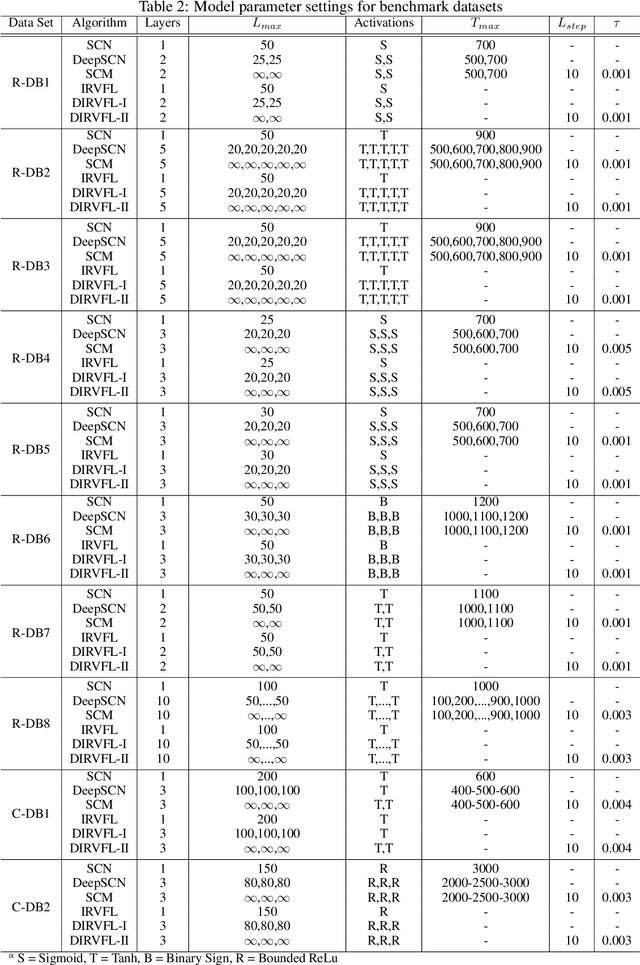

Real-time predictive modelling with a desired accuracy is highly desirable in industrial artificial intelligence (IAI), where neural networks play a key role. Neural networks in IAI require powerful, high-performance computing devices to process large volumes of floating-point data. Based on stochastic configuration networks (SCNs), this paper proposes a new randomized learner model, termed stochastic configuration machines (SCMs), that emphasises effective modelling and reduced data size, both of which are valuable for industrial applications. Compared to SCNs and random vector functional-link (RVFL) nets with binarized implementation, the model storage of SCMs can be significantly compressed while retaining favourable prediction performance. Besides the architecture of the SCM learner model and its learning algorithm, as an important part of this contribution, we also provide a theoretical basis on the learning capacity of SCMs by analysing the model's complexity. Experimental studies are carried out over some benchmark datasets and three industrial applications. The results demonstrate that SCM has great potential for dealing with industrial data analytics.

Two Dimensional Stochastic Configuration Networks for Image Data Analytics

Sep 06, 2018 · Ming Li, Dianhui Wang

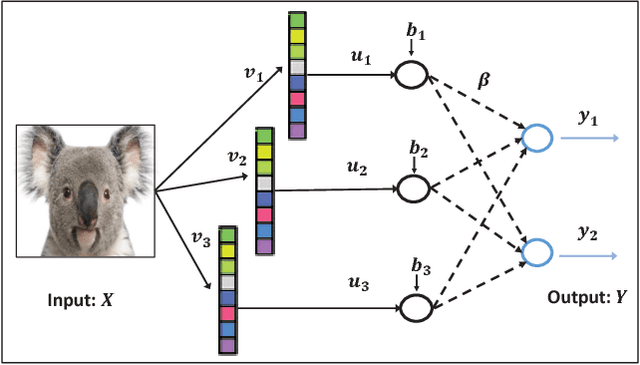

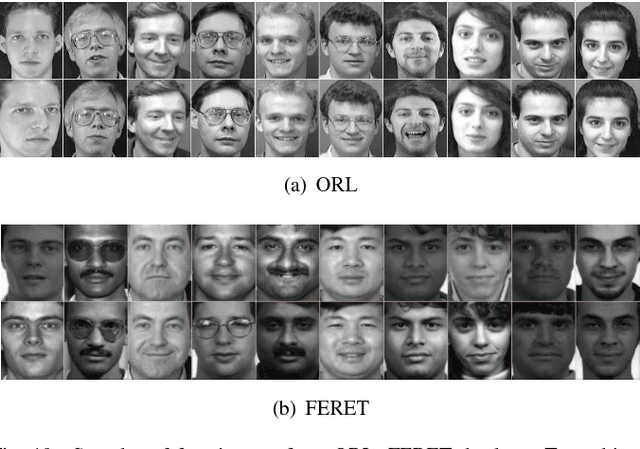

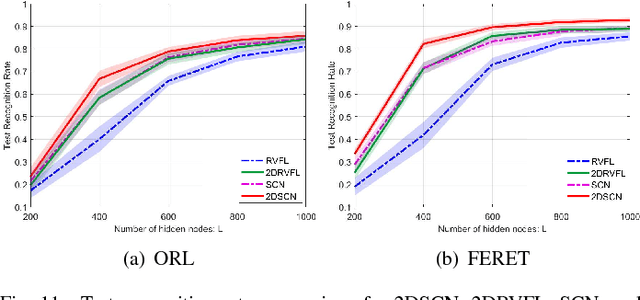

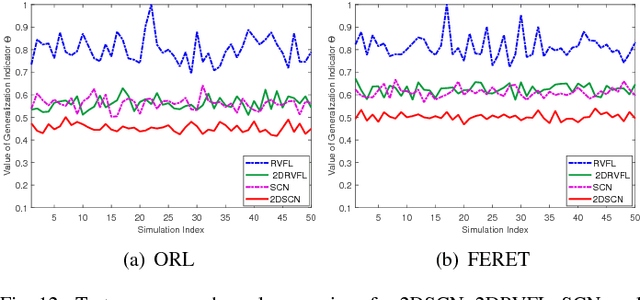

Stochastic configuration networks (SCNs), as a class of randomized learner models, have been successfully employed in data analytics due to their universal approximation capability and fast modelling property. The technical essence lies in stochastically configuring hidden nodes (or basis functions) based on a supervisory mechanism rather than the data-independent randomization usually adopted for building randomized neural networks. For image data modelling tasks, the use of one-dimensional SCNs potentially destroys the spatial information of images and may result in undesirable performance. This paper extends the original SCNs to a two-dimensional version, termed 2DSCNs, for fast building of randomized learners with matrix inputs. Some theoretical analyses on the goodness of 2DSCNs against SCNs, including the complexity of the random parameter space and the superiority of generalization, are presented. Empirical results over one regression, four benchmark handwritten digit classification, and two human face recognition datasets demonstrate that the proposed 2DSCNs perform favourably and show good potential for image data analytics.
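To make the matrix-input idea concrete, here is a small sketch of one hidden node that keeps the image as a matrix. The abstract does not state the node's form, so the bilinear expression `u^T X v + b`, the sigmoid activation and the function names are assumptions for illustration. The second helper compares how many random parameters such a node would need against a node acting on the flattened image.

```python
import math

def node_2d(X, u, v, b=0.0):
    """Hypothetical 2D hidden node: sigmoid(u^T X v + b) for a matrix input X."""
    s = sum(u[i] * X[i][j] * v[j]
            for i in range(len(u))
            for j in range(len(v)))
    return 1.0 / (1.0 + math.exp(-(s + b)))

def random_param_counts(rows, cols):
    """Random parameters per node: (matrix-input node, flattened-input node)."""
    two_d = rows + cols + 1   # u, v and a bias
    one_d = rows * cols + 1   # one weight per pixel and a bias
    return two_d, one_d

if __name__ == "__main__":
    X = [[1.0, 2.0], [3.0, 4.0]]
    print(node_2d(X, u=[1.0, 0.0], v=[0.0, 1.0]))
    print(random_param_counts(28, 28))
```

For a 28 by 28 image, this assumed form would use 57 random parameters per node rather than 785, which is one way to read the abstract's remark about the complexity of the random parameter space.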

Deep Stacked Stochastic Configuration Networks for Non-Stationary Data Streams

Aug 15, 2018 · Mahardhika Pratama, Dianhui Wang

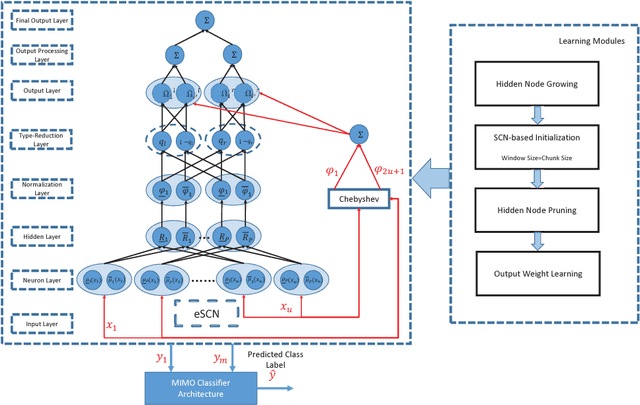

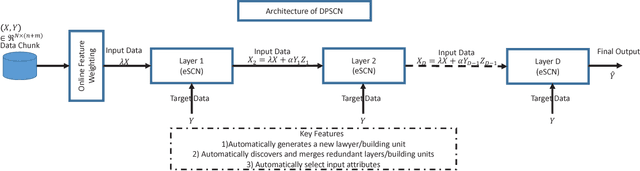

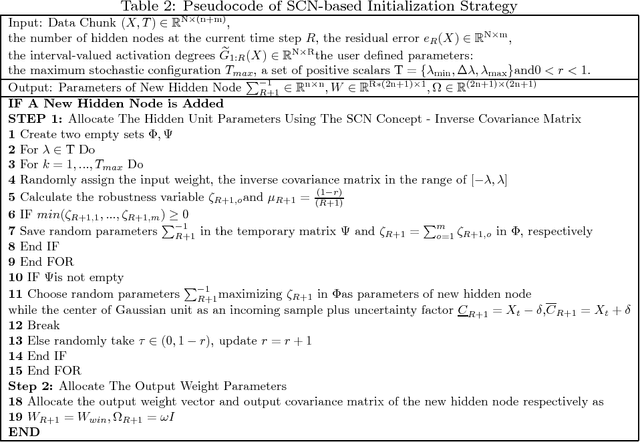

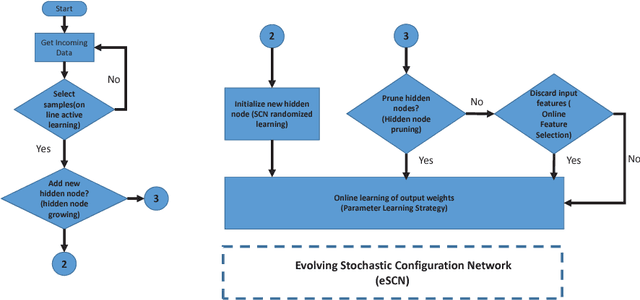

The concept of stochastic configuration networks (SCNs) offers a solid framework for fast implementation of feedforward neural networks through randomized learning. Unlike conventional randomized approaches, SCNs provide an avenue to select an appropriate scope of random parameters to ensure the universal approximation property. In this paper, a deep version of stochastic configuration networks, namely the deep stacked stochastic configuration network (DSSCN), is proposed for modelling non-stationary data streams. As an extension of evolving stochastic configuration networks (eSCNs), this work contributes a way to grow and shrink the structure of deep stochastic configuration networks autonomously from data streams. The performance of DSSCN is evaluated on six benchmark datasets. Simulation results, compared with prominent data stream algorithms, show that the proposed method is capable of achieving comparable accuracy and evolving a compact and parsimonious deep stacked network architecture.

Deep Stochastic Configuration Networks with Universal Approximation Property

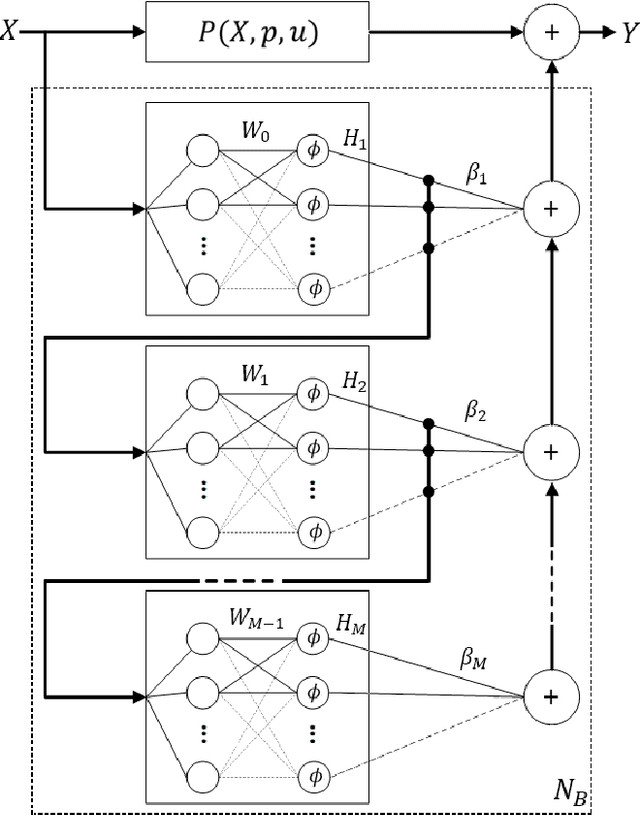

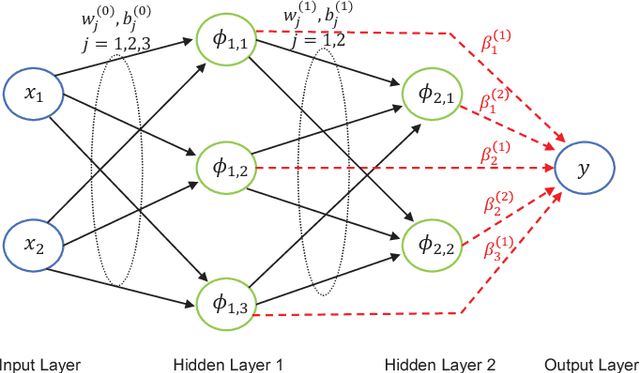

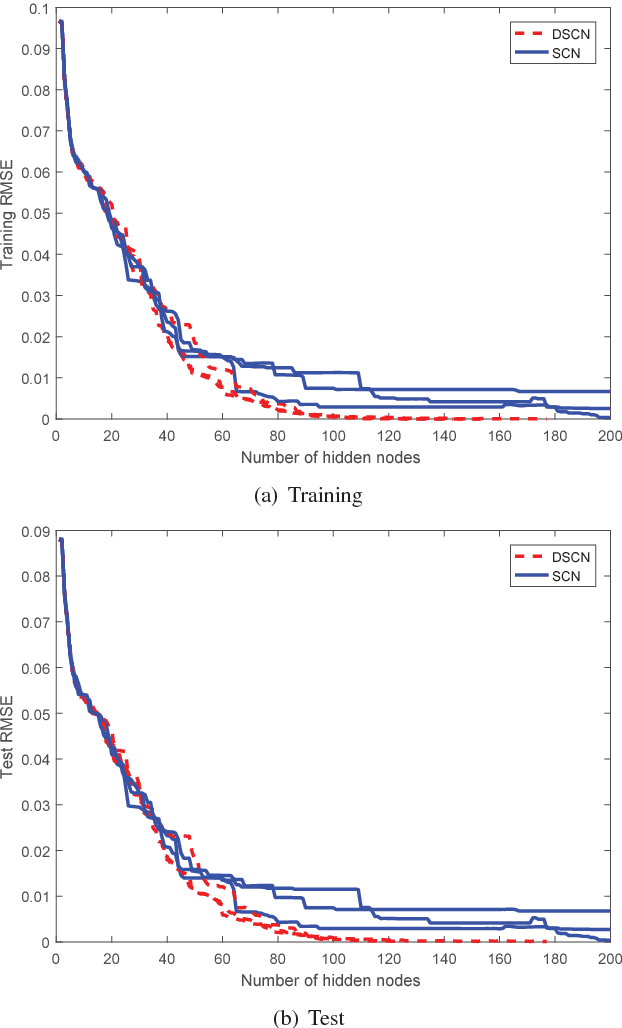

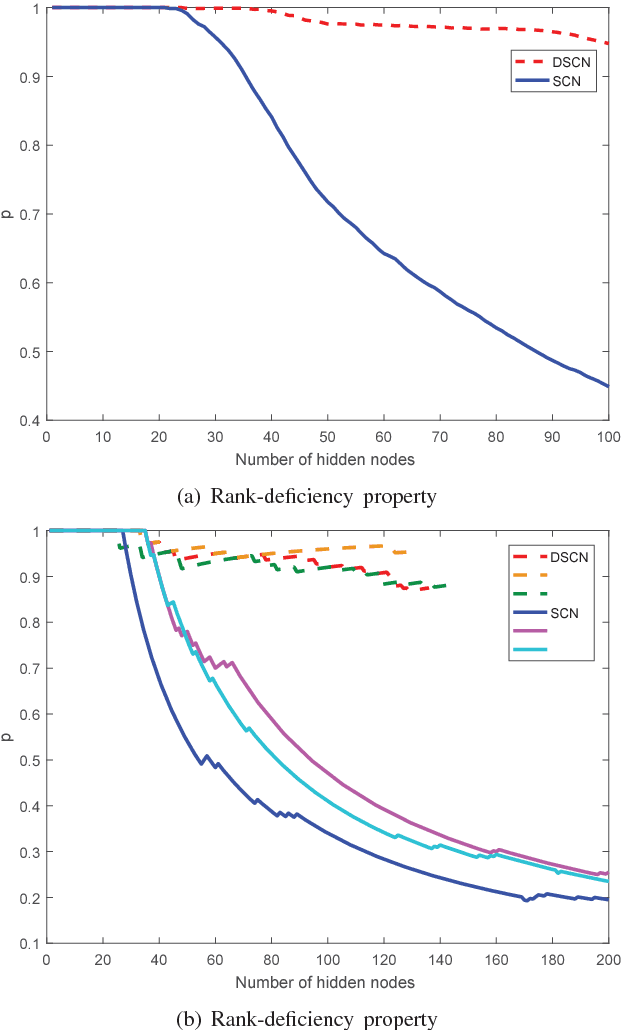

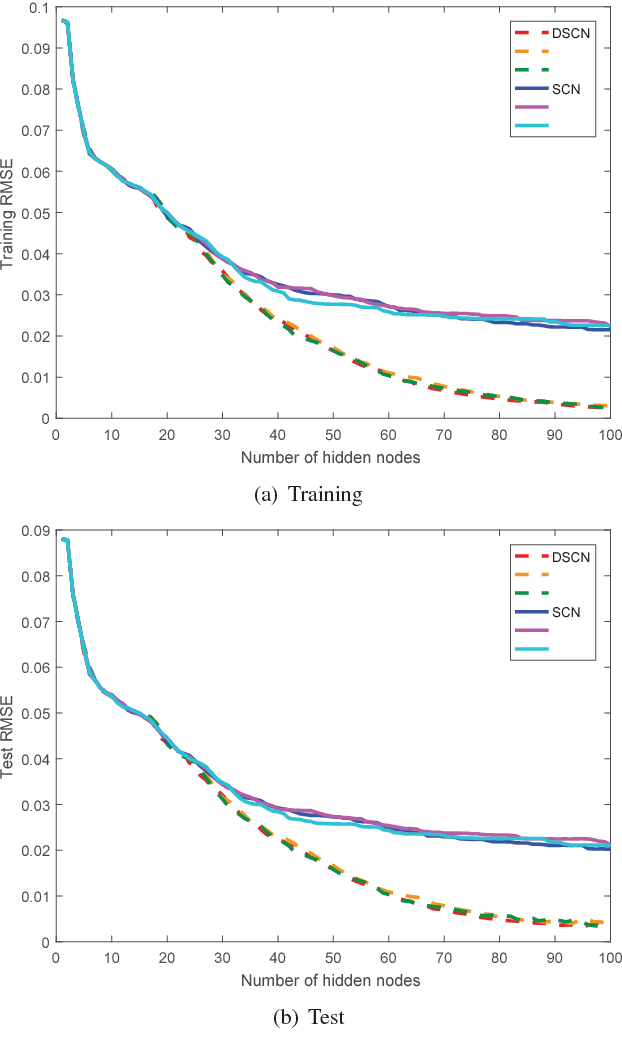

Mar 16, 2018 · Dianhui Wang, Ming Li

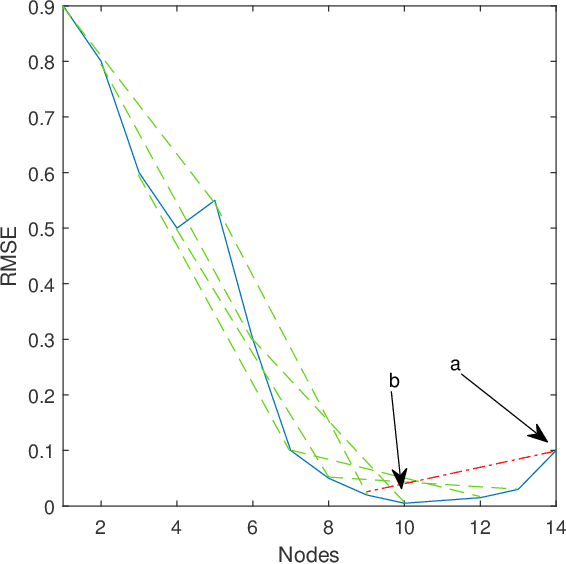

This paper develops a randomized approach for incrementally building deep neural networks, where a supervisory mechanism is proposed to constrain the random assignment of the weights and biases, and all the hidden layers have direct links to the output layer. A fundamental result on the universal approximation property is established for such a class of randomized learner models, namely deep stochastic configuration networks (DeepSCNs). A learning algorithm is presented to implement DeepSCNs with either a specific architecture or self-organization. The read-out weights attached to all direct links from each hidden layer to the output layer are evaluated by the least squares method. Given a set of training examples, DeepSCNs can speedily produce a learning representation, that is, a collection of random basis functions with the cascaded inputs together with the read-out weights. An empirical study on a function approximation task is carried out to demonstrate some properties of the proposed deep learner model.
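The structure described here, with cascaded hidden layers that all link directly to the output, can be sketched as follows. This is only an illustration of the wiring: the supervisory mechanism that constrains the random draws is omitted because the abstract does not state it, and the sigmoid activation, the uniform sampling range `scope` and the function names are assumptions.

```python
import math
import random

def random_layer(n_in, n_out, scope, rng):
    """Draw weights and biases uniformly from [-scope, scope] (no supervisory check)."""
    W = [[rng.uniform(-scope, scope) for _ in range(n_in)] for _ in range(n_out)]
    b = [rng.uniform(-scope, scope) for _ in range(n_out)]
    return W, b

def layer_forward(x, layer):
    """Sigmoid outputs of one hidden layer for input vector x."""
    W, b = layer
    return [1.0 / (1.0 + math.exp(-(sum(w * xi for w, xi in zip(row, x)) + bi)))
            for row, bi in zip(W, b)]

def cascaded_features(x, layers):
    """Feed each layer's output into the next and collect every layer's outputs."""
    feats, h = [], x
    for layer in layers:
        h = layer_forward(h, layer)
        feats.extend(h)  # direct link from this hidden layer to the output
    return feats

def predict(x, layers, beta):
    """Linear read-out over the outputs of all hidden layers."""
    return sum(bk * fk for bk, fk in zip(beta, cascaded_features(x, layers)))

if __name__ == "__main__":
    rng = random.Random(0)
    layers = [random_layer(3, 4, 1.0, rng), random_layer(4, 2, 1.0, rng)]
    print(len(cascaded_features([0.2, -0.1, 0.5], layers)))
```

In a full implementation, `beta` would be obtained by least squares over the collected features for all training examples, as the abstract describes; here it is simply passed in.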

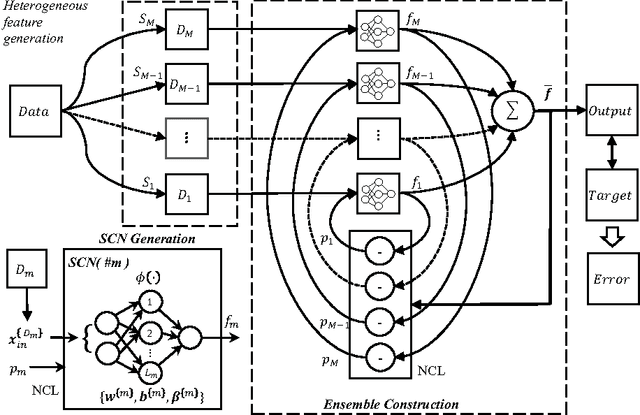

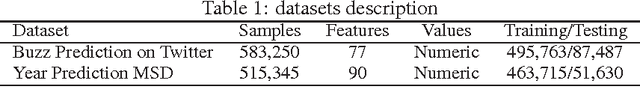

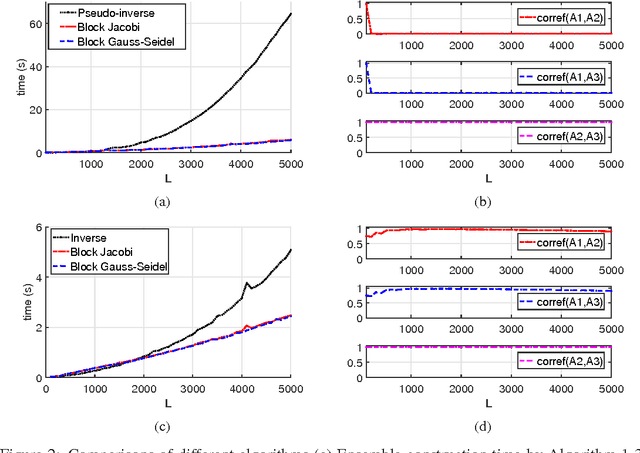

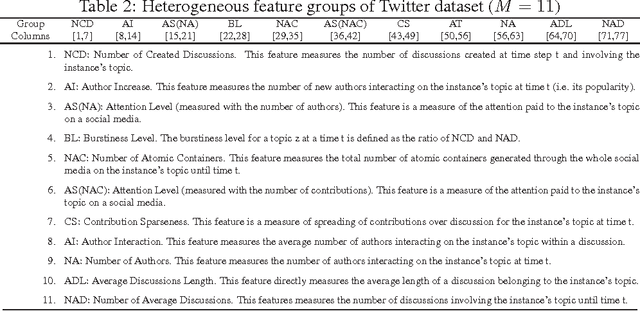

Stochastic Configuration Networks Ensemble for Large-Scale Data Analytics

Jul 05, 2017 · Dianhui Wang, Caihao Cui

This paper presents a fast decorrelated neuro-ensemble with heterogeneous features for large-scale data analytics, where stochastic configuration networks (SCNs) are employed as base learner models and the well-known negative correlation learning (NCL) strategy is adopted to evaluate the output weights. Feeding a large number of samples into the SCN base models yields a very large linear equation system that is difficult to solve by computing the pseudo-inverse used in the least squares method. Based on the group of heterogeneous features, the block Jacobi and Gauss-Seidel methods are employed to iteratively evaluate the output weights, and a convergence analysis is given with a demonstration of the uniqueness of these iterative solutions. Experiments with comparisons on two large-scale datasets are carried out, and the system robustness with respect to the regularizing factor used in NCL is reported. Results indicate that the proposed ensemble learning techniques have good potential for resolving large-scale data modelling problems.
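As a rough illustration of solving for output weights iteratively instead of forming a pseudo-inverse, the sketch below applies plain Gauss-Seidel (block size one) to the normal equations of a small least squares problem. It is a simplification, not the paper's block scheme or its NCL objective: the `ridge` term, the function names and the toy data are assumptions.

```python
def normal_equations(H, t, ridge=0.0):
    """Build A = H^T H + ridge * I and b = H^T t for hidden outputs H and targets t."""
    n = len(H[0])
    A = [[sum(row[i] * row[j] for row in H) + (ridge if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    b = [sum(row[i] * ti for row, ti in zip(H, t)) for i in range(n)]
    return A, b

def gauss_seidel(A, b, iters=200, tol=1e-12):
    """Solve A x = b by Gauss-Seidel sweeps; converges when A is symmetric positive definite."""
    n = len(b)
    x = [0.0] * n
    for _ in range(iters):
        max_delta = 0.0
        for i in range(n):
            # Use the newest values of x[j] as soon as they are available.
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            new = (b[i] - s) / A[i][i]
            max_delta = max(max_delta, abs(new - x[i]))
            x[i] = new
        if max_delta < tol:
            break
    return x

if __name__ == "__main__":
    H = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy hidden-layer outputs
    t = [1.0, 2.0, 3.0]                       # toy targets
    A, b = normal_equations(H, t)
    print(gauss_seidel(A, b))
```

Each sweep touches one unknown at a time and never inverts the full matrix, which is the property that makes this family of methods attractive when the system is too large for a direct pseudo-inverse.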
