Nodule detection and generation on chest X-rays: NODE21 Challenge

Jan 04, 2024 · Ecem Sogancioglu, Bram van Ginneken, Finn Behrendt, Marcel Bengs, Alexander Schlaefer, Miron Radu, Di Xu, Ke Sheng, Fabien Scalzo, Eric Marcus, Samuele Papa, Jonas Teuwen, Ernst Th. Scholten, Steven Schalekamp, Nils Hendrix, Colin Jacobs, Ward Hendrix, Clara I Sánchez, Keelin Murphy

Pulmonary nodules may be an early manifestation of lung cancer, the leading cause of cancer-related deaths among both men and women. Numerous studies have established that deep learning methods can achieve high performance in the detection of lung nodules in chest X-rays. However, the lack of gold-standard public datasets slows research progress and prevents benchmarking of methods for this task. To address this, we organized a public research challenge, NODE21, aimed at the detection and generation of lung nodules in chest X-rays. The detection track evaluates state-of-the-art nodule detection systems, while the generation track measures how useful nodule generation algorithms are for augmenting training data and thereby improving the performance of detection systems. This paper summarizes the results of the NODE21 challenge and reports extensive additional experiments examining the impact of synthetically generated nodule training images on detection algorithm performance.

Kandinsky Conformal Prediction: Efficient Calibration of Image Segmentation Algorithms

Nov 20, 2023 · Joren Brunekreef, Eric Marcus, Ray Sheombarsing, Jan-Jakob Sonke, Jonas Teuwen

Image segmentation algorithms can be understood as a collection of pixel classifiers whose outcomes are correlated for nearby pixels. Classifier models can be calibrated using Inductive Conformal Prediction, but this requires holding back a sufficiently large calibration dataset to compute the distribution of non-conformity scores of the model's predictions. If only marginal calibration at the image level is required, the calibration set consists of all individual pixels in the images available for calibration. However, if the goal is proper calibration of each individual pixel classifier, the calibration set consists of individual images. When data are scarce, as in the medical domain, it may not always be possible to set aside enough images for this pixel-level calibration. The method we propose, dubbed "Kandinsky calibration", exploits the spatial structure present in the distribution of natural images to calibrate the classifiers of "similar" pixels simultaneously. This can be seen as an intermediate approach between marginal (imagewise) and conditional (pixelwise) calibration, in which non-conformity scores are aggregated over similar image regions, making more efficient use of the images available for calibration. We run experiments on segmentation algorithms trained and calibrated on subsets of the public MS-COCO and Medical Decathlon datasets, demonstrating that the Kandinsky calibration method can significantly improve coverage. Compared with both pixelwise and imagewise calibration when little calibration data is available, the Kandinsky method achieves much lower coverage errors, indicating its data efficiency.
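
The trade-off between imagewise, pixelwise and region-aggregated calibration can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: it assumes the non-conformity score is 1 minus the predicted probability of the true class, all function names are hypothetical, and the grouping of "similar" pixels is a hypothetical fixed split into quadrants, whereas the method described above derives similar regions from the data.

```python
import numpy as np


def conformal_threshold(scores, alpha):
    """Inductive conformal threshold: the ceil((n + 1)(1 - alpha))-th smallest score."""
    scores = np.sort(np.asarray(scores, dtype=float).ravel())
    n = scores.size
    k = int(np.ceil((n + 1) * (1 - alpha)))
    if k > n:
        return np.inf  # too few calibration scores: the threshold is unbounded
    return scores[k - 1]


def imagewise_thresholds(S, alpha):
    """Marginal calibration: pool every pixel of every calibration image."""
    return np.full(S.shape[1:], conformal_threshold(S, alpha))


def pixelwise_thresholds(S, alpha):
    """Conditional calibration: one threshold per pixel, from only n scores each."""
    n, H, W = S.shape
    q = np.empty((H, W))
    for i in range(H):
        for j in range(W):
            q[i, j] = conformal_threshold(S[:, i, j], alpha)
    return q


def groupwise_thresholds(S, groups, alpha):
    """Intermediate: pool scores over all pixels that share a group id."""
    q = np.empty(groups.shape)
    for g in np.unique(groups):
        mask = groups == g
        q[mask] = conformal_threshold(S[:, mask], alpha)
    return q


def prediction_sets(probs, q):
    """probs: (C, H, W) class probabilities; returns a boolean (C, H, W) set mask."""
    return (1.0 - probs) <= q


# Toy demo with random scores: 10 calibration images of 8x8 pixels.
rng = np.random.default_rng(0)
S = rng.uniform(size=(10, 8, 8))  # S[n, i, j] = 1 - p(true class) at pixel (i, j)
rows, cols = np.indices((8, 8))
groups = (rows // 4) * 2 + cols // 4  # hypothetical grouping: four 4x4 quadrants

alpha = 0.05
q_img = imagewise_thresholds(S, alpha)
q_pix = pixelwise_thresholds(S, alpha)
q_grp = groupwise_thresholds(S, groups, alpha)
print(np.isinf(q_pix).all(), np.isfinite(q_grp).all())  # prints: True True
```

With only 10 calibration images and alpha = 0.05, every pixelwise threshold is unbounded (each prediction set contains all classes), while pooling the 160 scores in each quadrant still yields finite thresholds that differ between regions, unlike the single imagewise threshold.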

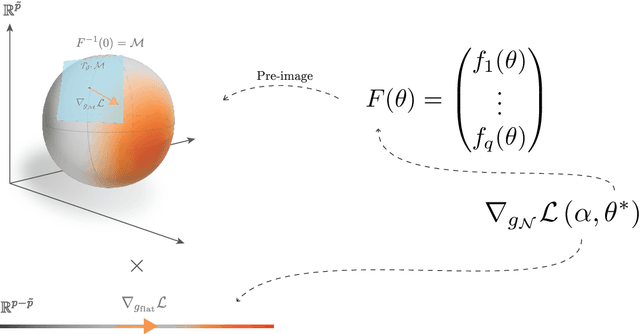

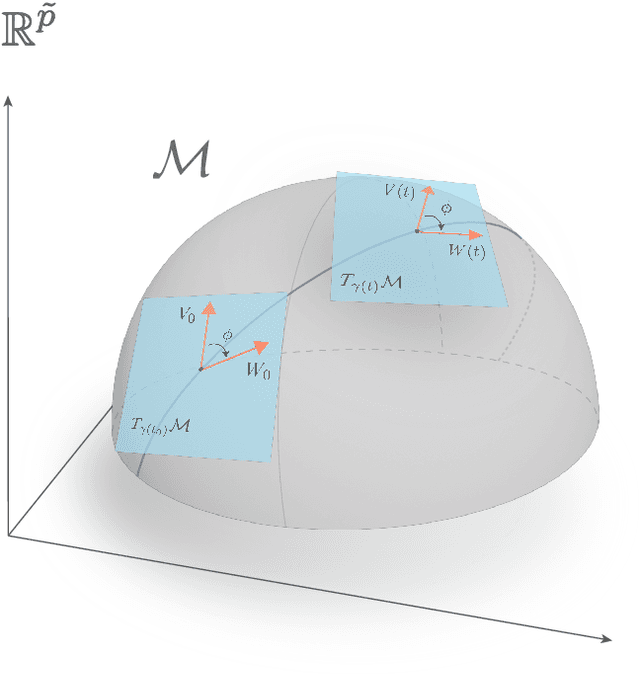

Constrained Empirical Risk Minimization: Theory and Practice

Feb 09, 2023 · Eric Marcus, Ray Sheombarsing, Jan-Jakob Sonke, Jonas Teuwen

Deep Neural Networks (DNNs) are widely used for their ability to effectively approximate large classes of functions. This flexibility, however, makes the strict enforcement of constraints on DNNs an open problem. Here we present a framework that, under mild assumptions, allows the exact enforcement of constraints on parameterized sets of functions such as DNNs. Instead of imposing "soft" constraints via additional terms in the loss, we restrict (a subset of) the DNN parameters to a submanifold on which the constraints are satisfied exactly throughout the entire training procedure. We focus on constraints that are outside the scope of the equivariant networks used in Geometric Deep Learning. As a major example of the framework, we restrict the filters of a Convolutional Neural Network (CNN) to be wavelets and apply these wavelet networks to the task of contour prediction in the medical domain.
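
The contrast between soft penalties and exact enforcement can be seen in the simplest possible case, sketched below under strong simplifying assumptions: the constraint set is an affine subspace {θ : Aθ = b} (linear constraints only, not the general submanifolds of the framework), the model is linear least squares rather than a DNN, and the function name is hypothetical. Training starts on the constraint set and every gradient step is projected onto the null space of A, so the constraint holds at every iteration rather than only approximately at the end. As a toy constraint, the filter taps must sum to zero, one necessary condition satisfied by wavelet (high-pass) filters.

```python
import numpy as np


def fit_on_affine_constraint(X, y, A, b, lr=0.1, steps=500):
    """Least squares fit of theta subject to A @ theta = b, held exactly at every step.

    Simplified stand-in: only linear constraints (an affine submanifold) are handled.
    """
    A_pinv = np.linalg.pinv(A)
    theta = A_pinv @ b                    # feasible start: minimum-norm solution of A theta = b
    P = np.eye(A.shape[1]) - A_pinv @ A   # orthogonal projector onto the null space of A
    m = X.shape[0]
    for _ in range(steps):
        grad = (2.0 / m) * X.T @ (X @ theta - y)  # gradient of the mean squared error
        theta = theta - lr * (P @ grad)           # projected step never leaves A theta = b
    return theta


# Toy problem: recover a 4-tap filter whose taps must sum to zero.
rng = np.random.default_rng(0)
true_h = np.array([1.0, -3.0, 3.0, -1.0]) / 4.0
X = rng.normal(size=(200, 4))
y = X @ true_h + 0.01 * rng.normal(size=200)

A = np.ones((1, 4))   # constraint: the sum of the taps ...
b = np.zeros(1)       # ... equals zero
theta = fit_on_affine_constraint(X, y, A, b)
print(theta.round(3), abs(theta.sum()))
```

For nonlinear constraints, such as the full set of orthonormal wavelet conditions, the projection onto a fixed null space would be replaced by a parameterization or retraction onto the curved submanifold; this sketch only shows the affine special case.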
