Commentary on explainable artificial intelligence methods: SHAP and LIME

May 08, 2023 · Ahmed Salih, Zahra Raisi-Estabragh, Ilaria Boscolo Galazzo, Petia Radeva, Steffen E. Petersen, Gloria Menegaz, Karim Lekadir

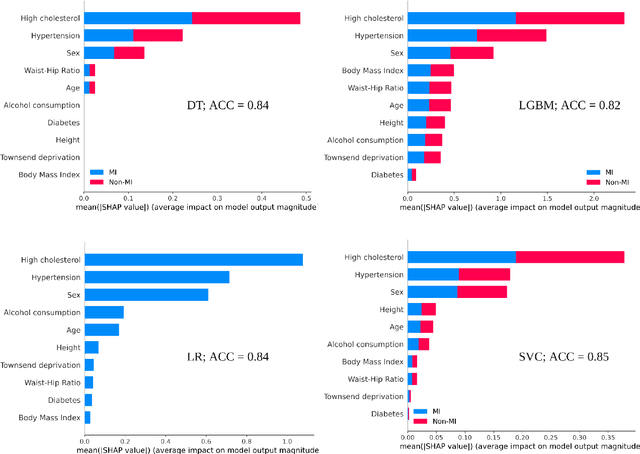

eXplainable artificial intelligence (XAI) methods have emerged to convert the black box of machine learning models into a more digestible form. These methods help to communicate how the model works, with the aim of making machine learning models more transparent and increasing end-users' trust in their output. SHapley Additive exPlanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME) are two widely used XAI methods, particularly with tabular data. In this commentary piece, we discuss how the explainability metrics of these two methods are generated and propose a framework for interpreting their outputs, highlighting their weaknesses and strengths.
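As a rough illustration of how a Shapley-style attribution is generated, the sketch below computes exact Shapley values for a single instance by enumerating feature subsets. It is not the SHAP library and not the framework proposed in the commentary; `shapley_values`, `toy_model`, and the all-zero baseline are hypothetical names and choices made for this example, and "absent" features are simply replaced by their baseline value.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance x, relative to a baseline.

    Assumption: a feature outside the coalition takes its baseline value.
    Cost grows as 2**n, so this is only practical for a handful of features.
    """
    n = len(x)

    def value(subset):
        # Features in `subset` come from x; the others come from the baseline.
        z = [x[j] if j in subset else baseline[j] for j in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(z):
    # Hypothetical model: two linear terms plus one interaction term.
    return 2.0 * z[0] - 1.0 * z[1] + 0.5 * z[0] * z[2]


x = [1.0, 3.0, 2.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)  # approximately [2.5, -3.0, 0.5]
```

The attributions sum to `toy_model(x) - toy_model(baseline)`, and the interaction term between the first and third features is split equally between them, which is the additive behaviour that SHAP-style explanations rely on.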

Characterizing the contribution of dependent features in XAI methods

Apr 04, 2023 · Ahmed Salih, Ilaria Boscolo Galazzo, Zahra Raisi-Estabragh, Steffen E. Petersen, Gloria Menegaz, Petia Radeva

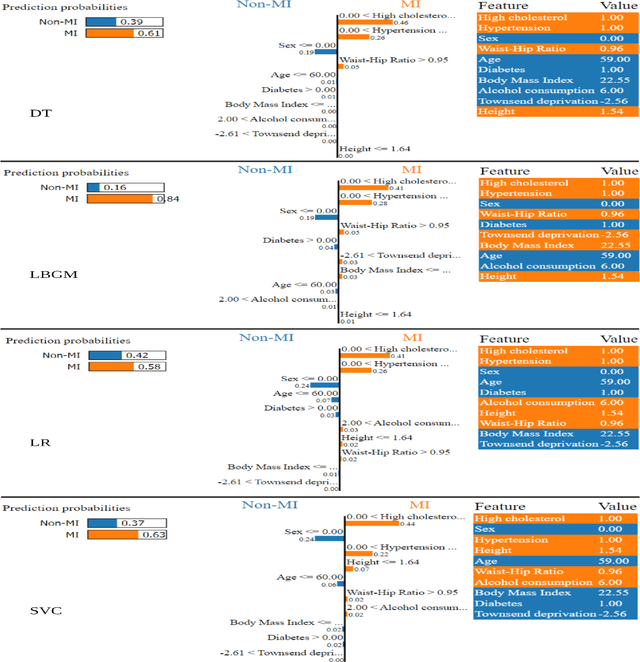

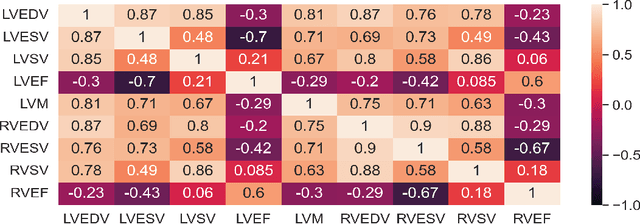

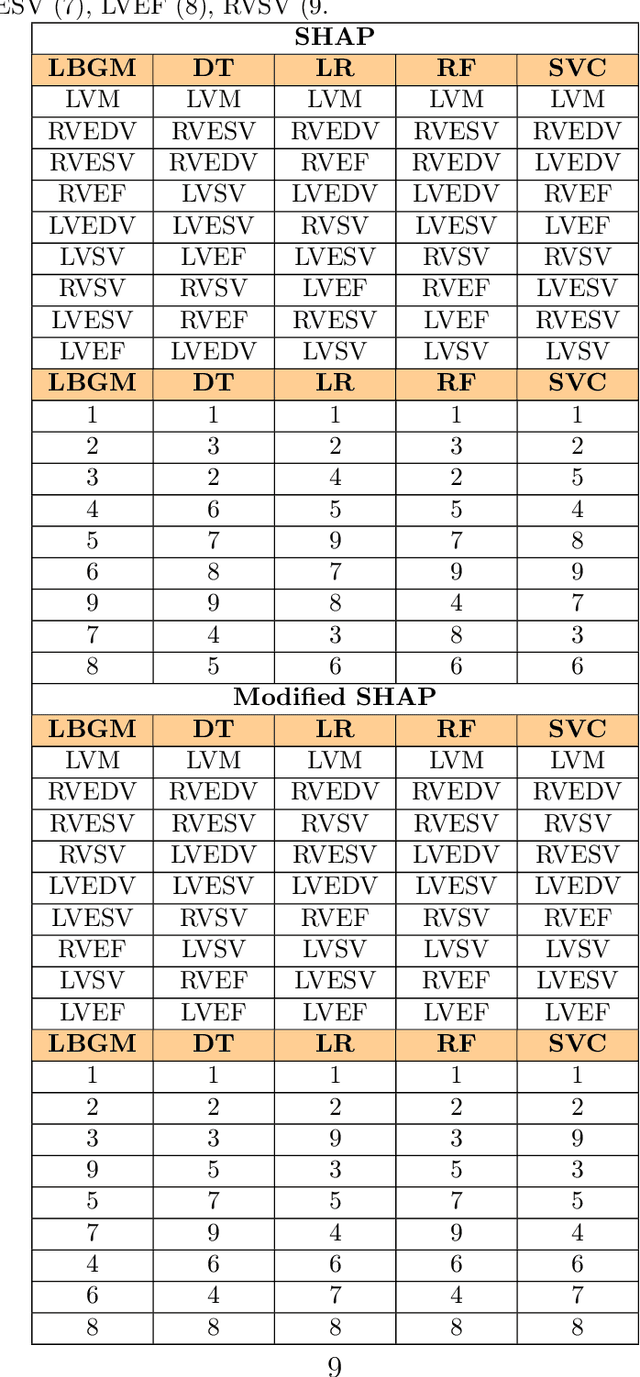

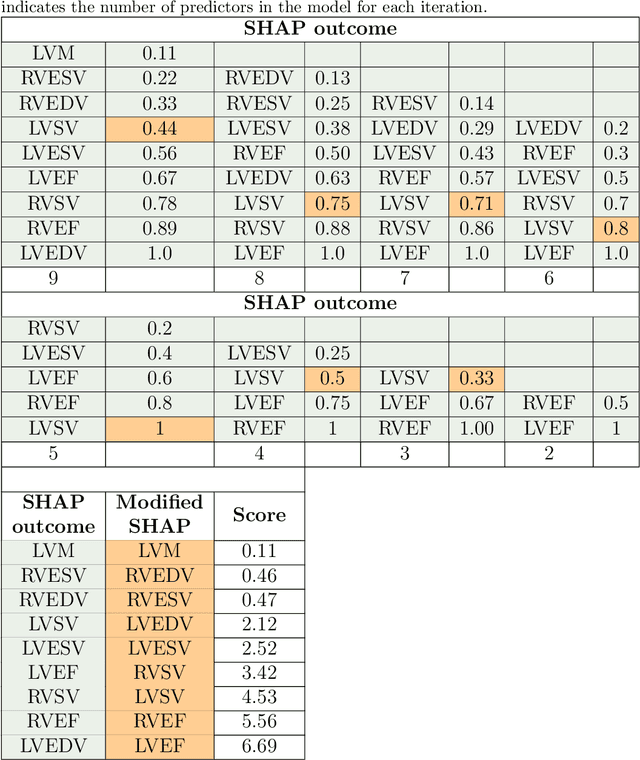

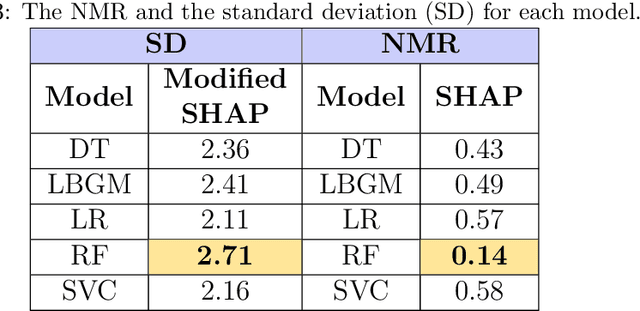

Explainable Artificial Intelligence (XAI) provides tools that help explain how machine learning models work and reach a specific outcome. It increases the interpretability of models and makes them more trustworthy and transparent. In this context, many XAI methods have been proposed, with SHAP and LIME being the most popular. However, these methods assume that the predictors used in the machine learning models are independent, which in general is not necessarily true. This assumption casts doubt on the robustness of XAI outcomes such as the list of informative predictors. Here, we propose a simple yet useful proxy that modifies the outcome of any XAI feature ranking method so that it accounts for the dependency among the predictors. The proposed approach is model-agnostic and makes it simple to calculate the impact of each predictor in the model in the presence of collinearity.
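To make the dependency problem concrete, the following sketch compares two hypothetical linear models on data where the two predictors are perfectly collinear. It does not implement the proxy proposed in the paper, whose details are not given in this abstract. It relies on the standard result that, for a linear model with baseline replacement, the Shapley value of feature i reduces to w_i * (x_i - baseline_i); all names and numbers are illustrative assumptions.

```python
def predict_linear(weights, x):
    # Linear model without intercept: sum of w_i * x_i.
    return sum(w * xi for w, xi in zip(weights, x))


def linear_attributions(weights, x, baseline):
    # For a linear model with baseline replacement, the Shapley value of
    # feature i is w_i * (x_i - baseline_i).
    return [w * (xi - bi) for w, xi, bi in zip(weights, x, baseline)]


# Hypothetical data: the second predictor is an exact copy of the first.
rows = [(1.0, 1.0), (2.0, 2.0), (4.0, 4.0)]
baseline = [sum(col) / len(rows) for col in zip(*rows)]  # feature means

model_a = (2.0, 0.0)  # puts all the weight on the first predictor
model_b = (1.0, 1.0)  # splits the weight across both predictors

# On collinear data the two models are indistinguishable by their predictions.
same_predictions = all(
    predict_linear(model_a, r) == predict_linear(model_b, r) for r in rows
)

x = rows[-1]
attr_a = linear_attributions(model_a, x, baseline)
attr_b = linear_attributions(model_b, x, baseline)
print(same_predictions, attr_a, attr_b)
```

Both models explain the same total deviation from the baseline prediction, yet one ranks the second predictor as uninformative and the other ranks the two predictors equally. This is the kind of ambiguity in feature rankings that arises when the independence assumption does not hold.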

Explainable AI (XAI) in Biomedical Signal and Image Processing: Promises and Challenges

Jul 09, 2022 · Guang Yang, Arvind Rao, Christine Fernandez-Maloigne, Vince Calhoun, Gloria Menegaz

Artificial intelligence has become pervasive across disciplines and fields, and biomedical image and signal processing is no exception. The growing and widespread interest in the topic has triggered vast research activity, reflected in an exponentially growing research effort. Through the study of massive and diverse biomedical data, machine and deep learning models have revolutionized various tasks such as modeling, segmentation, registration, classification and synthesis, outperforming traditional techniques. However, the difficulty of translating the results into biologically or clinically interpretable information is preventing their full exploitation in the field. Explainable AI (XAI) attempts to fill this translational gap by providing the means to make models interpretable and to generate explanations. Different solutions have been proposed so far and are gaining increasing interest from the community. This paper aims to provide an overview of XAI in biomedical data processing and points to an upcoming Special Issue on Deep Learning in Biomedical Image and Signal Processing of the IEEE Signal Processing Magazine that is going to appear in March 2022.

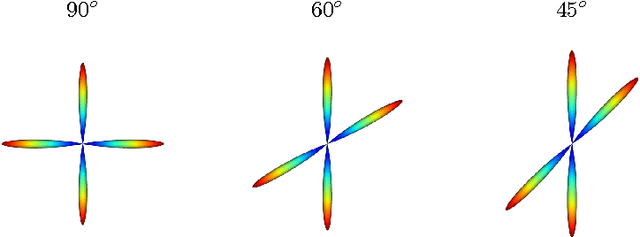

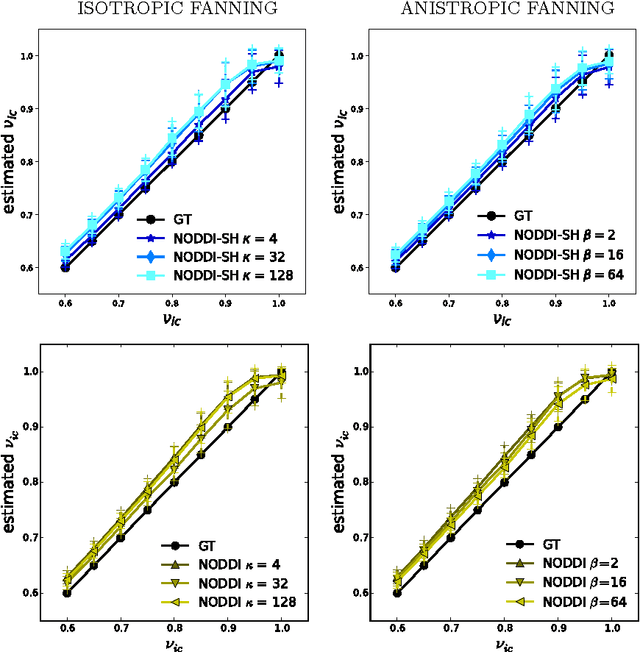

NODDI-SH: a computational efficient NODDI extension for fODF estimation in diffusion MRI

Sep 01, 2017 · Mauro Zucchelli, Maxime Descoteaux, Gloria Menegaz

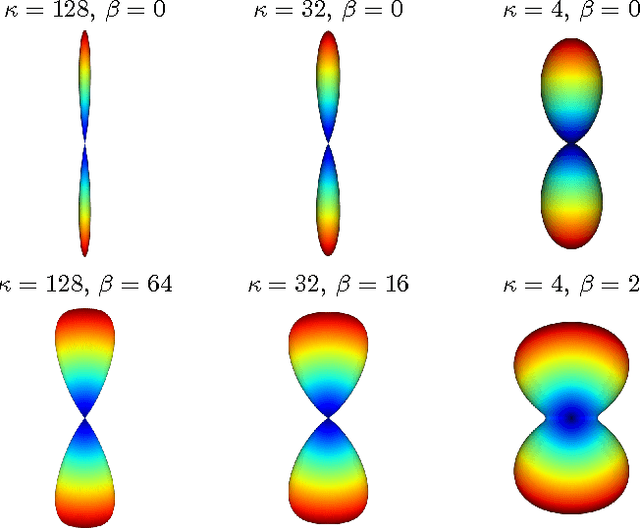

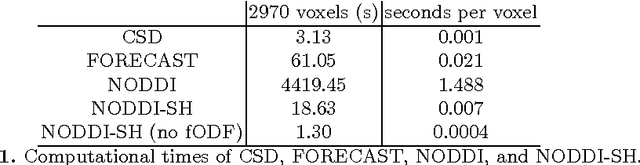

Diffusion Magnetic Resonance Imaging (DMRI) is the only non-invasive imaging technique able to detect the principal directions of water diffusion as well as neurite density in the human brain. Exploiting the ability of Spherical Harmonics (SH) to model spherical functions, we propose a new reconstruction model for DMRI data that estimates both the fiber Orientation Distribution Function (fODF) and the relative volume fractions of the neurites in each voxel, and that is robust to multiple fiber crossings. We consider a Neurite Orientation Dispersion and Density Imaging (NODDI) inspired single fiber diffusion signal derived from three compartments: intracellular, extracellular, and cerebrospinal fluid. The model, called NODDI-SH, is derived by convolving the single fiber response with the fODF in each voxel. NODDI-SH embeds the calculation of the fODF and the neurite density in a unified mathematical model, providing efficient, robust and accurate results. Results were validated on simulated data, tested on in vivo data of the human brain, and compared to Constrained Spherical Deconvolution (CSD) for benchmarking. Results revealed competitive performance in all respects and inherent adaptivity to local microstructure, while appreciably reducing the computational cost. We also investigated NODDI-SH performance when only a limited number of samples is available for the fitting, demonstrating that 60 samples are enough to obtain reliable results. The fast computational time and the low number of signal samples required make NODDI-SH feasible for clinical application.
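The step of convolving a single fiber response with the fODF can be sketched in the SH domain, where convolution with an axially symmetric kernel becomes a per-order scaling: s_lm = sqrt(4*pi / (2l + 1)) * r_l * f_lm. The code below illustrates only this generic spherical convolution, as used in spherical deconvolution methods. It does not reproduce the three-compartment NODDI-SH kernel, and the kernel coefficients, function names, and dictionary layout are assumptions made for this example.

```python
import math


def convolve_sh(kernel_zonal, fodf):
    """Spherical convolution of an axially symmetric kernel with an fODF.

    kernel_zonal: {l: r_l}, zonal (m = 0) SH coefficients of the kernel.
    fodf: {(l, m): f_lm}, SH coefficients of the fODF.
    Returns {(l, m): s_lm}, SH coefficients of the resulting signal.
    """
    return {
        (l, m): math.sqrt(4.0 * math.pi / (2 * l + 1)) * kernel_zonal[l] * f
        for (l, m), f in fodf.items()
    }


def delta_along_z(max_order):
    # SH coefficients of a single fiber aligned with the z-axis (a unit delta
    # at the pole). Only even orders are kept because the diffusion signal is
    # antipodally symmetric; only m = 0 terms are non-zero.
    coeffs = {}
    for l in range(0, max_order + 1, 2):
        for m in range(-l, l + 1):
            coeffs[(l, m)] = (
                math.sqrt((2 * l + 1) / (4.0 * math.pi)) if m == 0 else 0.0
            )
    return coeffs


# Hypothetical zonal coefficients of a single fiber response.
kernel = {0: 1.0, 2: -0.4, 4: 0.1}
signal = convolve_sh(kernel, delta_along_z(4))
```

For a single fiber along the z-axis, the m = 0 coefficients of `signal` equal the kernel coefficients, so the convolution returns the single fiber response itself. A crossing-fiber voxel would correspond to an fODF with several peaks, and the same scaling would apply to each of its coefficients.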
