Capturing scattered discriminative information using a deep architecture in acoustic scene classification

Jul 09, 2020 - Hye-jin Shim, Jee-weon Jung, Ju-ho Kim, Ha-jin Yu

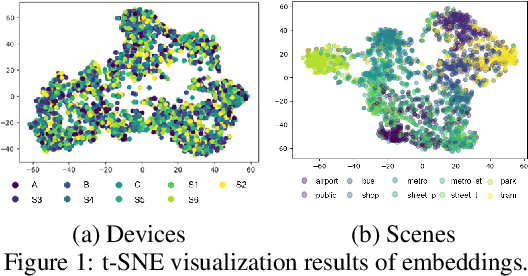

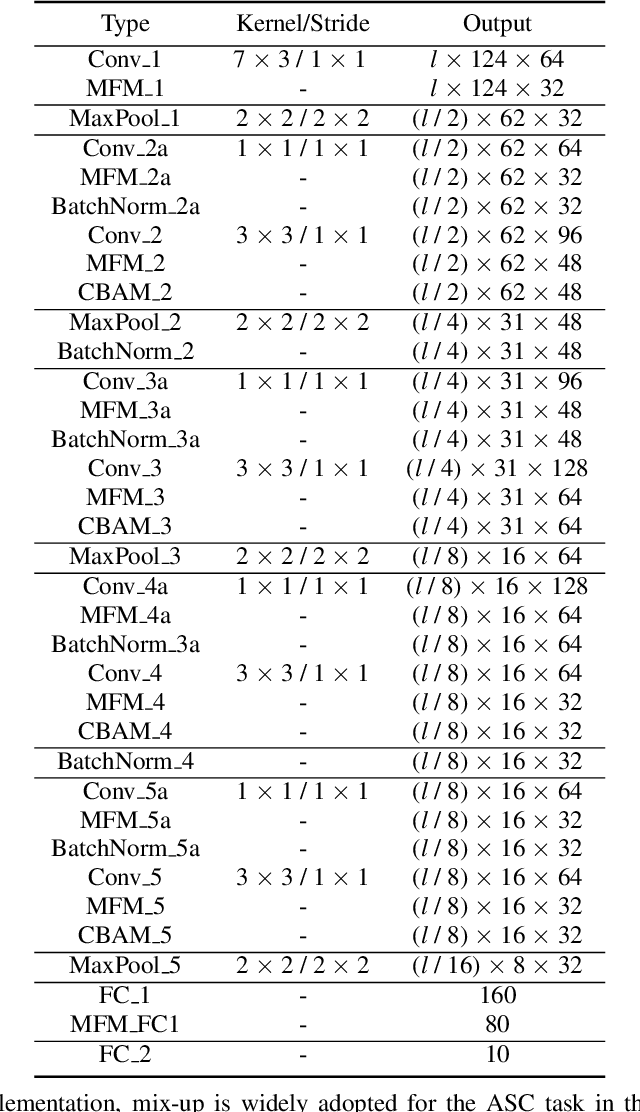

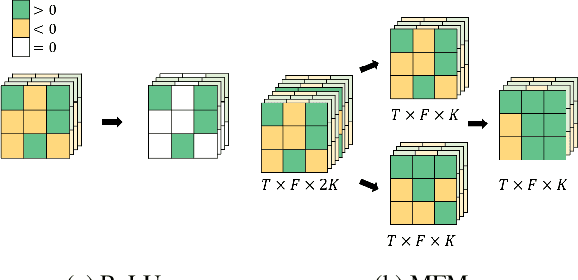

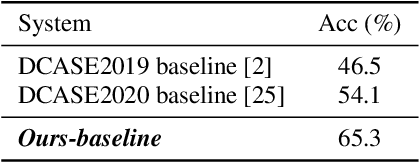

In acoustic scene classification (ASC), some pairs of classes share many acoustic properties and are therefore frequently misclassified. To distinguish such pairs, seemingly trivial details scattered throughout the data can be vital clues. However, these details are less noticeable and are easily discarded by conventional non-linear activations (e.g., ReLU). Furthermore, design choices that emphasize trivial details can easily lead to overfitting if the system is not sufficiently generalized. In this study, based on an analysis of the characteristics of the ASC task, we investigate various methods to capture discriminative information while mitigating overfitting. We adopt a max feature map method in place of conventional non-linear activations in a deep neural network; it applies an element-wise comparison between different filters of a convolution layer's output. Two data augmentation methods and two deep architecture modules are further explored to reduce overfitting and sustain the system's discriminative power. Various experiments are conducted on the Detection and Classification of Acoustic Scenes and Events (DCASE) 2020 Task 1-A dataset to validate the proposed methods. Our results show that the proposed system consistently outperforms the baseline: the best single system achieves an accuracy of 70.4%, compared to 65.1% for the baseline.
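
The element-wise comparison between filters can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the abstract does not say how filters are paired, so the sketch assumes the common variant that splits the channel dimension into two halves and keeps the element-wise maximum. The function name `max_feature_map`, the `channel_axis` parameter, and the example tensor are all hypothetical.

```python
import numpy as np


def max_feature_map(x, channel_axis=1):
    """Element-wise maximum over two halves of the channel dimension.

    Assumption: filters are paired by splitting the channels in half;
    the abstract only states that different filters are compared.
    """
    channels = x.shape[channel_axis]
    if channels % 2 != 0:
        raise ValueError("max feature map needs an even number of channels")
    first_half, second_half = np.split(x, 2, axis=channel_axis)
    # The output has half as many channels as the input.
    return np.maximum(first_half, second_half)


# Hypothetical convolution output: batch=1, channels=4, height=1, width=1.
feature_maps = np.array([-1.0, 2.0, -3.0, 0.5]).reshape(1, 4, 1, 1)
activated = max_feature_map(feature_maps)
relu_output = np.maximum(feature_maps, 0.0)
```

In this toy input, the pair (-1.0, -3.0) yields -1.0 under the max feature map, whereas ReLU maps both values to zero. This is one way to see how small-magnitude or negative responses can survive the comparison instead of being discarded.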

Short utterance compensation in speaker verification via cosine-based teacher-student learning of speaker embeddings

Oct 25, 2018 - Jee-weon Jung, Hee-soo Heo, Hye-jin Shim, Ha-jin Yu

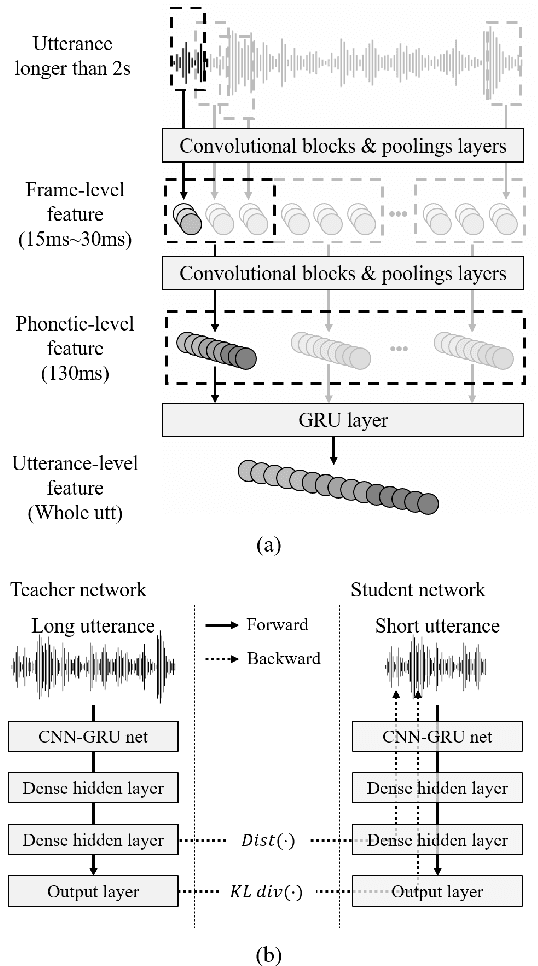

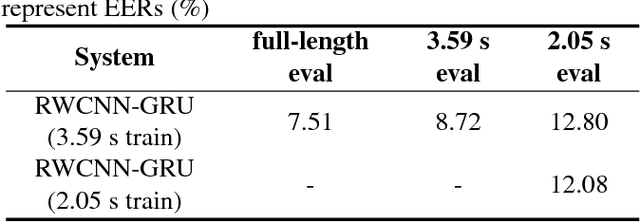

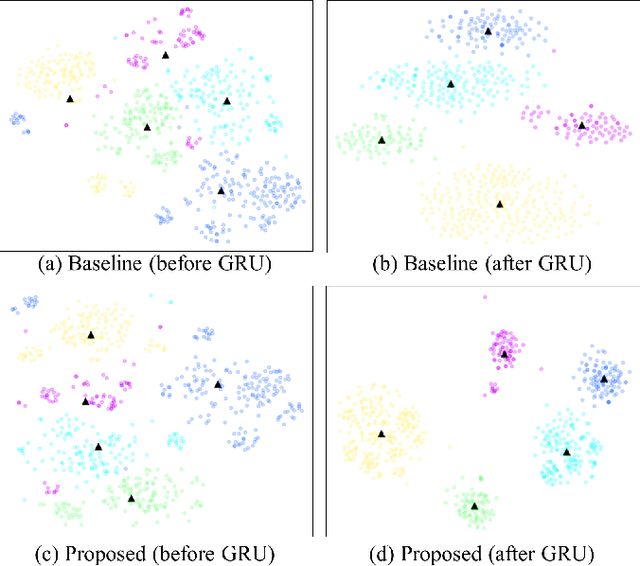

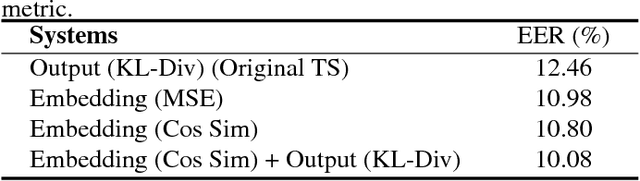

Short input utterances are among the most critical factors that degrade the performance of speaker verification systems. This study aims to develop an integrated text-independent speaker verification system that accepts utterances as short as 2.05 seconds. To this end, we propose a teacher-student learning framework that maximizes the cosine similarity between two speaker embeddings extracted from long and short utterances. In the proposed architecture, convolutional layers extract phonetic-level features, each representing a 130 ms segment. Gated recurrent units then derive an utterance-level speaker embedding from the phonetic-level features. Experiments were conducted on the VoxCeleb 1 dataset using deep neural networks that take raw waveforms as input and output speaker embeddings. Without short utterance compensation, the equal error rates are 8.72 % and 12.8 % for evaluation sets with durations of 3.59 s and 2.05 s, respectively. The proposed model with compensation achieves an equal error rate of 10.08 % for 2.05 s utterances, which recovers more than 65 % of the performance degradation.
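
A minimal NumPy sketch of a cosine-based teacher-student objective follows, together with the arithmetic behind the "more than 65 %" figure. It is an illustration under stated assumptions, not the authors' code: the abstract says only that cosine similarity between the two embeddings is maximized, so minimizing `1 - cosine similarity` is one assumed formulation, and treating the long-utterance embedding as the teacher output is likewise an assumption. All function names are hypothetical.

```python
import numpy as np


def cosine_similarity(a, b, eps=1e-8):
    """Row-wise cosine similarity between two batches of embeddings."""
    norms = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1)
    return np.sum(a * b, axis=-1) / np.maximum(norms, eps)


def cosine_teacher_student_loss(teacher_emb, student_emb):
    """Mean of (1 - cosine similarity); minimizing it maximizes similarity.

    Assumption: teacher_emb comes from long utterances and student_emb
    from short crops of the same utterances.
    """
    return float(np.mean(1.0 - cosine_similarity(teacher_emb, student_emb)))


def recovered_fraction(eer_long, eer_short, eer_compensated):
    """Share of the long-to-short EER degradation removed by compensation."""
    return (eer_short - eer_compensated) / (eer_short - eer_long)


# Toy embeddings: the first pair is aligned, the second pair is orthogonal.
teacher = np.array([[1.0, 0.0], [0.0, 2.0]])
student = np.array([[3.0, 0.0], [5.0, 0.0]])
loss = cosine_teacher_student_loss(teacher, student)

# Equal error rates (in %) reported in the abstract.
fraction = recovered_fraction(8.72, 12.8, 10.08)
```

With the reported equal error rates, the degradation is 12.8 - 8.72 = 4.08 points and compensation removes 12.8 - 10.08 = 2.72 points, so `fraction` is about 0.667, consistent with the stated "more than 65 %".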