RED: A ReRAM-based Deconvolution Accelerator

Jul 05, 2019Zichen Fan, Ziru Li, Bing Li, Yiran Chen, Hai, Li

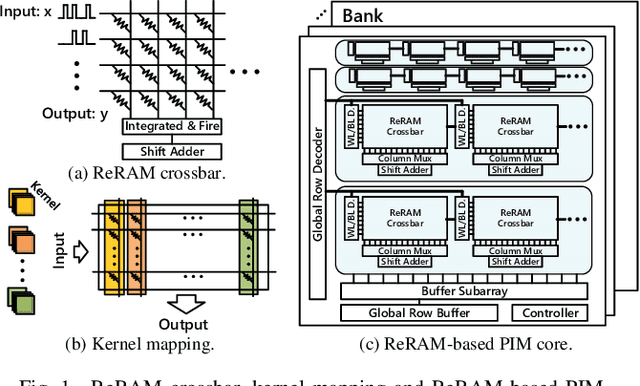

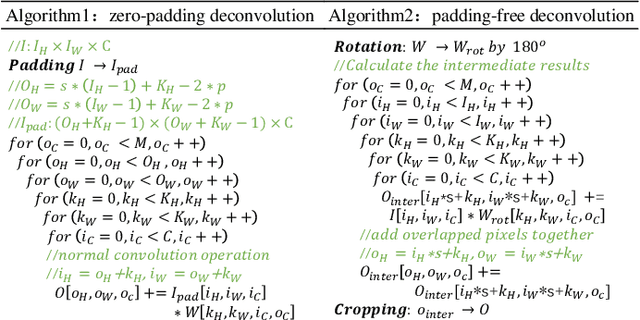

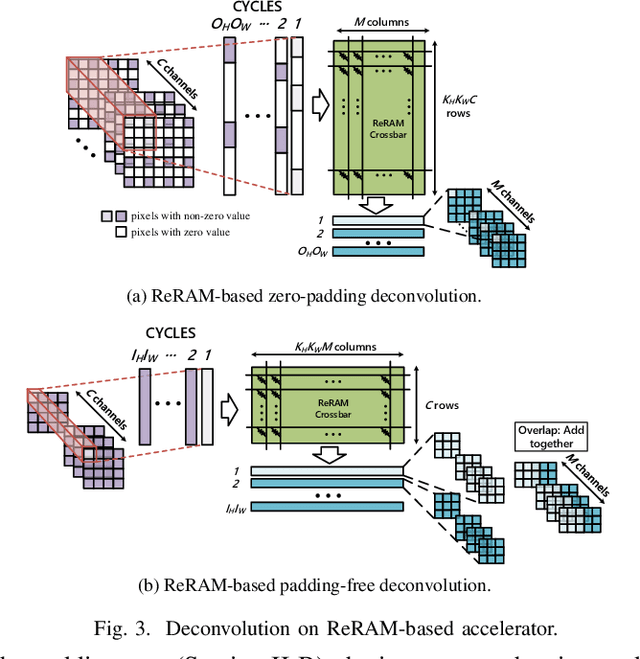

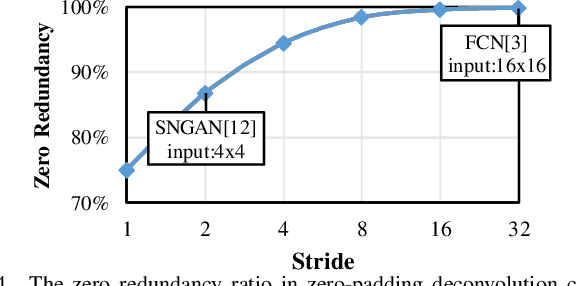

Deconvolution has been widespread in neural networks. For example, it is essential for performing unsupervised learning in generative adversarial networks or constructing fully convolutional networks for semantic segmentation. Resistive RAM (ReRAM)-based processing-in-memory architecture has been widely explored in accelerating convolutional computation and demonstrates good performance. Performing deconvolution on existing ReRAM-based accelerator designs, however, suffers from long latency and high energy consumption because deconvolutional computation includes not only convolution but also extra add-on operations. To realize the more efficient execution for deconvolution, we analyze its computation requirement and propose a ReRAM-based accelerator design, namely, RED. More specific, RED integrates two orthogonal methods, the pixel-wise mapping scheme for reducing redundancy caused by zero-inserting operations and the zero-skipping data flow for increasing the computation parallelism and therefore improving performance. Experimental evaluations show that compared to the state-of-the-art ReRAM-based accelerator, RED can speed up operation 3.69x~1.15x and reduce 8%~88.36% energy consumption.

An Overview of In-memory Processing with Emerging Non-volatile Memory for Data-intensive Applications

Jun 15, 2019Bing Li, Bonan Yan, Hai, Li

The conventional von Neumann architecture has been revealed as a major performance and energy bottleneck for rising data-intensive applications. %, due to the intensive data movements. The decade-old idea of leveraging in-memory processing to eliminate substantial data movements has returned and led extensive research activities. The effectiveness of in-memory processing heavily relies on memory scalability, which cannot be satisfied by traditional memory technologies. Emerging non-volatile memories (eNVMs) that pose appealing qualities such as excellent scaling and low energy consumption, on the other hand, have been heavily investigated and explored for realizing in-memory processing architecture. In this paper, we summarize the recent research progress in eNVM-based in-memory processing from various aspects, including the adopted memory technologies, locations of the in-memory processing in the system, supported arithmetics, as well as applied applications.

Spintronics based Stochastic Computing for Efficient Bayesian Inference System

Nov 03, 2017Xiaotao Jia, Jianlei Yang, Zhaohao Wang, Yiran Chen, Hai, Li, Weisheng Zhao

Bayesian inference is an effective approach for solving statistical learning problems especially with uncertainty and incompleteness. However, inference efficiencies are physically limited by the bottlenecks of conventional computing platforms. In this paper, an emerging Bayesian inference system is proposed by exploiting spintronics based stochastic computing. A stochastic bitstream generator is realized as the kernel components by leveraging the inherent randomness of spintronics devices. The proposed system is evaluated by typical applications of data fusion and Bayesian belief networks. Simulation results indicate that the proposed approach could achieve significant improvement on inference efficiencies in terms of power consumption and inference speed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge