GMC: Grid Based Motion Clustering in Dynamic Environment

Feb 25, 2019 · Handuo Zhang, Karunasekera Hasith, Han Wang

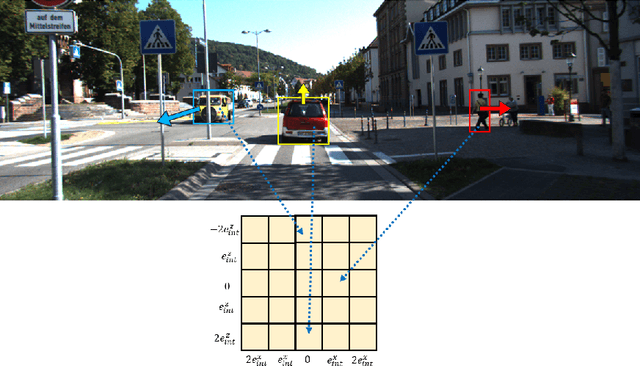

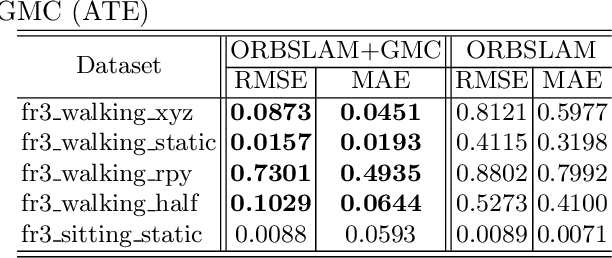

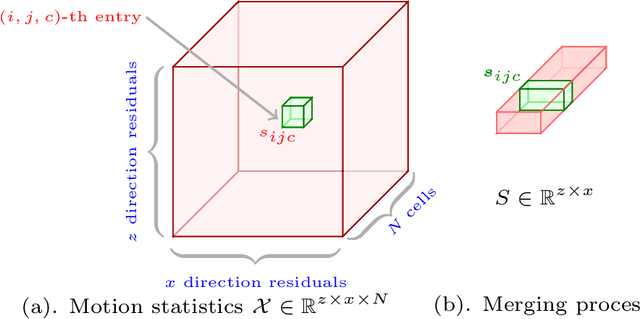

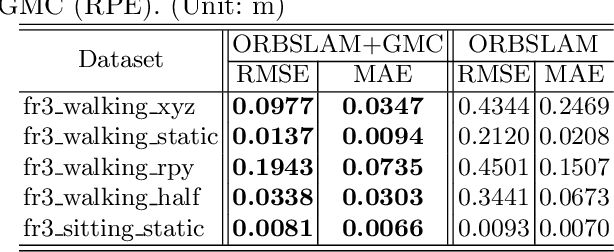

Conventional SLAM algorithms rely on the strong assumption that the scene is static, which limits their application in real environments. This paper tackles the challenging problem of visual SLAM in dynamic environments containing moving objects. We present GMC, a grid-based motion clustering approach: a lightweight dynamic object filtering method that does not require high-power, expensive processors. GMC encapsulates motion consistency as the statistical likelihood of detected key points within a certain region. Using this method, we provide a real-time and robust correspondence algorithm that can differentiate dynamic objects from the static background. We evaluate our system on the public TUM dataset. Compared with state-of-the-art methods, our system provides more accurate results by detecting dynamic objects.
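To make the idea of region-level motion consistency concrete, the following is a minimal sketch and not the authors' implementation. It assumes key point matches are given as pairs of pixel coordinates and treats a match as consistent when enough other matches move between the same pair of grid cells. The names `grid_cell` and `consistent_matches`, the cell size, and the support threshold are all hypothetical choices made for illustration.

```python
from collections import Counter


def grid_cell(point, cell_size):
    """Map an (x, y) pixel coordinate to its integer grid cell index."""
    x, y = point
    return (int(x // cell_size), int(y // cell_size))


def consistent_matches(matches, cell_size=40, min_support=3):
    """Return one boolean per match.

    A match is flagged True when at least `min_support` matches (itself
    included) share the same (source cell, target cell) pair. Both
    parameters are illustrative assumptions, not values from the paper.
    """
    pairs = [(grid_cell(p, cell_size), grid_cell(q, cell_size)) for p, q in matches]
    support = Counter(pairs)
    return [support[pair] >= min_support for pair in pairs]


# Three matches move together inside one cell; the fourth jumps elsewhere.
matches = [
    ((10, 10), (12, 11)),
    ((15, 20), (17, 21)),
    ((30, 5), (32, 6)),
    ((20, 30), (150, 90)),
]
flags = consistent_matches(matches)
```

In this toy input the first three matches support each other and the last one stands alone, so only the last is flagged as inconsistent. A real system would derive the threshold from the statistics of matches per cell rather than fix it by hand.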

Ultra-Wideband Aided Fast Localization and Mapping System

Sep 30, 2017 · Chen Wang, Handuo Zhang, Thien-Minh Nguyen, Lihua Xie

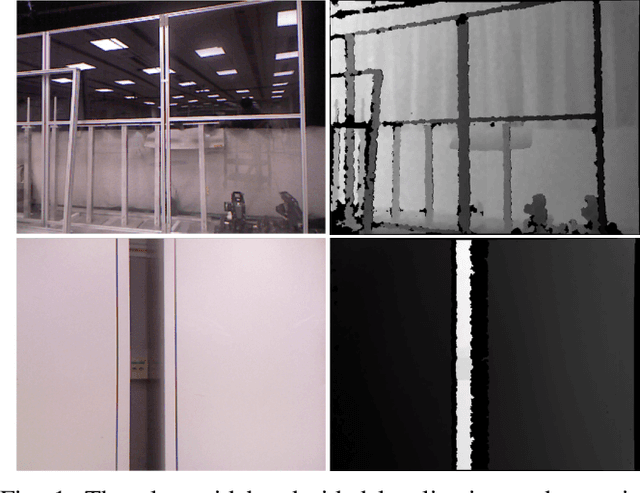

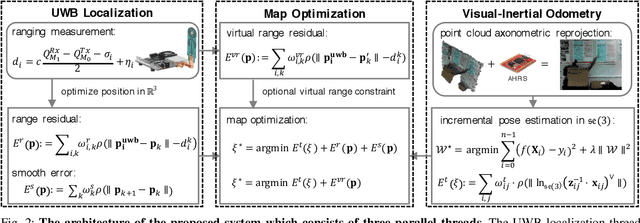

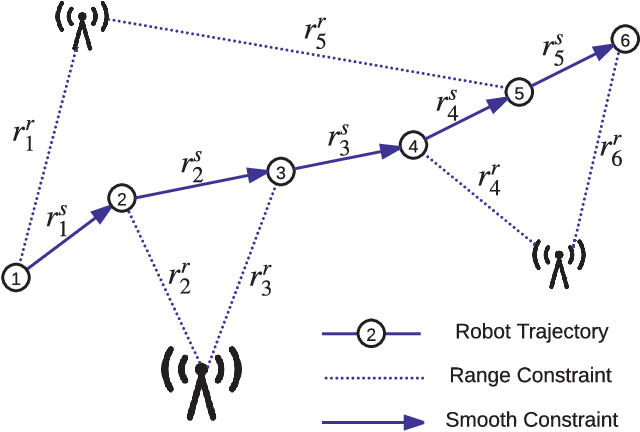

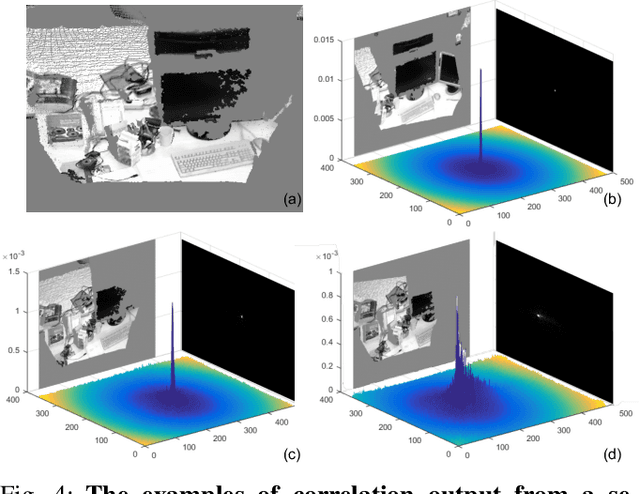

This paper proposes an ultra-wideband (UWB) aided localization and mapping system that leverages an inertial sensor and a depth camera. Because a visual odometry (VO) system, regardless of its short-term accuracy, still suffers from accumulated errors in the long run or under unfavourable environments, the UWB ranging measurements are fused to remove the visual drift and improve robustness. A general framework is developed that consists of three parallel threads, two of which carry out visual-inertial odometry (VIO) and UWB localization, respectively. The third thread, for mapping, integrates visual tracking constraints into a pose graph with the proposed smooth and virtual range constraints, so that an optimization can be performed to provide robust trajectory estimation. Experiments show that the proposed system is able to create dense, drift-free maps in real time in featureless environments, even when running on an ultra-low-power processor.
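As a rough illustration of how a ranging measurement can constrain a position estimate, the sketch below computes a generic range residual: the distance from an estimated position to a known anchor minus the measured range. This is a textbook formulation and not the paper's smooth or virtual range constraints, whose exact form is not given here. The function names and the assumption of known anchor positions are hypothetical.

```python
import math


def range_residual(position, anchor, measured_range):
    """Predicted distance to the anchor minus the measured UWB range (metres)."""
    return math.dist(position, anchor) - measured_range


def range_cost(position, measurements):
    """Sum of squared range residuals over (anchor, measured_range) pairs."""
    return sum(range_residual(position, a, r) ** 2 for a, r in measurements)


# Two hypothetical anchors with one range measurement each.
measurements = [((3.0, 4.0, 0.0), 4.0), ((0.0, 0.0, 2.0), 2.0)]
cost = range_cost((0.0, 0.0, 0.0), measurements)
```

In a pose graph, terms of this kind would be added to the visual tracking constraints and minimized jointly, so that the range measurements pull the trajectory back when visual drift accumulates.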
