Transolver: A Fast Transformer Solver for PDEs on General Geometries

Feb 04, 2024Haixu Wu, Huakun Luo, Haowen Wang, Jianmin Wang, Mingsheng Long

Transformers have empowered many milestones across various fields and have recently been applied to solve partial differential equations (PDEs). However, since PDEs are typically discretized into large-scale meshes with complex geometries, it is challenging for Transformers to capture intricate physical correlations directly from massive individual points. Going beyond superficial and unwieldy meshes, we present Transolver based on a more foundational idea, which is learning intrinsic physical states hidden behind discretized geometries. Specifically, we propose a new Physics-Attention to adaptively split the discretized domain into a series of learnable slices of flexible shapes, where mesh points under similar physical states will be ascribed to the same slice. By calculating attention to physics-aware tokens encoded from slices, Transovler can effectively capture intricate physical correlations under complex geometrics, which also empowers the solver with endogenetic geometry-general modeling capacity and can be efficiently computed in linear complexity. Transolver achieves consistent state-of-the-art with 22\% relative gain across six standard benchmarks and also excels in large-scale industrial simulations, including car and airfoil designs.

Solving High-Dimensional PDEs with Latent Spectral Models

Jan 30, 2023Haixu Wu, Tengge Hu, Huakun Luo, Jianmin Wang, Mingsheng Long

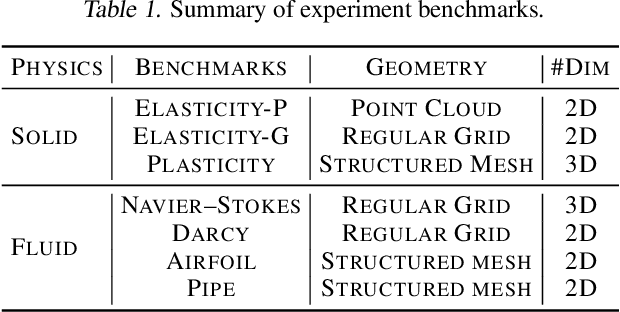

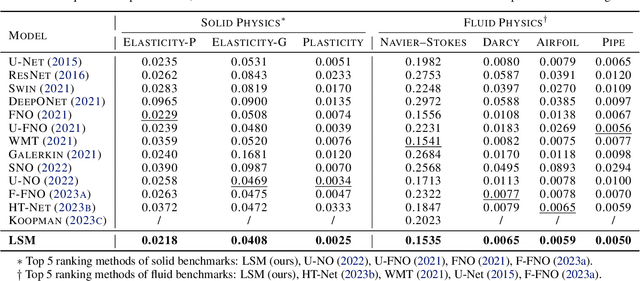

Deep models have achieved impressive progress in solving partial differential equations (PDEs). A burgeoning paradigm is learning neural operators to approximate the input-output mappings of PDEs. While previous deep models have explored the multiscale architectures and elaborative operator designs, they are limited to learning the operators as a whole in the coordinate space. In real physical science problems, PDEs are complex coupled equations with numerical solvers relying on discretization into high-dimensional coordinate space, which cannot be precisely approximated by a single operator nor efficiently learned for the curse of dimensionality. We present Latent Spectral Models (LSM) toward an efficient and precise solver for high-dimensional PDEs. Going beyond the coordinate space, LSM enables an attention-based hierarchical projection network to reduce the high-dimensional data into a compact latent space in linear time. Inspired by classical spectral methods in numerical analysis, we design a neural spectral block to solve PDEs in the latent space that well approximates complex input-output mappings via learning multiple basis operators, enjoying nice theoretical guarantees for convergence and approximation. Experimentally, LSM achieves consistent state-of-the-art and yields a relative gain of 11.5% averaged on seven benchmarks covering both solid and fluid physics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge