Multivariate regression and fit function uncertainty

Oct 03, 2013 · Peter Kovesarki, Ian C. Brock

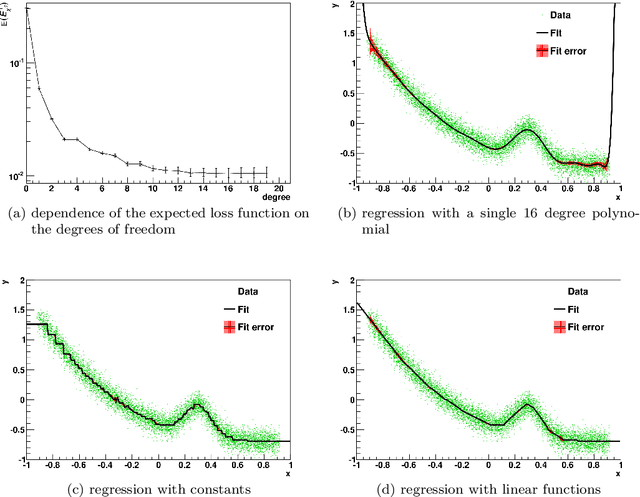

This article describes a multivariate polynomial regression method in which the uncertainties of the input parameters are approximated by Gaussian distributions, derived from the central limit theorem for large weighted sums and estimated directly from the training sample. The estimated uncertainties can be propagated into the optimal fit function, as an alternative to the statistical bootstrap method. This uncertainty can be propagated further into a loss-function-like quantity, which makes it possible to calculate the expected loss function and to select the optimal polynomial degree with statistical significance. Combined with simple phase space splitting methods, the approach can model most features of the training data even with low-degree polynomials or constants.
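To illustrate the general idea of propagating parameter uncertainties into a fit function without resampling, here is a minimal sketch. It is not the authors' method: it assumes a one-dimensional input, an ordinary least-squares fit via `numpy.polyfit`, and the standard least-squares coefficient covariance in place of the paper's central-limit-theorem estimate. The function names and the toy data are hypothetical.

```python
import numpy as np


def fit_with_uncertainty(x, y, degree):
    """Least-squares polynomial fit returning coefficients and their covariance."""
    # cov=True makes polyfit also return the coefficient covariance matrix.
    coeffs, cov = np.polyfit(x, y, degree, cov=True)
    return coeffs, cov


def predict_with_uncertainty(coeffs, cov, x_new):
    """Evaluate the fit and its 1-sigma band by linear error propagation."""
    x_new = np.atleast_1d(np.asarray(x_new, dtype=float))
    degree = len(coeffs) - 1
    # Rows are [x^degree, ..., x, 1], matching polyfit's coefficient order.
    basis = np.vander(x_new, degree + 1)
    mean = basis @ coeffs
    # Var[f(x)] = v(x)^T C v(x) for each row v(x) of the basis matrix.
    var = np.einsum("ij,jk,ik->i", basis, cov, basis)
    return mean, np.sqrt(var)


# Toy data (an assumption for illustration): a quadratic with Gaussian noise.
rng = np.random.default_rng(0)
x = np.linspace(-1.0, 1.0, 200)
y = 1.0 + 2.0 * x - 0.5 * x**2 + rng.normal(scale=0.1, size=x.size)

coeffs, cov = fit_with_uncertainty(x, y, degree=2)
mean, sigma = predict_with_uncertainty(coeffs, cov, [0.0, 0.5])
```

Here `sigma` plays the role that the spread of bootstrap replicas would otherwise play: it is the uncertainty of the fitted function at each evaluation point, obtained from a single fit.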

Green's function based unparameterised multi-dimensional kernel density and likelihood ratio estimator

Apr 18, 2012 · Peter Kovesarki, Ian C. Brock, A. Elizabeth Nuncio Quiroz

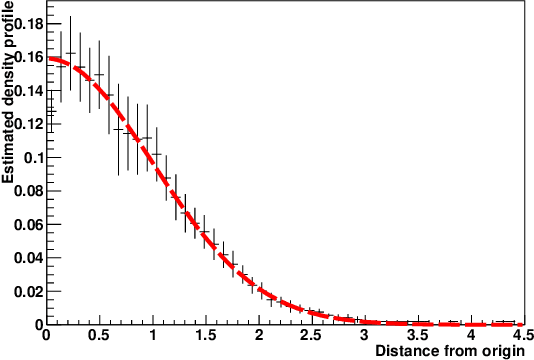

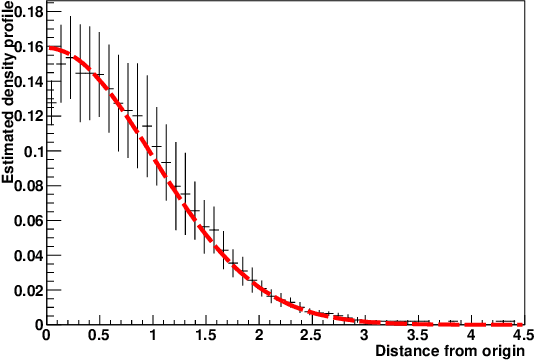

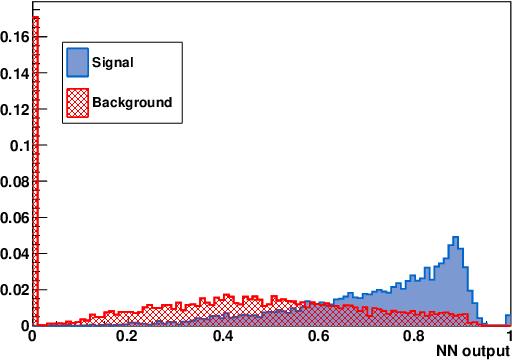

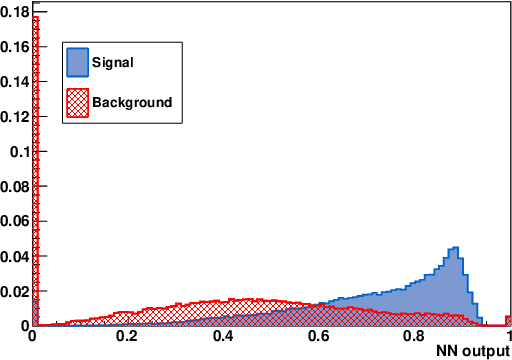

This paper introduces a probability density estimator based on Green's function identities. A density model is constructed under the sole assumption that the probability density is differentiable. The method is implemented as a binary likelihood estimator for classification purposes, so issues such as mis-modeling and overtraining are also discussed. The identity behind the density estimator can be interpreted as a real-valued, non-scalar kernel method which is able to reconstruct differentiable density functions.
