Early and Accurate Detection of Tomato Leaf Diseases Using TomFormer

Dec 26, 2023 · Asim Khan, Umair Nawaz, Lochan Kshetrimayum, Lakmal Seneviratne, Irfan Hussain

Tomato leaf diseases pose a significant challenge for tomato farmers and cause substantial reductions in crop productivity. Timely and precise identification of these diseases is crucial for implementing disease management strategies. This paper introduces TomFormer, a transformer-based model for tomato leaf disease detection. The paper's primary contributions are as follows. First, we present a novel approach for detecting tomato leaf diseases using a fusion model that combines a visual transformer and a convolutional neural network. Second, we aim to apply the proposed methodology to the Hello Stretch robot to achieve real-time diagnosis of tomato leaf diseases. Third, we assess our method by comparing it with models such as YOLOS, DETR, ViT, and Swin, demonstrating its ability to achieve state-of-the-art results. For the experiments, we used three datasets of tomato leaf diseases, namely KUTomaDATA, PlantDoc, and PlantVillage, where KUTomaDATA was collected from a greenhouse in Abu Dhabi, UAE. Finally, we present a comprehensive analysis of the performance of our model and thoroughly discuss the limitations inherent in our approach. TomFormer performed well on the KUTomaDATA, PlantDoc, and PlantVillage datasets, with mean average precision (mAP) scores of 87%, 81%, and 83%, respectively. The comparative results in terms of mAP demonstrate that our method exhibits robustness, accuracy, efficiency, and scalability. Furthermore, it can be readily adapted to new datasets. We are confident that our work holds the potential to significantly influence the tomato industry by effectively mitigating crop losses and enhancing crop yields.

MuLA-GAN: Multi-Level Attention GAN for Enhanced Underwater Visibility

Dec 25, 2023 · Ahsan Baidar Bakht, Zikai Jia, Muhayy ud Din, Waseem Akram, Lyes Saad Saoud, Lakmal Seneviratne, Defu Lin, Shaoming He, Irfan Hussain

The underwater environment presents unique challenges, including color distortions, reduced contrast, and blurriness, hindering accurate analysis. In this work, we introduce MuLA-GAN, a novel approach that leverages the synergistic power of Generative Adversarial Networks (GANs) and Multi-Level Attention mechanisms for comprehensive underwater image enhancement. The integration of Multi-Level Attention within the GAN architecture significantly enhances the model's capacity to learn discriminative features crucial for precise image restoration. By selectively focusing on relevant spatial and multi-level features, our model excels in capturing and preserving intricate details in underwater imagery, essential for various applications. Extensive qualitative and quantitative analyses on diverse datasets, including UIEB test dataset, UIEB challenge dataset, U45, and UCCS dataset, highlight the superior performance of MuLA-GAN compared to existing state-of-the-art methods. Experimental evaluations on a specialized dataset tailored for bio-fouling and aquaculture applications demonstrate the model's robustness in challenging environmental conditions. On the UIEB test dataset, MuLA-GAN achieves exceptional PSNR (25.59) and SSIM (0.893) scores, surpassing Water-Net, the second-best model, with scores of 24.36 and 0.885, respectively. This work not only addresses a significant research gap in underwater image enhancement but also underscores the pivotal role of Multi-Level Attention in enhancing GANs, providing a novel and comprehensive framework for restoring underwater image quality.
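For readers unfamiliar with the PSNR figures quoted above, the following is a minimal sketch of how peak signal-to-noise ratio is conventionally computed between a reference image and an enhanced image. It is not taken from the MuLA-GAN code: the function name `psnr`, the flat-list image representation, and the sample pixel values are illustrative assumptions, and the abstract does not state which implementation or peak value the authors used.

```python
import math


def psnr(reference, enhanced, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images.

    Images are given as flat sequences of pixel intensities (an assumption
    made here to keep the sketch dependency-free).
    """
    if len(reference) != len(enhanced) or not reference:
        raise ValueError("images must be non-empty and the same size")
    # Mean squared error over all pixels.
    mse = sum((r - e) ** 2 for r, e in zip(reference, enhanced)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Hypothetical 8-pixel example; real evaluations use full images.
reference = [52, 55, 61, 59, 79, 61, 76, 61]
enhanced = [54, 55, 60, 59, 77, 63, 76, 60]
print(f"PSNR: {psnr(reference, enhanced):.2f} dB")
```

Higher PSNR means the enhanced image is closer to the reference in a mean-squared-error sense; SSIM, the other metric quoted, compares local structure instead and is not sketched here.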

MARS: Multi-Scale Adaptive Robotics Vision for Underwater Object Detection and Domain Generalization

Dec 23, 2023 · Lyes Saad Saoud, Lakmal Seneviratne, Irfan Hussain

Underwater robotic vision encounters significant challenges, necessitating advanced solutions to enhance performance and adaptability. This paper presents MARS (Multi-Scale Adaptive Robotics Vision), a novel approach to underwater object detection tailored for diverse underwater scenarios. MARS integrates Residual Attention YOLOv3 with Domain-Adaptive Multi-Scale Attention (DAMSA) to enhance detection accuracy and adapt to different domains. During training, DAMSA introduces domain class-based attention, enabling the model to emphasize domain-specific features. Our comprehensive evaluation across various underwater datasets demonstrates MARS's performance. On the original dataset, MARS achieves a mean Average Precision (mAP) of 58.57%, showcasing its proficiency in detecting critical underwater objects like echinus, starfish, holothurian, scallop, and waterweeds. This capability holds promise for applications in marine robotics, marine biology research, and environmental monitoring. Furthermore, MARS excels at mitigating domain shifts. On the augmented dataset, which incorporates all enhancements (+Domain +Residual +Channel Attention +Multi-Scale Attention), MARS achieves an mAP of 36.16%. This result underscores its robustness and adaptability in recognizing objects and performing well across a range of underwater conditions. The source code for MARS is publicly available on GitHub at https://github.com/LyesSaadSaoud/MARS-Object-Detection/
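The mAP figures above rest on two building blocks: intersection over union (IoU) between a predicted and a ground-truth box, and the average precision (AP) of a ranked list of detections for one class. The sketch below illustrates both under stated assumptions: boxes are `(x1, y1, x2, y2)` tuples, detections are already labeled as true or false positives, and AP uses all-point interpolation. The abstract does not specify the IoU threshold or AP protocol used for MARS, so this is a generic illustration and not the authors' evaluation code.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections, num_ground_truth):
    """AP for one class from (confidence, is_true_positive) pairs."""
    if num_ground_truth == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    true_positives = 0
    precisions, recalls = [], []
    for rank, (_, is_tp) in enumerate(ranked, start=1):
        true_positives += 1 if is_tp else 0
        precisions.append(true_positives / rank)
        recalls.append(true_positives / num_ground_truth)
    # Make precision non-increasing from right to left (all-point interpolation).
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum the area under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class_ap):
    """mAP is the mean of the per-class AP values."""
    return sum(per_class_ap) / len(per_class_ap)


# Hypothetical detections for a single class with two ground-truth objects.
print(iou((0, 0, 2, 2), (1, 1, 3, 3)))
print(average_precision([(0.9, True), (0.8, False), (0.7, True)], 2))
```

In a full evaluation, a detection counts as a true positive only when its IoU with an unmatched ground-truth box exceeds a chosen threshold, which is why both functions appear together.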

ADOD: Adaptive Domain-Aware Object Detection with Residual Attention for Underwater Environments

Dec 11, 2023 · Lyes Saad Saoud, Zhenwei Niu, Atif Sultan, Lakmal Seneviratne, Irfan Hussain

This research presents ADOD, a novel approach to address domain generalization in underwater object detection. Our method enhances the model's ability to generalize across diverse and unseen domains, ensuring robustness in various underwater environments. The first key contribution is Residual Attention YOLOv3, a novel variant of the YOLOv3 framework empowered by residual attention modules. These modules enable the model to focus on informative features while suppressing background noise, leading to improved detection accuracy and adaptability to different domains. The second contribution is the attention-based domain classification module, vital during training. This module helps the model identify domain-specific information, facilitating the learning of domain-invariant features. Consequently, ADOD can generalize effectively to underwater environments with distinct visual characteristics. Extensive experiments on diverse underwater datasets demonstrate ADOD's superior performance compared to state-of-the-art domain generalization methods, particularly in challenging scenarios. The proposed model achieves exceptional detection performance in both seen and unseen domains, showcasing its effectiveness in handling domain shifts in underwater object detection tasks. ADOD represents a significant advancement in adaptive object detection, providing a promising solution for real-world applications in underwater environments. With the prevalence of domain shifts in such settings, the model's strong generalization ability becomes a valuable asset for practical underwater surveillance and marine research endeavors.
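The abstract does not give the internal form of the residual attention modules. As a rough illustration of the general idea, the sketch below uses a commonly seen residual attention formulation in which features are scaled by `1 + mask`, with the mask squashed to the range (0, 1), so that attention can emphasize features without ever zeroing them out. Whether ADOD uses exactly this form is an assumption; the names `residual_attention` and `mask_logits` are hypothetical.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def residual_attention(features, mask_logits):
    """Scale each feature by (1 + sigmoid(mask_logit)).

    A mask near 0 leaves the feature almost unchanged (the residual path),
    while a mask near 1 roughly doubles it.
    """
    return [f * (1.0 + sigmoid(m)) for f, m in zip(features, mask_logits)]


# Hypothetical feature values and attention logits.
features = [2.0, -1.0, 0.5]
mask_logits = [0.0, -50.0, 4.0]
print(residual_attention(features, mask_logits))
```

The residual term is the design point: plain multiplicative attention (`mask * feature`) can suppress useful signal when the mask is poorly trained, whereas the `1 +` term keeps the original feature as a floor.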

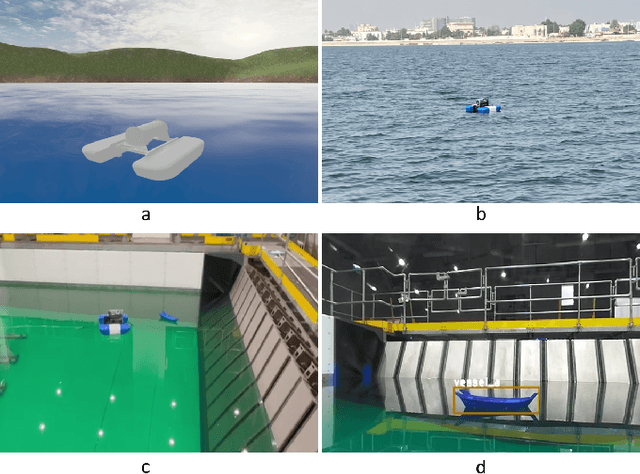

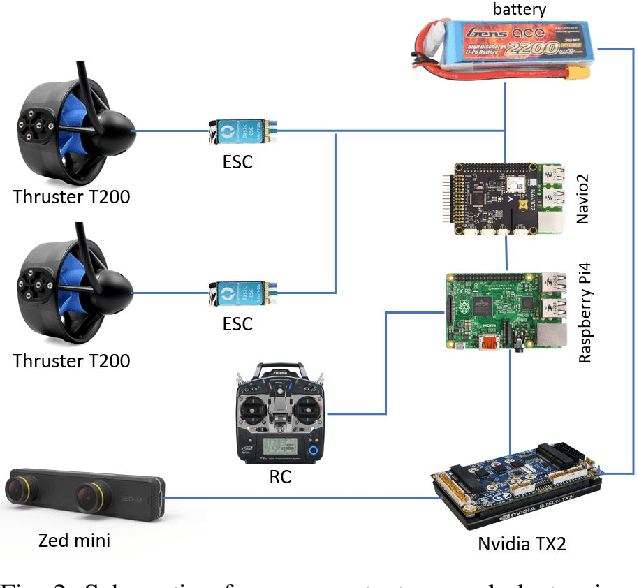

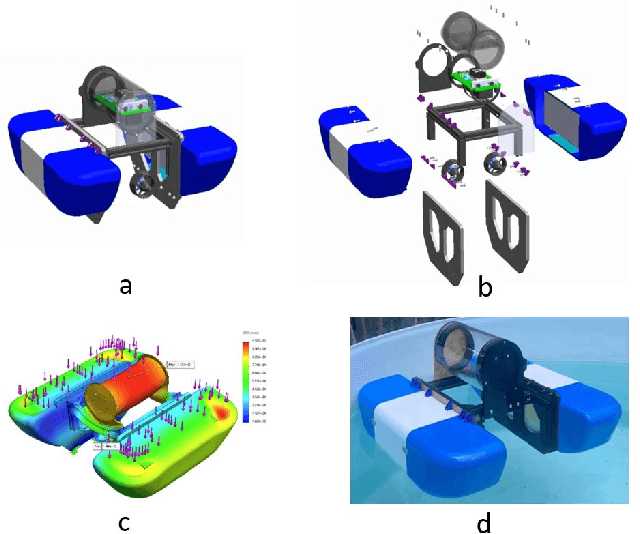

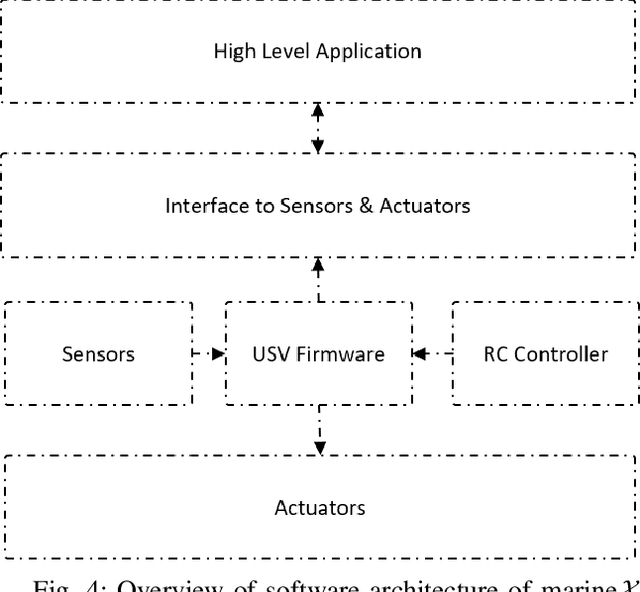

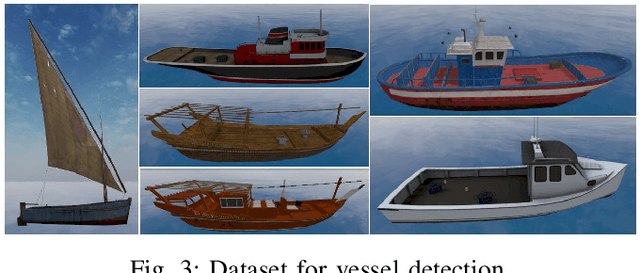

Marine$\mathcal{X}$: Design and Implementation of Unmanned Surface Vessel for Vision Guided Navigation

Nov 28, 2023 · Muhayy Ud Din, Ahmed Humais, Waseem Akram, Mohamed Alblooshi, Lyes Saad Saoud, Abdelrahman Alblooshi, Lakmal Seneviratne, Irfan Hussain

Marine robots, particularly Unmanned Surface Vessels (USVs), have gained considerable attention for their diverse applications in maritime tasks, including search and rescue, environmental monitoring, and maritime security. This paper presents the design and implementation of a USV named marine$\mathcal{X}$. The hardware components of marine$\mathcal{X}$ are meticulously developed to ensure robustness, efficiency, and adaptability to varying environmental conditions. Furthermore, the integration of a vision-based object tracking algorithm empowers marine$\mathcal{X}$ to autonomously track and monitor specific objects on the water surface. The control system utilizes PID control, enabling precise navigation of marine$\mathcal{X}$ while maintaining a desired course and distance to the target object. To assess the performance of marine$\mathcal{X}$, comprehensive testing is conducted, encompassing simulation, trials in the marine pool, and real-world tests in the open sea. The successful outcomes of these tests demonstrate the USV's capabilities in achieving real-time object tracking, showcasing its potential for various applications in maritime operations.

* accepted in ICAR
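The abstract above mentions PID control for holding a desired course. The following is a minimal textbook PID sketch driving a toy heading model. It is not the marine$\mathcal{X}$ controller: the gains, the time step, and the assumption that the controller output acts directly as a turn rate are all hypothetical choices made for illustration.

```python
class PID:
    """Textbook PID controller acting on a scalar error."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        # Accumulate the integral term.
        self.integral += error * dt
        # No derivative on the first call, since there is no previous error.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_heading(target_deg, steps=300, dt=0.1):
    """Toy model: the controller output is applied directly as a turn rate."""
    pid = PID(kp=2.0, ki=1.0, kd=0.1)  # hypothetical gains
    heading = 0.0
    for _ in range(steps):
        error = target_deg - heading
        turn_rate = pid.update(error, dt)
        heading += turn_rate * dt
    return heading


print(f"final heading: {simulate_heading(30.0):.2f} deg")
```

A second controller of the same form, fed with the range error to the tracked object, could regulate distance in the same way; a real vessel would also need actuator limits and integral anti-windup, which are omitted here.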

Robust Collision Detection for Robots with Variable Stiffness Actuation by Using MAD-CNN: Modularized-Attention-Dilated Convolutional Neural Network

Oct 04, 2023 · Zhenwei Niu, Lyes Saad Saoud, Irfan Hussain

Ensuring safety is paramount in the field of collaborative robotics to mitigate the risks of human injury and environmental damage. Apart from collision avoidance, it is crucial for robots to rapidly detect and respond to unexpected collisions. While several learning-based collision detection methods have been introduced as alternatives to purely model-based detection techniques, there is currently a lack of such methods designed for collaborative robots equipped with variable stiffness actuators. Moreover, there is room to improve both the robustness of the network and the data efficiency of training. In this paper, we propose a new network, the Modularized Attention-Dilated Convolutional Neural Network (MAD-CNN), for collision detection in robots equipped with variable stiffness actuators. Our model incorporates a dual inductive bias mechanism and an attention module to enhance data efficiency and improve robustness. In particular, MAD-CNN is trained using only a four-minute collision dataset focusing on the highest level of joint stiffness. Despite limited training data, MAD-CNN robustly detects all collisions with minimal detection delay across various stiffness conditions. It also exhibits a higher level of collision sensitivity, which helps in handling false positives, a common issue in learning-based methods. Experimental results demonstrate that the proposed MAD-CNN model outperforms existing state-of-the-art models in terms of collision sensitivity and robustness.
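The "dilated" part of MAD-CNN refers to dilated convolution, which widens a filter's receptive field by skipping samples without adding weights. The sketch below shows a plain 1D dilated convolution on a list. It is a generic illustration, not the MAD-CNN layer: the function name `dilated_conv1d` and the sample signal and kernel are hypothetical, and the abstract does not state the kernel sizes or dilation rates used.

```python
def dilated_conv1d(signal, kernel, dilation=1):
    """Valid 1D dilated convolution (cross-correlation form, no padding).

    With dilation d, kernel tap i reads signal[start + i * d], so a kernel
    of length k covers (k - 1) * d + 1 input samples.
    """
    span = (len(kernel) - 1) * dilation + 1
    if len(signal) < span:
        return []
    return [
        sum(k * signal[start + i * dilation] for i, k in enumerate(kernel))
        for start in range(len(signal) - span + 1)
    ]


# Hypothetical samples of a sensor signal and a simple difference kernel.
signal = [1, 2, 3, 4, 5]
kernel = [1, 0, -1]
print(dilated_conv1d(signal, kernel, dilation=1))
print(dilated_conv1d(signal, kernel, dilation=2))
```

With `dilation=2` the same three-tap kernel spans five samples, which is how stacked dilated layers can cover long time windows of sensor data with few parameters.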

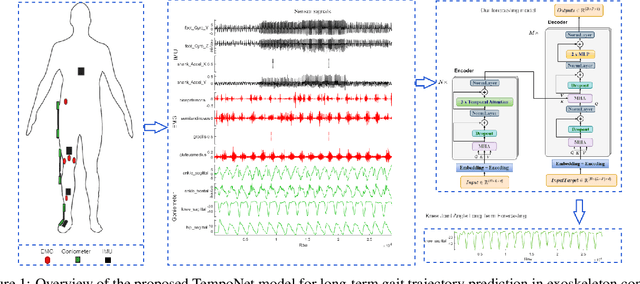

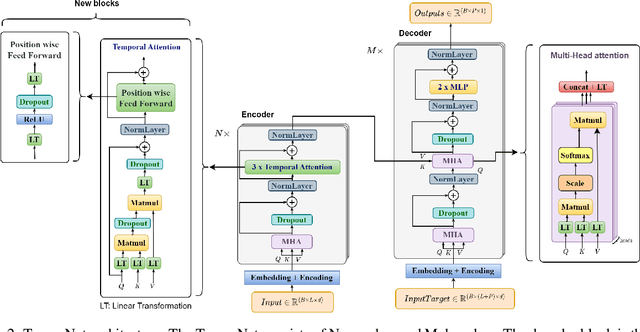

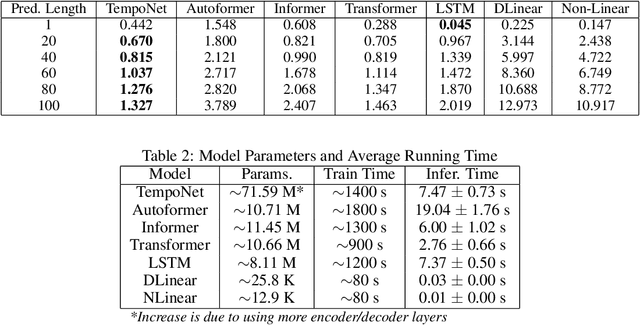

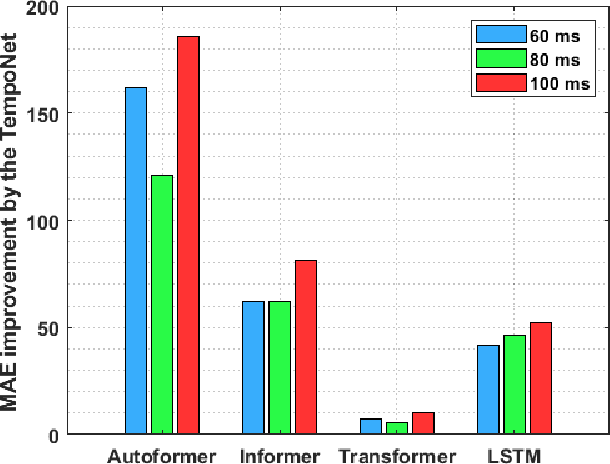

TempoNet: Empowering long-term Knee Joint Angle Prediction with Dynamic Temporal Attention in Exoskeleton Control

Oct 03, 2023 · Lyes Saad Saoud, Irfan Hussain

In exoskeleton control, achieving precise control is challenging because of the mechanical delay of exoskeletons. To address this, incorporating future gait trajectories as feed-forward input has been proposed. However, existing deep learning models for gait prediction mainly focus on short-term predictions, leaving the long-term performance of these models relatively unexplored. In this study, we present TempoNet, a novel model specifically designed for precise knee joint angle prediction. By harnessing dynamic temporal attention within a Transformer-based architecture, TempoNet surpasses existing models in forecasting knee joint angles over extended time horizons. Notably, our model achieves a reduction of 10% to 185% in Mean Absolute Error (MAE) for 100 ms ahead forecasting compared to other transformer-based models. Furthermore, TempoNet outperforms the baseline Transformer model by 14% in MAE for the 200 ms prediction horizon. These findings highlight the efficacy of TempoNet in accurately predicting knee joint angles and emphasize the importance of incorporating dynamic temporal attention. TempoNet's capability to enhance knee joint angle prediction accuracy opens up possibilities for precise control, improved rehabilitation outcomes, advanced sports performance analysis, and deeper insights into biomechanical research. Code implementation for the TempoNet model can be found in the GitHub repository: https://github.com/LyesSaadSaoud/TempoNet.
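As a reference point for the MAE comparisons above, the sketch below computes mean absolute error over a predicted joint-angle sequence and a percentage reduction relative to a baseline. The abstract does not say how its percentages are normalized, so normalizing by the baseline MAE is only one common convention and is an assumption here; the function names and the sample angles are hypothetical.

```python
def mean_absolute_error(predicted, actual):
    """Average absolute difference between two equally long sequences."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("sequences must be non-empty and the same length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


def relative_reduction(baseline_mae, model_mae):
    """Percentage by which model_mae is lower than baseline_mae."""
    return 100.0 * (baseline_mae - model_mae) / baseline_mae


# Hypothetical knee joint angles in degrees at a fixed prediction horizon.
actual = [10.0, 12.0, 15.0, 19.0]
baseline_pred = [12.0, 13.0, 18.0, 21.0]
model_pred = [10.5, 12.5, 16.0, 20.0]

baseline_mae = mean_absolute_error(baseline_pred, actual)
model_mae = mean_absolute_error(model_pred, actual)
print(f"baseline MAE: {baseline_mae:.2f} deg, model MAE: {model_mae:.2f} deg")
print(f"reduction: {relative_reduction(baseline_mae, model_mae):.1f}%")
```

Under this convention a reduction cannot exceed 100%, so figures above that level imply a different normalization, for example relative to the better model's error.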

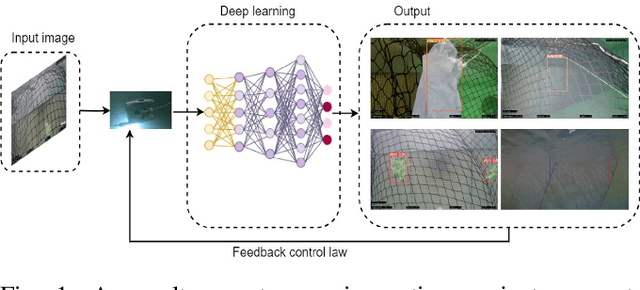

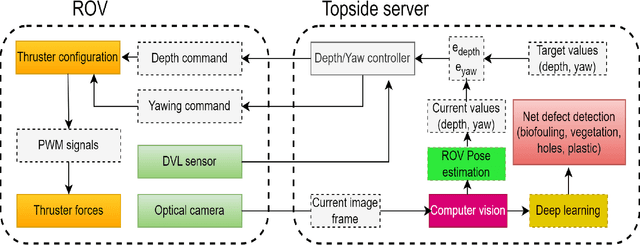

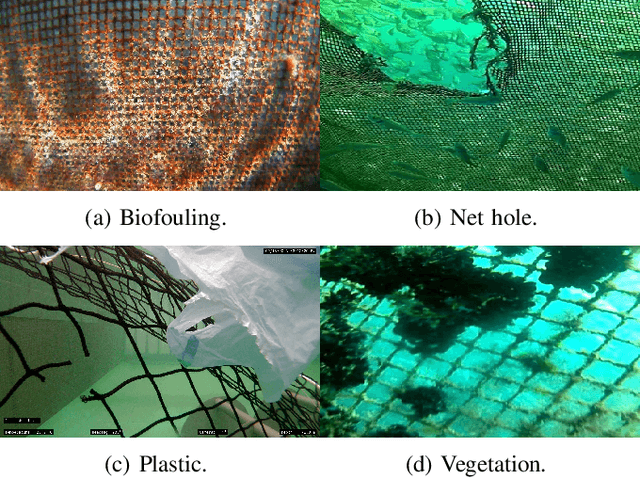

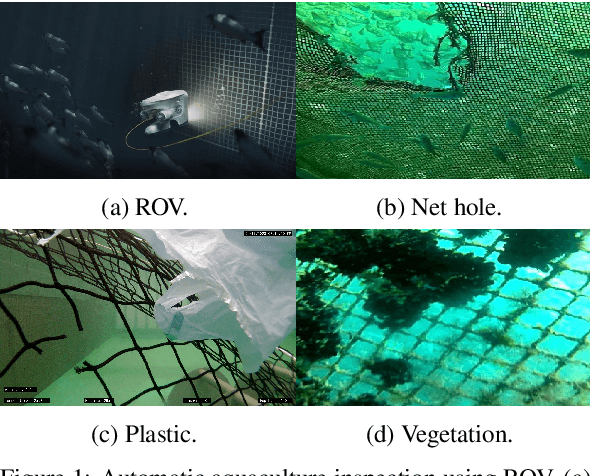

Autonomous Underwater Robotic System for Aquaculture Applications

Aug 26, 2023 · Waseem Akram, Muhayyuddin Ahmed, Lyes Saad Saoud, Lakmal Seneviratne, Irfan Hussain

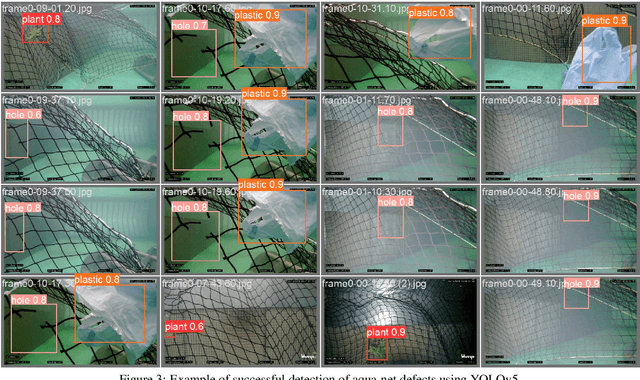

Aquaculture is a thriving food-producing sector that supplies over half of global fish consumption. However, aquafarms face significant challenges such as biofouling, vegetation, and holes in their net pens, which have a profound effect on the efficiency and sustainability of fish production. Currently, divers and/or remotely operated vehicles (ROVs) are deployed to inspect and maintain aquafarms; this approach is expensive and requires highly skilled human operators. This work aims to develop a robotic-based automatic net defect detection system for aquaculture net pens, oriented to on-ROV processing and real-time detection of different aqua-net defects such as biofouling, vegetation, net holes, and plastic. The proposed system integrates deep learning-based methods for aqua-net defect detection with a feedback control law that moves the vehicle around the aqua-net to obtain a clear sequence of net images and inspect the status of the net. This work contributes to the areas of aquaculture inspection, marine robotics, and deep learning, aiming to reduce cost, improve quality, and increase ease of operation.

Evaluating Deep Learning Assisted Automated Aquaculture Net Pens Inspection Using ROV

Aug 26, 2023 · Waseem Akram, Muhayyuddin Ahmed, Lakmal Seneviratne, Irfan Hussain

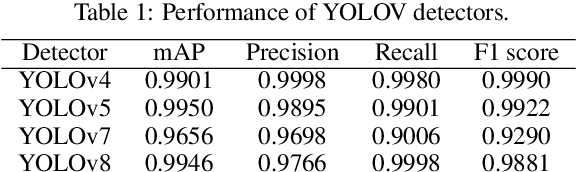

In marine aquaculture, inspecting sea cages is an essential activity for managing both the facilities' environmental impact and the quality of the fish development process. Fish escape from fish farms into the open sea due to net damage, which can result in significant financial losses and compromise the nearby marine ecosystem. The traditional inspection system in use relies on visual inspection by expert divers or ROVs, which is not only laborious, time-consuming, and inaccurate but also largely dependent on the level of knowledge of the operator and has a poor degree of verifiability. This article presents a robotic-based automatic net defect detection system for aquaculture net pens oriented to on-ROV processing and real-time detection. The proposed system takes a video stream from an onboard camera of the ROV, employs a deep learning detector, and segments the defective part of the image from the background under different underwater conditions. The system was first tested using a set of collected images for comparison with the state-of-the-art approaches and then using the ROV inspection sequences to evaluate its effectiveness in real-world scenarios. Results show that our approach presents high levels of accuracy even for adverse scenarios and is adequate for real-time processing on embedded platforms.

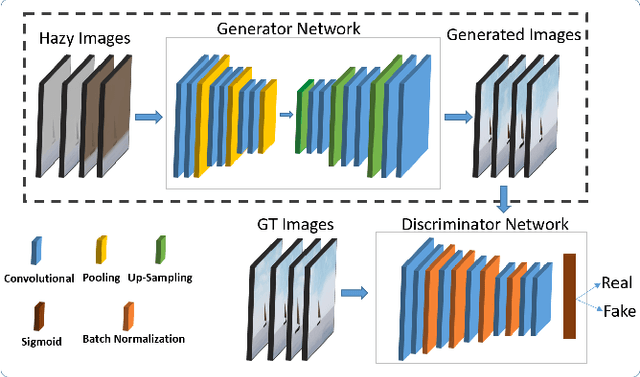

Vision-Based Autonomous Navigation for Unmanned Surface Vessel in Extreme Marine Conditions

Aug 08, 2023 · Muhayyuddin Ahmed, Ahsan Baidar Bakht, Taimur Hassan, Waseem Akram, Ahmed Humais, Lakmal Seneviratne, Shaoming He, Defu Lin, Irfan Hussain

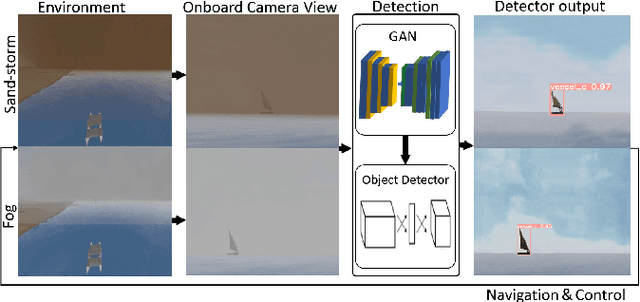

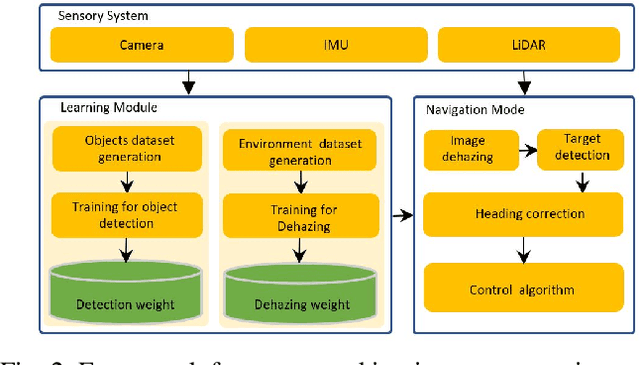

Visual perception is an important component for autonomous navigation of unmanned surface vessels (USV), particularly for the tasks related to autonomous inspection and tracking. These tasks involve vision-based navigation techniques to identify the target for navigation. Reduced visibility under extreme weather conditions in marine environments makes it difficult for vision-based approaches to work properly. To overcome these issues, this paper presents an autonomous vision-based navigation framework for tracking target objects in extreme marine conditions. The proposed framework consists of an integrated perception pipeline that uses a generative adversarial network (GAN) to remove noise and highlight the object features before passing them to the object detector (i.e., YOLOv5). The detected visual features are then used by the USV to track the target. The proposed framework has been thoroughly tested in simulation under extremely reduced visibility due to sandstorms and fog. The results are compared with state-of-the-art de-hazing methods across the benchmarked MBZIRC simulation dataset, on which the proposed scheme has outperformed the existing methods across various metrics.
