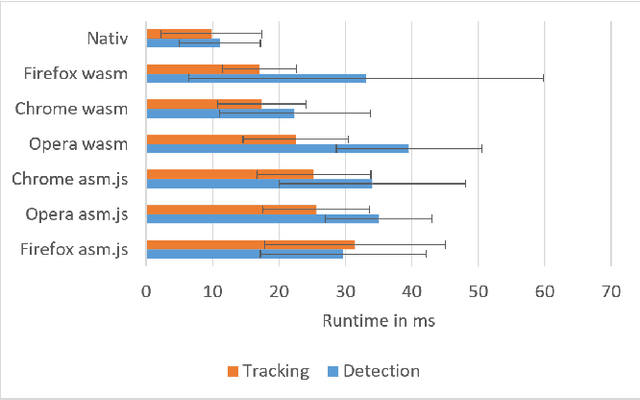

Efficient Pose Tracking from Natural Features in Standard Web Browsers

Apr 23, 2018 · Fabian Göttl, Philipp Gagel, Jens Grubert

Computer Vision-based natural feature tracking is at the core of modern Augmented Reality applications. Still, Web-based Augmented Reality typically relies on location-based sensing (using GPS and orientation sensors) or marker-based approaches to solve the pose estimation problem. We present an implementation and evaluation of an efficient natural feature tracking pipeline for standard Web browsers using HTML5 and WebAssembly. Our system can track image targets at real-time frame rates on tablet PCs (up to 60 Hz) and smartphones (up to 25 Hz).

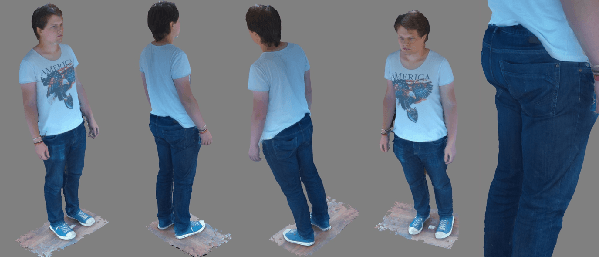

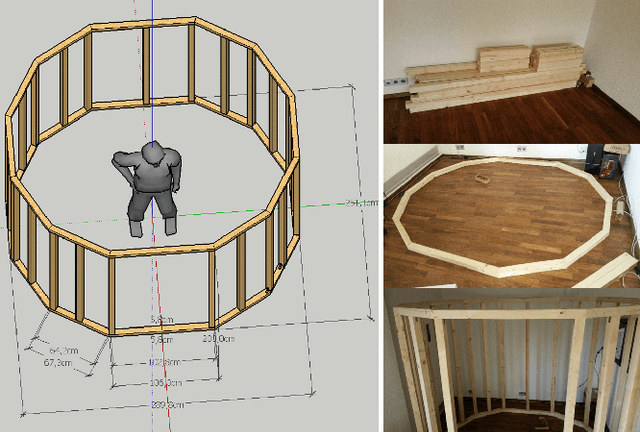

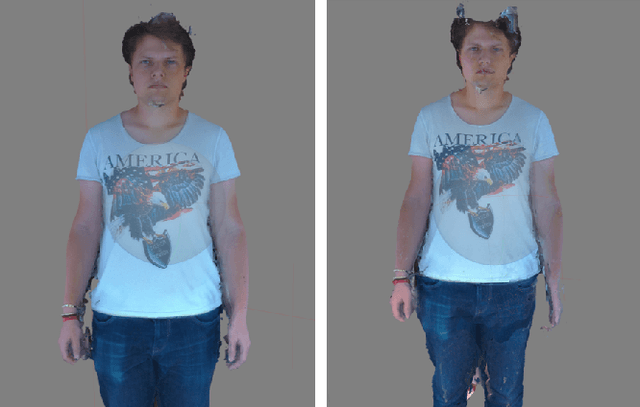

BodyDigitizer: An Open Source Photogrammetry-based 3D Body Scanner

Oct 28, 2017 · Travis Gesslein, Daniel Scherer, Jens Grubert

With the rising popularity of Augmented and Virtual Reality, there is a need for representing humans as virtual avatars in various application domains, ranging from remote telepresence and games to medical applications. Besides explicitly modelling 3D avatars, sensing approaches that create person-specific avatars are becoming popular. However, affordable solutions typically suffer from low visual quality, and professional solutions are often too expensive to be deployed in nonprofit projects. We present an open-source project, BodyDigitizer, which aims at providing both build instructions and configuration software for a high-resolution photogrammetry-based 3D body scanner. Our system encompasses up to 96 Raspberry Pi cameras, active LED lighting, a sturdy frame construction and open-source configuration software. The detailed build instructions and software are available at http://www.bodydigitizer.org.

A Survey of Calibration Methods for Optical See-Through Head-Mounted Displays

Sep 13, 2017 · Jens Grubert, Yuta Itoh, Kenneth Moser, J. Edward Swan II

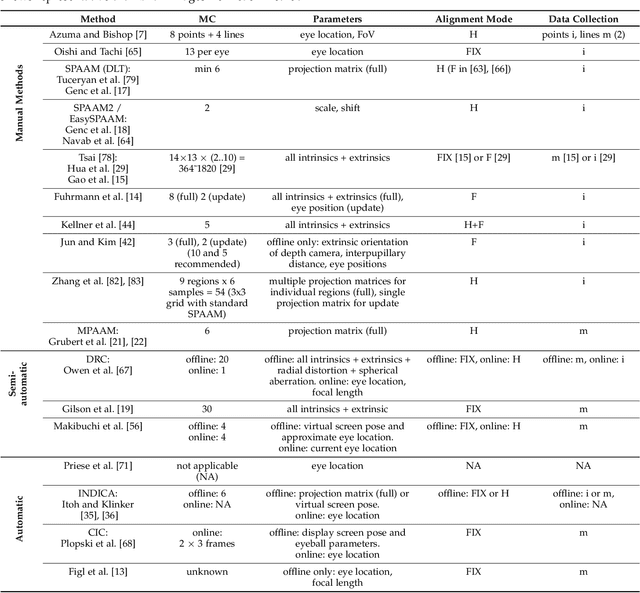

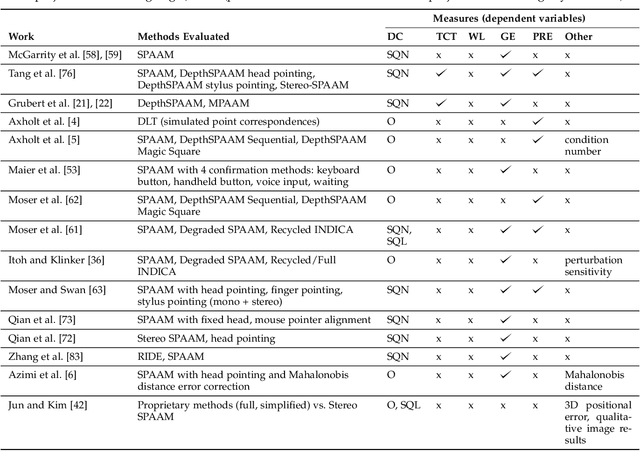

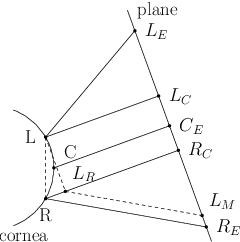

Optical see-through head-mounted displays (OST HMDs) are a major output medium for Augmented Reality and have seen significant growth in popularity and usage among the general public due to the growing release of consumer-oriented models, such as the Microsoft HoloLens. Unlike Virtual Reality headsets, OST HMDs inherently support the addition of computer-generated graphics directly into the light path between a user's eyes and their view of the physical world. As with most Augmented and Virtual Reality systems, the physical position of an OST HMD is typically determined by an external or embedded 6-Degree-of-Freedom tracking system. However, in order to properly render virtual objects that are perceived as spatially aligned with the physical environment, it is also necessary to accurately measure the position of the user's eyes within the tracking system's coordinate frame. For over 20 years, researchers have proposed various calibration methods to determine this needed eye position. However, to date, there has not been a comprehensive overview of these procedures and their requirements. Hence, this paper surveys the field of calibration methods for OST HMDs. Specifically, it provides insights into the fundamentals of calibration techniques, and presents an overview of both manual and automatic approaches, as well as evaluation methods and metrics. Finally, it also identifies opportunities for future research.

Towards Around-Device Interaction using Corneal Imaging

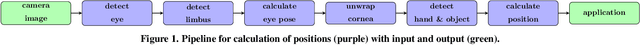

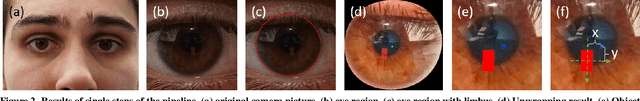

Sep 04, 2017 · Daniel Schneider, Jens Grubert

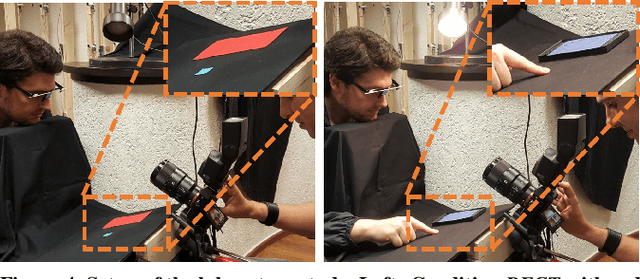

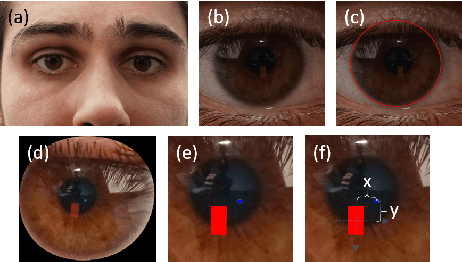

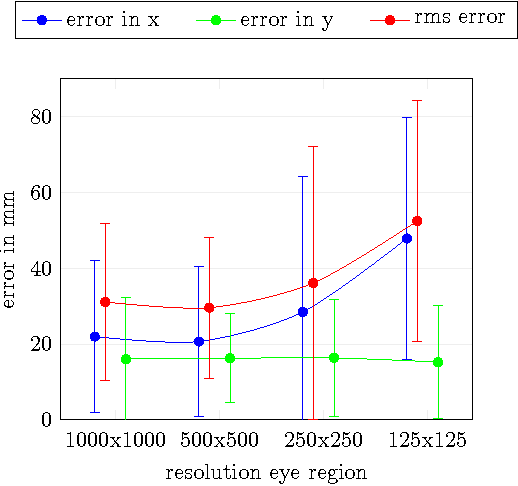

Around-device interaction techniques aim at extending the input space using various sensing modalities on mobile and wearable devices. In this paper, we present our work towards extending the input area of mobile devices using front-facing device-centered cameras that capture reflections in the human eye. As current-generation mobile devices lack high-resolution front-facing cameras, we study the feasibility of around-device interaction using corneal reflective imaging based on a high-resolution camera. We present a workflow, a technical prototype and an evaluation, including a migration path from high-resolution to low-resolution imagers. Our study indicates that, under optimal conditions, a spatial sensing resolution of 5 cm in the vicinity of a mobile phone is possible.

Feasibility of Corneal Imaging for Handheld Augmented Reality

Sep 04, 2017 · Daniel Schneider, Jens Grubert

Smartphones are a popular device class for mobile Augmented Reality but suffer from a limited input space. Around-device interaction techniques aim at extending this input space using various sensing modalities. In this paper, we present our work towards extending the input area of mobile devices using front-facing device-centered cameras that capture reflections in the cornea. As current-generation mobile devices lack high-resolution front-facing cameras, we study the feasibility of around-device interaction using corneal reflective imaging based on a high-resolution camera. We present a workflow, a technical prototype and a feasibility evaluation.