Interference-Aware Emergent Random Access Protocol for Downlink LEO Satellite Networks

Feb 04, 2024 · Chang-Yong Lim, Jihong Park, Jinho Choi, Ju-Hyung Lee, Daesub Oh, Heewook Kim

In this article, we propose a multi-agent deep reinforcement learning (MADRL) framework to train a multiple access protocol for downlink low earth orbit (LEO) satellite networks. By improving the existing learned protocol, emergent random access channel (eRACH), our proposed method, coined centralized and compressed emergent signaling for eRACH (Ce2RACH), can mitigate inter-satellite interference by exchanging additional signaling messages jointly learned through the MADRL training process. Simulations demonstrate that Ce2RACH achieves up to 36.65% higher network throughput compared to eRACH, while the cost of signaling messages increases linearly with the number of users.

Knowledge Distillation from Language-Oriented to Emergent Communication for Multi-Agent Remote Control

Jan 23, 2024 · Yongjun Kim, Sejin Seo, Jihong Park, Mehdi Bennis, Seong-Lyun Kim, Junil Choi

In this work, we compare emergent communication (EC) built upon multi-agent deep reinforcement learning (MADRL) and language-oriented semantic communication (LSC) empowered by a pre-trained large language model (LLM) using human language. In a multi-agent remote navigation task, with multimodal input data comprising location and channel maps, it is shown that EC incurs high training cost and struggles when using multimodal data, whereas LSC yields high inference computing cost due to the LLM's large size. To address their respective bottlenecks, we propose a novel framework of language-guided EC (LEC) by guiding the EC training using LSC via knowledge distillation (KD). Simulations corroborate that LEC achieves shorter travel time while avoiding areas with poor channel conditions, and speeds up the MADRL training convergence by up to 61.8% compared to EC.

Generative AI Meets Semantic Communication: Evolution and Revolution of Communication Tasks

Jan 10, 2024 · Eleonora Grassucci, Jihong Park, Sergio Barbarossa, Seong-Lyun Kim, Jinho Choi, Danilo Comminiello

While deep generative models are showing exciting abilities in computer vision and natural language processing, their adoption in communication frameworks is still far underestimated. These methods are demonstrated to evolve solutions to classic communication problems such as denoising, restoration, or compression. Nevertheless, generative models can unveil their real potential in semantic communication frameworks, in which the receiver is not asked to recover the sequence of bits used to encode the transmitted (semantic) message, but only to regenerate content that is semantically consistent with the transmitted message. Disclosing generative models' capabilities in semantic communication paves the way for a paradigm shift with respect to conventional communication systems, which has great potential to reduce the amount of data traffic and offers a revolutionary versatility to novel tasks and applications that were not even conceivable a few years ago. In this paper, we present a unified perspective of deep generative models in semantic communication and we unveil their revolutionary role in future communication frameworks, enabling emerging applications and tasks. Finally, we analyze the challenges and opportunities in developing generative models specifically tailored for communication systems.

Towards Semantic Communication Protocols for 6G: From Protocol Learning to Language-Oriented Approaches

Oct 14, 2023 · Jihong Park, Seung-Woo Ko, Jinho Choi, Seong-Lyun Kim, Mehdi Bennis

The forthcoming 6G systems are expected to address a wide range of non-stationary tasks. This poses challenges to traditional medium access control (MAC) protocols that are static and predefined. In response, data-driven MAC protocols have recently emerged, offering the ability to tailor their signaling messages for specific tasks. This article presents a novel categorization of these data-driven MAC protocols into three levels: Level 1 MAC, task-oriented neural protocols constructed using multi-agent deep reinforcement learning (MADRL); Level 2 MAC, neural network-oriented symbolic protocols developed by converting Level 1 MAC outputs into explicit symbols; and Level 3 MAC, language-oriented semantic protocols harnessing large language models (LLMs) and generative models. With this categorization, we aim to explore the opportunities and challenges of each level by delving into their foundational techniques. Drawing from information theory and associated principles as well as selected case studies, this study provides insights into the trajectory of data-driven MAC protocols and sheds light on future research directions.

Semantics Alignment via Split Learning for Resilient Multi-User Semantic Communication

Oct 13, 2023 · Jinhyuk Choi, Jihong Park, Seung-Woo Ko, Jinho Choi, Mehdi Bennis, Seong-Lyun Kim

Recent studies on semantic communication commonly rely on neural network (NN) based transceivers such as deep joint source and channel coding (DeepJSCC). Unlike traditional transceivers, these neural transceivers are trainable using actual source data and channels, enabling them to extract and communicate semantics. On the flip side, each neural transceiver is inherently biased towards specific source data and channels, making it difficult for different transceivers to understand intended semantics, particularly upon their initial encounter. To align semantics over multiple neural transceivers, we propose a distributed learning based solution, which leverages split learning (SL) and partial NN fine-tuning techniques. In this method, referred to as SL with layer freezing (SLF), each encoder downloads a misaligned decoder, and locally fine-tunes a fraction of these encoder-decoder NN layers. By adjusting this fraction, SLF controls computing and communication costs. Simulation results confirm the effectiveness of SLF in aligning semantics under different source data and channel dissimilarities, in terms of classification accuracy, reconstruction errors, and recovery time for comprehending intended semantics from misalignment.

Language-Oriented Communication with Semantic Coding and Knowledge Distillation for Text-to-Image Generation

Sep 20, 2023 · Hyelin Nam, Jihong Park, Jinho Choi, Mehdi Bennis, Seong-Lyun Kim

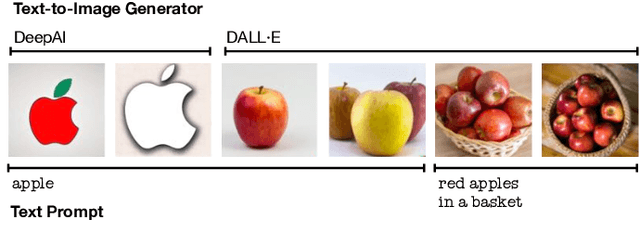

By integrating recent advances in large language models (LLMs) and generative models into the emerging semantic communication (SC) paradigm, in this article we put forward a novel framework of language-oriented semantic communication (LSC). In LSC, machines communicate using human language messages that can be interpreted and manipulated via natural language processing (NLP) techniques for SC efficiency. To demonstrate LSC's potential, we introduce three innovative algorithms: 1) semantic source coding (SSC), which compresses a text prompt into its key head words capturing the prompt's syntactic essence while maintaining their appearance order to keep the prompt's context; 2) semantic channel coding (SCC), which improves robustness against errors by substituting head words with their lengthier synonyms; and 3) semantic knowledge distillation (SKD), which produces listener-customized prompts via in-context learning of the listener's language style. In a communication task for progressive text-to-image generation, the proposed methods achieve higher perceptual similarities with fewer transmissions while enhancing robustness in noisy communication channels.
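As a rough illustration of the SSC and SCC ideas in the abstract above, the toy sketch below keeps only a hand-picked set of head words in their original order, then swaps each one for a synonym only when that synonym is longer. The head-word set, the synonym table, and both function names are hypothetical stand-ins for illustration; the paper's actual NLP pipeline is not reproduced here.

```python
def semantic_source_coding(prompt, head_words):
    """Keep only the head words, preserving their order of appearance."""
    return [w for w in prompt.lower().split() if w in head_words]


def semantic_channel_coding(words, synonyms):
    """Swap a word for its listed synonym only if the synonym is strictly longer."""
    coded = []
    for w in words:
        candidate = synonyms.get(w, w)
        coded.append(candidate if len(candidate) > len(w) else w)
    return coded


prompt = "A small dog runs across the green park"
# Hypothetical inputs: in practice these would come from a parser and a thesaurus.
head_words = {"dog", "runs", "park"}
synonyms = {"dog": "canine", "runs": "jogs", "park": "playground"}

compressed = semantic_source_coding(prompt, head_words)
protected = semantic_channel_coding(compressed, synonyms)
print(compressed)
print(protected)
```

Here "jogs" is not longer than "runs", so that word is left unchanged, which mirrors the "lengthier synonyms" condition in a very simplified way.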

Sequential Semantic Generative Communication for Progressive Text-to-Image Generation

Sep 08, 2023 · Hyelin Nam, Jihong Park, Jinho Choi, Seong-Lyun Kim

This paper proposes a new communication system framework that leverages the promising generation capabilities of multi-modal generative models. In today's smart applications, successful communication can be achieved by conveying the perceptual meaning, which we represent as a text prompt. Text serves as a suitable semantic representation of image data, as it has evolved to instruct or generate images through multi-modal techniques and is interpreted in a manner similar to human cognition. Using text can also reduce the communication load compared to transmitting the intact data itself. The transmitter converts the target image to text through a multi-modal generation process, and the receiver reconstructs the image using the reverse process. Each word in the text sentence has its own syntactic role and is responsible for a particular piece of the information the text contains. To further reduce the communication load, the transmitter sends words sequentially, prioritizing those that carry the most information, until communication succeeds. Our primary focus is therefore on the design of a communication system based on image-to-text transformation and on the proposed schemes for sequentially transmitting word tokens. Our work is expected to pave the way for applying state-of-the-art generative models to real communication systems.

Energy-Efficient Downlink Semantic Generative Communication with Text-to-Image Generators

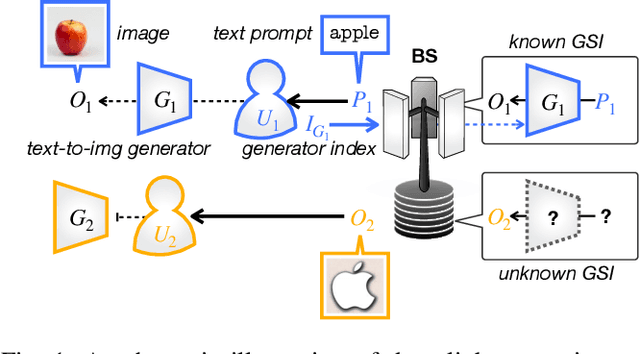

Jun 08, 2023 · Hyein Lee, Jihong Park, Sooyoung Kim, Jinho Choi

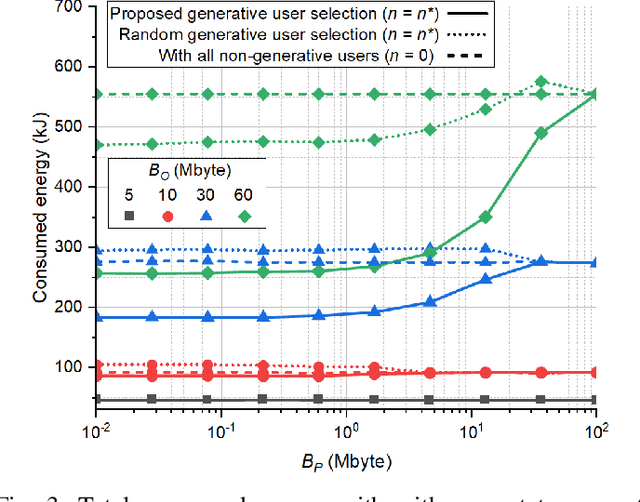

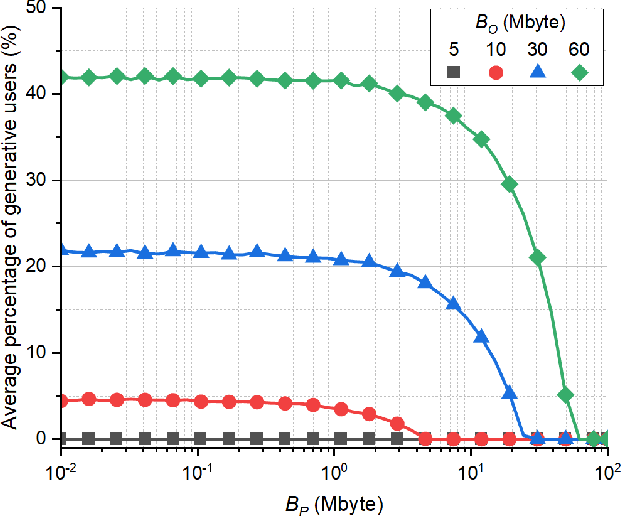

In this paper, we introduce a novel semantic generative communication (SGC) framework, where generative users leverage text-to-image (T2I) generators to create images locally from downloaded text prompts, while non-generative users directly download images from a base station (BS). Although generative users help reduce downlink transmission energy at the BS, they consume additional energy for image generation and for uploading their generator state information (GSI). We formulate the problem of minimizing the total energy consumption of the BS and the users, and devise a generative user selection algorithm. Simulation results corroborate that our proposed algorithm reduces total energy by up to 54% compared to a baseline with all non-generative users.
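The energy trade-off described in this abstract can be pictured with a deliberately simplified per-user rule: a user is treated as generative only if downloading the prompt, generating the image locally, and uploading GSI together cost less energy than downloading the image. This is a sketch under that assumption, not the paper's selection algorithm; the `User` fields, the function name, and all energy values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class User:
    name: str
    e_image_dl: float   # energy to download the full image (illustrative, joules)
    e_prompt_dl: float  # energy to download the text prompt (illustrative)
    e_generate: float   # user energy to run the T2I generator (illustrative)
    e_gsi_ul: float     # user energy to upload generator state information


def select_generative(users):
    """Greedy per-user choice; returns chosen names and the total energy."""
    chosen, total = [], 0.0
    for u in users:
        gen_cost = u.e_prompt_dl + u.e_generate + u.e_gsi_ul
        if gen_cost < u.e_image_dl:
            chosen.append(u.name)
            total += gen_cost
        else:
            total += u.e_image_dl
    return chosen, total


users = [
    User("a", e_image_dl=10.0, e_prompt_dl=1.0, e_generate=4.0, e_gsi_ul=1.0),
    User("b", e_image_dl=3.0, e_prompt_dl=1.0, e_generate=4.0, e_gsi_ul=1.0),
]
chosen, total = select_generative(users)
baseline = sum(u.e_image_dl for u in users)  # all users non-generative
print(chosen, total, baseline)
```

A real formulation would likely couple the users through shared radio resources, which this independent per-user comparison ignores.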

Energy-Efficient UAV-Assisted IoT Data Collection via TSP-Based Solution Space Reduction

Jun 02, 2023 · Sivaram Krishnan, Mahyar Nemati, Seng W. Loke, Jihong Park, Jinho Choi

This paper presents a wireless data collection framework that employs an unmanned aerial vehicle (UAV) to efficiently gather data from distributed IoT sensors deployed in a large area. Our approach takes into account the non-zero communication ranges of the sensors to optimize the flight path of the UAV, resulting in a variation of the Traveling Salesman Problem (TSP). We prove mathematically that the optimal waypoints for this TSP-variant problem are restricted to the boundaries of the sensor communication ranges, greatly reducing the solution space. Building on this finding, we develop a low-complexity UAV-assisted sensor data collection algorithm, and demonstrate its effectiveness in a selected use case where we minimize the total energy consumption of the UAV and sensors by jointly optimizing the UAV's travel distance and the sensors' communication ranges.
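For intuition about why waypoints end up on range boundaries, consider a single sensor with a circular communication range: for a UAV outside that range, the closest point from which it can collect data lies on the circle, along the line toward the sensor. The sketch below computes that point. It is only a single-sensor geometric illustration with a hypothetical function name and made-up coordinates, not the paper's algorithm or proof.

```python
import math


def nearest_boundary_point(uav, sensor, radius):
    """Closest point to `uav` that lies within `radius` of `sensor` (2D)."""
    dx, dy = uav[0] - sensor[0], uav[1] - sensor[1]
    dist = math.hypot(dx, dy)
    if dist <= radius:
        return uav  # already inside the communication range
    scale = radius / dist
    # Move from the sensor toward the UAV by exactly `radius`.
    return (sensor[0] + dx * scale, sensor[1] + dy * scale)


uav = (0.0, 0.0)
sensor = (10.0, 0.0)
radius = 5.0  # illustrative communication range

waypoint = nearest_boundary_point(uav, sensor, radius)
saved = math.dist(uav, sensor) - math.dist(uav, waypoint)
print(waypoint, saved)
```

Flying to the boundary point instead of the sensor itself shortens this leg by the communication radius, which is the kind of saving the joint optimization of travel distance and communication range exploits.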

Federated Graph Learning for Low Probability of Detection in Wireless Ad-Hoc Networks

Jun 01, 2023 · Sivaram Krishnan, Jihong Park, Subhash Sagar, Gregory Sherman, Benjamin Campbell, Jinho Choi

Low probability of detection (LPD) has recently emerged as a means to enhance the privacy and security of wireless networks. Unlike existing wireless security techniques, LPD measures aim to conceal the entire existence of wireless communication instead of safeguarding the information transmitted from users. Motivated by LPD communication, in this paper, we study a privacy-preserving and distributed framework based on graph neural networks to minimise the detectability of a wireless ad-hoc network as a whole and predict an optimal communication region for each node in the wireless network, allowing the nodes to communicate while remaining undetected by external actors. We also demonstrate the effectiveness of the proposed method in terms of two performance measures, i.e., mean absolute error and median absolute error.
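The two performance measures named above differ in how they treat outliers, which a small example makes concrete. The values below are made up for illustration (for instance, they could stand for true and predicted communication radii); they are not results from the paper.

```python
from statistics import mean, median


def mean_absolute_error(y_true, y_pred):
    """Average of the absolute prediction errors."""
    return mean([abs(t - p) for t, p in zip(y_true, y_pred)])


def median_absolute_error(y_true, y_pred):
    """Median of the absolute prediction errors (robust to outliers)."""
    return median([abs(t - p) for t, p in zip(y_true, y_pred)])


y_true = [10, 20, 30, 40]  # illustrative ground-truth values
y_pred = [12, 18, 30, 50]  # illustrative predictions; the last one is an outlier

mae = mean_absolute_error(y_true, y_pred)
medae = median_absolute_error(y_true, y_pred)
print(mae, medae)
```

The single large error pulls the mean absolute error up, while the median absolute error stays at the typical error size, so reporting both gives a fuller picture of prediction quality.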
