Hybrid Quantum-Classical Scheduling for Accelerating Neural Network Training with Newton's Gradient Descent

Apr 30, 2024 · Pingzhi Li, Junyu Liu, Hanrui Wang, Tianlong Chen

Optimization techniques in deep learning are predominantly led by first-order gradient methodologies, such as SGD. However, neural network training can greatly benefit from the rapid convergence characteristics of second-order optimization. Newton's GD stands out in this category by rescaling the gradient using the inverse Hessian. Nevertheless, one of its major bottlenecks is matrix inversion, which takes $O(N^3)$ time and scales poorly. Matrix inversion can be translated into solving a series of linear equations. Given that quantum linear solver algorithms (QLSAs), leveraging the principles of quantum superposition and entanglement, can operate within a $\text{polylog}(N)$ time frame, they present a promising approach with exponential acceleration. Specifically, one of the most recent QLSAs demonstrates a complexity scaling of $O(d\cdot\kappa \log(N\cdot\kappa/\epsilon))$, depending on the size $N$, condition number $\kappa$, error tolerance $\epsilon$, and quantum oracle sparsity $d$ of the matrix. However, this also implies that their potential exponential advantage may be hindered by certain properties (i.e., $\kappa$ and $d$). We propose Q-Newton, a hybrid quantum-classical scheduler for accelerating neural network training with Newton's GD. Q-Newton utilizes a streamlined scheduling module that coordinates between quantum and classical linear solvers by estimating and reducing $\kappa$ and constructing $d$ for the quantum solver. Our evaluation showcases the potential for Q-Newton to significantly reduce the total training time compared to commonly used optimizers like SGD. We hypothesize a future scenario where the gate time of quantum machines is reduced, possibly realized by attosecond physics. Our evaluation establishes an ambitious and promising target for the evolution of quantum computing.
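
The Newton update and the solver trade-off described in this abstract can be illustrated with a minimal sketch. It is not the Q-Newton implementation: the function names (`newton_step`, `classical_cost`, `qlsa_cost`, `pick_solver`) and the `damping` parameter are hypothetical, and the cost functions are just the asymptotic expressions $N^3$ and $d\cdot\kappa\log(N\cdot\kappa/\epsilon)$ with all constant factors (including quantum gate time) ignored.

```python
import math
import numpy as np

def newton_step(grad, hessian, damping=1e-6):
    """Return H^{-1} g by solving a linear system instead of inverting H.

    `damping` is an assumption of this sketch: it is added to the diagonal
    so that a nearly singular Hessian still yields a solvable system.
    """
    n = hessian.shape[0]
    return np.linalg.solve(hessian + damping * np.eye(n), grad)

def classical_cost(n):
    """Asymptotic cost of a dense classical solve, constants dropped."""
    return float(n) ** 3

def qlsa_cost(n, kappa, eps, d):
    """Asymptotic QLSA cost d * kappa * log(N * kappa / eps), constants dropped."""
    return d * kappa * math.log(n * kappa / eps)

def pick_solver(n, kappa, eps, d):
    """Toy scheduling rule: choose whichever cost estimate is smaller."""
    return "quantum" if qlsa_cost(n, kappa, eps, d) < classical_cost(n) else "classical"

# Quadratic objective f(w) = 0.5 * w^T A w - b^T w, whose Hessian is A.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 1.0])
w = np.zeros(2)
grad = A @ w - b

# For a quadratic, a single undamped Newton step lands on the minimizer A^{-1} b.
w_new = w - newton_step(grad, A, damping=0.0)
print(w_new)

# A large, well-conditioned, sparse system favors the quantum estimate;
# a tiny, badly conditioned one favors the classical estimate.
print(pick_solver(10**6, kappa=10.0, eps=1e-3, d=4))
print(pick_solver(2, kappa=1e6, eps=1e-3, d=4))
```

The toy rule makes the abstract's caveat concrete: the quantum estimate grows linearly in $\kappa$ and $d$, so a poorly conditioned or dense matrix can erase the advantage even though the dependence on $N$ is only logarithmic.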

Feint in Multi-Player Games

Mar 04, 2024 · Junyu Liu, Wangkai Jin, Xiangjun Peng

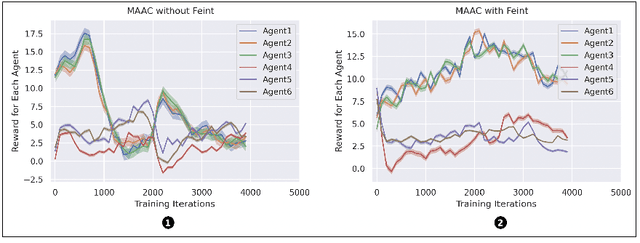

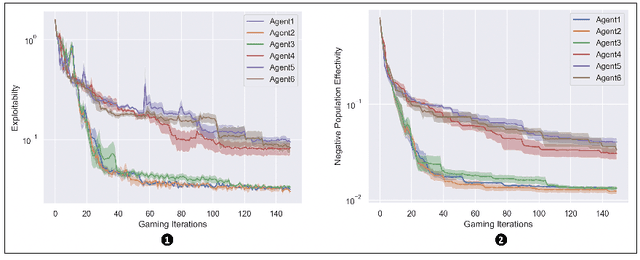

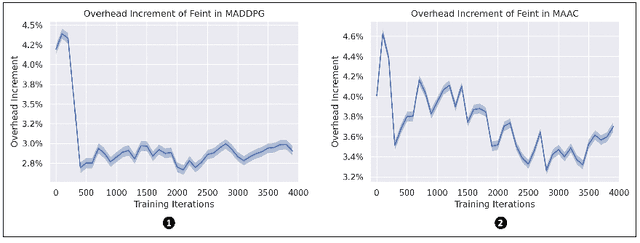

This paper introduces the first formalization, implementation and quantitative evaluation of Feint in Multi-Player Games. Our work first formalizes Feint from the perspective of Multi-Player Games, in terms of its temporal, spatial, and collective impacts. The formalization is built upon the Non-transitive Active Markov Game Model, where Feint can have considerable impact. Then, our work considers practical implementation details of Feint in Multi-Player Games, under the state-of-the-art progress of multi-agent modeling to date (namely Multi-Agent Reinforcement Learning). Finally, our work quantitatively examines the effectiveness of our design, and the results show that our design of Feint can (1) greatly improve the reward gains from the game; (2) significantly improve the diversity of Multi-Player Games; and (3) incur only negligible overheads in terms of time consumption. We conclude that our design of Feint is effective and practical, and makes Multi-Player Games more interesting.

Quantum Machine Learning: from NISQ to Fault Tolerance

Jan 21, 2024 · Yunfei Wang, Junyu Liu

Quantum machine learning, which involves running machine learning algorithms on quantum devices, has garnered significant attention in both academic and business circles. In this paper, we offer a comprehensive and unbiased review of the various concepts that have emerged in the field of quantum machine learning. This includes techniques used in Noisy Intermediate-Scale Quantum (NISQ) technologies and approaches for algorithms compatible with fault-tolerant quantum computing hardware. Our review covers fundamental concepts, algorithms, and the statistical learning theory pertinent to quantum machine learning.

Energy-Efficient Power Control for Multiple-Task Split Inference in UAVs: A Tiny Learning-Based Approach

Dec 31, 2023 · Chenxi Zhao, Min Sheng, Junyu Liu, Tianshu Chu, Jiandong Li

The limited energy and computing resources of unmanned aerial vehicles (UAVs) hinder the application of aerial artificial intelligence. The utilization of split inference in UAVs has garnered significant attention due to its effectiveness in mitigating computing and energy requirements. However, achieving energy-efficient split inference in UAVs remains complex given the various crucial parameters involved, such as energy level and delay constraints, especially when multiple tasks are involved. In this paper, we present a two-timescale approach for energy minimization in split inference, where discrete and continuous variables are segregated into two timescales to reduce the size of the action space and the computational complexity. This segregation enables the utilization of tiny reinforcement learning (TRL) for selecting discrete transmission modes for sequential tasks. Moreover, optimization programming (OP) is embedded between TRL's output and reward function to optimize the continuous transmit power. Specifically, we replace the optimization of transmit power with that of transmission time to decrease the computational complexity of OP, since we reveal that energy consumption monotonically decreases with increasing transmission time. The replacement significantly reduces the feasible region and enables a fast solution according to the closed-form expression for optimal transmit power. Simulation results show that the proposed algorithm can achieve a higher probability of successful task completion with lower energy consumption.
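
The claim that energy consumption decreases monotonically with transmission time can be illustrated under a standard Shannon-rate link model. This model is an assumption of the sketch, not necessarily the one used in the paper: sending `bits` in time `t` over bandwidth `bandwidth` with channel gain `gain` and noise power `noise` requires power $p(t) = \frac{\sigma^2}{h}\left(2^{L/(Bt)} - 1\right)$, so the energy is $E(t) = p(t)\,t$. All function names and numeric values below are hypothetical.

```python
def transmit_power(bits, t, bandwidth, gain, noise):
    """Power needed to deliver `bits` in `t` seconds at the Shannon rate.

    Derived from bits / t = bandwidth * log2(1 + power * gain / noise).
    """
    return noise / gain * (2.0 ** (bits / (bandwidth * t)) - 1.0)

def transmit_energy(bits, t, bandwidth, gain, noise):
    """Energy spent on the transmission: power times duration."""
    return transmit_power(bits, t, bandwidth, gain, noise) * t

# Illustrative link parameters (assumptions, not taken from the paper).
link = dict(bits=1e6, bandwidth=1e6, gain=1e-6, noise=1e-9)

times = [0.5, 1.0, 2.0, 4.0]
energies = [transmit_energy(t=t, **link) for t in times]
for t, e in zip(times, energies):
    print(f"t = {t:>4} s  ->  E = {e:.3e} J")

# Under this model the longest allowed transmission time minimizes energy,
# so the optimal power follows in closed form from the delay budget.
deadline = 2.0
optimal_power = transmit_power(t=deadline, **link)
```

Under this model, stretching the transmission to the delay limit is always optimal, which is why optimizing over transmission time instead of power collapses the search to a single closed-form evaluation.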

Coordinated Intra- and Inter-system Interference Management in Integrated Satellite Terrestrial Networks

Dec 13, 2023 · Ziyue Zhang, Min Sheng, Junyu Liu, Jiandong Li

Leveraging the advantage of satellite and terrestrial networks, the integrated satellite terrestrial networks (ISTNs) can help to achieve seamless global access and eliminate the digital divide. However, the dense deployment and frequent handover of satellites aggravate intra- and inter-system interference, resulting in a decrease in downlink sum rate. To address this issue, we propose a coordinated intra- and inter-system interference management algorithm for ISTN. This algorithm coordinates multidimensional interference through a joint design of inter-satellite handover and resource allocation method. On the one hand, we take inter-system interference between low earth orbit (LEO) and geostationary orbit (GEO) satellites as a constraint, and reduce interference to GEO satellite ground stations (GEO-GS) while ensuring system capacity through inter-satellite handover. On the other hand, satellite and terrestrial resource allocation schemes are designed based on the matching idea, and channel gain and interference to other channels are considered during the matching process to coordinate co-channel interference. In order to avoid too many unnecessary handovers, we consider handover scenarios related to service capabilities and service time to determine the optimal handover target satellite. Numerical results show that the gap between the results on the system sum rate obtained by the proposed method and the upper bound is reduced as the user density increases, and the handover frequency can be significantly reduced.

Dynamical phase transition in quantum neural networks with large depth

Nov 29, 2023 · Bingzhi Zhang, Junyu Liu, Xiao-Chuan Wu, Liang Jiang, Quntao Zhuang

Understanding the training dynamics of quantum neural networks is a fundamental task in quantum information science with wide impact in physics, chemistry and machine learning. In this work, we show that the late-time training dynamics of quantum neural networks can be described by the generalized Lotka-Volterra equations, which lead to a dynamical phase transition. When the targeted value of the cost function crosses the minimum achievable value from above to below, the dynamics evolve from a frozen-kernel phase to a frozen-error phase, showing a duality between the quantum neural tangent kernel and the total error. In both phases, the convergence towards the fixed point is exponential, while at the critical point it becomes polynomial. By mapping the Hessian of the training dynamics to a Hamiltonian in imaginary time, we reveal the nature of the phase transition to be second-order with the exponent $\nu=1$, where scale invariance and a closing gap are observed at the critical point. We also provide a non-perturbative analytical theory to explain the phase transition via a restricted Haar ensemble at late time, when the output state approaches the steady state. The theoretical findings are verified experimentally on IBM quantum devices.

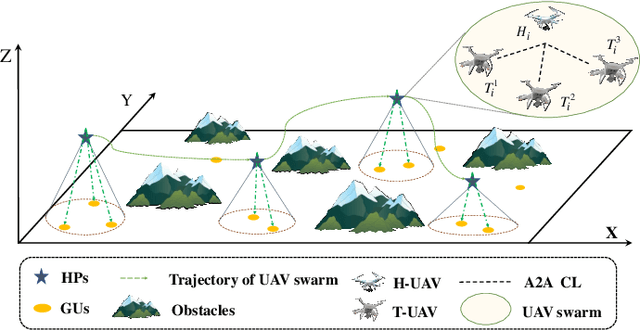

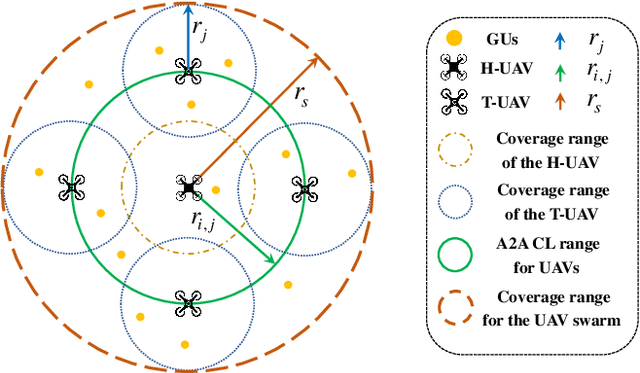

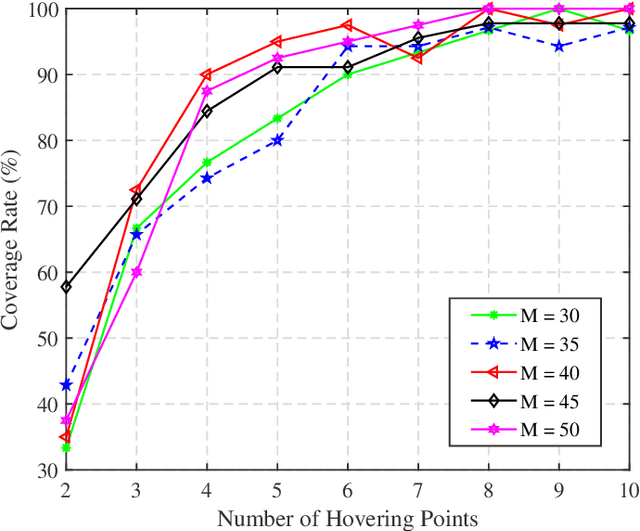

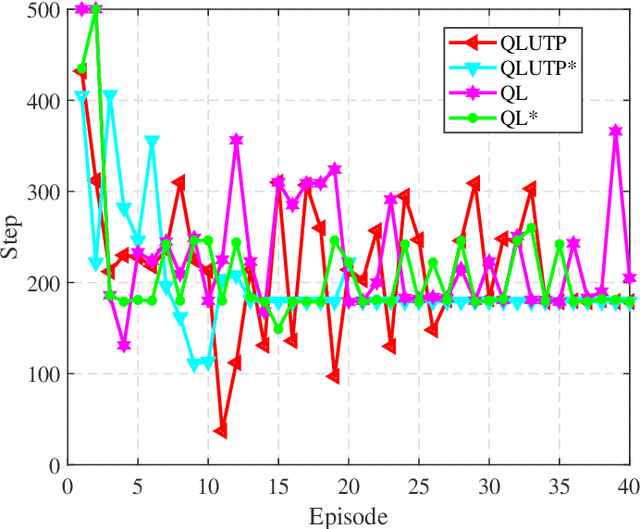

UAV Swarm Deployment and Trajectory for 3D Area Coverage via Reinforcement Learning

Sep 21, 2023 · Jia He, Ziye Jia, Chao Dong, Junyu Liu, Qihui Wu, Jingxian Liu

Unmanned aerial vehicles (UAVs) are recognized as promising technologies for area coverage due to their flexibility and adaptability. However, the ability of a single UAV is limited, whereas in large-scale three-dimensional (3D) scenarios, UAV swarms can establish seamless wireless communication services. Hence, in this work, we consider a scenario of UAV swarm deployment and trajectory to satisfy 3D coverage considering the effects of obstacles. In detail, we propose a hierarchical swarm framework to efficiently serve users over a large area. Then, the problem is formulated to minimize the total trajectory loss of the UAV swarm. However, the problem is intractable due to its non-convexity, and we decompose it into the smaller subproblems of user clustering, UAV swarm hovering point selection, and swarm trajectory determination. Moreover, we design a Q-learning based algorithm to improve solution efficiency. Finally, we conduct extensive simulations to verify the proposed mechanisms, and the designed algorithm outperforms the other methods compared.

Quantum Data Center: Perspectives

Sep 12, 2023 · Junyu Liu, Liang Jiang

A quantum version of data centers might be significant in the quantum era. In this paper, we introduce Quantum Data Center (QDC), a quantum version of existing classical data centers, with a specific emphasis on combining Quantum Random Access Memory (QRAM) and quantum networks. We argue that QDC will provide significant benefits to customers in terms of efficiency, security, and precision, and will be helpful for quantum computing, communication, and sensing. We investigate potential scientific and business opportunities along this novel research direction through hardware realization and possible specific applications. We show the possible impacts of QDCs in business and science, especially the machine learning and big data industries.

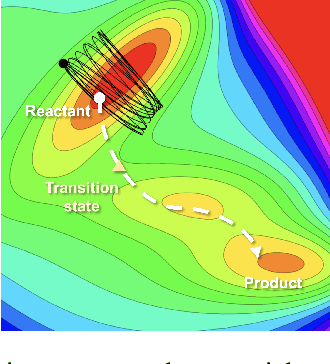

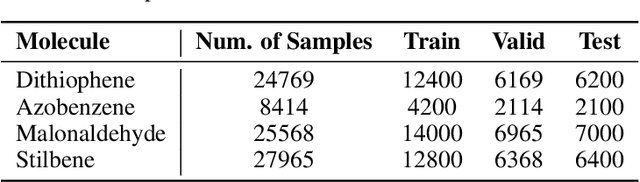

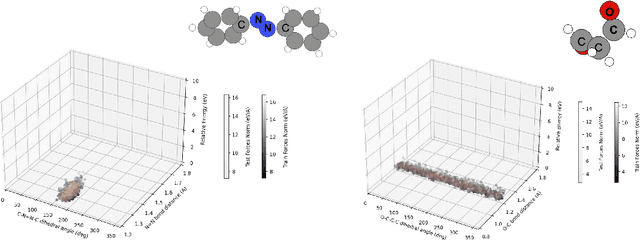

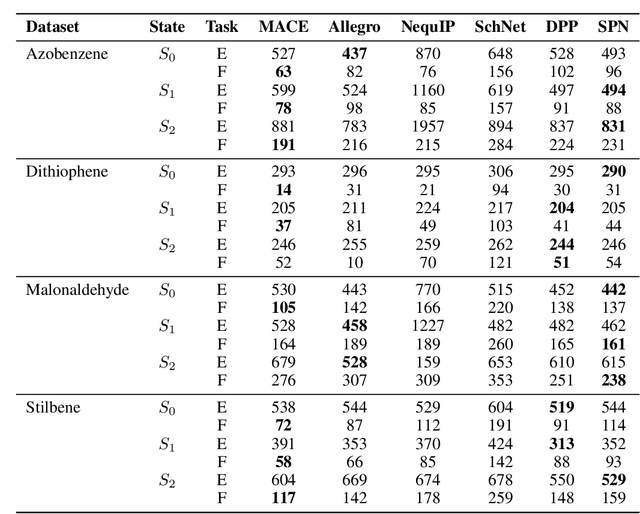

xxMD: Benchmarking Neural Force Fields Using Extended Dynamics beyond Equilibrium

Aug 30, 2023 · Zihan Pengmei, Yinan Shu, Junyu Liu

Neural force fields (NFFs) have gained prominence in computational chemistry as surrogate models, superseding quantum-chemistry calculations in ab initio molecular dynamics. The prevalent benchmark for NFFs has been the MD17 dataset and its subsequent extension. These datasets predominantly comprise geometries from the equilibrium region of the ground electronic state potential energy surface, sampling from direct adiabatic dynamics. However, many chemical reactions entail significant molecular deformations, notably bond breaking. We demonstrate the constrained distribution of internal coordinates and energies in the MD17 datasets, underscoring their inadequacy for representing systems undergoing chemical reactions. Addressing this sampling limitation, we introduce the xxMD (Extended Excited-state Molecular Dynamics) dataset, derived from non-adiabatic dynamics. This dataset encompasses energies and forces ascertained from both multireference wave function theory and density functional theory. Furthermore, its nuclear configuration spaces authentically depict chemical reactions, making xxMD a more chemically relevant dataset. Our re-assessment of equivariant models on the xxMD datasets reveals notably higher mean absolute errors than those reported for MD17 and its variants. This observation underscores the challenges faced in crafting a generalizable NFF model with extrapolation capability. Our proposed xxMD-CASSCF and xxMD-DFT datasets are available at https://github.com/zpengmei/xxMD.

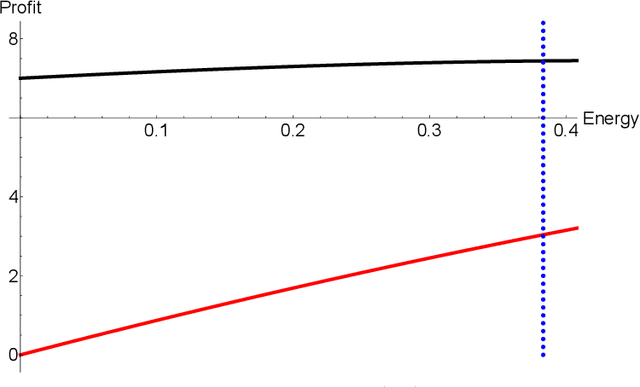

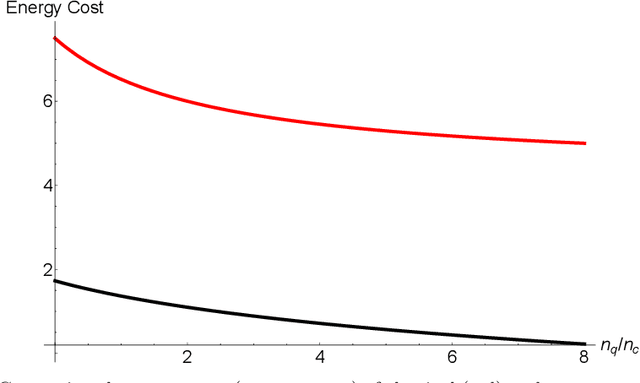

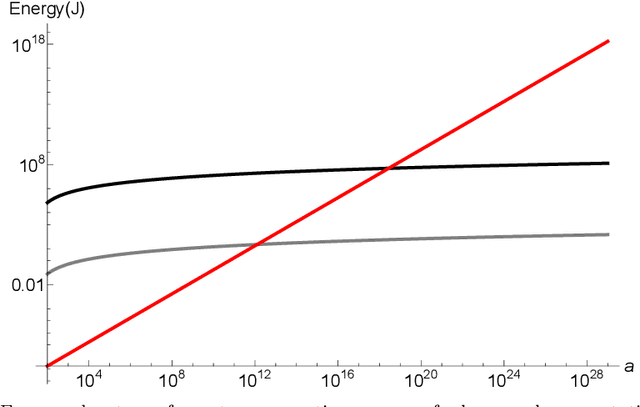

Potential Energy Advantage of Quantum Economy

Aug 15, 2023 · Junyu Liu, Hansheng Jiang, Zuo-Jun Max Shen

Energy cost is increasingly crucial in the modern computing industry with the wide deployment of large-scale machine learning models and language models. For the firms that provide computing services, low energy consumption is important both from the perspective of their own market growth and the government's regulations. In this paper, we study the energy benefits of quantum computing vis-a-vis classical computing. Deviating from the conventional notion of quantum advantage based solely on computational complexity, we redefine advantage in an energy efficiency context. Through a Cournot competition model constrained by energy usage, we demonstrate quantum computing firms can outperform classical counterparts in both profitability and energy efficiency at Nash equilibrium. Therefore quantum computing may represent a more sustainable pathway for the computing industry. Moreover, we discover that the energy benefits of quantum computing economies are contingent on large-scale computation. Based on real physical parameters, we further illustrate the scale of operation necessary for realizing this energy efficiency advantage.
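
For readers unfamiliar with Cournot competition, the following minimal sketch computes the Nash equilibrium of a textbook two-firm Cournot game with linear inverse demand $P = a - b(q_1 + q_2)$ and constant marginal costs. It is not the energy-constrained model of the paper: the marginal cost here merely stands in for a per-unit energy cost, and all names and numbers are hypothetical.

```python
def best_response(a, b, own_cost, rival_quantity):
    """Profit-maximizing quantity given the rival's output (floored at zero)."""
    return max(0.0, (a - own_cost - b * rival_quantity) / (2.0 * b))

def cournot_equilibrium(a, b, c1, c2):
    """Closed-form interior Nash equilibrium of the linear Cournot duopoly."""
    q1 = (a - 2.0 * c1 + c2) / (3.0 * b)
    q2 = (a - 2.0 * c2 + c1) / (3.0 * b)
    price = a - b * (q1 + q2)
    return q1, q2, price

# Firm 1 has the lower per-unit cost (e.g. a more energy-efficient technology).
a, b, c1, c2 = 100.0, 1.0, 10.0, 40.0
q1, q2, price = cournot_equilibrium(a, b, c1, c2)
profit1 = (price - c1) * q1
profit2 = (price - c2) * q2
print(q1, q2, price, profit1, profit2)

# At a Nash equilibrium each quantity is a best response to the other.
assert abs(best_response(a, b, c1, q2) - q1) < 1e-9
assert abs(best_response(a, b, c2, q1) - q2) < 1e-9
```

In this toy setting the lower-cost firm captures a larger market share and a larger profit at equilibrium, which is the qualitative mechanism behind an efficiency-driven advantage.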
