Weighing and Integrating Evidence for Stochastic Simulation in Bayesian Networks

Mar 27, 2013 · Robert Fung, Kuo-Chu Chang

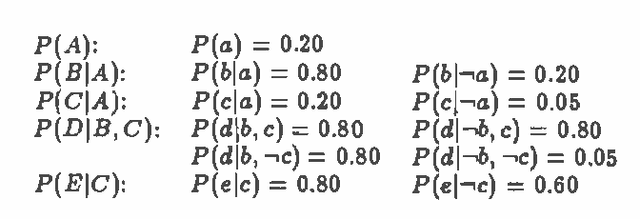

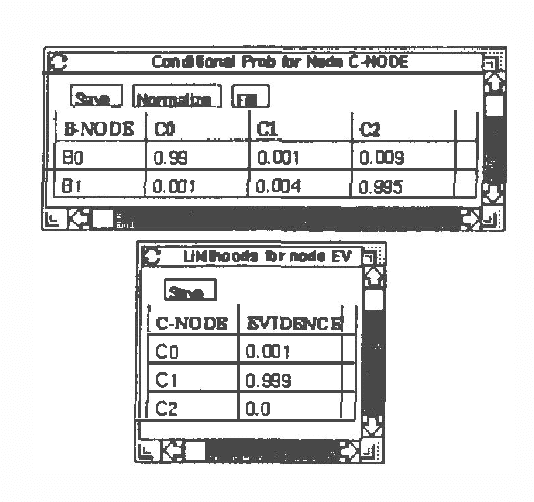

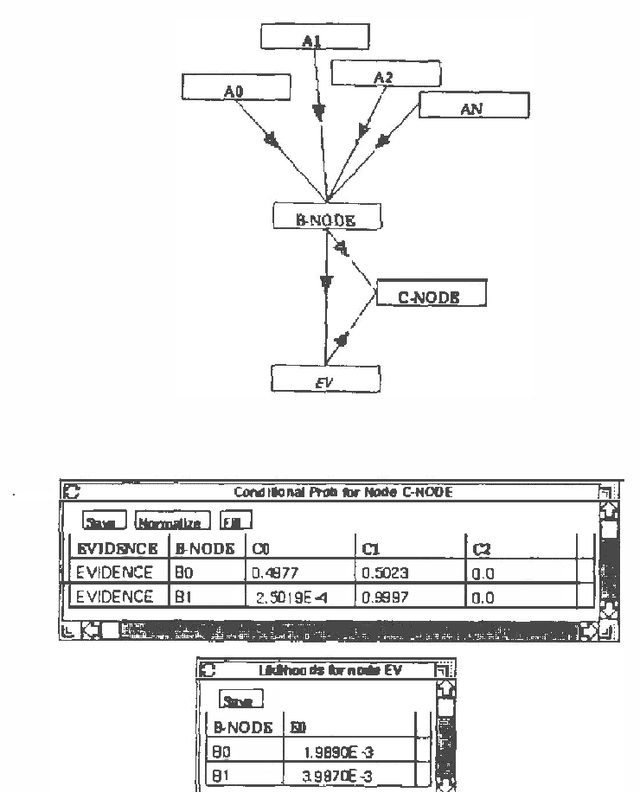

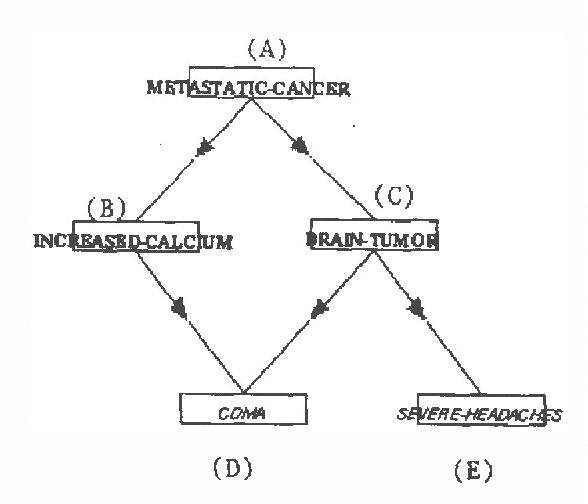

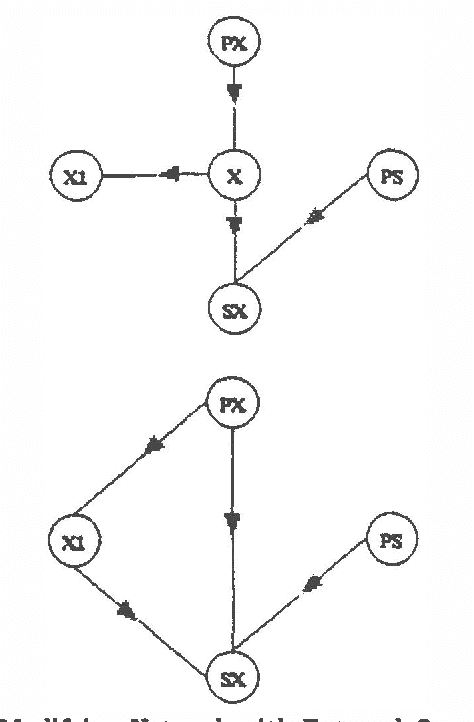

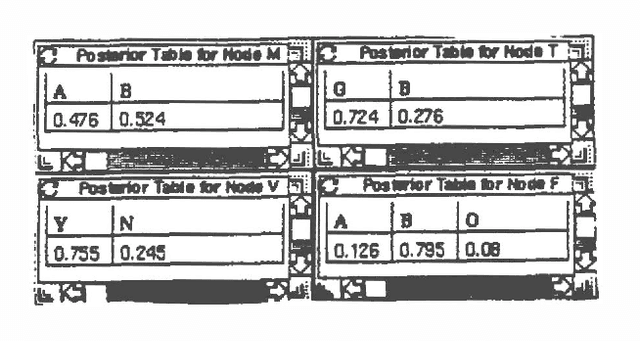

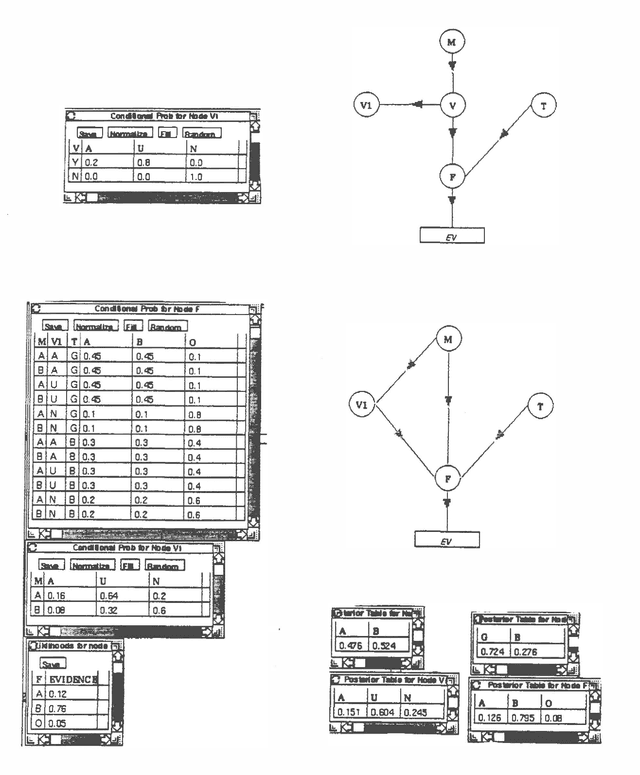

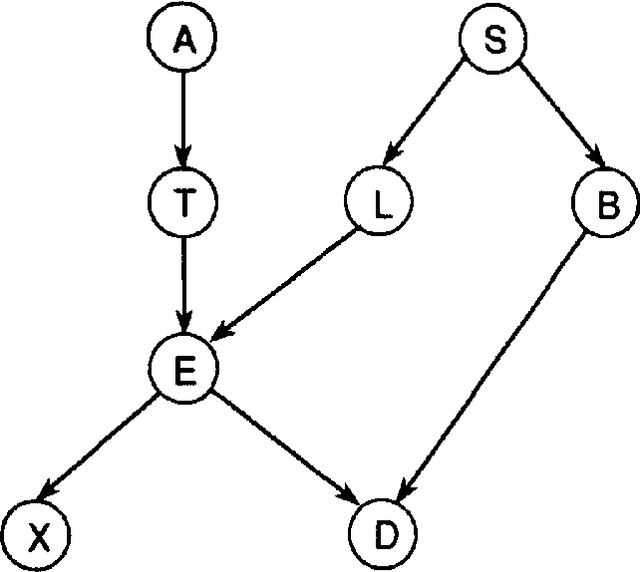

Stochastic simulation approaches perform probabilistic inference in Bayesian networks by estimating the probability of an event from the frequency with which the event occurs in a set of simulation trials. This paper describes the evidence weighting mechanism for augmenting the logic sampling stochastic simulation algorithm [Henrion, 1986]. Evidence weighting modifies the logic sampling algorithm by weighting each simulation trial by the likelihood of a network's evidence given the sampled state node values for that trial. We also describe an enhancement to the basic algorithm that uses the evidential integration technique [Chin and Cooper, 1987]. We compare the basic evidence weighting mechanism with the Markov blanket algorithm [Pearl, 1987], the logic sampling algorithm, and the evidential integration algorithm, analyzing the performance of the algorithms on a simple example network.
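The weighting idea in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the three-node network (Rain and Sprinkler as parents of WetGrass), its probabilities, and all function names are hypothetical and chosen only for illustration. Non-evidence nodes are sampled forward from their priors, and each trial is weighted by the likelihood of the observed evidence (WetGrass = true) given the sampled values.

```python
import random

# Hypothetical network (an assumption, not from the paper):
# Rain -> WetGrass <- Sprinkler, with WetGrass observed to be True.
P_RAIN = 0.2
P_SPRINKLER = 0.3
# P(WetGrass=True | Rain, Sprinkler)
P_WET = {
    (True, True): 0.99,
    (True, False): 0.90,
    (False, True): 0.80,
    (False, False): 0.05,
}


def evidence_weighting(n_trials, seed=0):
    """Estimate P(Rain=True | WetGrass=True) by weighting each trial."""
    rng = random.Random(seed)
    weighted_rain = 0.0
    total_weight = 0.0
    for _ in range(n_trials):
        # Sample the non-evidence nodes from their prior distributions.
        rain = rng.random() < P_RAIN
        sprinkler = rng.random() < P_SPRINKLER
        # Weight the trial by the likelihood of the evidence given the sample.
        weight = P_WET[(rain, sprinkler)]
        total_weight += weight
        if rain:
            weighted_rain += weight
    return weighted_rain / total_weight


def exact_posterior():
    """Exact P(Rain=True | WetGrass=True) by enumeration, for comparison."""
    numerator = 0.0
    denominator = 0.0
    for rain in (True, False):
        for sprinkler in (True, False):
            joint = (
                (P_RAIN if rain else 1 - P_RAIN)
                * (P_SPRINKLER if sprinkler else 1 - P_SPRINKLER)
                * P_WET[(rain, sprinkler)]
            )
            denominator += joint
            if rain:
                numerator += joint
    return numerator / denominator


if __name__ == "__main__":
    print("estimate:", evidence_weighting(20000))
    print("exact:   ", exact_posterior())
```

Unlike plain logic sampling, no trial is discarded for disagreeing with the evidence; trials that make the evidence unlikely simply contribute little weight.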

Refinement and Coarsening of Bayesian Networks

Mar 27, 2013 · Kuo-Chu Chang, Robert Fung

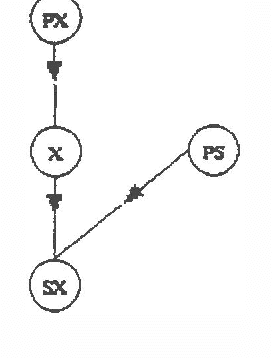

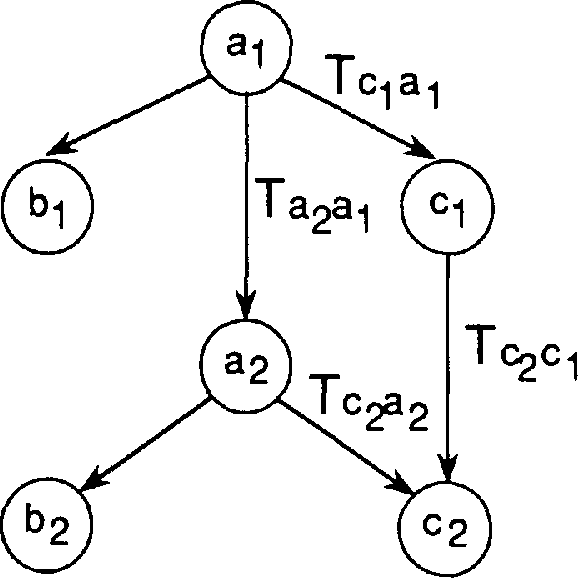

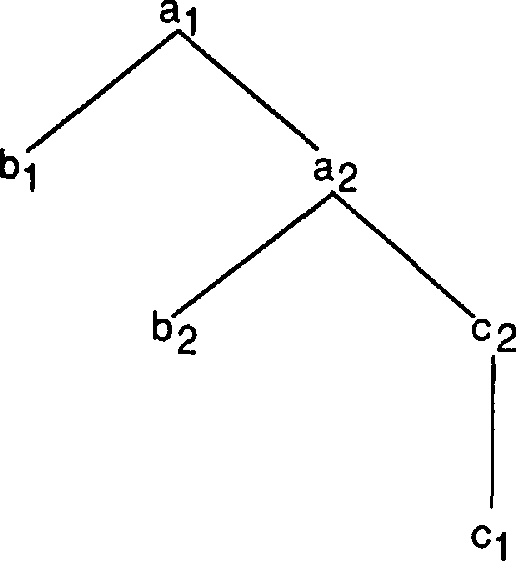

In almost all situation assessment problems, it is useful to dynamically contract and expand the states under consideration as assessment proceeds. Contraction is most often used to combine similar or low-probability events in order to reduce computation. Expansion is most often used to draw distinctions of interest among states with significant probability in order to improve the quality of the assessment. Although other uncertainty calculi, notably Dempster-Shafer [Shafer, 1976], have addressed these operations, there has not yet been an approach to refining and coarsening state spaces in Bayesian network technology. This paper presents two operations for refining and coarsening the state space in Bayesian networks. We also discuss their practical implications for knowledge acquisition.
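As a rough illustration of what contraction can look like, the sketch below merges two states of a root variable and averages the child's conditional probabilities, weighted by the merged states' prior mass, so that the child's marginal is unchanged. This is one simple way to coarsen a state space and is not claimed to be the operation defined in the paper; the variable names, states, and numbers are hypothetical, and the merged states are assumed to have nonzero total prior probability.

```python
def coarsen_root(prior, cpt, merge, new_name):
    """Merge the states in `merge` of a root variable X into `new_name`.

    prior: dict mapping each state of X to P(X = state)
    cpt:   dict mapping each state of X to a dict {y: P(Y = y | X = state)}
    Assumes the merged states have nonzero total prior mass.
    """
    mass = sum(prior[s] for s in merge)
    # The merged state receives the summed prior mass.
    new_prior = {s: p for s, p in prior.items() if s not in merge}
    new_prior[new_name] = mass
    # The child's row for the merged state is the prior-weighted average.
    new_cpt = {s: dict(row) for s, row in cpt.items() if s not in merge}
    child_states = list(next(iter(cpt.values())))
    new_cpt[new_name] = {
        y: sum(prior[s] * cpt[s][y] for s in merge) / mass
        for y in child_states
    }
    return new_prior, new_cpt


def child_marginal(prior, cpt):
    """Compute P(Y) = sum over x of P(x) * P(Y | x)."""
    out = {}
    for s, p in prior.items():
        for y, q in cpt[s].items():
            out[y] = out.get(y, 0.0) + p * q
    return out


# Hypothetical situation-assessment example: combine two hostile states.
prior = {"friend": 0.6, "hostile_air": 0.3, "hostile_sea": 0.1}
cpt = {
    "friend": {"alert": 0.1, "quiet": 0.9},
    "hostile_air": {"alert": 0.8, "quiet": 0.2},
    "hostile_sea": {"alert": 0.6, "quiet": 0.4},
}
coarse_prior, coarse_cpt = coarsen_root(
    prior, cpt, ["hostile_air", "hostile_sea"], "hostile"
)

if __name__ == "__main__":
    print(coarse_prior)
    print(coarse_cpt["hostile"])
    print(child_marginal(coarse_prior, coarse_cpt))
```

Refinement would run in the opposite direction: splitting a state requires new information about how its prior mass and conditional rows divide among the finer states, which is where knowledge acquisition enters.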

Symbolic Probabilistic Inference with Evidence Potential

Mar 20, 2013 · Kuo-Chu Chang, Robert Fung

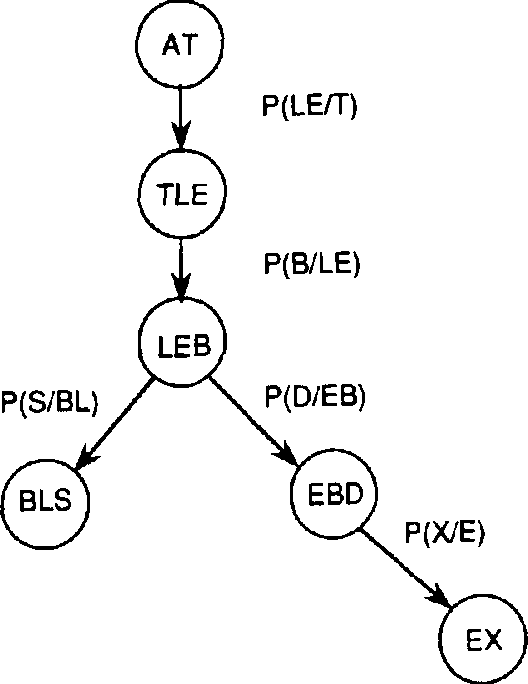

Recent research on the Symbolic Probabilistic Inference (SPI) algorithm [2] has focused attention on the importance of resolving general queries in Bayesian networks. SPI applies the concept of dependency-directed backward search to probabilistic inference, and is incremental with respect to both queries and observations. In response to this research, we have extended the evidence potential algorithm [3] with the same features. We call the extension symbolic evidence potential inference (SEPI). SEPI, like SPI, can handle generic queries and is incremental with respect to queries and observations. Whereas in SPI operations are performed on a search tree constructed from the nodes of the original network, in SEPI a clique-tree structure obtained from the evidence potential algorithm [3] is the basic framework for recursive query processing. In this paper, we describe the systematic query and caching procedure of SEPI. SEPI begins by finding a clique tree for a Bayesian network, which is the standard procedure of the evidence potential algorithm. With the clique tree, various probability distributions are computed and stored in each clique. This is the "pre-processing" step of SEPI. Once this step is done, queries can be computed. To process a query, a recursive process similar to the SPI algorithm is used. Queries are directed to the root clique and decomposed into queries for the clique's subtrees until a particular query can be answered at the clique to which it is directed. The algorithm and the computation are simple. The SEPI algorithm is presented in this paper along with several examples.

Symbolic Probabilistic Inference with Continuous Variables

Mar 20, 2013 · Kuo-Chu Chang, Robert Fung

Research on Symbolic Probabilistic Inference (SPI) [2, 3] has provided an algorithm for resolving general queries in Bayesian networks. SPI applies the concept of dependency-directed backward search to probabilistic inference, and is incremental with respect to both queries and observations. Unlike traditional Bayesian network inference algorithms, the SPI algorithm is goal-directed, performing only those calculations that are required to respond to queries. Research to date on SPI applies to Bayesian networks with discrete-valued variables and does not address variables with continuous values. In this paper, we extend the SPI algorithm to handle Bayesian networks made up of continuous variables where the relationships between the variables are restricted to be "linear Gaussian". We call this variation of the SPI algorithm SPI Continuous (SPIC). SPIC modifies the three basic SPI operations: multiplication, summation, and substitution. However, SPIC retains the framework of the SPI algorithm, namely building the search tree and the recursive query mechanism, and therefore retains the goal-directed and incremental features of SPI.
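To make the "linear Gaussian" restriction concrete, the following sketch works through the standard closed forms for a single parent-child pair, X ~ N(mu, var) and Y = a·X + b + noise with noise ~ N(0, noise_var). It only shows why such models stay tractable (integrating out X and substituting an observed value of Y both yield Gaussians); it is not the SPIC algorithm, and the function and parameter names are assumptions made for illustration.

```python
def marginalize_parent(mu, var, a, b, noise_var):
    """Integrate out X: return (mean, variance) of Y = a*X + b + noise."""
    return a * mu + b, a * a * var + noise_var


def condition_on_child(mu, var, a, b, noise_var, y_obs):
    """Substitute an observed Y = y_obs: return (mean, variance) of X given Y."""
    y_mean, y_var = marginalize_parent(mu, var, a, b, noise_var)
    # Gain: covariance of X and Y divided by the variance of Y.
    gain = a * var / y_var
    post_mean = mu + gain * (y_obs - y_mean)
    post_var = var - gain * a * var
    return post_mean, post_var


if __name__ == "__main__":
    # Hypothetical numbers: X ~ N(0, 1), Y = 2*X + 1 + N(0, 0.5).
    print(marginalize_parent(0.0, 1.0, 2.0, 1.0, 0.5))
    print(condition_on_child(0.0, 1.0, 2.0, 1.0, 0.5, y_obs=3.0))
```

Because every result is again described by a mean and a variance, the continuous analogue of summation (integration) never requires numerical quadrature in this restricted setting.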
