Addressing caveats of neural persistence with deep graph persistence

Jul 20, 2023 · Leander Girrbach, Anders Christensen, Ole Winther, Zeynep Akata, A. Sophia Koepke

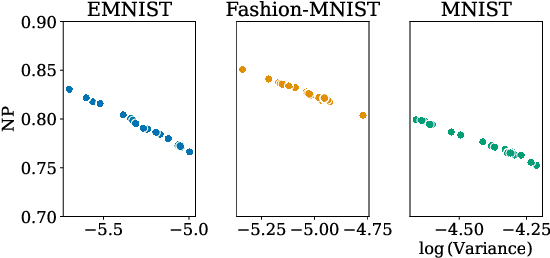

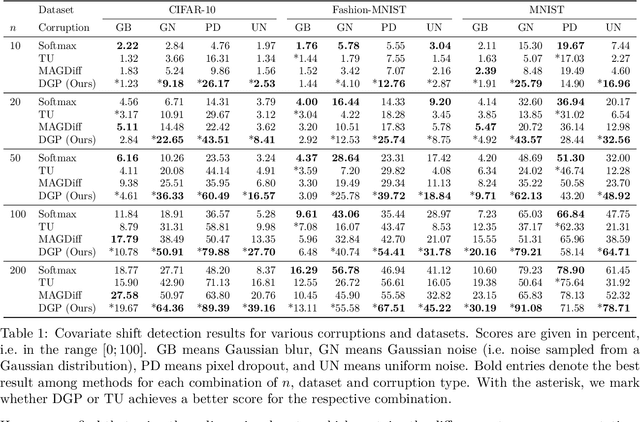

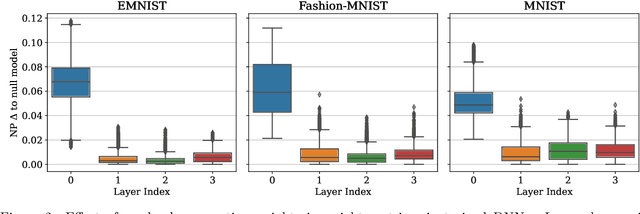

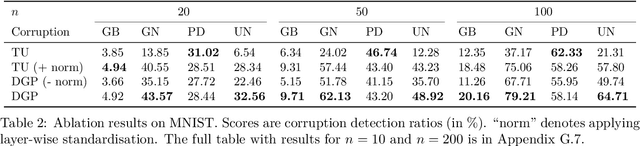

Neural Persistence is a prominent measure for quantifying neural network complexity, proposed in the emerging field of topological data analysis in deep learning. In this work, however, we find both theoretically and empirically that the variance of network weights and spatial concentration of large weights are the main factors that impact neural persistence. Whilst this captures useful information for linear classifiers, we find that no relevant spatial structure is present in later layers of deep neural networks, making neural persistence roughly equivalent to the variance of weights. Additionally, the proposed averaging procedure across layers for deep neural networks does not consider interaction between layers. Based on our analysis, we propose an extension of the filtration underlying neural persistence to the whole neural network instead of single layers, which is equivalent to calculating neural persistence on one particular matrix. This yields our deep graph persistence measure, which implicitly incorporates persistent paths through the network and alleviates variance-related issues through standardisation. Code is available at https://github.com/ExplainableML/Deep-Graph-Persistence.
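
To make the single-layer quantity discussed above concrete, here is a minimal sketch of how neural persistence for one weight matrix is commonly computed. It is an assumption-laden illustration, not the authors' implementation and not the deep graph persistence measure itself: it assumes the layer is treated as a bipartite graph, absolute weights are normalised by the largest absolute weight, and the persistence values are `1 - w` for the edges of a maximum spanning tree, summarised with a p-norm. The function name `neural_persistence` and the parameter `p` are hypothetical, and the component that never merges is ignored for simplicity.

```python
import numpy as np


def neural_persistence(weights: np.ndarray, p: float = 2.0) -> float:
    """Sketch of single-layer neural persistence (assumed definition, see lead-in)."""
    w = np.abs(weights).astype(float)
    w_max = w.max()
    if w_max == 0.0:
        return 0.0
    w = w / w_max  # normalise absolute weights to [0, 1]

    n_rows, n_cols = w.shape
    # Union-find over the n_rows + n_cols vertices of the bipartite graph.
    parent = list(range(n_rows + n_cols))

    def find(x: int) -> int:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    persistences = []
    # Visit edges from largest to smallest weight (Kruskal, maximum spanning tree).
    for flat in np.argsort(-w, axis=None):
        i, j = divmod(int(flat), n_cols)
        root_i, root_j = find(i), find(n_rows + j)
        if root_i != root_j:
            parent[root_i] = root_j
            # A component born at filtration value 1 merges at value w[i, j].
            persistences.append(1.0 - w[i, j])
            if len(persistences) == n_rows + n_cols - 1:
                break

    return float(np.linalg.norm(persistences, ord=p))
```

Because the weights are divided by their maximum before the filtration is built, the value in this sketch does not change when the whole matrix is rescaled by a positive constant; it depends only on how the normalised weights are spread and where the large ones sit.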

Word Segmentation and Morphological Parsing for Sanskrit

Jan 30, 2022 · Jingwen Li, Leander Girrbach

We describe our participation in the Word Segmentation and Morphological Parsing (WSMP) for Sanskrit hackathon. We approach the word segmentation task as a sequence labelling task by predicting edit operations from which segmentations are derived. We approach the morphological analysis task by predicting morphological tags and rules that transform inflected words into their corresponding stems. Also, we propose an end-to-end trainable pipeline model for joint segmentation and morphological analysis. Our model performed best in the joint segmentation and analysis subtask (80.018 F1 score) and performed second best in the individual subtasks (segmentation: 96.189 F1 score / analysis: 69.180 F1 score). Finally, we analyse errors made by our models and suggest future work and possible improvements regarding data and evaluation.
