Dynamic Change of Amplitude for OCT Functional Imaging

Nov 28, 2023 | Yang Jianlong, Zhang Haoran, Liu Chang, Gu Chengfu

Optical coherence tomography (OCT) non-destructively acquires cross-sectional information from samples at micrometer spatial resolution and plays an important role in ophthalmology and endovascular medicine. Measuring the OCT amplitude yields three-dimensional structural information about the sample, such as the layered structure of the retina, but is of limited use for functional information such as tissue specificity, blood flow, and mechanical properties. OCT functional imaging techniques based on other optical field properties, including phase, polarization state, and wavelength, have emerged, such as Doppler OCT, optical coherence elastography, polarization-sensitive OCT, and visible-light OCT. Among functional imaging techniques, those based on dynamic changes of amplitude offer significant advantages in robustness and complexity and have achieved significant clinical success in label-free blood flow imaging. In addition, dynamic light scattering OCT for 3D blood flow velocity measurement, dynamic OCT capable of displaying label-free tissue/cell specificity, and OCT thermometry for monitoring the temperature field of thermophysical treatments are at the frontier of OCT functional imaging. In this paper, the principles and applications of these technologies are summarized, the remaining technical challenges are analyzed, and future developments are envisioned.
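The abstract does not state a specific algorithm, so the following is only an illustrative sketch of one simple way to quantify dynamic changes of amplitude: the per-pixel variance of the amplitude across repeated frames acquired at the same position (often called speckle variance). The function name `amplitude_variance` and the nested-list input layout are assumptions made for this example, not taken from the paper.

```python
from statistics import pvariance


def amplitude_variance(frames):
    """Per-pixel population variance of amplitude across repeated frames.

    frames: list of N frames, each a list of rows of amplitude values,
    all acquired at the same position (hypothetical input layout).
    """
    n_rows = len(frames[0])
    n_cols = len(frames[0][0])
    return [
        [pvariance([frame[r][c] for frame in frames]) for c in range(n_cols)]
        for r in range(n_rows)
    ]


# Toy data: pixel (0, 0) is static, pixel (0, 1) fluctuates between frames.
frames = [
    [[1.0, 0.0]],
    [[1.0, 2.0]],
    [[1.0, 0.0]],
    [[1.0, 2.0]],
]
variance_map = amplitude_variance(frames)
```

In this toy case the static pixel has zero variance while the fluctuating pixel has nonzero variance, which is the kind of contrast that separates moving scatterers such as blood from static tissue.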

SikuGPT: A Generative Pre-trained Model for Intelligent Information Processing of Ancient Texts from the Perspective of Digital Humanities

Apr 16, 2023 | Liu Chang, Wang Dongbo, Zhao Zhixiao, Hu Die, Wu Mengcheng, Lin Litao, Shen Si, Li Bin, Liu Jiangfeng, Zhang Hai, Zhao Lianzheng

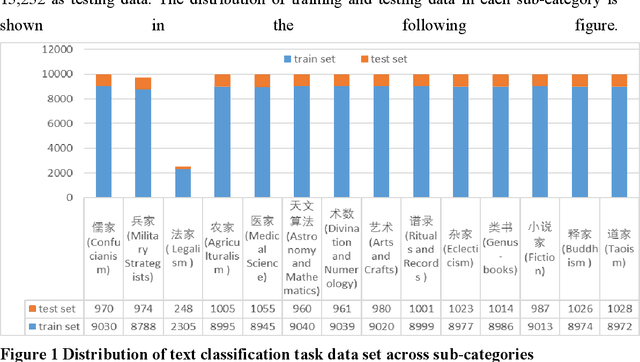

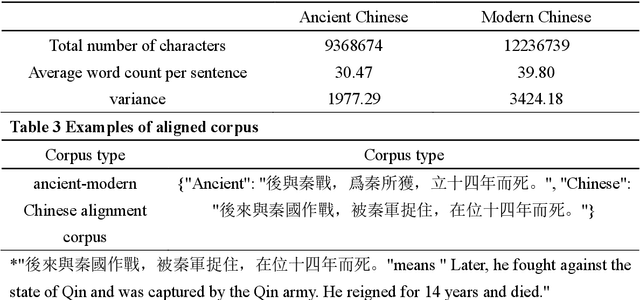

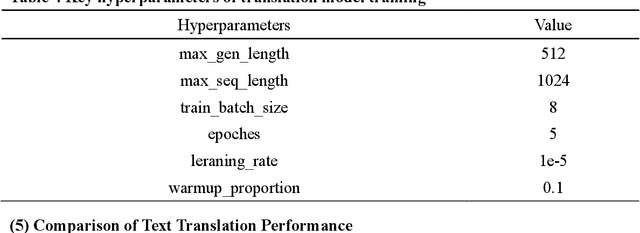

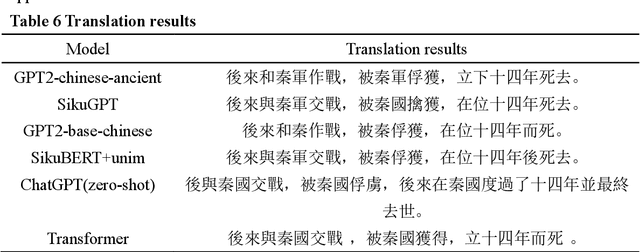

The rapid advance of artificial intelligence technology has fueled the growth of digital humanities research. Against this backdrop, research methods for the intelligent processing of ancient texts, a crucial component of digital humanities research, need to be transformed to adapt to new development trends in the wave of AIGC. In this study, we propose a GPT model called SikuGPT based on the corpus of Siku Quanshu. The model's performance in tasks such as intralingual translation and text classification exceeds that of other GPT-type models aimed at processing ancient texts. SikuGPT's ability to process traditional Chinese ancient texts can help promote the organization of ancient information and knowledge services, as well as the international dissemination of Chinese ancient culture.

PolSAR Image Classification Based on Dilated Convolution and Pixel-Refining Parallel Mapping Network in the Complex Domain

Sep 24, 2019 | Xiao Dongling, Liu Chang

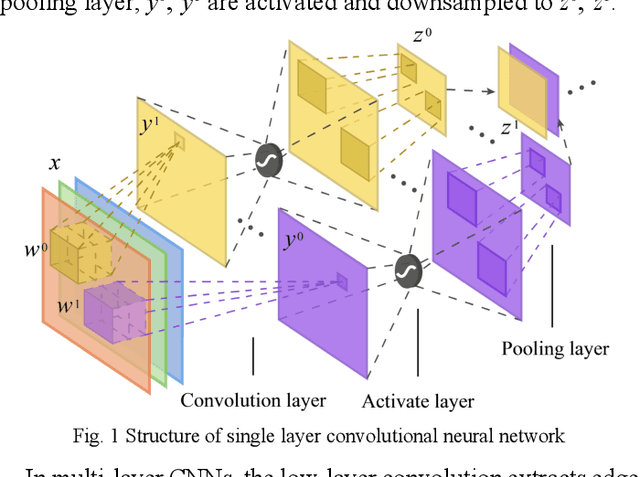

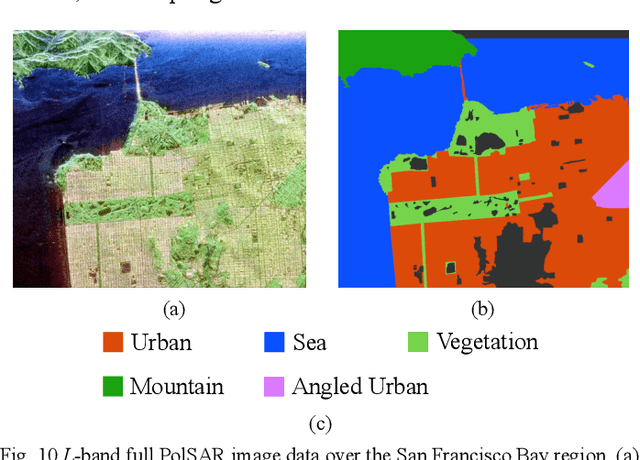

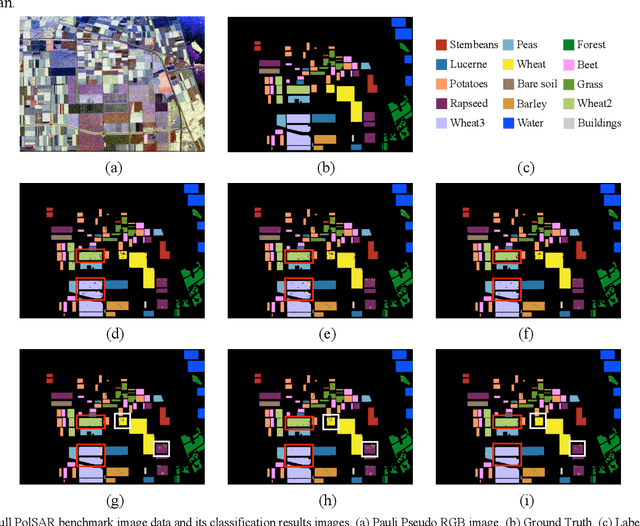

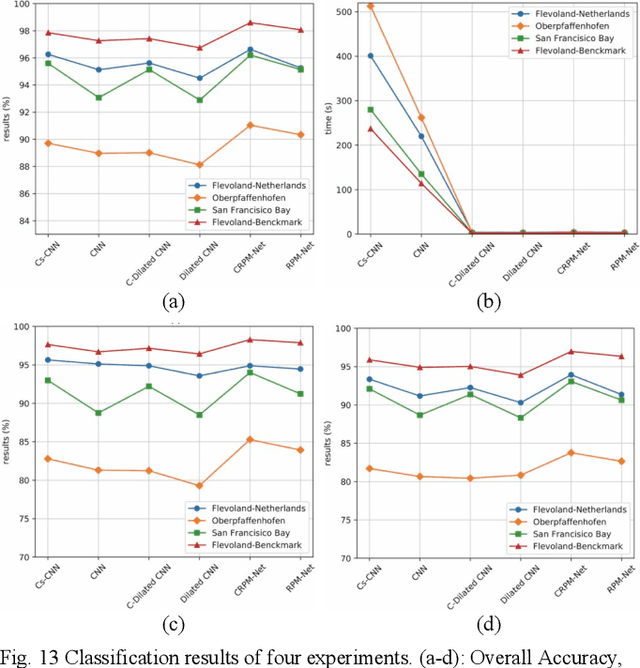

Efficient and accurate polarimetric synthetic aperture radar (PolSAR) image classification with a limited number of prior labels remains challenging. Among general supervised deep learning classification algorithms, the pixel-by-pixel algorithm achieves precise yet inefficient classification with a small number of labeled pixels, whereas the pixel mapping algorithm achieves efficient classification but produces rough edges and requires more prior labels. To balance efficiency, accuracy, and the number of prior labels, we propose a novel pixel-refining parallel mapping network in the complex domain, named CRPM-Net, and a corresponding training algorithm for PolSAR image classification. CRPM-Net consists of two parallel sub-networks: a) a transfer dilated convolution mapping network in the complex domain (C-Dilated CNN) activated by a complex cross-convolution neural network (Cs-CNN), which aims at precise localization, high efficiency, and full use of phase information; b) a complex-domain encoder-decoder network connected in parallel with the C-Dilated CNN, which extracts more contextual semantic features. Finally, we design a two-step algorithm that trains the Cs-CNN and CRPM-Net with a small number of labeled pixels and achieves higher accuracy by refining misclassified labeled pixels. We verify the proposed method on the AIRSAR and E-SAR datasets. The experimental results demonstrate that CRPM-Net achieves the best classification results and substantially outperforms several recent state-of-the-art approaches in both efficiency and accuracy for PolSAR image classification. The source code and trained models for CRPM-Net are available at: https://github.com/PROoshio/CRPM-Net.
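To make the idea of dilated convolution in the complex domain concrete, here is a minimal one-dimensional sketch in plain Python. It is not CRPM-Net's implementation (see the repository above for that); the function name `complex_dilated_conv1d` and the toy values are assumptions for illustration. As is conventional in deep learning, the "convolution" is computed as a cross-correlation without kernel flipping or conjugation.

```python
def complex_dilated_conv1d(signal, kernel, dilation=1):
    """Valid-mode 1D cross-correlation with dilated taps on complex inputs.

    Each kernel tap is spaced `dilation` samples apart, so the receptive
    field grows without adding weights. Complex multiplication mixes the
    real and imaginary parts, which keeps phase information in play.
    """
    span = (len(kernel) - 1) * dilation  # extent covered by the dilated kernel
    out = []
    for start in range(len(signal) - span):
        acc = 0j
        for k, weight in enumerate(kernel):
            acc += weight * signal[start + k * dilation]
        out.append(acc)
    return out


# Toy complex-valued signal and a two-tap complex kernel (hypothetical values).
signal = [1 + 1j, 2 + 0j, 0 + 1j, 1 - 1j, 3 + 0j]
kernel = [1 + 0j, 0 + 1j]
result = complex_dilated_conv1d(signal, kernel, dilation=2)
```

With `dilation=2`, the two taps read samples two positions apart, so a two-weight kernel covers three input samples and the five-sample input yields three outputs.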
