Machine Learning Techniques for Intrusion Detection

May 09, 2015 · Mahdi Zamani, Mahnush Movahedi

An Intrusion Detection System (IDS) is software that monitors a single computer or a network of computers for malicious activities (attacks) aimed at stealing or censoring information or corrupting network protocols. Most techniques used in today's IDSs cannot cope with the dynamic and complex nature of cyber attacks on computer networks. Efficient adaptive methods, such as various machine learning techniques, can therefore yield higher detection rates, lower false alarm rates, and reasonable computation and communication costs. In this paper, we study several such schemes and compare their performance. We divide the schemes into methods based on classical artificial intelligence (AI) and methods based on computational intelligence (CI). We explain how various characteristics of CI techniques can be used to build efficient IDSs.

A DDoS-Aware IDS Model Based on Danger Theory and Mobile Agents

Dec 28, 2014 · Mahdi Zamani, Mahnush Movahedi, Mohammad Ebadzadeh, Hossein Pedram

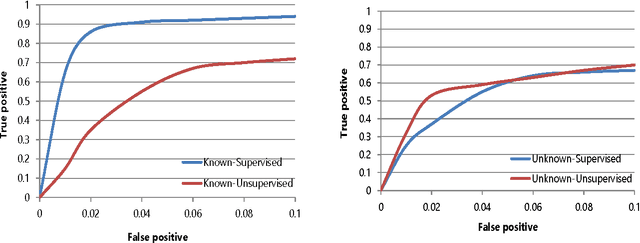

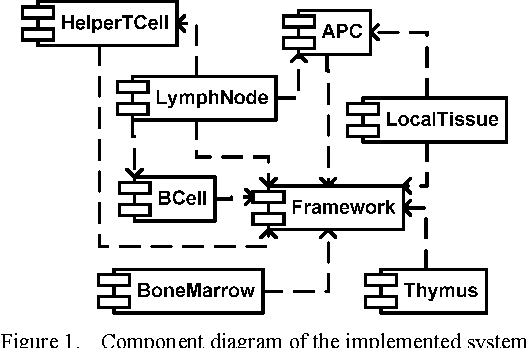

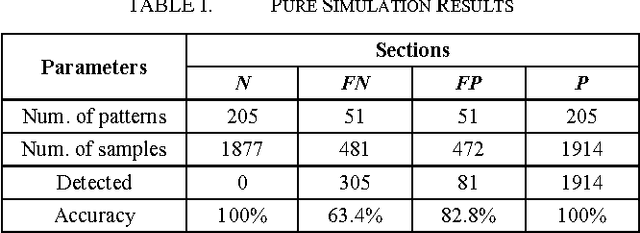

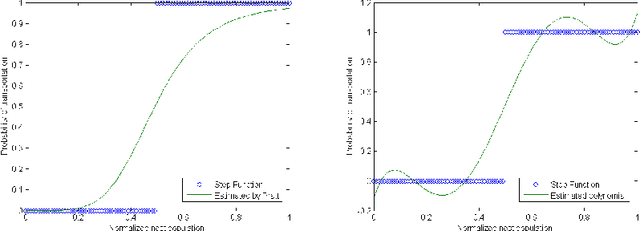

We propose an artificial immune model for intrusion detection in distributed systems based on a relatively recent theory in immunology called danger theory. According to danger theory, the immune response in natural systems results from sensing corruption as well as sensing unknown substances. In contrast, the traditional self-nonself discrimination theory states that an immune response is initiated only by sensing nonself (unknown) patterns. Danger theory solves many problems that the traditional model could explain only partially. Although the traditional model is simpler, these problems lead to high false positive rates in immune-inspired intrusion detection systems. We believe that using danger theory in a multi-agent environment that computationally emulates the behavior of natural immune systems is effective in reducing false positive rates. We first describe a simplified scenario of the immune response in natural systems based on danger theory and then convert it into a computational model in the form of a network protocol. In our protocol, we define several immune signals and model cell signaling via message passing between agents that emulate cells. Most messages include application-specific patterns that must be meaningfully extracted from various system properties. We show how to model these messages in practice through a case study on detecting distributed denial-of-service attacks in wireless sensor networks. We conduct a set of systematic experiments to find a set of performance metrics that can accurately distinguish malicious patterns. The results indicate that the system can efficiently detect malicious patterns with a high level of accuracy.

On Optimal Decision-Making in Ant Colonies

Aug 19, 2014 · Mahnush Movahedi, Mahdi Zamani

Colonies of ants can collectively choose the best of several nests, even when many of the active ants who organize the move visit only one site. Understanding such behavior can help us design efficient distributed decision-making algorithms. Marshall et al. propose a model for house-hunting in colonies of the ant Temnothorax albipennis. Unfortunately, their model does not achieve optimal decision-making, whereas laboratory experiments show that colonies usually do achieve optimality during the house-hunting process. In this paper, we argue that the model of Marshall et al. can achieve optimality if nest size information is included in its mathematical formulation. We use the lab results of Pratt et al. to redefine the differential equations of Marshall et al. Finally, we sketch our strategy for testing the optimality of the new model.
