Marina Petrova

Unknown Interference Modeling for Rate Adaptation in Cell-Free Massive MIMO Networks

Apr 18, 2024

Co-channel interference poses a challenge in any wireless communication network where the time-frequency resources are reused over different geographical areas. The interference is particularly diverse in cell-free massive multiple-input multiple-output (MIMO) networks, where a large number of user equipments (UEs) are multiplexed by a multitude of access points (APs) on the same time-frequency resources. For realistic and scalable network operation, only the interference from UEs belonging to the same serving cluster of APs can be estimated in real-time and suppressed by precoding/combining. As a result, the unknown interference arising from scheduling variations in neighboring clusters makes the rate adaptation hard and can lead to outages. This paper aims to model the unknown interference power in the uplink of a cell-free massive MIMO network. The results show that the proposed method effectively describes the distribution of the unknown interference power and provides a tool for rate adaptation with guaranteed target outage.

HBF MU-MIMO with Interference-Aware Beam Pair Link Allocation for Beyond-5G mm-Wave Networks

Feb 27, 2024

Hybrid beamforming (HBF) multi-user multiple-input multiple-output (MU-MIMO) is a key technology for unlocking the directional millimeter-wave (mm-wave) nature for spatial multiplexing beyond current codebook-based 5G-NR networks. In order to suppress co-scheduled users' interference, HBF MU-MIMO is predicated on having sufficient radio frequency chains and accurate channel state information (CSI); without them, imperfect interference cancellation leads to performance losses. In this work, we propose IABA, a 5G-NR standard-compliant beam pair link (BPL) allocation scheme for mitigating spatial interference in practical HBF MU-MIMO networks. IABA solves the network sum throughput optimization via either a distributed or a centralized BPL allocation using dedicated CSI reference signals for candidate BPL monitoring. We present a comprehensive study of practical multi-cell mm-wave networks and demonstrate that HBF MU-MIMO without interference-aware BPL allocation experiences strong residual interference which limits the achievable network performance. Our results show that IABA offers significant performance gains over the default interference-agnostic 5G-NR BPL allocation, and even allows HBF MU-MIMO to outperform the fully digital MU-MIMO baseline, by facilitating allocation of secondary BPLs other than the strongest BPL found during initial access. We further demonstrate the scalability of IABA with increased gNB antennas and densification for beyond-5G mm-wave networks.

Power Allocation Scheme for Device-Free Localization in 6G ISAC Networks

Feb 16, 2024

Integrated Sensing and Communication (ISAC) is considered one of the crucial technologies in the upcoming sixth-generation (6G) mobile communication systems that could facilitate ultra-precise positioning of passive and active targets and extremely high data rates through spectrum coexistence and hardware sharing. Such an ISAC network offers many benefits, but comes with the challenge of managing the mutual interference between the sensing and communication services. In this paper, we investigate the problem of localization accuracy in a monostatic ISAC network, taking into account inter-BS interference due to communication signals and sensing echoes, and self-interference at the respective BS. We propose a power allocation algorithm that minimizes the maximum range estimation error among the BSs subject to a minimum communication signal-to-interference-plus-noise ratio (SINR) and a total power constraint. Our numerical results demonstrate the effectiveness of the proposed algorithm and indicate that it can enhance the sensing performance when the self-interference is effectively suppressed.

Cooperative Multi-Monostatic Sensing for Object Localization in 6G Networks

Nov 24, 2023

Enabling passive sensing of the environment using cellular base stations (BSs) will be one of the disruptive features of the sixth-generation (6G) networks. However, accurate localization and positioning of objects are challenging to achieve as multipath significantly degrades the reflected echoes. Existing localization techniques perform well when a large bandwidth is available but perform poorly in bandwidth-limited scenarios. To alleviate this problem, in this work, we introduce a 5G New Radio (NR)-based cooperative multi-monostatic sensing framework for passive target localization that operates in the Frequency Range 1 (FR1) band. We propose a novel fusion-based estimation process that can mitigate the effect of multipath by assigning appropriate weight to the range estimation of each BS. Extensive simulation results using ray-tracing demonstrate the efficacy of the proposed multi-sensing framework in bandwidth-limited scenarios.
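As a rough illustration of weighted range fusion, the sketch below locates a target by minimizing a weighted sum of squared range residuals over a coarse 2D grid. It is not the estimator proposed in the paper: the function name `fuse_ranges`, the grid search, the search area, and the way the weights are supplied are all assumptions made for illustration. The abstract does not say how the weights are chosen, so here they are plain inputs.

```python
import math


def fuse_ranges(bs_positions, ranges, weights,
                area=(0.0, 0.0, 10.0, 10.0), step=0.5):
    """Return the grid point minimizing sum_i w_i * (||p - b_i|| - r_i)^2.

    bs_positions: list of (x, y) base-station coordinates (hypothetical layout).
    ranges:       monostatic range estimate of each BS to the target.
    weights:      non-negative weight per BS; a low weight discounts a range
                  that is suspected to be corrupted by multipath.
    area:         (x_min, y_min, x_max, y_max) of the search region (assumed).
    step:         grid spacing, which bounds the resolution of the estimate.
    """
    x_min, y_min, x_max, y_max = area
    nx = int(round((x_max - x_min) / step))
    ny = int(round((y_max - y_min) / step))
    best_point, best_cost = None, math.inf
    for i in range(nx + 1):
        for j in range(ny + 1):
            x, y = x_min + i * step, y_min + j * step
            cost = sum(
                w * (math.hypot(x - bx, y - by) - r) ** 2
                for (bx, by), r, w in zip(bs_positions, ranges, weights)
            )
            if cost < best_cost:
                best_point, best_cost = (x, y), cost
    return best_point


if __name__ == "__main__":
    bs = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
    target = (3.0, 4.0)
    clean = [math.hypot(target[0] - bx, target[1] - by) for bx, by in bs]
    biased = clean[:2] + [clean[2] + 3.0]  # third range inflated, e.g. by multipath
    print(fuse_ranges(bs, biased, [1.0, 1.0, 1.0]))  # equal weights
    print(fuse_ranges(bs, biased, [1.0, 1.0, 0.0]))  # biased range discounted
```

In this toy setup, setting the weight of the inflated range to zero removes its influence on the cost, which is the intuition behind down-weighting multipath-affected estimates.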

Communication-Efficient Orchestrations for URLLC Service via Hierarchical Reinforcement Learning

Jul 25, 2023

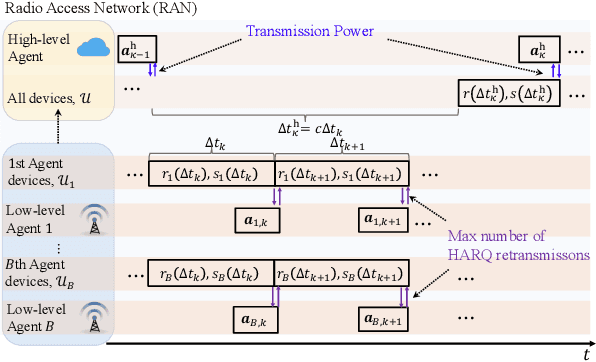

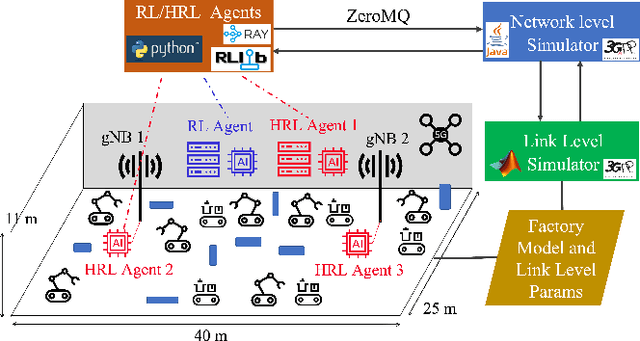

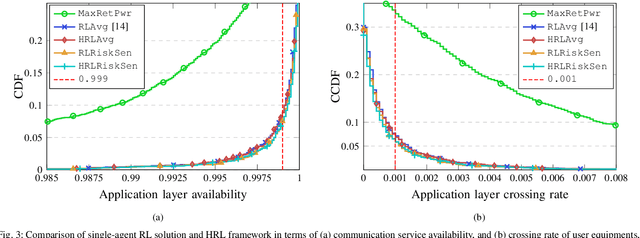

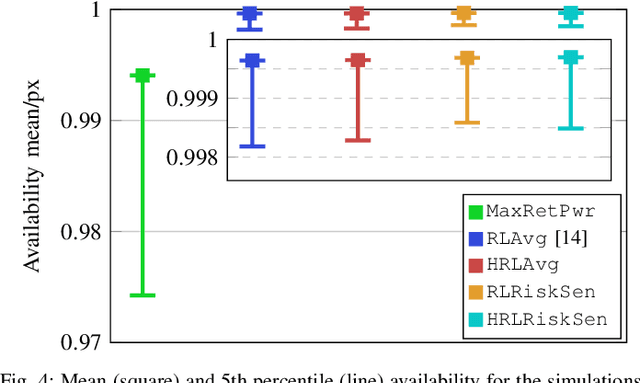

Ultra-reliable low latency communications (URLLC) service is envisioned to enable use cases with strict reliability and latency requirements in 5G. One approach for enabling URLLC services is to leverage Reinforcement Learning (RL) to efficiently allocate wireless resources. However, with conventional RL methods, the decision variables (though being deployed at various network layers) are typically optimized in the same control loop, leading to significant practical limitations on the control loop's delay as well as excessive signaling and energy consumption. In this paper, we propose a multi-agent Hierarchical RL (HRL) framework that enables the implementation of multi-level policies with different control loop timescales. Agents with faster control loops are deployed closer to the base station, while the ones with slower control loops are at the edge or closer to the core network, providing high-level guidelines for low-level actions. On a use case from the prior art, we use our HRL framework to optimize the maximum number of retransmissions and the transmission power of industrial devices. Our extensive simulation results on the factory automation scenario show that the HRL framework achieves better performance than the baseline single-agent RL method, with significantly less signaling overhead and delay.

A Bayesian Approach to Characterize Unknown Interference Power in Wireless Networks

May 12, 2023

The existence of unknown interference is a prevalent problem in wireless communication networks. Especially in multi-user multiple-input multiple-output (MIMO) networks, where a large number of user equipments are served on the same time-frequency resources, the outage performance may be dominated by the unknown interference arising from scheduling variations in neighboring cells. In this letter, we propose a Bayesian method for modeling the unknown interference power in the uplink of a cellular network. Numerical results show that our method accurately models the distribution of the unknown interference power and can be effectively used for rate adaptation with guaranteed target outage performance.
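To make the link between an interference-power distribution and rate adaptation with a target outage concrete, the sketch below picks a rate from a set of interference-power samples. It is only an illustration: it uses an empirical quantile as a stand-in for the Bayesian model described in the letter, and the names `empirical_quantile` and `select_rate`, the Shannon-rate mapping, and all numeric values are assumptions.

```python
import math


def empirical_quantile(samples, q):
    """Smallest sample x such that at least a fraction q of samples are <= x."""
    if not samples:
        raise ValueError("samples must be non-empty")
    ordered = sorted(samples)
    k = max(math.ceil(q * len(ordered)) - 1, 0)
    return ordered[k]


def select_rate(signal_power, noise_power, interference_samples, target_outage):
    """Pick a rate (bit/s/Hz) that the observed interference would support
    in at least a fraction (1 - target_outage) of the samples.

    The rate is computed for the (1 - target_outage) quantile of the unknown
    interference power, so an outage occurs only when the realized
    interference exceeds that quantile.
    """
    worst_case = empirical_quantile(interference_samples, 1.0 - target_outage)
    sinr = signal_power / (worst_case + noise_power)
    return math.log2(1.0 + sinr)


if __name__ == "__main__":
    # Hypothetical linear-scale powers, for illustration only.
    samples = [0.0, 1.0, 2.0, 3.0]
    print(select_rate(signal_power=9.0, noise_power=1.0,
                      interference_samples=samples, target_outage=0.25))
```

A model-based approach such as the one in the letter would replace the empirical quantile with a quantile of the fitted distribution, which matters most when few samples are available or the target outage is very small.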

Device Selection for the Coexistence of URLLC and Distributed Learning Services

Dec 22, 2022

Recent advances in distributed artificial intelligence (AI) have led to tremendous breakthroughs in various communication services, from fault-tolerant factory automation to smart cities. When distributed learning is run over a set of wirelessly connected devices, random channel fluctuations and the incumbent services running on the same network impact the performance of both distributed learning and the coexisting service. In this paper, we investigate a mixed service scenario where distributed AI workflow and ultra-reliable low latency communication (URLLC) services run concurrently over a network. We propose a risk sensitivity-based formulation for device selection to minimize the AI training delays during its convergence period while ensuring that the operational requirements of the URLLC service are met. To address this challenging coexistence problem, we transform it into a deep reinforcement learning problem and solve it via a framework based on the soft actor-critic algorithm. We evaluate our solution with a realistic and 3GPP-compliant simulator for factory automation use cases. Our simulation results confirm that our solution can significantly decrease the training delay of the distributed AI service while keeping the URLLC availability above its required threshold and close to the scenario where URLLC solely consumes all network resources.

Soft Handover Procedures in mmWave Cell-Free Massive MIMO Networks

Sep 06, 2022

This paper considers a mmWave cell-free massive MIMO (multiple-input multiple-output) network composed of a large number of geographically distributed access points (APs) simultaneously serving multiple user equipments (UEs) via coherent joint transmission. We address UE mobility in the downlink (DL) with imperfect channel state information (CSI) and pilot training. Aiming at extending traditional handover concepts to the challenging AP-UE association strategies of cell-free networks, distributed algorithms for joint pilot assignment and cluster formation are proposed in a dynamic environment considering UE mobility. The algorithms provide a systematic procedure for initial access and for updating the serving set of APs and the pilot sequence assigned to each UE. The principal goal is to limit the necessary number of AP and pilot changes, while limiting computational complexity. The performance of the system is evaluated, with maximum ratio and regularized zero-forcing precoding, in terms of spectral efficiency (SE). The results show that our proposed distributed algorithms effectively identify the essential AP-UE association refinements. They also provide a significantly lower average number of pilot changes compared to an ultra-dense network (UDN). Moreover, we develop an improved pilot assignment procedure that facilitates massive access to the network in highly loaded scenarios.

Interplay between Distributed AI Workflow and URLLC

Aug 02, 2022

Distributed artificial intelligence (AI) has recently accomplished tremendous breakthroughs in various communication services, ranging from fault-tolerant factory automation to smart cities. When distributed learning is run over a set of wirelessly connected devices, random channel fluctuations and the incumbent services simultaneously running on the same network affect the performance of distributed learning. In this paper, we investigate the interplay between distributed AI workflow and ultra-reliable low latency communication (URLLC) services running concurrently over a network. Using 3GPP-compliant simulations in a factory automation use case, we show the impact of various distributed AI settings (e.g., model size and the number of participating devices) on the convergence time of distributed AI and the application layer performance of URLLC. Unless we leverage the existing 5G-NR quality of service handling mechanisms to separate the traffic from the two services, our simulation results show that the impact of distributed AI on the availability of the URLLC devices is significant. Moreover, with proper setting of distributed AI (e.g., proper user selection), we can substantially reduce network resource utilization, leading to lower latency for distributed AI and higher availability for the URLLC users. Our results provide important insights for future 6G and AI standardization.

Low Complexity Beam Searching Using Trajectory Information in Mobile Millimeter-wave Networks

Jun 06, 2022

Millimeter-wave and terahertz systems rely on beamforming/combining codebooks for finding the best beam directions during the initial access procedure. Existing approaches suffer from large codebook sizes and high beam searching overhead in the presence of mobile devices. To alleviate this problem, we suggest utilizing the similarity of the channel in adjacent locations to divide the UE trajectory into a set of separate regions and maintain a set of candidate paths for each region in a database. In this paper, we show the tradeoff between the number of regions and the signalling overhead, i.e., a higher number of regions corresponds to a higher signal-to-noise ratio (SNR) but also a higher signalling overhead for the database. We then propose an optimization framework to find the minimum number of regions based on the trajectory of a mobile device. Using realistic ray tracing datasets, we demonstrate that the proposed method reduces the beam searching complexity and latency while providing high SNR.
