Interpretable Network Visualizations: A Human-in-the-Loop Approach for Post-hoc Explainability of CNN-based Image Classification

May 06, 2024 · Matteo Bianchi, Antonio De Santis, Andrea Tocchetti, Marco Brambilla

Transparency and explainability in image classification are essential for establishing trust in machine learning models and detecting biases and errors. State-of-the-art explainability methods generate saliency maps to show where a specific class is identified, without providing a detailed explanation of the model's decision process. Striving to address such a need, we introduce a post-hoc method that explains the entire feature extraction process of a Convolutional Neural Network. These explanations include a layer-wise representation of the features the model extracts from the input. Such features are represented as saliency maps generated by clustering and merging similar feature maps, to which we associate a weight derived by generalizing Grad-CAM for the proposed methodology. To further enhance these explanations, we include a set of textual labels collected through a gamified crowdsourcing activity and processed using NLP techniques and Sentence-BERT. Finally, we show an approach to generate global explanations by aggregating labels across multiple images.
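The clustering, merging, and weighting steps mentioned in this abstract can be illustrated with a small sketch. The abstract does not say which clustering algorithm or weight formula the authors use, so everything below is an assumption made for illustration: feature maps are flat lists of activations, grouping is a greedy cosine-similarity pass with a hypothetical `threshold`, merging is a per-pixel average, and the cluster weight is the sum of Grad-CAM-style channel weights (the spatial mean of each channel's gradient).

```python
import math


def cosine(a, b):
    """Cosine similarity between two flattened feature maps."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def cluster_feature_maps(maps, threshold=0.9):
    """Greedy grouping (assumed, not the paper's algorithm): a map joins the
    first cluster whose first member is at least `threshold` similar to it."""
    clusters = []  # each cluster is a list of channel indices
    for i, m in enumerate(maps):
        for members in clusters:
            if cosine(maps[members[0]], m) >= threshold:
                members.append(i)
                break
        else:
            clusters.append([i])
    return clusters


def merge_cluster(maps, members):
    """Merge a cluster into one saliency map by per-pixel averaging."""
    n = len(members)
    return [sum(maps[i][p] for i in members) / n for p in range(len(maps[0]))]


def cluster_weight(grads, members):
    """Assumed generalization of Grad-CAM: sum, over the cluster's channels,
    of the spatial mean of the class-score gradient for that channel."""
    return sum(sum(grads[i]) / len(grads[i]) for i in members)


# Toy data: three 2x2 feature maps (flattened) and their gradients.
maps = [[1.0, 0.0, 0.0, 0.0], [0.9, 0.1, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]]
grads = [[0.2] * 4, [0.4] * 4, [-0.1] * 4]
clusters = cluster_feature_maps(maps)
saliency = [merge_cluster(maps, c) for c in clusters]
weights = [cluster_weight(grads, c) for c in clusters]
```

In this toy case the first two maps are grouped together and the third forms its own cluster, so each layer is summarized by two weighted saliency maps instead of three raw channels.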

Tactile Perception in Upper Limb Prostheses: Mechanical Characterization, Human Experiments, and Computational Findings

Feb 20, 2024 · Alessia Silvia Ivani, Manuel G. Catalano, Giorgio Grioli, Matteo Bianchi, Yon Visell, Antonio Bicchi

Our research investigates vibrotactile perception in four prosthetic hands with distinct kinematics and mechanical characteristics. We found that rigid and simple socket-based prosthetic devices can transmit tactile information and surprisingly enable users to identify the stimulated finger with high reliability. This ability decreases with more advanced prosthetic hands with additional articulations and softer mechanics. We conducted experiments to understand the underlying mechanisms. We assessed a prosthetic user's ability to discriminate finger contacts based on vibrations transmitted through the four prosthetic hands. We also performed numerical and mechanical vibration tests on the prostheses and used a machine learning classifier to identify the contacted finger. Our results show that simpler and rigid prosthetic hands facilitate contact discrimination (for instance, a user of a purely cosmetic hand can distinguish a contact on the index finger from other fingers with 83% accuracy), but all tested hands, including soft advanced ones, performed above chance level. Despite advanced hands reducing vibration transmission, a machine learning algorithm still exceeded human performance in discriminating finger contacts. These findings suggest the potential for enhancing vibrotactile feedback in advanced prosthetic hands and lay the groundwork for future integration of such feedback in prosthetic devices.

VIBES: Vibro-Inertial Bionic Enhancement System in a Prosthetic Socket

Dec 20, 2023 · Alessia Silvia Ivani, Federica Barontini, Manuel G. Catalano, Giorgio Grioli, Matteo Bianchi, Antonio Bicchi

The use of vibrotactile feedback is of growing interest in the field of prosthetics, but few devices fully integrate this technology in the prosthesis to transmit high-frequency contact information (such as surface roughness and first contact) arising from the interaction of the prosthetic device with external items. This study describes a wearable vibrotactile system for high-frequency tactile information embedded in the prosthetic socket. The device consists of two compact planar vibrotactile actuators in direct contact with the user's skin to transmit tactile cues. These stimuli are directly related to the acceleration profiles recorded with two IMUs placed on the distal phalanx of a soft under-actuated robotic prosthesis (SoftHand Pro). We characterized the system from a psychophysical point of view with fifteen able-bodied participants by computing participants' Just Noticeable Difference (JND) related to the discrimination of vibrotactile cues delivered on the index finger, which are associated with the exploration of different sandpapers. Moreover, we performed a pilot experiment with one SoftHand Pro prosthesis user by designing a task, i.e. Active Texture Identification, to investigate whether our feedback could enhance users' roughness discrimination. Results indicate that the device can effectively convey contact and texture cues, which users can readily detect and distinguish.

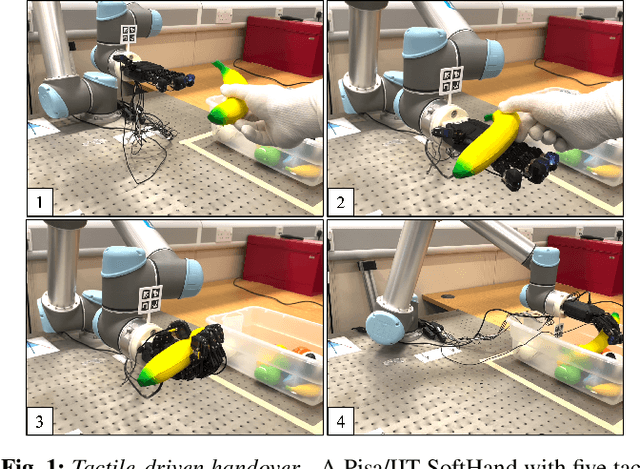

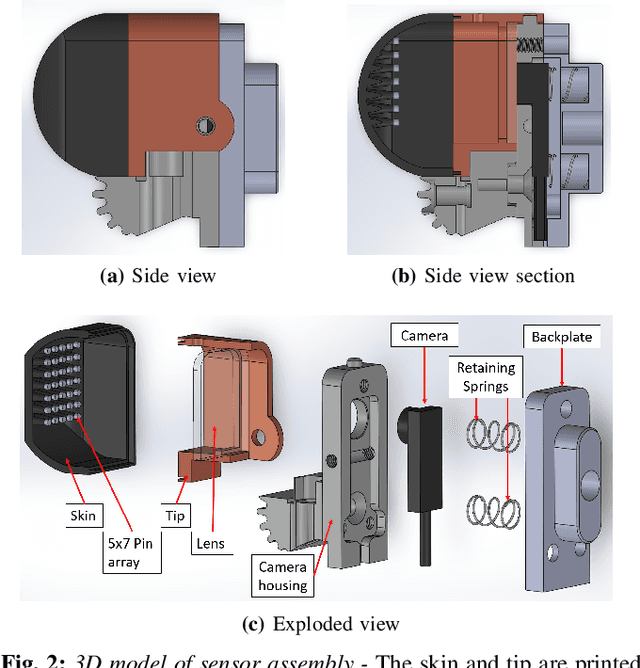

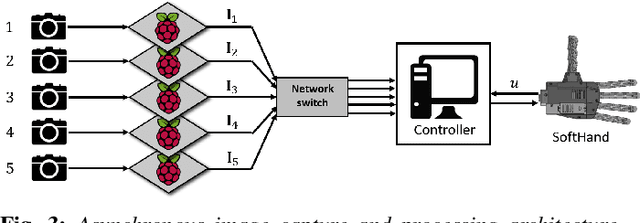

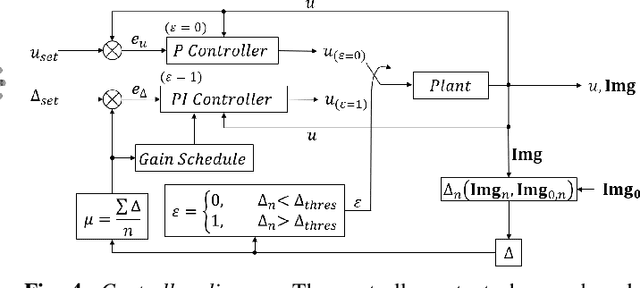

Tactile-Driven Gentle Grasping for Human-Robot Collaborative Tasks

Mar 16, 2023 · Christopher J. Ford, Haoran Li, John Lloyd, Manuel G. Catalano, Matteo Bianchi, Efi Psomopoulou, Nathan F. Lepora

This paper presents a control scheme for force-sensitive, gentle grasping with a Pisa/IIT anthropomorphic SoftHand equipped with a miniaturised version of the TacTip optical tactile sensor on all five fingertips. The tactile sensors provide high-resolution information about a grasp and how the fingers interact with held objects. We first describe a series of hardware developments for performing asynchronous sensor data acquisition and processing, resulting in a control loop fast enough for real-time grasp control. We then develop a novel grasp controller that uses tactile feedback from all five fingertip sensors simultaneously to gently and stably grasp 43 objects of varying geometry and stiffness, which is then applied to a human-to-robot handover task. These developments open the door to more advanced manipulation with underactuated hands via fast reflexive control using high-resolution tactile sensing.

Performance Analysis of Vibrotactile and Slide-and-Squeeze Haptic Feedback Devices for Limbs Postural Adjustment

Jul 08, 2022 · Marta Lorenzini, Simone Ciotti, Juan M. Gandarias, Simone Fani, Matteo Bianchi, Arash Ajoudani

Recurrent or sustained awkward body postures are among the most frequently cited risk factors for the development of work-related musculoskeletal disorders (MSDs). To prevent workers from adopting harmful configurations and to guide them toward more ergonomic ones, wearable haptic devices may be the ideal solution. In this paper, a vibrotactile unit, called ErgoTac, and a slide-and-squeeze unit, called CUFF, were evaluated in a limb postural correction setting. Their capability of providing single-joint (shoulder or knee) and multi-joint (shoulder and knee at once) guidance was compared in twelve healthy subjects, using quantitative task-related metrics and subjective quantitative evaluation. An integrated environment was also built to ease communication and data sharing between the involved sensor and feedback systems. Results show good acceptability and intuitiveness for both devices. ErgoTac appeared to be the suitable feedback device for the shoulder, while the CUFF may be the effective solution for the knee. This comparative study, although preliminary, was propaedeutic to the potential integration of the two devices for effective whole-body postural corrections, with the aim of developing a feedback and assistive apparatus to increase workers' awareness of risky working conditions and therefore to prevent MSDs.

BRL/Pisa/IIT SoftHand: A Low-cost, 3D-Printed, Underactuated, Tendon-Driven Hand with Soft and Adaptive Synergies

Jun 25, 2022 · Haoran Li, Christopher J. Ford, Matteo Bianchi, Manuel G. Catalano, Efi Psomopoulou, Nathan F. Lepora

This paper introduces the BRL/Pisa/IIT (BPI) SoftHand: a single actuator-driven, low-cost, 3D-printed, tendon-driven, underactuated robot hand that can be used to perform a range of grasping tasks. Based on the adaptive synergies of the Pisa/IIT SoftHand, we design a new joint system and tendon routing to facilitate the inclusion of both soft and adaptive synergies, which helps us balance durability, affordability and grasping performance of the hand. The focus of this work is on the design, simulation, synergies and grasping tests of this SoftHand. The novel phalanges are designed and printed based on linkages, gear pairs and geometric restraint mechanisms, and can be applied to most tendon-driven robotic hands. We show that the robot hand can successfully grasp and lift various target objects and adapt to hold complex geometric shapes, reflecting the successful adoption of the soft and adaptive synergies. We intend to open-source the design of the hand so that it can be built cheaply on a home 3D-printer. For more detail: https://sites.google.com/view/bpi-softhandtactile-group-bri/brlpisaiit-softhand-design

Towards integrated tactile sensorimotor control in anthropomorphic soft robotic hands

Feb 05, 2021 · Nathan F. Lepora, Andrew Stinchcombe, Chris Ford, Alfred Brown, John Lloyd, Manuel G. Catalano, Matteo Bianchi, Benjamin Ward-Cherrier

In this work, we report on the integrated sensorimotor control of the Pisa/IIT SoftHand, an anthropomorphic soft robot hand designed around the principle of adaptive synergies, with the BRL tactile fingertip (TacTip), a soft biomimetic optical tactile sensor based on the human sense of touch. Our focus is how a sense of touch can be used to control an anthropomorphic hand with one degree of actuation, based on an integration that respects the hand's mechanical functionality. We consider: (i) closed-loop tactile control to establish a light contact on an unknown held object, based on the structural similarity with an undeformed tactile image; and (ii) controlling the estimated pose of an edge feature of a held object, using a convolutional neural network approach developed for controlling other sensors in the TacTip family. Overall, this gives a foundation to endow soft robotic hands with human-like touch, with implications for autonomous grasping, manipulation, human-robot interaction and prosthetics. Supplemental video: https://youtu.be/ndsxj659bkQ
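Item (i) above, closing the hand until the tactile image differs from the undeformed reference by a set amount, can be sketched as a simple feedback loop. This is a minimal illustration under stated assumptions, not the authors' controller: images are flat lists of intensities in [0, 1], similarity is a single-window SSIM with the conventional constants for that range, and `closure_step`, `target`, and `gain` are hypothetical names for a proportional update of the hand's single actuation command.

```python
def global_ssim(x, y, c1=1e-4, c2=9e-4):
    """Single-window structural similarity between two equally sized images.

    c1 = (0.01)^2 and c2 = (0.03)^2 are the usual stabilizing constants
    for intensities scaled to [0, 1].
    """
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    vx = sum((a - mx) ** 2 for a in x) / n
    vy = sum((b - my) ** 2 for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    num = (2 * mx * my + c1) * (2 * cov + c2)
    den = (mx * mx + my * my + c1) * (vx + vy + c2)
    return num / den


def closure_step(command, ssim, target=0.8, gain=0.5):
    """Proportional update of the closure command, clamped to [0, 1].

    While the image still looks undeformed (ssim above target) the hand
    closes further; once contact deforms it past the target, it backs off.
    """
    return min(1.0, max(0.0, command + gain * (ssim - target)))


# Toy 2x2 images: the undeformed reference and a shifted (deformed) pattern.
reference = [0.1, 0.5, 0.9, 0.5]
deformed = [0.5, 0.9, 0.5, 0.1]
no_contact = global_ssim(reference, reference)
in_contact = global_ssim(reference, deformed)
next_command = closure_step(0.2, no_contact)
```

Using a similarity score rather than a force estimate keeps the loop independent of any sensor calibration, which is one plausible reason to prefer it for establishing a light contact on an unknown object.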

Latest Datasets and Technologies Presented in the Workshop on Grasping and Manipulation Datasets

Sep 08, 2016 · Matteo Bianchi, Jeannette Bohg, Yu Sun

This paper reports the activities and outcomes of the Workshop on Grasping and Manipulation Datasets, which was organized under the International Conference on Robotics and Automation (ICRA) 2016. The half-day workshop comprised nine invited talks, 12 interactive presentations, and one panel discussion with ten panelists. This paper summarizes all the talks and presentations and recaps what was discussed in the panel session. This summary serves as a review of recent developments in data collection in grasping and manipulation. Many of the presentations describe ongoing efforts or explorations that could be completed and fully available in a year or two. The panel discussion not only commented on the current approaches but also indicated new directions and focuses. The workshop clearly displayed the importance of quality datasets in robotics, and in robotic grasping and manipulation in particular. Hopefully the workshop will motivate larger efforts to create big datasets comparable to those in other communities such as computer vision.

Synergy-Based Hand Pose Sensing: Optimal Glove Design

Jun 04, 2012 · Matteo Bianchi, Paolo Salaris, Antonio Bicchi

In this paper we study the problem of improving the performance of human hand pose sensing devices by exploiting knowledge of how humans most frequently use their hands in grasping tasks. In a companion paper we studied the problem of maximizing the reconstruction accuracy of the hand pose from partial and noisy data provided by any given pose sensing device (a sensorized "glove"), taking into account statistical a priori information. In this paper we consider the dual problem of how to design pose sensing devices, i.e. how and where to place sensors on a glove, to get maximum information about the actual hand posture. We study the continuous case, where individual sensing elements in the glove measure a linear combination of joint angles; the discrete case, where each measurement corresponds to a single joint angle; and the most general hybrid case, where both continuous and discrete sensing elements are available. The objective is to provide, for given a priori information and a fixed number of measurements, the optimal design that minimizes the average reconstruction error. Solutions relying on the geometrical synergy definition as well as gradient flow-based techniques are provided. Simulations of reconstruction performance show the effectiveness of the proposed optimal design.

Synergy-based Hand Pose Sensing: Reconstruction Enhancement

Jun 04, 2012 · Matteo Bianchi, Paolo Salaris, Antonio Bicchi

Low-cost sensing gloves for posture reconstruction provide measurements that are limited in several respects. They are generated through an imperfectly known model, are subject to noise, and may be fewer than the number of Degrees of Freedom (DoFs) of the hand. Under these conditions, direct reconstruction of the hand posture is an ill-posed problem, and performance can be very poor. This paper examines the problem of estimating the posture of a human hand using (low-cost) sensing gloves, and how to improve their performance by exploiting knowledge of how humans most frequently use their hands. To increase the accuracy of pose reconstruction without modifying the glove hardware (hence at essentially no extra cost), we propose to collect, organize, and exploit information on the probabilistic distribution of human hand poses in common tasks. We discuss how a database of such a priori information can be built, represented in a hierarchy of correlation patterns or postural synergies, and fused with glove data in a consistent way, so as to provide a good hand pose reconstruction in spite of insufficient and inaccurate sensing data. Simulations and experiments on a low-cost glove are reported which demonstrate the effectiveness of the proposed techniques.
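To make the idea of fusing prior pose statistics with glove data concrete, here is a minimal sketch of a linear minimum-variance update for a single sensor. It assumes a Gaussian prior over joint angles (mean `mu`, covariance `P`) and one noisy sensor reading `y = h · x + noise` with noise variance `r`. These modelling choices, and the function name `fuse_measurement`, are illustrative assumptions and are not taken from the abstract.

```python
def fuse_measurement(mu, P, h, y, r):
    """Update a prior pose estimate with one scalar glove measurement.

    mu : prior mean joint angles (list of floats)
    P  : prior covariance of joint angles (list of lists)
    h  : sensor row, i.e. the linear combination of joints it measures
    y  : sensor reading
    r  : sensor noise variance
    Returns mu + P h (h^T P h + r)^-1 (y - h^T mu).
    """
    n = len(mu)
    Ph = [sum(P[i][j] * h[j] for j in range(n)) for i in range(n)]
    s = sum(h[i] * Ph[i] for i in range(n)) + r  # innovation variance
    innovation = y - sum(h[i] * mu[i] for i in range(n))
    return [mu[i] + Ph[i] * innovation / s for i in range(n)]


# Two joints whose angles are positively correlated in the prior;
# the glove measures only the first joint.
mu = [0.0, 0.0]
P = [[1.0, 0.5], [0.5, 1.0]]
noiseless = fuse_measurement(mu, P, h=[1.0, 0.0], y=1.0, r=0.0)
noisy = fuse_measurement(mu, P, h=[1.0, 0.0], y=1.0, r=1.0)
```

Although only the first joint is sensed, the prior correlation moves the estimate of the second joint as well, which is how the prior compensates for having fewer measurements than degrees of freedom. A larger noise variance pulls both estimates back toward the prior mean.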
