Michael Röder

BENGAL: An Automatic Benchmark Generator for Entity Recognition and Linking

Nov 01, 2018

The manual creation of gold standards for named entity recognition and entity linking is time- and resource-intensive. Moreover, recent works show that such gold standards contain a large proportion of mistakes in addition to being difficult to maintain. We hence present BENGAL, a novel approach for the automatic generation of such gold standards as a complement to manually created benchmarks. The main advantage of our benchmarks is that they can be readily generated at any time. They are also cost-effective while being guaranteed to be free of annotation errors. We compare the performance of 11 tools on benchmarks in English generated by BENGAL and on 16 benchmarks created manually. We show that our approach can be ported easily across languages by presenting results achieved by 4 tools on both Brazilian Portuguese and Spanish. Overall, our results suggest that our automatic benchmark generation approach can create varied benchmarks that have characteristics similar to those of existing benchmarks. Our approach is open-source. Our experimental results are available at http://faturl.com/bengalexpinlg and the code at https://github.com/dice-group/BENGAL.

Entity Linking in 40 Languages using MAG

May 29, 2018

A plethora of Entity Linking (EL) approaches has recently been developed. While many claim to be multilingual, the MAG (Multilingual AGDISTIS) approach has recently been shown to outperform the state of the art in multilingual EL on 7 languages. With this demo, we extend MAG to support EL in 40 different languages, including in particular low-resource languages such as Ukrainian, Greek, Hungarian, Croatian, Portuguese, Japanese and Korean. Our demo relies on online web services that allow easy access to our entity linking approaches and can disambiguate against DBpedia and Wikidata. During the demo, we will show how to use MAG by means of POST requests as well as through its user-friendly web interface. All data used in the demo is available at https://hobbitdata.informatik.uni-leipzig.de/agdistis/

Using Multi-Label Classification for Improved Question Answering

Oct 24, 2017

A plethora of diverse approaches for question answering over RDF data have been developed in recent years. While the accuracy of these systems has increased significantly over time, most systems still focus on particular types of questions or particular challenges in question answering. What is a curse for single systems is a blessing for the combination of these systems. We show in this paper how machine learning techniques can be applied to create a more accurate question answering metasystem by reusing existing systems. In particular, we develop a multi-label classification-based metasystem for question answering over 6 existing systems using an innovative set of 14 question features. The metasystem outperforms the best single system by 14% F-measure on the recent QALD-6 benchmark. Furthermore, we analyzed the influence and correlation of the underlying features on the metasystem quality.

MAG: A Multilingual, Knowledge-base Agnostic and Deterministic Entity Linking Approach

Oct 17, 2017

Entity linking has recently been the subject of a significant body of research. Currently, the best performing approaches rely on trained monolingual models. Porting these approaches to other languages is consequently a difficult endeavor, as it requires corresponding training data and retraining of the models. We address this drawback by presenting a novel multilingual, knowledge-base agnostic and deterministic approach to entity linking, dubbed MAG. MAG is based on a combination of context-based retrieval on structured knowledge bases and graph algorithms. We evaluate MAG on 23 data sets and in 7 languages. Our results show that the best approach trained on English datasets (PBOH) achieves a micro F-measure that is up to 4 times worse on datasets in other languages. MAG, on the other hand, achieves state-of-the-art performance on English datasets and reaches a micro F-measure that is up to 0.6 higher than that of PBOH on non-English languages.
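
The micro F-measure mentioned in this abstract pools true positives, false positives and false negatives over all documents before computing precision and recall. The following is a minimal sketch of that computation, assuming each annotation is a (start, end, URI) tuple and that only exact matches count. The function name and the data representation are illustrative assumptions and are not taken from MAG or from the evaluation setup used in the paper.

```python
from typing import List, Set, Tuple

# Hypothetical representation: (start offset, end offset, entity URI)
Annotation = Tuple[int, int, str]


def micro_f1(gold_docs: List[Set[Annotation]],
             predicted_docs: List[Set[Annotation]]) -> float:
    """Micro-averaged F1: counts are summed over all documents first."""
    tp = fp = fn = 0
    for gold, predicted in zip(gold_docs, predicted_docs):
        tp += len(gold & predicted)   # annotations found and correct
        fp += len(predicted - gold)   # annotations found but wrong
        fn += len(gold - predicted)   # gold annotations that were missed
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


gold = [{(0, 5, "A"), (10, 15, "B")}, {(0, 3, "C")}]
pred = [{(0, 5, "A"), (10, 15, "X")}, {(0, 3, "C")}]
score = micro_f1(gold, pred)
```

Because the counts are pooled, documents with many annotations weigh more heavily than in a macro average, which averages per-document scores instead.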

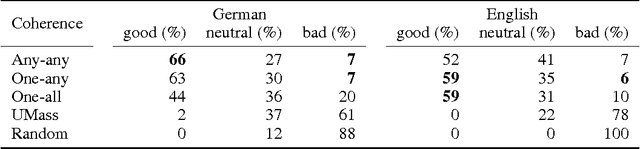

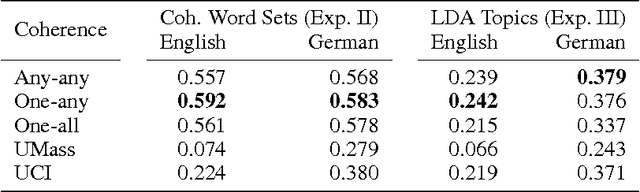

Evaluating topic coherence measures

Mar 25, 2014

Topic models extract representative word sets, called topics, from word counts in documents without requiring any semantic annotations. Because topics are not guaranteed to be well interpretable, coherence measures have been proposed to distinguish between good and bad topics. Studies of topic coherence so far are limited to measures that score pairs of individual words. For the first time, we include coherence measures from scientific philosophy that score pairs of more complex word subsets and apply them to topic scoring.
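
To make the notion of a measure that scores pairs of individual words concrete, the sketch below averages the pointwise mutual information (PMI) of all word pairs in a topic, using document-level co-occurrence as the probability estimate. This is one common pairwise baseline and is only an illustration: it is not one of the subset-based measures the abstract introduces, and the function name, the smoothing constant `eps` and the toy corpus are assumptions made for this example.

```python
import math
from itertools import combinations
from typing import List, Sequence


def pairwise_pmi_coherence(topic_words: Sequence[str],
                           documents: List[List[str]],
                           eps: float = 1e-12) -> float:
    """Average PMI over all word pairs of a topic (higher = more coherent)."""
    if len(topic_words) < 2 or not documents:
        raise ValueError("need at least two topic words and one document")
    doc_sets = [set(doc) for doc in documents]
    n_docs = len(doc_sets)

    def prob(*words: str) -> float:
        # Fraction of documents that contain all of the given words
        return sum(1 for d in doc_sets if all(w in d for w in words)) / n_docs

    scores = []
    for w1, w2 in combinations(topic_words, 2):
        joint = prob(w1, w2)
        # eps avoids log(0) and division by zero for unseen words or pairs
        scores.append(math.log((joint + eps) / (prob(w1) * prob(w2) + eps)))
    return sum(scores) / len(scores)


docs = [["cat", "dog"], ["cat", "dog"], ["car", "road"], ["car", "road"]]
coherent = pairwise_pmi_coherence(["cat", "dog"], docs)
mixed = pairwise_pmi_coherence(["cat", "car"], docs)
```

On this toy corpus the topic whose words always co-occur scores higher than the topic whose words never appear together.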
