Decoding AI: The inside story of data analysis in ChatGPT

Apr 12, 2024 · Ozan Evkaya, Miguel de Carvalho

As a result of recent advances in generative AI, the field of Data Science is undergoing substantial change. This review critically examines the Data Analysis (DA) capabilities of ChatGPT, assessing its performance across a wide range of tasks. While DA provides researchers and practitioners with unprecedented analytical capabilities, it is far from perfect, and it is important to recognize and address its limitations.

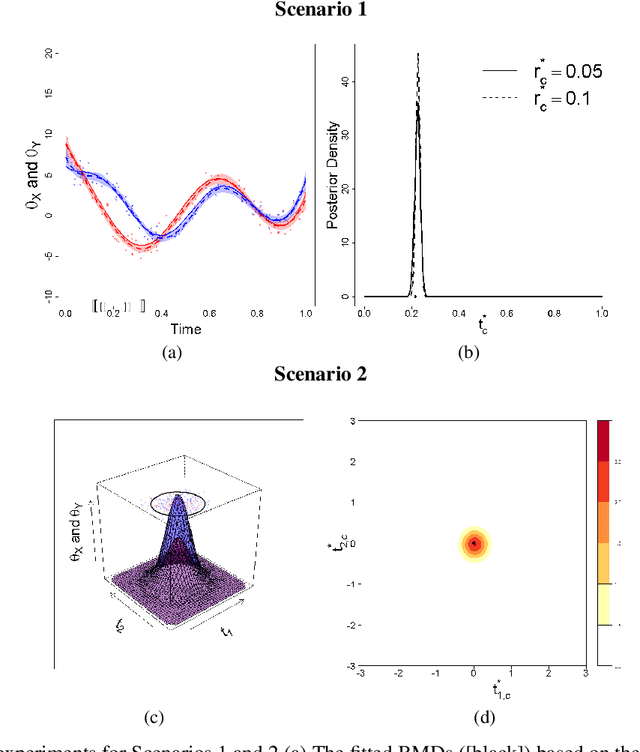

A parallelizable model-based approach for marginal and multivariate clustering

Dec 07, 2022 · Miguel de Carvalho, Gabriel Martos Venturini, Andrej Svetlošák

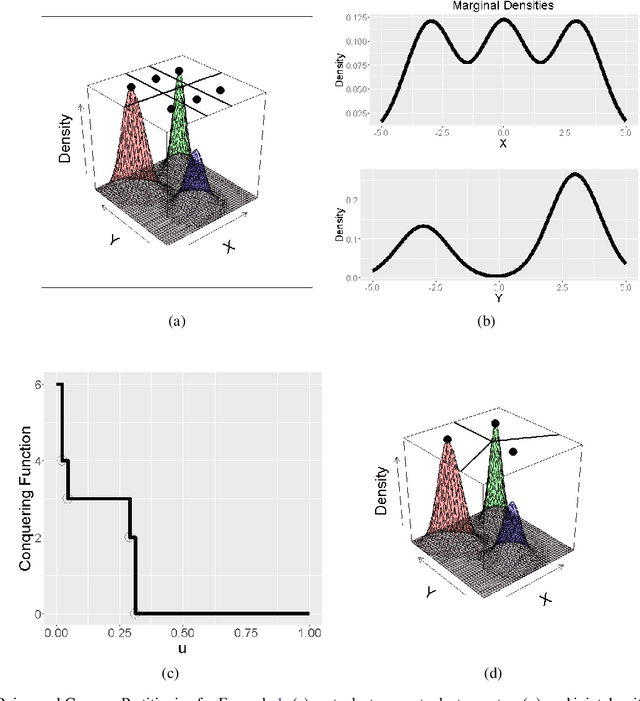

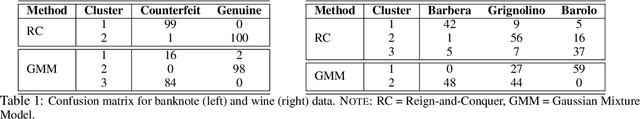

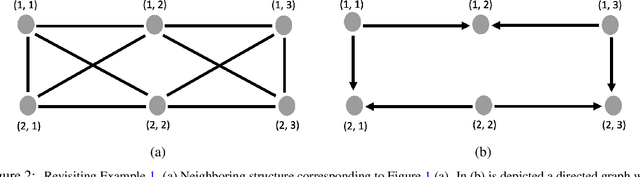

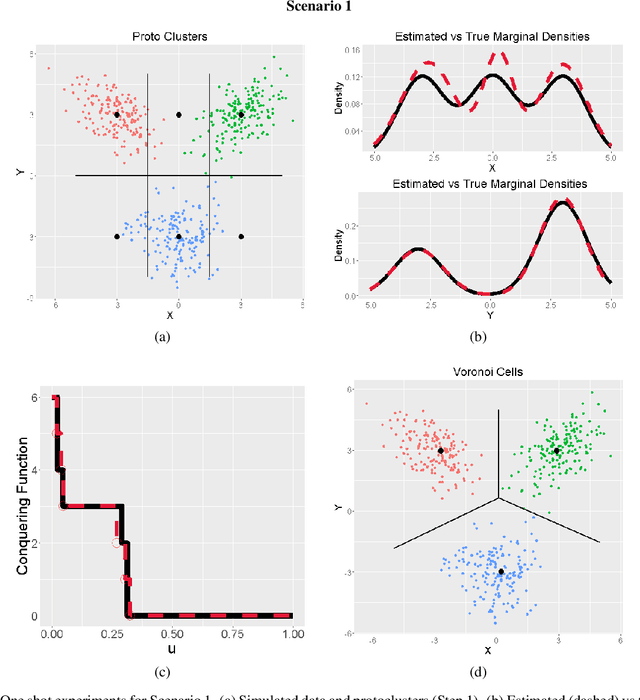

This paper develops a clustering method that takes advantage of the sturdiness of model-based clustering while attempting to mitigate some of its pitfalls. First, we note that standard model-based clustering is likely to lead to the same number of clusters per margin, which seems a rather artificial assumption for a variety of datasets. We tackle this issue by specifying a finite mixture model per margin that allows each margin to have a different number of clusters, and then cluster the multivariate data using a strategy-game-inspired algorithm that we call Reign-and-Conquer. Second, since the proposed clustering approach only specifies a model for the margins (leaving the joint distribution unspecified), it has the advantage of being partially parallelizable; hence, the proposed approach is computationally appealing as well as more tractable for moderate to high dimensions than a 'full' (joint) model-based clustering approach. A battery of numerical experiments on artificial data indicates an overall good performance of the proposed methods in a variety of scenarios, and real datasets are used to showcase their application in practice.
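
The marginal-then-joint idea can be sketched as follows, under several simplifying assumptions. Each margin gets its own univariate Gaussian mixture, with the number of components chosen by BIC (an assumed criterion; the abstract does not say how the number of clusters per margin is selected), and the margins are fitted concurrently because they do not depend on each other. The combination step is a naive placeholder and is NOT Reign-and-Conquer, whose details are not given here: every distinct tuple of marginal labels simply becomes one joint cluster. The function names (`fit_gmm_1d`, `select_margin`, `marginal_then_joint`) and the toy data are hypothetical.

```python
import numpy as np
from concurrent.futures import ThreadPoolExecutor


def _log_dens(x, weights, means, sds):
    """n-by-k matrix of log(weight_j * Normal(x_i; mean_j, sd_j))."""
    z = (x[:, None] - means) / sds
    return np.log(weights) - np.log(sds) - 0.5 * np.log(2 * np.pi) - 0.5 * z**2


def fit_gmm_1d(x, k, n_iter=300):
    """EM for a k-component univariate Gaussian mixture; returns params and log-likelihood."""
    floor = 1e-2 * x.std() + 1e-12                       # sd floor against degenerate components
    means = np.quantile(x, (np.arange(k) + 0.5) / k)     # deterministic quantile initialisation
    sds = np.full(k, x.std() + 1e-12)
    weights = np.full(k, 1.0 / k)
    for _ in range(n_iter):
        ld = _log_dens(x, weights, means, sds)
        resp = np.exp(ld - np.logaddexp.reduce(ld, axis=1, keepdims=True))  # E-step
        nk = resp.sum(axis=0) + 1e-10                                      # M-step
        weights = nk / x.size
        means = (resp * x[:, None]).sum(axis=0) / nk
        sds = np.maximum(np.sqrt((resp * (x[:, None] - means) ** 2).sum(axis=0) / nk), floor)
    order = np.argsort(means)                            # relabel components left to right
    weights, means, sds = weights[order], means[order], sds[order]
    loglik = np.logaddexp.reduce(_log_dens(x, weights, means, sds), axis=1).sum()
    return weights, means, sds, loglik


def select_margin(x, k_max=4):
    """Choose the number of components for one margin by BIC; return it with hard labels."""
    best = None
    for k in range(1, k_max + 1):
        w, m, s, ll = fit_gmm_1d(x, k)
        bic = -2 * ll + (3 * k - 1) * np.log(x.size)     # 3k - 1 free parameters
        if best is None or bic < best[0]:
            best = (bic, k, w, m, s)
    _, k, w, m, s = best
    return k, np.argmax(_log_dens(x, w, m, s), axis=1)


def marginal_then_joint(X, k_max=4, workers=4):
    """Fit each margin independently (concurrently), then combine the marginal labels."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lambda col: select_margin(col, k_max), X.T))
    ks = [k for k, _ in results]
    margin_labels = np.column_stack([lab for _, lab in results])
    # Placeholder combination step (NOT Reign-and-Conquer): each distinct
    # tuple of marginal labels observed in the data becomes one joint cluster.
    _, joint = np.unique(margin_labels, axis=0, return_inverse=True)
    return ks, margin_labels, joint.reshape(-1)


# Toy usage (hypothetical data): margin 1 has two groups, margin 2 has three.
rng = np.random.default_rng(1)
centres = np.array([[-10.0, -20.0], [10.0, 0.0], [10.0, 20.0]])
X = np.vstack([c + rng.normal(size=(200, 2)) for c in centres])
ks, margin_labels, joint = marginal_then_joint(X)
print(ks, len(np.unique(joint)))
```

Threads are used here only to show that the margins can be fitted independently of one another; for CPU-bound fits on large data, a process pool would be the more natural choice.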

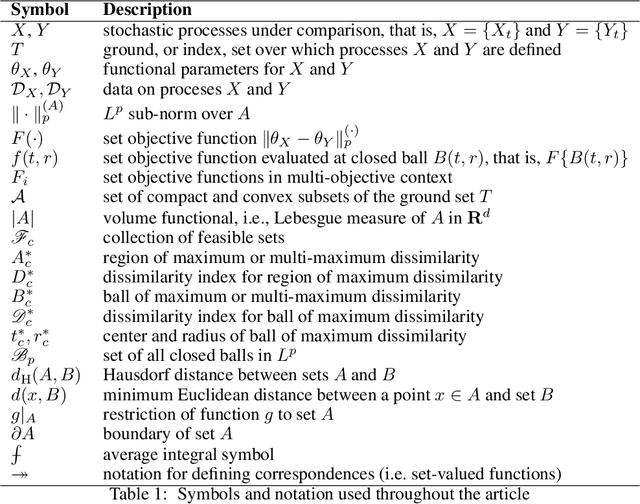

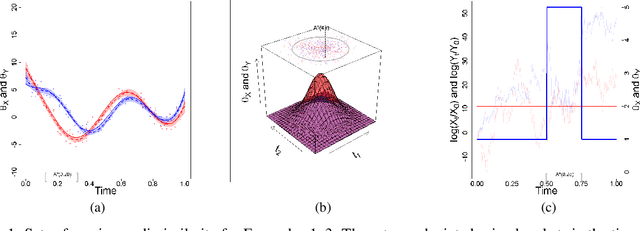

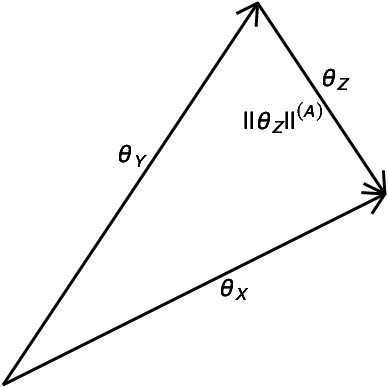

Uncovering Regions of Maximum Dissimilarity on Random Process Data

Sep 12, 2022 · Miguel de Carvalho, Gabriel Martos Venturini

The comparison of local characteristics of two random processes can shed light on periods of time or regions of space at which the processes differ the most. This paper proposes a method that learns about regions of a given volume where the marginal attributes of two processes are least similar. The proposed methods are devised in full generality for the setting where the data of interest are themselves stochastic processes; thus, the method can be used to identify the regions of maximum dissimilarity with a given volume in the contexts of functional data, time series, and point processes. The parameter functions underlying both stochastic processes of interest are modeled via a basis representation, and Bayesian inference is conducted via an integrated nested Laplace approximation. Numerical studies validate the proposed methods, and we showcase their application with case studies in criminology, finance, and medicine.
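
A heavily simplified sketch of the region search on a one-dimensional index set follows. Each process's mean function is estimated by least squares on a small Fourier basis, a stand-in for the paper's Bayesian basis model fitted with INLA, which this sketch does not attempt. A window of fixed length (the "volume") is then slid along a regular grid to find where the integrated squared difference between the two estimates is largest. The Fourier basis, the squared-difference discrepancy, the regular grid, the toy data, and the function names (`fourier_basis`, `fit_mean_function`, `max_dissimilarity_window`) are all assumptions made for illustration.

```python
import numpy as np


def fourier_basis(t, n_harmonics):
    """Design matrix [1, sin(2*pi*j*t), cos(2*pi*j*t), ...] for t in [0, 1]."""
    cols = [np.ones_like(t)]
    for j in range(1, n_harmonics + 1):
        cols += [np.sin(2 * np.pi * j * t), np.cos(2 * np.pi * j * t)]
    return np.column_stack(cols)


def fit_mean_function(t, y, grid, n_harmonics=3):
    """Least-squares basis fit of a mean function, evaluated on `grid` (not a Bayesian fit)."""
    coef, *_ = np.linalg.lstsq(fourier_basis(t, n_harmonics), y, rcond=None)
    return fourier_basis(grid, n_harmonics) @ coef


def max_dissimilarity_window(grid, f1, f2, volume):
    """Window of about `volume` length maximising the integrated squared difference.

    Assumes a regularly spaced grid and approximates the integral by a Riemann sum.
    Returns the first and last grid points in the window, and its score.
    """
    dt = grid[1] - grid[0]
    width = int(round(volume / dt))                  # number of grid cells in the window
    if not 1 <= width <= grid.size:
        raise ValueError("volume must span between 1 and len(grid) grid cells")
    csum = np.concatenate([[0.0], np.cumsum((f1 - f2) ** 2 * dt)])
    scores = csum[width:] - csum[:-width]            # scores[i] covers cells i .. i + width - 1
    start = int(np.argmax(scores))
    return grid[start], grid[start + width - 1], scores[start]


# Toy usage (hypothetical data): the processes share sin(2*pi*t) but the second
# has an extra term cos(pi*(t - 0.3))**2, so they differ most around t = 0.3.
rng = np.random.default_rng(0)
t1, t2 = rng.uniform(size=500), rng.uniform(size=500)
y1 = np.sin(2 * np.pi * t1) + rng.normal(scale=0.3, size=500)
y2 = np.sin(2 * np.pi * t2) + np.cos(np.pi * (t2 - 0.3)) ** 2 + rng.normal(scale=0.3, size=500)
grid = np.linspace(0, 1, 1001)
f1, f2 = fit_mean_function(t1, y1, grid), fit_mean_function(t2, y2, grid)
lo, hi, score = max_dissimilarity_window(grid, f1, f2, volume=0.2)
print(lo, hi, score)
```

The cumulative-sum trick scores every candidate window in linear time; in higher dimensions, or with posterior draws instead of point estimates, the search step would need to be generalised.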
