Expressive Priors in Bayesian Neural Networks: Kernel Combinations and Periodic Functions

May 15, 2019 · Tim Pearce, Mohamed Zaki, Alexandra Brintrup, Andy Neely

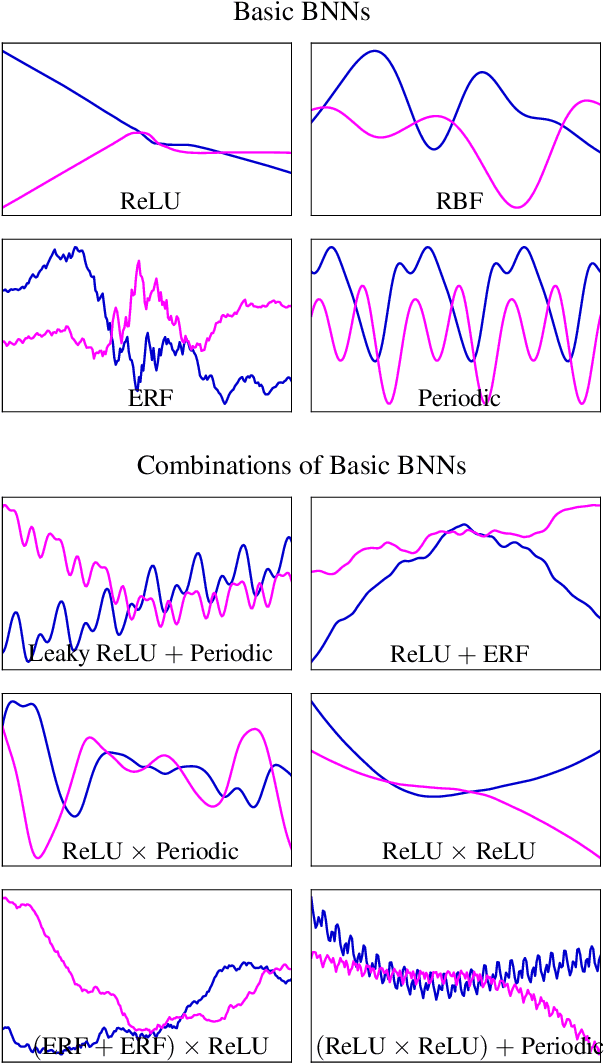

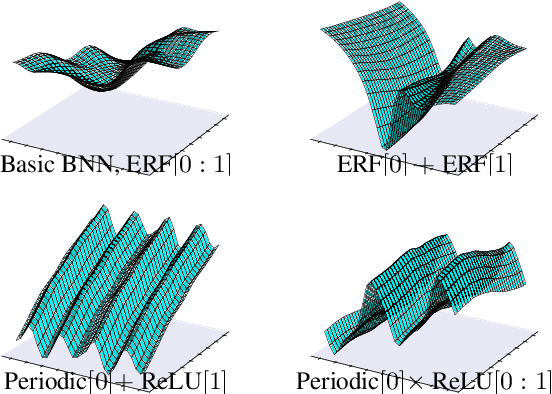

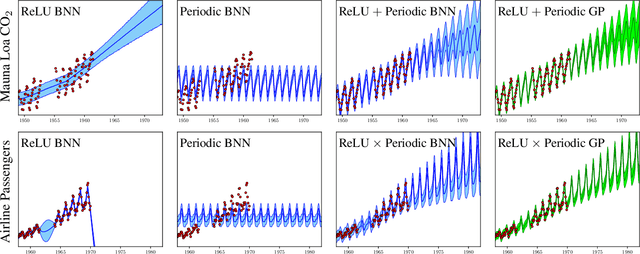

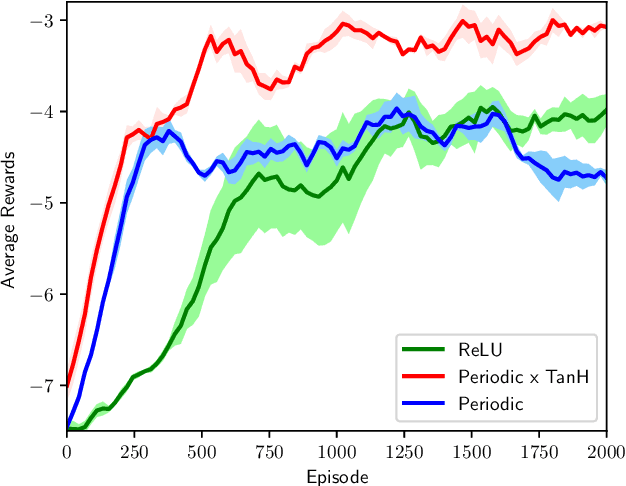

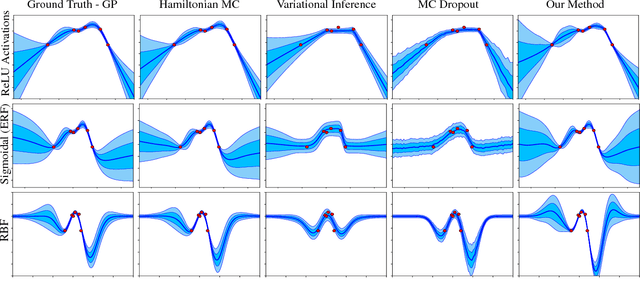

A simple, flexible approach to creating expressive priors in Gaussian process (GP) models makes new kernels from a combination of basic kernels, e.g. summing a periodic and a linear kernel can capture seasonal variation with a long-term trend. Despite a well-studied link between GPs and Bayesian neural networks (BNNs), the BNN analogue of this has not yet been explored. This paper derives BNN architectures mirroring such kernel combinations. Furthermore, it shows how BNNs can produce periodic kernels, which are often useful in this context. These ideas provide a principled approach to designing BNNs that incorporate prior knowledge about a function. We showcase the practical value of these ideas with illustrative experiments in supervised and reinforcement learning settings.
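
The kernel combination mentioned in the abstract can be illustrated on the GP side with a minimal NumPy sketch. It is not taken from the paper: the function names, the standard exp-sine-squared form of the periodic kernel, and all hyperparameter values are assumptions chosen for illustration.

```python
import numpy as np

def linear_kernel(x1, x2, variance=1.0):
    # k(x, x') = variance * x * x' for 1-D inputs; captures a linear trend.
    return variance * np.outer(x1, x2)

def periodic_kernel(x1, x2, period=1.0, lengthscale=1.0, variance=1.0):
    # Standard exp-sine-squared kernel; repeats every `period` units.
    diff = np.subtract.outer(x1, x2)
    return variance * np.exp(-2.0 * np.sin(np.pi * diff / period) ** 2 / lengthscale ** 2)

def sum_kernel(x1, x2):
    # A sum of valid kernels is itself a valid kernel:
    # here, seasonal variation plus a long-term trend.
    return linear_kernel(x1, x2) + periodic_kernel(x1, x2)

x = np.linspace(0.0, 3.0, 7)
K = sum_kernel(x, x)  # 7 x 7 prior covariance matrix
```

Functions drawn from a GP with covariance `K` behave like the sum of an independent linear draw and an independent periodic draw, which is what makes summation a natural way to encode "trend plus seasonality".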

Fast CNN-Based Object Tracking Using Localization Layers and Deep Features Interpolation

Jan 09, 2019 · Al-Hussein A. El-Shafie, Mohamed Zaki, Serag El-Din Habib

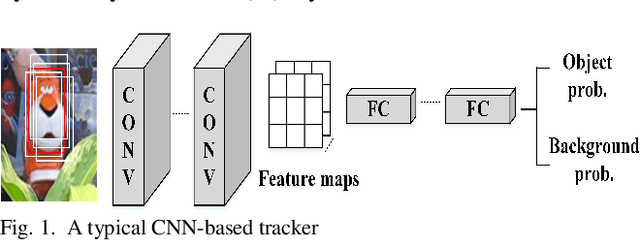

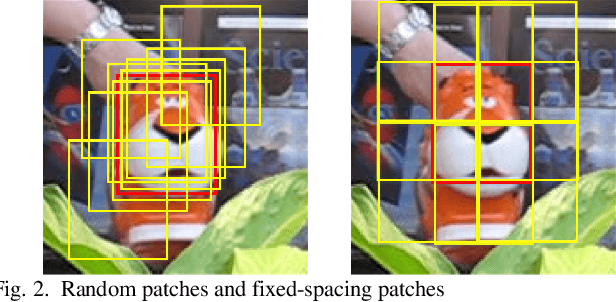

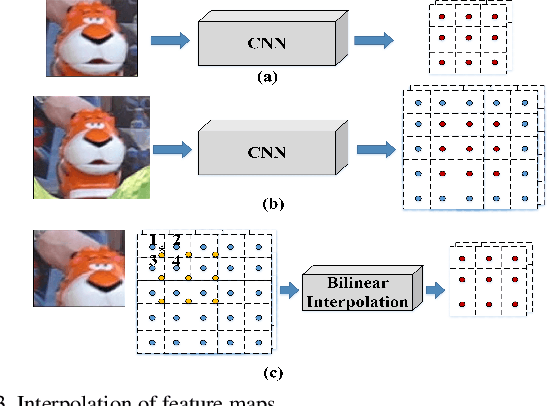

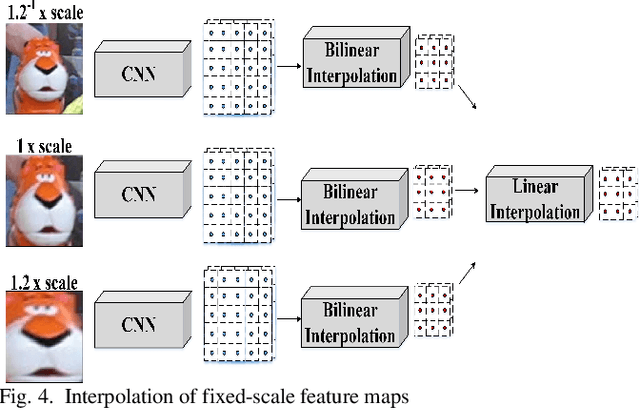

Object trackers based on Convolutional Neural Networks (CNNs) have achieved state-of-the-art performance on recent tracking benchmarks, but they suffer from slow computational speed. The high computational load arises from extracting the feature maps of the candidate and training patches in every video frame. The candidate and training patches are typically placed randomly around the previous target location and the estimated target location, respectively. In this paper, we propose novel schemes to speed up the processing of CNN-based trackers. We input the whole region of interest to the CNN once to eliminate the redundant computations of the random candidate patches. In addition to classifying each candidate patch as object or background, we adapt the CNN to classify the target location inside the object patches as a coarse localization step, and we employ bilinear interpolation of the CNN feature maps as a fine localization step. Moreover, bilinear interpolation is exploited to generate CNN feature maps of the training patches without actually forwarding the training patches through the network, which significantly reduces the required computations. Our tracker does not rely on offline video training. It achieves competitive performance on the OTB benchmark with an 8x speed improvement compared to the equivalent tracker.
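
As a rough illustration of the interpolation step, the sketch below samples a feature map at a fractional location using plain bilinear interpolation. It is a generic NumPy example, not the tracker's implementation; the `(H, W, C)` layout and the function name `bilinear_sample` are assumptions.

```python
import numpy as np

def bilinear_sample(feature_map, y, x):
    """Sample an (H, W, C) feature map at a fractional (y, x) position.

    Assumes H >= 2, W >= 2 and 0 <= y <= H - 1, 0 <= x <= W - 1.
    """
    h, w = feature_map.shape[:2]
    # Top-left corner of the enclosing cell, clipped so that the
    # bottom-right corner stays inside the map.
    y0 = int(np.clip(np.floor(y), 0, h - 2))
    x0 = int(np.clip(np.floor(x), 0, w - 2))
    dy, dx = y - y0, x - x0
    # Interpolate along x on the two rows, then along y between them.
    top = (1 - dx) * feature_map[y0, x0] + dx * feature_map[y0, x0 + 1]
    bottom = (1 - dx) * feature_map[y0 + 1, x0] + dx * feature_map[y0 + 1, x0 + 1]
    return (1 - dy) * top + dy * bottom
```

Sampling this way reuses feature maps that have already been computed, so features at a shifted position can be approximated without another forward pass through the network.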

Bayesian Neural Network Ensembles

Nov 27, 2018 · Tim Pearce, Mohamed Zaki, Andy Neely

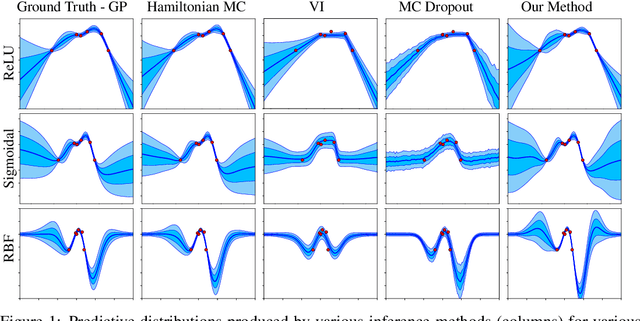

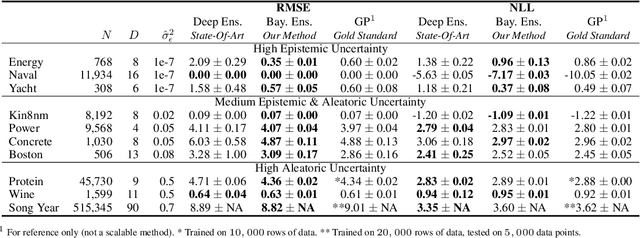

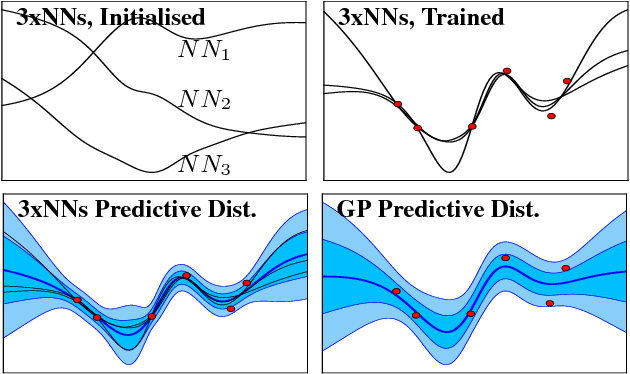

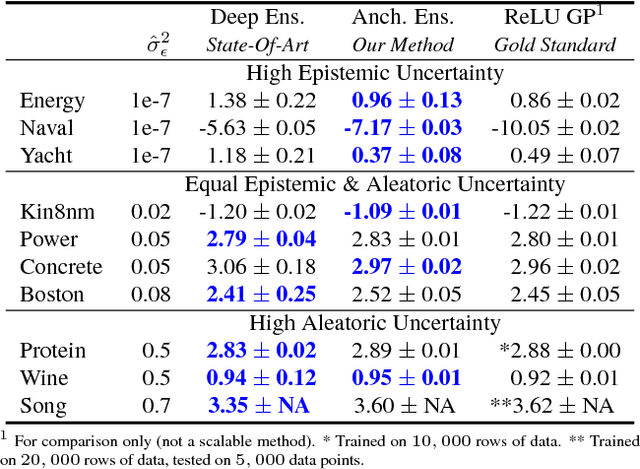

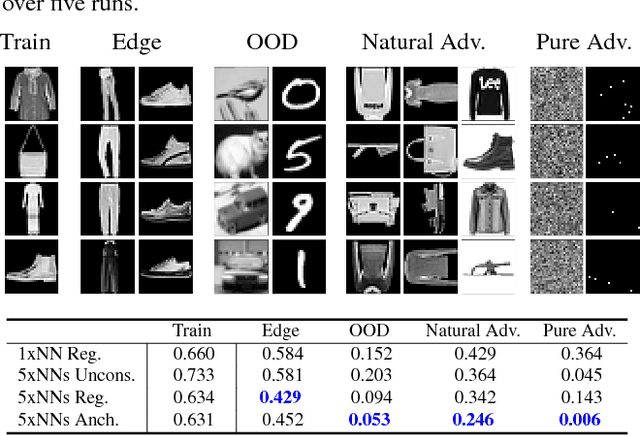

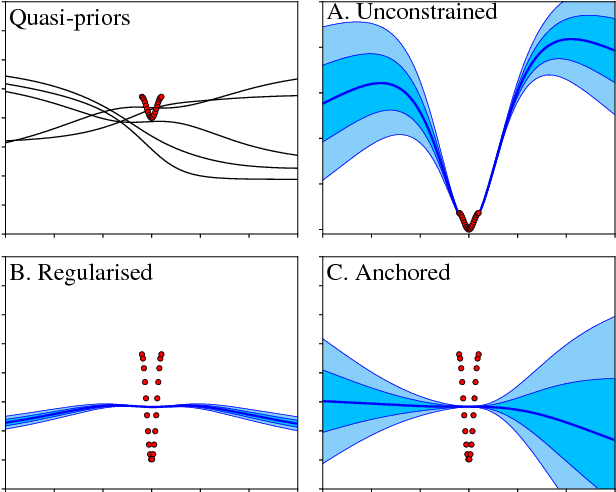

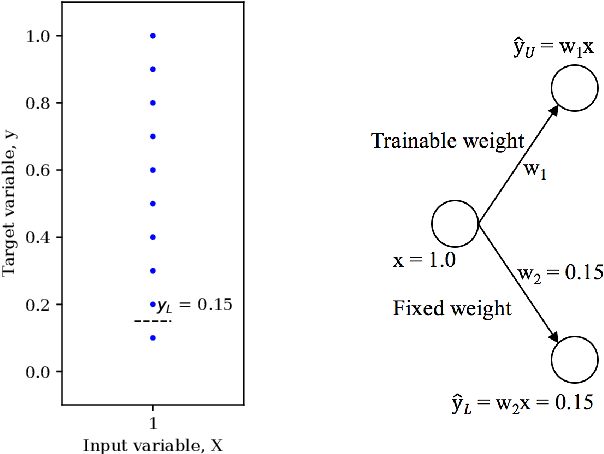

Ensembles of neural networks (NNs) have long been used to estimate predictive uncertainty; a small number of NNs are trained from different initialisations and sometimes on differing versions of the dataset. The variance of the ensemble's predictions is interpreted as its epistemic uncertainty. The appeal of ensembling stems from an ensemble being simply a collection of regular NNs, which makes it both scalable and easily implementable. Ensembles have achieved strong empirical results in recent years, and are often presented as a practical alternative to more costly Bayesian NNs (BNNs). The departure from Bayesian methodology is of concern since the Bayesian framework provides a principled, widely accepted approach to handling uncertainty. In this extended abstract we derive and implement a modified NN ensembling scheme, which provides a consistent estimator of the Bayesian posterior in wide NNs: regularising parameters about values drawn from a prior distribution.
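
The reading of ensemble variance as epistemic uncertainty can be made concrete with a small sketch. It assumes the predictions of each ensemble member are already available as rows of an array; the function name is hypothetical.

```python
import numpy as np

def ensemble_mean_and_variance(predictions):
    """Combine member predictions of shape (n_members, n_points).

    Returns the per-point mean prediction and the per-point variance
    across members, the latter read as epistemic uncertainty.
    """
    predictions = np.asarray(predictions, dtype=float)
    return predictions.mean(axis=0), predictions.var(axis=0)

# Two members that disagree on the first input and agree on the second.
mean, variance = ensemble_mean_and_variance([[1.0, 2.0], [3.0, 2.0]])
```

Where the members agree the variance is zero, and where they disagree it grows, which is the heuristic behaviour the abstract refers to.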

Uncertainty in Neural Networks: Bayesian Ensembling

Oct 22, 2018 · Tim Pearce, Mohamed Zaki, Alexandra Brintrup, Andy Neely

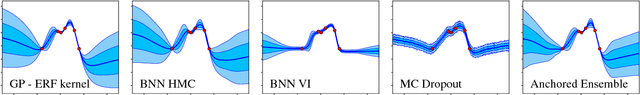

Understanding the uncertainty of a neural network's (NN) predictions is essential for many applications. The Bayesian framework provides a principled approach to this; however, applying it to NNs is challenging due to the large number of parameters and the volume of data. Ensembling NNs provides a practical and scalable method for uncertainty quantification. Its drawback is that its justification is heuristic rather than Bayesian. In this work we propose one modification to the usual ensembling process that does result in Bayesian behaviour: regularising parameters about values drawn from a prior distribution. Hence, we present an easily implementable, scalable technique for performing approximate Bayesian inference in NNs.
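
The idea of regularising parameters about values drawn from a prior can be sketched on a linear model, used here as a stand-in for an NN so that the minimiser has a closed form. Everything below is an illustrative assumption rather than the paper's implementation: the function names, the synthetic data, the zero-mean Gaussian prior, and the choice of regularisation strength `lam` as the ratio of noise variance to prior variance.

```python
import numpy as np

def anchored_loss(theta, X, y, anchor, lam):
    # Squared error plus a penalty pulling theta towards its own anchor
    # (ordinary weight decay would pull towards zero instead).
    residual = y - X @ theta
    return residual @ residual + lam * np.sum((theta - anchor) ** 2)

def fit_anchored_linear(X, y, anchor, lam):
    # Closed-form minimiser of anchored_loss for a linear model.
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y + lam * anchor)

rng = np.random.default_rng(0)
X = rng.normal(size=(20, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + 0.1 * rng.normal(size=20)

prior_std, noise_std = 1.0, 0.1          # assumed values
lam = noise_std ** 2 / prior_std ** 2    # assumed regularisation strength

# Each ensemble member gets its own anchor, drawn once from the prior.
anchors = rng.normal(scale=prior_std, size=(5, 3))
ensemble = np.array([fit_anchored_linear(X, y, a, lam) for a in anchors])
```

The spread of the rows of `ensemble` then plays the role of parameter uncertainty: it is wide along directions the data constrain weakly and narrow along directions they constrain well.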

Bayesian Inference with Anchored Ensembles of Neural Networks, and Application to Exploration in Reinforcement Learning

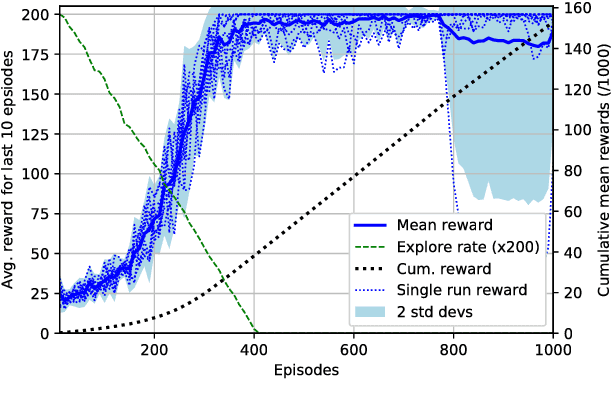

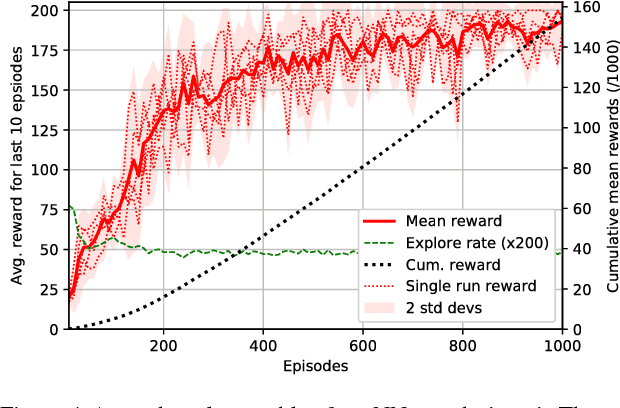

Jul 02, 2018 · Tim Pearce, Nicolas Anastassacos, Mohamed Zaki, Andy Neely

The use of ensembles of neural networks (NNs) for the quantification of predictive uncertainty is widespread. However, the current justification is intuitive rather than analytical. This work proposes one minor modification to the normal ensembling methodology, which we prove allows the ensemble to perform Bayesian inference, hence converging to the corresponding Gaussian process as both the total number of NNs and the size of each tend to infinity. This working paper provides early-stage results in a reinforcement learning setting, analysing the practicality of the technique for an ensemble with a small, finite number of NNs. Using the uncertainty estimates produced by anchored ensembles to govern the exploration-exploitation process results in steadier, more stable learning.

High-Quality Prediction Intervals for Deep Learning: A Distribution-Free, Ensembled Approach

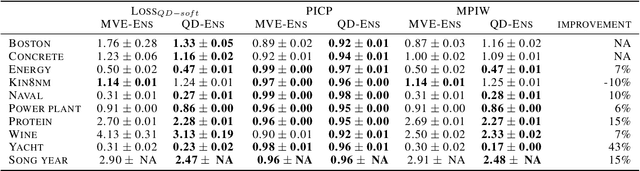

Jun 15, 2018 · Tim Pearce, Mohamed Zaki, Alexandra Brintrup, Andy Neely

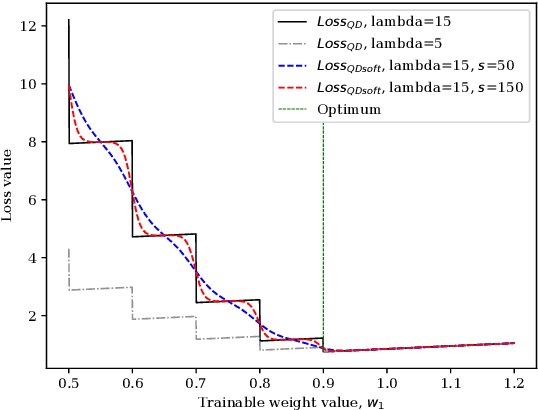

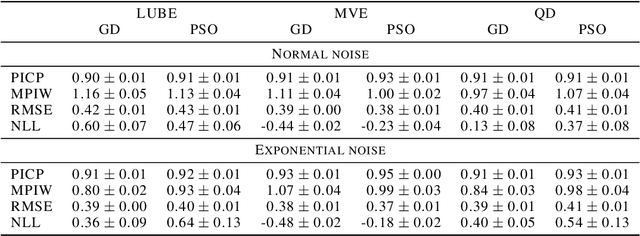

This paper considers the generation of prediction intervals (PIs) by neural networks for quantifying uncertainty in regression tasks. It is axiomatic that high-quality PIs should be as narrow as possible, whilst capturing a specified portion of data. We derive a loss function directly from this axiom that requires no distributional assumption. We show how its form derives from a likelihood principle, that it can be used with gradient descent, and that model uncertainty is accounted for in ensembled form. Benchmark experiments show the method outperforms current state-of-the-art uncertainty quantification methods, reducing average PI width by over 10%.
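
The two quantities in the axiom, the proportion of data captured and the interval width, can be computed as follows. This is a sketch of the evaluation quantities only, not of the paper's loss function; the function name and the example values are assumptions.

```python
import numpy as np

def interval_coverage_and_width(y, lower, upper):
    """Return (fraction of targets inside their interval, mean interval width)."""
    y, lower, upper = (np.asarray(a, dtype=float) for a in (y, lower, upper))
    captured = (y >= lower) & (y <= upper)
    return captured.mean(), np.mean(upper - lower)

coverage, width = interval_coverage_and_width(
    y=[1.0, 2.0, 3.0, 4.0],
    lower=[0.0, 1.5, 3.5, 3.0],
    upper=[2.0, 2.5, 4.5, 5.0],
)
# Three of the four targets fall inside their intervals.
```

A high-quality set of intervals in the abstract's sense keeps `coverage` at or above the specified level while making `width` as small as possible.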
