Digital Twin-Based User-Centric Edge Continual Learning in Integrated Sensing and Communication

Nov 20, 2023 Shisheng Hu, Jie Gao, Xinyu Huang, Mushu Li, Kaige Qu, Conghao Zhou, Xuemin Shen

In this paper, we propose a digital twin (DT)-based user-centric approach for processing sensing data in an integrated sensing and communication (ISAC) system with high accuracy and efficient resource utilization. The considered scenario involves an ISAC device with a lightweight deep neural network (DNN) and a mobile edge computing (MEC) server with a large DNN. After collecting sensing data, the ISAC device either processes the data locally or uploads them to the server for higher-accuracy data processing. To cope with data drifts, the server updates the lightweight DNN when necessary, referred to as continual learning. Our objective is to minimize the long-term average computation cost of the MEC server by optimizing two decisions, i.e., sensing data offloading and sensing data selection for the DNN update. A DT of the ISAC device is constructed to predict the impact of potential decisions on the long-term computation cost of the server, based on which the decisions are made with closed-form formulas. Experiments on executing DNN-based human motion recognition tasks are conducted to demonstrate the outstanding performance of the proposed DT-based approach in computation cost minimization.

User Dynamics-Aware Edge Caching and Computing for Mobile Virtual Reality

Nov 17, 2023 Mushu Li, Jie Gao, Conghao Zhou, Xuemin Shen, Weihua Zhuang

In this paper, we present a novel content caching and delivery approach for mobile virtual reality (VR) video streaming. The proposed approach aims to maximize VR video streaming performance, i.e., to minimize the video frame missing rate, by proactively caching popular VR video chunks and adaptively scheduling computing resources at an edge server based on user and network dynamics. First, we design a scalable content placement scheme for deciding which video chunks to cache at the edge server based on tradeoffs between computing and caching resource consumption. Second, we propose a machine learning-assisted VR video delivery scheme, which allocates computing resources at the edge server to satisfy video delivery requests from multiple VR headsets. A Whittle index-based method is adopted to reduce the video frame missing rate by identifying network and user dynamics with low signaling overhead. Simulation results demonstrate that the proposed approach can significantly improve VR video streaming performance over conventional caching and computing resource scheduling strategies.

* 38 pages, 13 figures, single column double spaced, published in IEEE Journal of Selected Topics in Signal Processing

AI-Assisted Slicing-Based Resource Management for Two-Tier Radio Access Networks

Aug 21, 2023 Conghao Zhou, Jie Gao, Mushu Li, Xuemin Shen, Weihua Zhuang, Xu Li, Weisen Shi

While network slicing has become a prevalent approach to service differentiation, radio access network (RAN) slicing remains challenging due to the need for substantial adaptivity and flexibility to cope with the highly dynamic network environment in RANs. In this paper, we develop a slicing-based resource management framework for a two-tier RAN to support multiple services with different quality of service (QoS) requirements. The developed framework focuses on base station (BS) service coverage (SC) and interference management for multiple slices, each of which corresponds to a service. New designs are introduced in the spatial, temporal, and slice dimensions to cope with spatiotemporal variations in data traffic, balance adaptivity and overhead of resource management, and enhance flexibility in service differentiation. Based on the proposed framework, an energy efficiency maximization problem is formulated, and an artificial intelligence (AI)-assisted approach is proposed to solve the problem. Specifically, a deep unsupervised learning-assisted algorithm is proposed for searching the optimal SC of the BSs, and an optimization-based analytical solution is found for managing interference among BSs. Simulation results under different data traffic distributions demonstrate that our proposed slicing-based resource management framework, empowered by the AI-assisted approach, outperforms the benchmark frameworks and achieves a close-to-optimal performance in energy efficiency.

Digital Twin-Based 3D Map Management for Edge-Assisted Mobile Augmented Reality

May 26, 2023 Conghao Zhou, Jie Gao, Mushu Li, Nan Cheng, Xuemin Shen, Weihua Zhuang

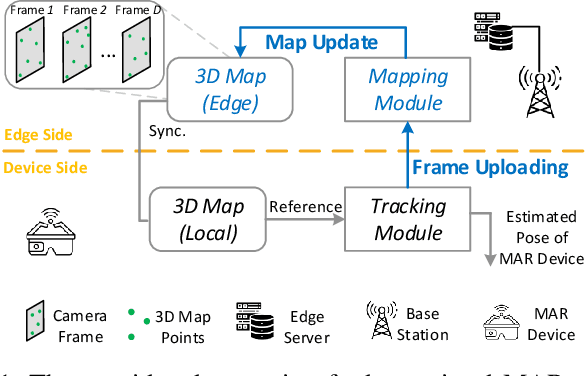

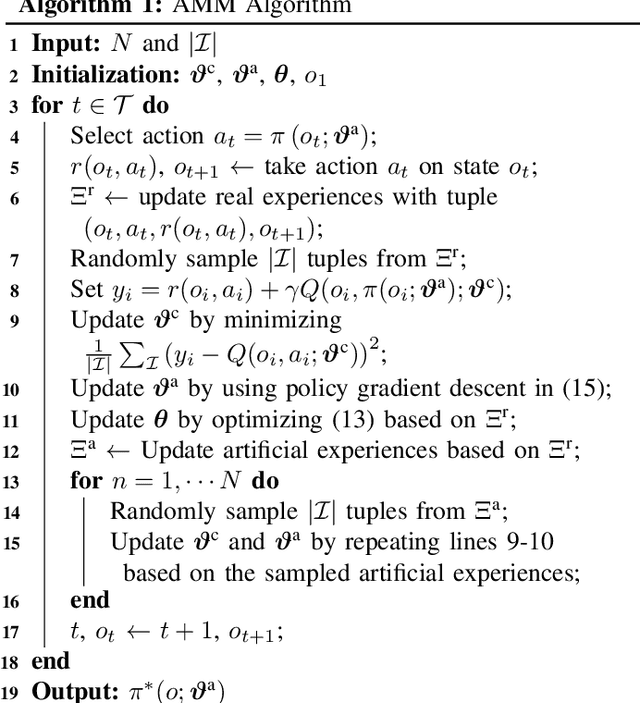

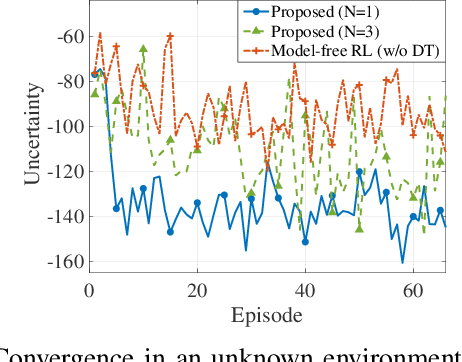

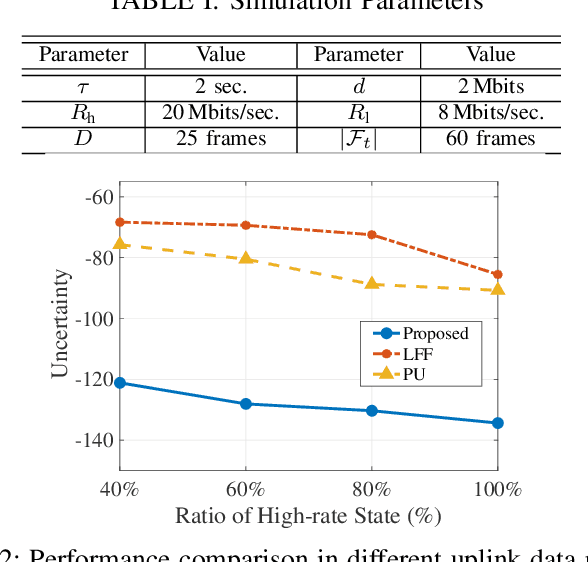

In this paper, we design a 3D map management scheme for edge-assisted mobile augmented reality (MAR) to support the pose estimation of an individual MAR device, which uploads camera frames to an edge server. Our objective is to minimize the pose estimation uncertainty of the MAR device by periodically selecting a proper set of camera frames for uploading to update the 3D map. To address the challenges of the dynamic uplink data rate and the time-varying pose of the MAR device, we propose a digital twin (DT)-based approach to 3D map management. First, a DT is created for the MAR device, which emulates 3D map management based on predicting subsequent camera frames. Second, a model-based reinforcement learning (MBRL) algorithm is developed, utilizing both the actual and the emulated data to manage the 3D map. With extensive emulated data provided by the DT, the MBRL algorithm can quickly provide an adaptive map management policy in a highly dynamic environment. Simulation results demonstrate that the proposed DT-based 3D map management outperforms benchmark schemes by achieving lower pose estimation uncertainty and higher data efficiency in dynamic environments.

Toward Immersive Communications in 6G

Mar 07, 2023 Xuemin Shen, Jie Gao, Mushu Li, Conghao Zhou, Shisheng Hu, Mingcheng He, Weihua Zhuang

The sixth generation (6G) networks are expected to enable immersive communications and bridge the physical and the virtual worlds. Integrating extended reality, holography, and haptics, immersive communications will revolutionize how people work, entertain, and communicate by enabling lifelike interactions. However, the unprecedented demand for data transmission rate and the stringent requirements on latency and reliability create challenges for 6G networks to support immersive communications. In this survey article, we present the prospect of immersive communications and investigate emerging solutions to the corresponding challenges for 6G. First, we introduce use cases of immersive communications in the fields of entertainment, education, and healthcare. Second, we present the concepts of immersive communications, including extended reality, haptic communication, and holographic communication, their basic implementation procedures, and their requirements on networks in terms of transmission rate, latency, and reliability. Third, we summarize the potential solutions to address the challenges from the aspects of communication, computing, and networking. Finally, we discuss future research directions and conclude this study.

* 29 pages, 8 figures, published by Frontiers of Computer Science

Holistic Network Virtualization and Pervasive Network Intelligence for 6G

Jan 02, 2023 Xuemin Shen, Jie Gao, Wen Wu, Mushu Li, Conghao Zhou, Weihua Zhuang

In this tutorial paper, we look into the evolution and prospect of network architecture and propose a novel conceptual architecture for the 6th generation (6G) networks. The proposed architecture has two key elements, i.e., holistic network virtualization and pervasive artificial intelligence (AI). The holistic network virtualization consists of network slicing and digital twin, from the aspects of service provision and service demand, respectively, to incorporate service-centric and user-centric networking. The pervasive network intelligence integrates AI into future networks from the perspectives of networking for AI and AI for networking, respectively. Building on holistic network virtualization and pervasive network intelligence, the proposed architecture can facilitate three types of interplay, i.e., the interplay between digital twin and network slicing paradigms, between model-driven and data-driven methods for network management, and between virtualization and AI, to maximize the flexibility, scalability, adaptivity, and intelligence for 6G networks. We also identify challenges and open issues related to the proposed architecture. By providing our vision, we aim to inspire further discussions and developments on the potential architecture of 6G.

Digital Twin-Assisted Collaborative Transcoding for Better User Satisfaction in Live Streaming

Nov 13, 2022 Xinyu Huang, Mushu Li, Wen Wu, Conghao Zhou, Xuemin Sherman Shen

In this paper, we propose a digital twin (DT)-assisted cloud-edge collaborative transcoding scheme to enhance user satisfaction in live streaming. We first present a DT-assisted transcoding workload estimation (TWE) model for the cloud-edge collaborative transcoding. Particularly, two DTs are constructed for emulating the cloud-edge collaborative transcoding process by analyzing spatial-temporal information of individual videos and transcoding configurations of transcoding queues, respectively. Two light-weight Bayesian neural networks are adopted to fit the TWE models in the two DTs, respectively. We then formulate a transcoding-path selection problem to maximize long-term user satisfaction within an average service delay threshold, taking into account the dynamics of video arrivals and video requests. The problem is transformed into a standard Markov decision process by using Lyapunov optimization and solved by a deep reinforcement learning algorithm. Simulation results based on a real-world dataset demonstrate that the proposed scheme can effectively enhance user satisfaction compared with benchmark schemes.

Digital Twin-Empowered Network Planning for Multi-Tier Computing

Oct 06, 2022 Conghao Zhou, Jie Gao, Mushu Li, Xuemin Shen, Weihua Zhuang

In this paper, we design a resource management scheme to support stateful applications, which will be prevalent in 6G networks. Different from stateless applications, stateful applications require context data while executing computing tasks from user terminals (UTs). Using a multi-tier computing paradigm with servers deployed at the core network, gateways, and base stations to support stateful applications, we aim to optimize long-term resource reservation by jointly minimizing the usage of computing, storage, and communication resources and the cost from reconfiguring resource reservation. The coupling among different resources and the impact of UT mobility create challenges in resource management. To address the challenges, we develop digital twin (DT)-empowered network planning with two elements, i.e., multi-resource reservation and resource reservation reconfiguration. First, DTs are designed for collecting UT status data, based on which UTs are grouped according to their mobility patterns. Second, an algorithm is proposed to customize resource reservation for different groups to satisfy their different resource demands. Last, a meta-learning-based approach is developed to reconfigure resource reservation for balancing the network resource usage and the reconfiguration cost. Simulation results demonstrate that the proposed DT-empowered network planning outperforms benchmark frameworks by using fewer resources and incurring lower reconfiguration costs.

Personalized QoE Enhancement for Adaptive Video Streaming: A Digital Twin-Assisted Scheme

May 09, 2022 Xinyu Huang, Conghao Zhou, Wen Wu, Mushu Li, Huaqing Wu, Xuemin Shen

In this paper, we present a digital twin (DT)-assisted adaptive video streaming scheme to enhance personalized quality-of-experience (PQoE). Since PQoE models are user-specific and time-varying, existing schemes based on universal and time-invariant PQoE models may suffer from performance degradation. To address this issue, we first propose a DT-assisted PQoE model construction method to obtain accurate user-specific PQoE models. Specifically, user DTs (UDTs) are constructed for individual users, which can acquire and utilize users' data to accurately tune PQoE model parameters in real time. Next, given the obtained PQoE models, we formulate a resource management problem to maximize the overall long-term PQoE by taking the dynamics of users' locations, video content requests, and buffer statuses into account. To solve this problem, a deep reinforcement learning algorithm is developed to jointly determine segment version selection and communication and computing resource allocation. Simulation results on a real-world dataset demonstrate that the proposed scheme can effectively enhance PQoE compared with benchmark schemes.

AI-Native Network Slicing for 6G Networks

May 18, 2021 Wen Wu, Conghao Zhou, Mushu Li, Huaqing Wu, Haibo Zhou, Ning Zhang, Xuemin Shen, Weihua Zhuang

With the global roll-out of the fifth generation (5G) networks, it is necessary to look beyond 5G and envision the sixth generation (6G) networks. The 6G networks are expected to have space-air-ground integrated networking, advanced network virtualization, and ubiquitous intelligence. This article proposes an artificial intelligence (AI)-native network slicing architecture for 6G networks to facilitate intelligent network management and support emerging AI services. AI is built into the proposed network slicing architecture to enable the synergy of AI and network slicing. AI solutions are investigated for the entire lifecycle of network slicing to facilitate intelligent network management, i.e., AI for slicing. Furthermore, network slicing approaches are discussed to support emerging AI services by constructing slice instances and performing efficient resource management, i.e., slicing for AI. Finally, a case study is presented, followed by a discussion of open research issues that are essential for AI-native network slicing in 6G.
