SoK: Privacy-preserving Deep Learning with Homomorphic Encryption

Jan 01, 2022 · Robert Podschwadt, Daniel Takabi, Peizhao Hu

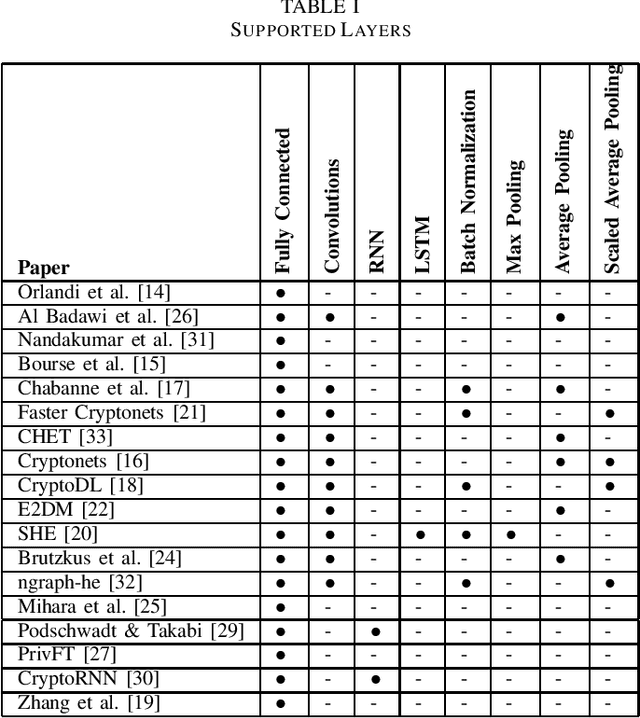

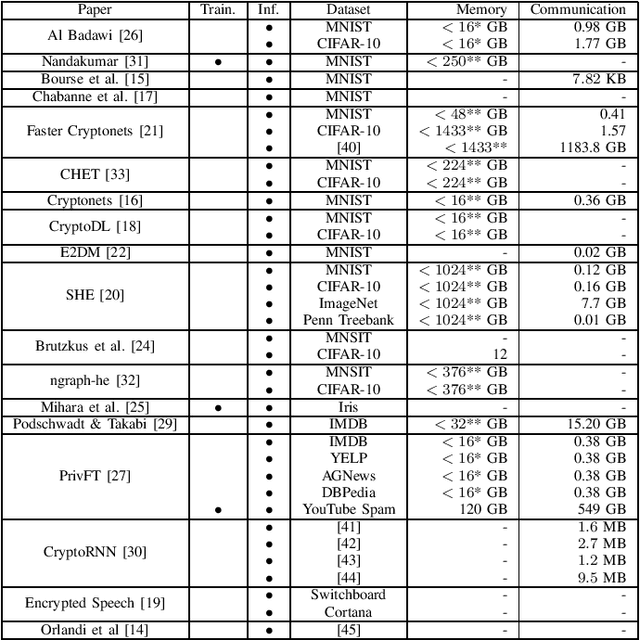

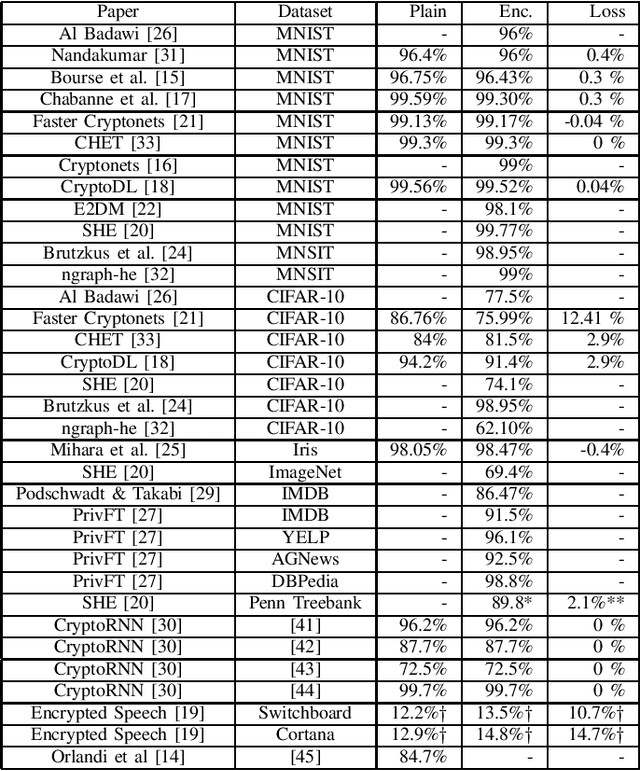

Outsourced computation for neural networks gives users access to state-of-the-art models without requiring them to invest in specialized hardware and know-how. The problem is that users lose control over potentially privacy-sensitive data. With homomorphic encryption (HE), computation can be performed on encrypted data without revealing its content. In this systematization of knowledge, we take an in-depth look at approaches that combine neural networks with HE for privacy preservation. We categorize the changes made to neural network models and architectures to make them computable over HE and examine how these changes impact performance. We find numerous challenges to HE-based privacy-preserving deep learning, such as computational overhead, usability, and limitations posed by the encryption schemes.
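
To make the idea of computing on encrypted data concrete, the following is a minimal sketch of a toy Paillier cryptosystem evaluating a linear layer (a dot product with plaintext weights) on an encrypted input. It rests on several assumptions: Paillier is only additively homomorphic and is not a scheme named in the abstract, the primes are far too small to be secure, and the helper names (`keygen`, `encrypt`, `decrypt`, `encrypted_dot`) are hypothetical and chosen for illustration only.

```python
import math
import secrets


def keygen(p=1009, q=1013):
    """Toy Paillier key generation. The default primes are insecure demo values."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1                # standard simple choice of generator
    mu = pow(lam, -1, n)     # modular inverse (Python 3.8+); valid for g = n + 1
    return (n, g), (lam, mu)


def encrypt(pub, m):
    """Encrypt an integer 0 <= m < n with fresh randomness r."""
    n, g = pub
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1   # r in [1, n-1]
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n2) * pow(r, n, n2)) % n2


def decrypt(pub, priv, c):
    """Recover the plaintext from ciphertext c."""
    n, _ = pub
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n * mu) % n


def encrypted_dot(pub, enc_x, weights):
    """Compute Enc(sum(w_i * x_i)) from Enc(x_i) and plaintext non-negative weights.

    Multiplying ciphertexts adds plaintexts; raising a ciphertext to w
    multiplies its plaintext by w. The evaluator never sees x.
    """
    n, _ = pub
    n2 = n * n
    acc = encrypt(pub, 0)
    for c, w in zip(enc_x, weights):
        acc = (acc * pow(c, w, n2)) % n2
    return acc


pub, priv = keygen()
x = [3, 5, 2]                            # client's private input
w = [4, 1, 7]                            # server's plaintext weights
enc_x = [encrypt(pub, v) for v in x]     # client encrypts
enc_y = encrypted_dot(pub, enc_x, w)     # server computes on ciphertexts
print(decrypt(pub, priv, enc_y))         # 31 = 3*4 + 5*1 + 2*7
```

The sketch covers only the linear part of a network. Non-linear activations have no direct counterpart under an additively homomorphic scheme, which is one reason models have to be changed before they can be evaluated over HE.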

Collaborative Homomorphic Computation on Data Encrypted under Multiple Keys

Nov 11, 2019 · Asma Aloufi, Peizhao Hu

Homomorphic encryption (HE) is a promising cryptographic technique for enabling secure collaborative machine learning in the cloud. However, support for homomorphic computation on ciphertexts under multiple keys is inefficient. Current solutions often require key setup before any computation or incur large ciphertext sizes (at best, growing linearly with the number of involved keys). In this paper, we propose a new approach that leverages threshold and multi-key HE to support computations on ciphertexts under different keys. Our approach removes the need for key setup between each client and the set of model owners. At the same time, it reduces both the number of encrypted models to be offloaded to the cloud evaluator and the ciphertext size, with a dimension reduction from (N+1)×2 to 2×2. We present the details of each step and discuss the complexity and security of our approach.
