Artificial Intelligence Methods Based Hierarchical Classification of Frontotemporal Dementia to Improve Diagnostic Predictability

Apr 12, 2021
Km Poonam, Rajlakshmi Guha, Partha P Chakrabarti

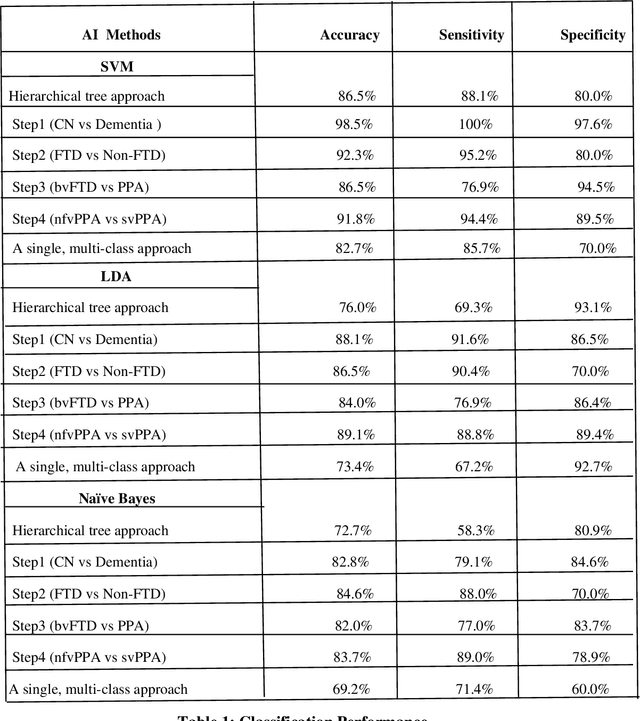

Patients with frontotemporal dementia (FTD) show impaired cognitive abilities, altered executive and behavioral traits, loss of language ability, and decreased memory. Based on distinct patterns of cortical atrophy and symptoms, the FTD spectrum primarily includes three variants: behavioral variant FTD (bvFTD), non-fluent variant primary progressive aphasia (nfvPPA), and semantic variant primary progressive aphasia (svPPA). The purpose of this study is to classify each subject's MRI images into one of the diagnostic categories of the FTD spectrum, in hierarchical order, by applying data-driven artificial intelligence (AI) techniques to cortical thickness data computed with FreeSurfer. We used the Smallest Univalue Segment Assimilating Nucleus (SUSAN) technique to reduce noise in the cortical thickness data. To validate the approach, we took 204 subjects from the frontotemporal lobar degeneration neuroimaging initiative (NIFTD) database, each diagnosed in one of four categories (bvFTD, svPPA, nfvPPA, or cognitively normal). In 10-fold cross-validation, the proposed automated classification model yielded classification accuracies of 86.5, 76, and 72.7 with support vector machine (SVM), linear discriminant analysis (LDA), and Naive Bayes methods, respectively, a significant improvement over a traditional single multi-class model, which reached accuracies of 82.7, 73.4, and 69.2.
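To make the idea of hierarchical (as opposed to single multi-class) classification concrete, here is a minimal sketch. It is not the authors' pipeline: the abstract does not state the order of the hierarchy, so the cascade below (cognitively normal first, then bvFTD, then svPPA versus nfvPPA) is an assumption, a simple nearest-centroid rule stands in for SVM, LDA, or Naive Bayes, and the two "cortical thickness" features and all numbers are invented toy values.

```python
from statistics import fmean


class NearestCentroid:
    """Stand-in base classifier (NOT the SVM/LDA/Naive Bayes of the paper):
    predicts the label whose class mean is closest to the sample."""

    def fit(self, X, y):
        self.centroids = {}
        for label in set(y):
            rows = [x for x, t in zip(X, y) if t == label]
            # Column-wise mean of all training rows with this label.
            self.centroids[label] = [fmean(col) for col in zip(*rows)]
        return self

    def predict_one(self, x):
        def sq_dist(c):
            return sum((a - b) ** 2 for a, b in zip(x, c))
        return min(self.centroids, key=lambda lab: sq_dist(self.centroids[lab]))


# ASSUMED hierarchy: each stage separates one class from everything still
# unresolved; whatever survives all stages gets the final label.
STAGES = ["CN", "bvFTD", "svPPA"]
FINAL = "nfvPPA"


class HierarchicalClassifier:
    """Cascade of binary 'leaf vs. rest' classifiers."""

    def fit(self, X, y):
        self.models = []
        for leaf in STAGES:
            y_bin = [leaf if t == leaf else "rest" for t in y]
            self.models.append(NearestCentroid().fit(X, y_bin))
            # Later stages train only on subjects not yet split off.
            keep = [i for i, t in enumerate(y) if t != leaf]
            X = [X[i] for i in keep]
            y = [y[i] for i in keep]
        return self

    def predict_one(self, x):
        for leaf, model in zip(STAGES, self.models):
            if model.predict_one(x) == leaf:
                return leaf
        return FINAL


# Toy data: [hypothetical frontal thickness, hypothetical temporal thickness].
X_train = [
    [3.0, 3.0], [3.1, 2.9],   # CN
    [2.0, 3.0], [2.1, 2.9],   # bvFTD
    [3.0, 2.0], [2.9, 2.1],   # svPPA
    [2.0, 2.0], [2.1, 2.1],   # nfvPPA
]
y_train = ["CN", "CN", "bvFTD", "bvFTD", "svPPA", "svPPA", "nfvPPA", "nfvPPA"]

clf = HierarchicalClassifier().fit(X_train, y_train)
predictions = [clf.predict_one(x) for x in
               [[3.0, 3.05], [2.0, 2.95], [2.95, 2.0], [2.05, 2.0]]]
print(predictions)
```

Each stage only has to solve a binary problem on the subjects that reach it, which is the structural difference from a single four-way classifier. In practice the base classifier would be swapped for an SVM or similar and the whole cascade evaluated inside each cross-validation fold.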

The Indian Spontaneous Expression Database for Emotion Recognition

Jun 16, 2016
S L Happy, Priyadarshi Patnaik, Aurobinda Routray, Rajlakshmi Guha

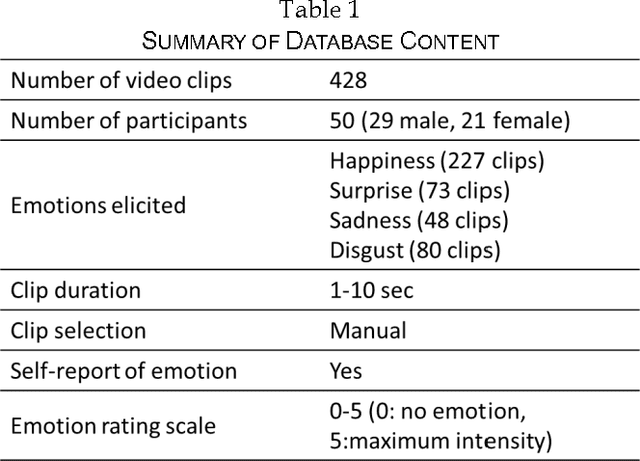

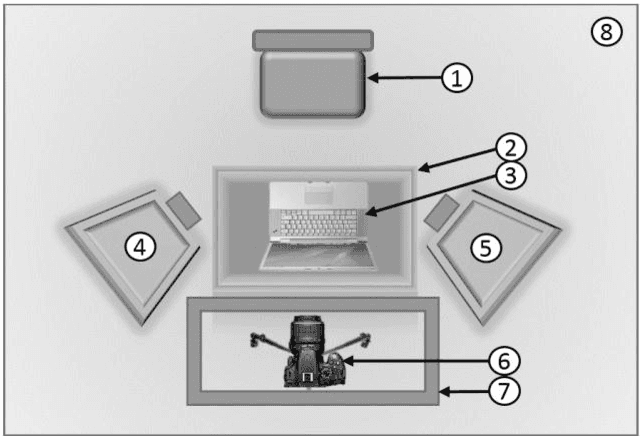

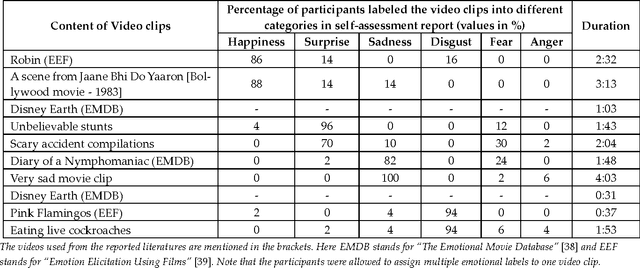

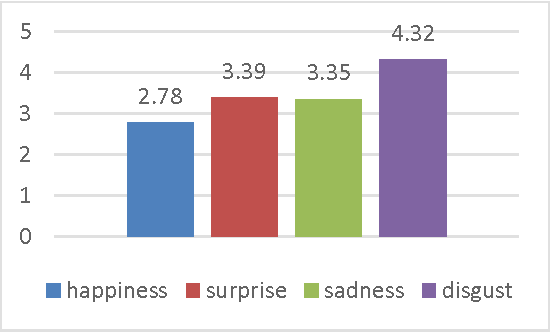

Automatic recognition of spontaneous facial expressions is a major challenge in the field of affective computing. Head rotation, face pose, illumination variation, and occlusion all increase the complexity of recognizing spontaneous expressions in practical applications. Effective recognition of expressions depends significantly on the quality of the database used. Most well-known facial expression databases consist of posed expressions, yet there is currently a huge demand for spontaneous expression databases for the practical implementation of facial expression recognition algorithms. In this paper, we propose and establish a new facial expression database containing spontaneous expressions of both male and female participants of Indian origin. The database consists of 428 segmented video clips of the spontaneous facial expressions of 50 participants. In our experiment, emotions were induced in the participants using emotional videos, and their self-ratings were collected simultaneously for each experienced emotion. Facial expression clips were annotated carefully by four trained decoders, and the annotations were further validated against the nature of the stimuli used and the self-reports of emotion. An extensive analysis was carried out on the database using several machine learning algorithms, and the results are provided for future reference. Such a spontaneous database will help in the development and validation of algorithms for recognition of spontaneous expressions.
