Continuous Learning in a Single-Incremental-Task Scenario with Spike Features

May 03, 2020 | Ruthvik Vaila, John Chiasson, Vishal Saxena

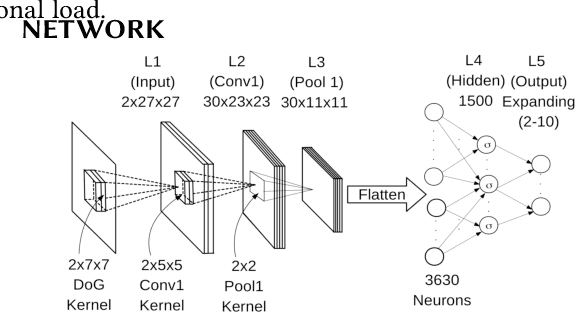

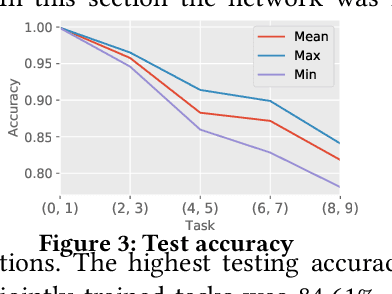

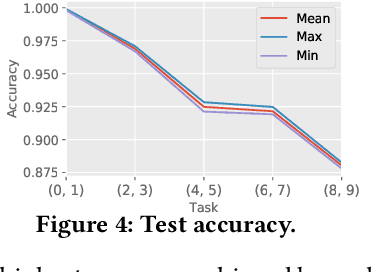

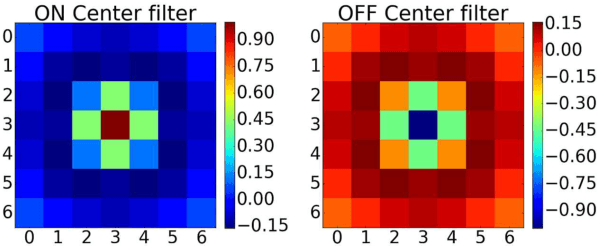

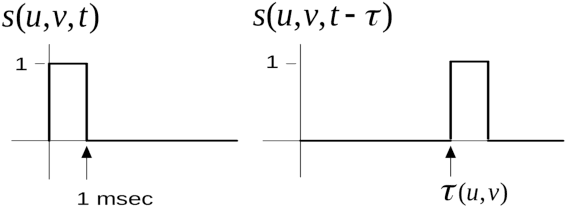

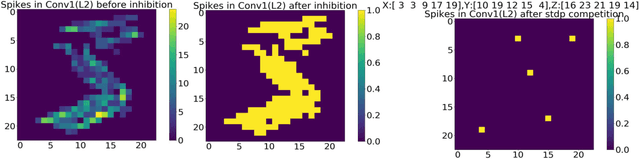

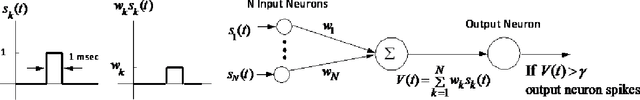

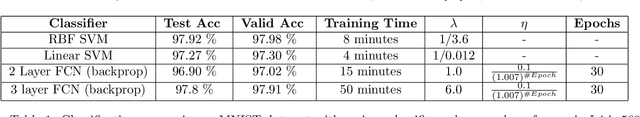

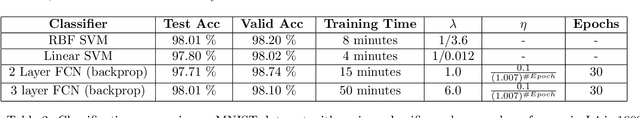

Deep Neural Networks (DNNs) have two key deficiencies: their dependence on high-precision computing and their inability to learn sequentially. When a DNN is trained on a first task and then trained on a second task, it forgets the first; this phenomenon is referred to as catastrophic forgetting. A mammalian brain, on the other hand, outperforms DNNs in energy efficiency and in its ability to learn sequentially without catastrophic forgetting. Here, we use bio-inspired Spike Timing Dependent Plasticity (STDP) in the feature extraction layers of the network, with instantaneous neurons, to extract meaningful features. In the classification sections of the network we use a modified synaptic intelligence, which we refer to as the cost per synapse metric, as a regularizer to immunize the network against catastrophic forgetting in a Single-Incremental-Task (SIT) scenario. In this study, we use the MNIST handwritten digits dataset, divided into five sub-tasks.
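As a rough illustration of how a per-synapse regularizer can discourage forgetting, the sketch below computes a quadratic penalty in the style of standard synaptic intelligence: each weight is pulled toward its value after the previous task, scaled by an importance score. This is not the authors' cost per synapse metric, whose exact form the abstract does not give; the function names, the `importance` values, and the `strength` parameter are all hypothetical.

```python
def quadratic_penalty(weights, anchor_weights, importance, strength=1.0):
    """Return strength * sum_k importance[k] * (weights[k] - anchor_weights[k])**2.

    weights        -- current synaptic weights (flat list of floats)
    anchor_weights -- weights saved after the previous sub-task
    importance     -- per-synapse importance scores (assumed given)
    strength       -- hypothetical scalar trading off old vs. new tasks
    """
    if not (len(weights) == len(anchor_weights) == len(importance)):
        raise ValueError("all inputs must have the same length")
    return strength * sum(
        omega * (w - w_old) ** 2
        for w, w_old, omega in zip(weights, anchor_weights, importance)
    )


def penalty_gradient(weights, anchor_weights, importance, strength=1.0):
    """Gradient of the penalty with respect to each current weight."""
    return [
        2.0 * strength * omega * (w - w_old)
        for w, w_old, omega in zip(weights, anchor_weights, importance)
    ]
```

In training, a term like this would be added to the loss of the current sub-task, so that synapses with high importance for earlier sub-tasks change less than unimportant ones.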

A Deep Unsupervised Feature Learning Spiking Neural Network with Binarized Classification Layers for EMNIST Classification

Feb 26, 2020 | Ruthvik Vaila, John Chiasson, Vishal Saxena

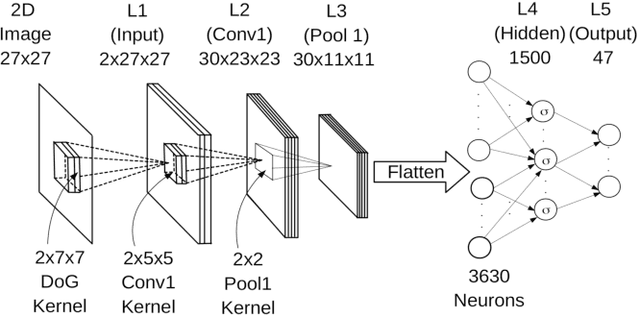

End-user AI is trained on large server farms with data collected from users. With ever-increasing demand for IoT devices, there is a need for deep learning approaches that can be implemented at the edge in an energy-efficient manner. In this work we address this need using spiking neural networks. The unsupervised learning technique of spike timing dependent plasticity (STDP) with binary activations is used to extract features from spiking input data. Gradient descent (backpropagation) is used only on the output layer to train the classifier. The accuracies obtained for the balanced EMNIST data set compare favorably with other approaches. The effect of stochastic gradient descent (SGD) approximations on the learning capabilities of our network is also explored.
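To make the idea of training only the output layer concrete, here is a minimal sketch that fits a single logistic output unit with gradient descent on frozen binary feature vectors. It is a deliberate simplification under stated assumptions: the real task is multi-class, the features would come from the STDP layers, and the function names, learning rate, and epoch count are hypothetical.

```python
import math


def train_output_layer(features, labels, epochs=200, lr=0.5):
    """Fit one logistic output unit on fixed binary features.

    Only the output weights and bias are updated; the feature
    vectors are treated as frozen (as if produced by earlier,
    separately trained layers).
    """
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            z = bias + sum(w * xi for w, xi in zip(weights, x))
            p = 1.0 / (1.0 + math.exp(-z))
            error = p - y  # derivative of cross-entropy loss w.r.t. z
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def predict(weights, bias, x):
    """Return class 1 if the pre-activation is positive, else class 0."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if z > 0 else 0
```

A toy usage, with made-up binary features standing in for extracted spike features:

```python
features = [[1, 0, 0], [1, 1, 0], [0, 0, 1], [0, 1, 1]]
labels = [0, 0, 1, 1]
weights, bias = train_output_layer(features, labels)
predictions = [predict(weights, bias, x) for x in features]
```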

Regression with Deep Learning for Sensor Performance Optimization

Feb 22, 2020 | Ruthvik Vaila, Denver Lloyd, Kevin Tetz

Neural networks with at least two hidden layers are called deep networks. Recent developments in AI and in computer programming in general have led to tools such as TensorFlow, Keras, and NumPy, which make it easier to model data and draw conclusions from it. In this work we revisit non-linear regression with deep learning enabled by Keras and TensorFlow. In particular, we use deep learning to parametrize a non-linear multivariate relationship between the inputs and outputs of an industrial sensor, with the intent of optimizing sensor performance based on selected key metrics.

* Accepted at the Workshop on Microelectronics and Electron Devices, March 30th, 2020

Deep Convolutional Spiking Neural Networks for Image Classification

Mar 28, 2019 | Ruthvik Vaila, John Chiasson, Vishal Saxena

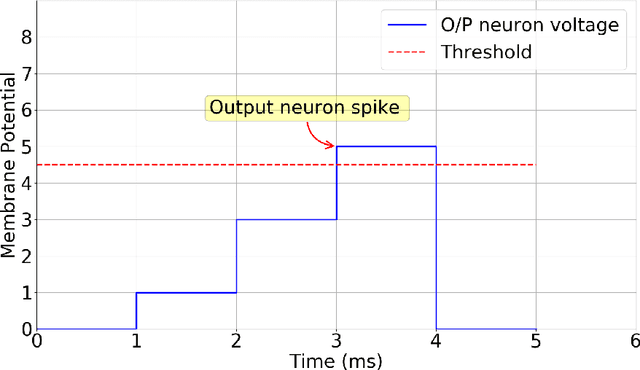

Spiking neural networks are biologically plausible counterparts of artificial neural networks. Artificial neural networks are usually trained with stochastic gradient descent, whereas spiking neural networks are trained with spike timing dependent plasticity. Training deep convolutional neural networks is a memory- and power-intensive job, and spiking networks could potentially help reduce power usage. There is a large pool of tools to choose from for training artificial neural networks of any size; by contrast, the available tools for simulating spiking neural networks are geared towards computational neuroscience applications and are not suitable for real-life applications. In this work we implement a spiking CNN using TensorFlow to examine the behaviour of the network and empirically study the effect of various parameters on its learning capabilities. We also study catastrophic forgetting in the spiking CNN and the weight initialization problem in R-STDP, using the MNIST and N-MNIST data sets.
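For readers unfamiliar with spike timing dependent plasticity, the sketch below shows one simplified form of the rule that is common in the spiking CNN literature: a synapse is strengthened when the presynaptic spike arrives no later than the postsynaptic spike and weakened otherwise, with a `w * (1 - w)` factor keeping the weight within [0, 1]. The abstract does not state which STDP variant the paper uses, so the rule form, the function name, and the learning rates `a_plus` and `a_minus` are assumptions for illustration only.

```python
def stdp_update(weight, t_pre, t_post, a_plus=0.004, a_minus=0.003):
    """Return the updated weight after one pre/post spike pair.

    weight  -- current synaptic weight, assumed to lie in [0, 1]
    t_pre   -- presynaptic spike time
    t_post  -- postsynaptic spike time
    a_plus  -- hypothetical potentiation rate
    a_minus -- hypothetical depression rate
    """
    stabilizer = weight * (1.0 - weight)  # vanishes at 0 and 1
    if t_pre <= t_post:
        delta = a_plus * stabilizer       # pre before post: potentiate
    else:
        delta = -a_minus * stabilizer     # pre after post: depress
    # Clamp to guard against floating-point drift outside [0, 1].
    return min(1.0, max(0.0, weight + delta))
```

Because the update depends only on the relative order of the two spike times, it needs no labels and no gradient, which is why rules of this kind are used for unsupervised feature learning.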
