L0 Regularization Based Neural Network Design and Compression

May 31, 2019 · S. Asim Ahmed

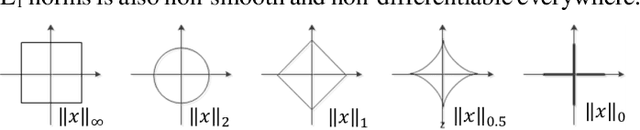

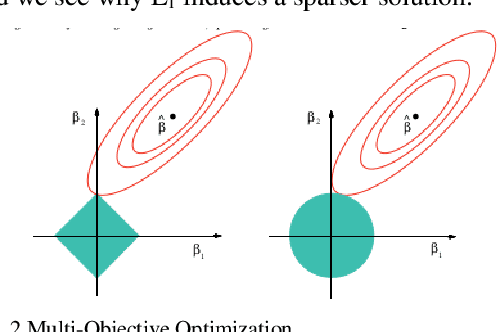

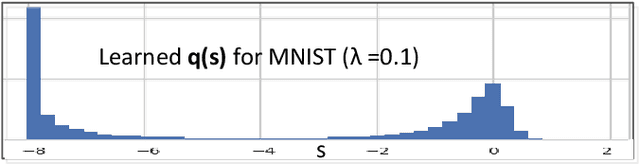

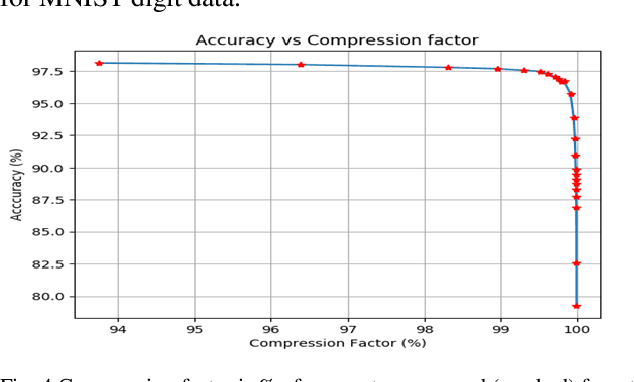

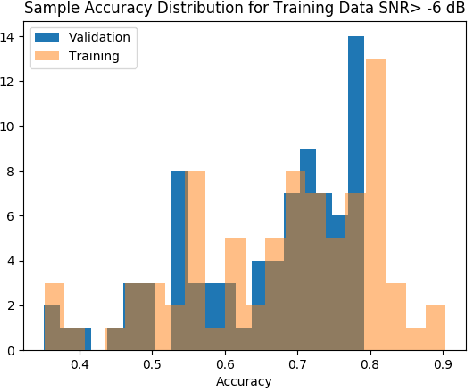

We consider the complexity of Deep Neural Networks (DNNs) and their associated massive over-parameterization. Such over-parameterization may entail susceptibility to adversarial attacks, loss of interpretability, and adverse Size, Weight and Power - Cost (SWaP-C) considerations. We ask whether there are methodical ways (regularization) to reduce complexity, and how to interpret the trade-off between a desired metric and the complexity of a DNN. Reducing complexity is directly applicable to scaling AI applications to real-world problems (especially off-the-cloud applications). We show the presence of a knee in the trade-off curve and evaluate it. We apply a form of L0 regularization to MNIST data and to signal modulation classification. We show that such regularization also captures saliency in the input space.
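As a rough illustration of the trade-off described above, the sketch below adds an L0 penalty (a count of nonzero weights) to a task loss. It is not the form of L0 regularization used in the paper, which the abstract does not specify; the names `l0_penalty`, `regularized_loss`, `lam`, and `tol`, and all numeric values, are assumptions made for this example.

```python
def l0_penalty(weights, tol=0.0):
    """Count the weights whose magnitude exceeds tol (the L0 'norm')."""
    return sum(1 for w in weights if abs(w) > tol)


def regularized_loss(task_loss, weights, lam):
    """Task loss plus lam times the number of nonzero weights.

    lam (hypothetical name) sets how strongly complexity is penalized.
    """
    return task_loss + lam * l0_penalty(weights)


# Illustrative values only: five weights, two of them nonzero.
weights = [0.0, 0.8, 0.0, -1.2, 0.0]
total = regularized_loss(task_loss=0.35, weights=weights, lam=0.01)
```

Sweeping `lam` and recording the task metric against the nonzero-weight count is one way to trace a trade-off curve of the kind the abstract mentions. The count itself is not differentiable, so practical training would need a relaxation or surrogate.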

Deep Neural Networks based Modrec: Some Results with Inter-Symbol Interference and Adversarial Examples

Nov 14, 2018 · S. Asim Ahmed, Subhashish Chakravarty, Michael Newhouse

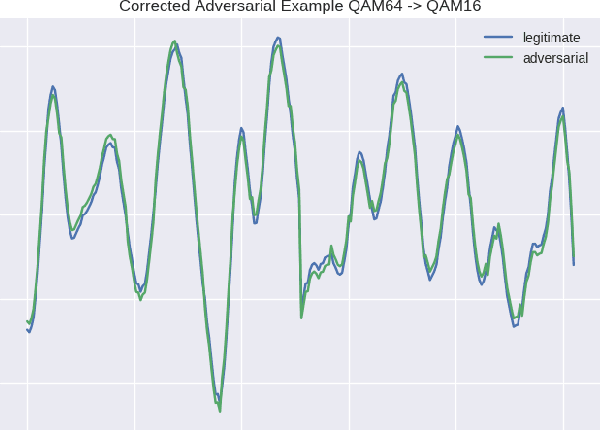

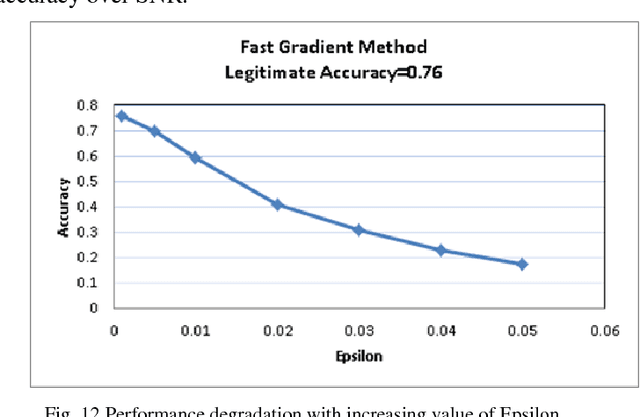

Recent successes and advances in Deep Neural Networks (DNNs) in machine vision and Natural Language Processing (NLP) have motivated their use in traditional signal processing and communications systems. In this paper, we present results of such applications to the problem of automatic modulation recognition. Variations in wireless communication channels are represented by statistical channel models, and their parameterization will increase with the advent of 5G. We report the effect of a simple two-path channel model on our naive deep neural network based implementation. We also report the impact of adversarial perturbations to the input signal.
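To make the two-path channel concrete, here is a minimal sketch of one common discrete-time form: the received sample is the direct-path sample plus a delayed, scaled copy, which is what produces inter-symbol interference. The paper's exact channel parameters are not given in the abstract, so the function name `two_path_channel` and the `delay` and `gain` values are assumptions chosen for illustration.

```python
def two_path_channel(samples, delay, gain):
    """Apply y[n] = x[n] + gain * x[n - delay], with x[n] = 0 for n < 0.

    delay is in samples; gain may be real or complex (both hypothetical
    parameters, not taken from the paper).
    """
    if delay < 0:
        raise ValueError("delay must be non-negative")
    received = []
    for n, direct in enumerate(samples):
        # The second path contributes only once the delayed sample exists.
        echo = samples[n - delay] if n >= delay else 0
        received.append(direct + gain * echo)
    return received


# Four QPSK-like symbols passed through a one-sample echo at half amplitude.
symbols = [1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j]
received = two_path_channel(symbols, delay=1, gain=0.5)
```

Each output after the first mixes the current symbol with the previous one, so a classifier trained on clean constellations sees shifted points.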
