Robust Few-Shot Ensemble Learning with Focal Diversity-Based Pruning

Apr 05, 2024 · Selim Furkan Tekin, Fatih Ilhan, Tiansheng Huang, Sihao Hu, Ka-Ho Chow, Margaret L. Loper, Ling Liu

This paper presents FusionShot, a focal diversity optimized few-shot ensemble learning approach for boosting the robustness and generalization performance of pre-trained few-shot models. The paper makes three original contributions. First, we explore the unique characteristics of few-shot learning to ensemble multiple few-shot (FS) models by creating three alternative fusion channels. Second, we introduce the concept of focal error diversity to learn the most efficient ensemble teaming strategy, rather than assuming that an ensemble of more base models will outperform smaller sub-ensembles. We develop a focal-diversity ensemble pruning method to effectively prune out candidate ensembles with low ensemble error diversity and recommend the top-K FS ensembles with the highest focal error diversity. Finally, we capture the complex non-linear patterns of ensemble few-shot predictions by designing a learn-to-combine algorithm, which learns diverse weight assignments for robust ensemble fusion over different member models. Extensive experiments on representative few-shot benchmarks show that the top-K ensembles recommended by FusionShot can outperform representative state-of-the-art (SOTA) few-shot models on novel tasks (tasks with different distributions that are unknown at training time), and can prevail over existing few-shot learners in both cross-domain and adversarial settings. For reproducibility, the trained FusionShot models, results, and code are available at https://github.com/sftekin/fusionshot
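The sketch below illustrates the pruning idea in plain Python. It scores every candidate sub-ensemble and keeps the top-K. The score is a simplified, focal-style error diversity that we assume for illustration. Each member takes a turn as the focal model, and the score measures how often the other members are correct on the samples the focal model gets wrong. This is not the exact focal diversity metric defined in the paper, and `error_diversity` and `top_k_ensembles` are hypothetical names.

```python
from itertools import combinations
from typing import Dict, List, Sequence, Tuple


def error_diversity(
    preds: Dict[str, Sequence[int]],
    labels: Sequence[int],
    members: Tuple[str, ...],
) -> float:
    """Simplified focal-style error diversity (illustrative assumption,
    not the paper's exact metric).

    Each member acts as the focal model in turn. On the samples the focal
    model misclassifies, compute the fraction of (other member, sample)
    pairs that are correct. The average over focal models lies in [0, 1];
    higher means the members' errors overlap less."""
    per_focal = []
    for focal in members:
        wrong = [i for i, y in enumerate(labels) if preds[focal][i] != y]
        if not wrong:
            continue  # a focal model with no errors carries no error signal
        others = [m for m in members if m != focal]
        correct = sum(preds[m][i] == labels[i] for m in others for i in wrong)
        per_focal.append(correct / (len(others) * len(wrong)))
    return sum(per_focal) / len(per_focal) if per_focal else 0.0


def top_k_ensembles(
    preds: Dict[str, Sequence[int]],
    labels: Sequence[int],
    k: int,
    min_size: int = 2,
) -> List[Tuple[str, ...]]:
    """Enumerate every sub-ensemble with at least `min_size` members and keep
    the k with the highest error diversity (ties keep enumeration order)."""
    names = sorted(preds)
    candidates = [
        combo
        for size in range(min_size, len(names) + 1)
        for combo in combinations(names, size)
    ]
    candidates.sort(key=lambda c: error_diversity(preds, labels, c), reverse=True)
    return candidates[:k]


# Toy validation predictions: A and B make identical mistakes, C errs elsewhere.
labels = [0, 1, 1, 0, 1, 0]
preds = {
    "A": [0, 1, 0, 0, 1, 1],
    "B": [0, 1, 0, 0, 1, 1],
    "C": [1, 1, 1, 0, 0, 0],
}
print(top_k_ensembles(preds, labels, k=2))  # [('A', 'C'), ('B', 'C')]
```

In this toy data, the full three-model ensemble scores 2/3 and ranks below the two-model teams (A, C) and (B, C), which score 1.0. This mirrors the abstract's point that a larger ensemble is not automatically better. The sketch enumerates all candidates exhaustively, which is only practical for a small pool of base models.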

Large Language Model-Powered Smart Contract Vulnerability Detection: New Perspectives

Oct 16, 2023 · Sihao Hu, Tiansheng Huang, Fatih İlhan, Selim Furkan Tekin, Ling Liu

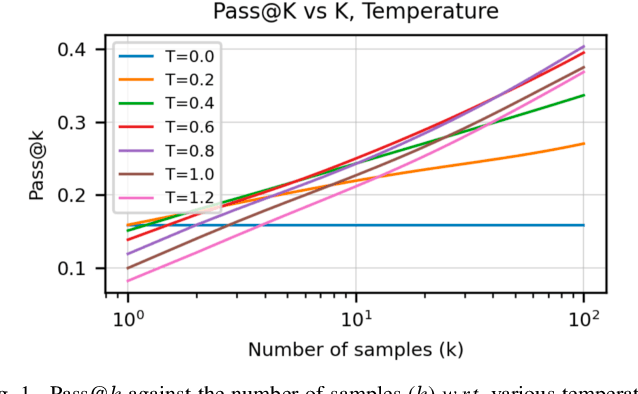

This paper provides a systematic analysis, based on our ongoing research, of the opportunities, challenges, and potential solutions of harnessing Large Language Models (LLMs) such as GPT-4 to uncover vulnerabilities in smart contracts. For smart contract vulnerability detection, practical usability hinges on identifying as many true vulnerabilities as possible while minimizing false positives. Nonetheless, our empirical study reveals contradictory yet interesting findings: generating more answers with higher randomness greatly increases the likelihood of producing a correct answer but inevitably leads to more false positives. To mitigate this tension, we propose an adversarial framework dubbed GPTLens that breaks conventional one-stage detection into two synergistic stages, generation and discrimination, for progressive detection and refinement. In these stages the LLM plays dual roles: auditor and critic, respectively. The goal of the auditor is to yield a broad spectrum of vulnerabilities in the hope of encompassing the correct answer. The goal of the critic, which evaluates the validity of the identified vulnerabilities, is to minimize the number of false positives. Experimental results and illustrative examples demonstrate that the auditor and critic work together harmoniously to yield pronounced improvements over conventional one-stage detection. GPTLens is intuitive, strategic, and entirely LLM-driven without relying on specialist expertise in smart contracts, showcasing its methodical generality and potential to detect a broad spectrum of vulnerabilities. Our code is available at: https://github.com/git-disl/GPTLens.

* 10 pages
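The sketch below shows the generate-then-discriminate structure in plain Python. The auditor and critic are stand-in callables with fixed outputs. In GPTLens both roles are played by an LLM through prompting, which this sketch does not reproduce. `Finding`, `two_stage_detect`, the score threshold, and the 0 to 10 score scale are hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Set, Tuple


@dataclass(frozen=True)
class Finding:
    """One candidate vulnerability reported by a (hypothetical) auditor."""
    function_name: str
    vulnerability: str
    reason: str


def two_stage_detect(
    code: str,
    auditor: Callable[[str, int], List[Finding]],
    critic: Callable[[str, Finding], float],
    n_auditor_runs: int = 3,
    threshold: float = 5.0,
    top_k: int = 3,
) -> List[Finding]:
    # Stage 1 (generation): several auditor runs widen coverage;
    # findings repeated across runs are merged.
    candidates: List[Finding] = []
    seen: Set[Tuple[str, str]] = set()
    for run in range(n_auditor_runs):
        for finding in auditor(code, run):
            key = (finding.function_name, finding.vulnerability)
            if key not in seen:
                seen.add(key)
                candidates.append(finding)

    # Stage 2 (discrimination): the critic scores each candidate; findings
    # below the threshold are treated as likely false positives and dropped.
    scored = [(critic(code, f), f) for f in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [f for score, f in scored if score >= threshold][:top_k]


# Toy stand-ins for the two LLM roles (assumptions, not GPTLens prompts).
POOL = [
    Finding("withdraw", "reentrancy", "external call before balance update"),
    Finding("setOwner", "access control", "no owner check"),
    Finding("deposit", "integer overflow", "unchecked addition"),
]


def toy_auditor(code: str, run: int) -> List[Finding]:
    return POOL[run % 3 : run % 3 + 2]  # each run reports a different slice


def toy_critic(code: str, finding: Finding) -> float:
    scores = {"reentrancy": 9.0, "access control": 6.0, "integer overflow": 2.0}
    return scores[finding.vulnerability]


result = two_stage_detect("contract Bank {}", toy_auditor, toy_critic)
print([f.function_name for f in result])  # ['withdraw', 'setOwner']
```

The auditor runs together surface three distinct candidates. The critic then filters out the low-scoring one, so the two stages trade recall in the first step for precision in the second.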

Crime Prediction with Graph Neural Networks and Multivariate Normal Distributions

Dec 16, 2021 · Selim Furkan Tekin, Suleyman Serdar Kozat

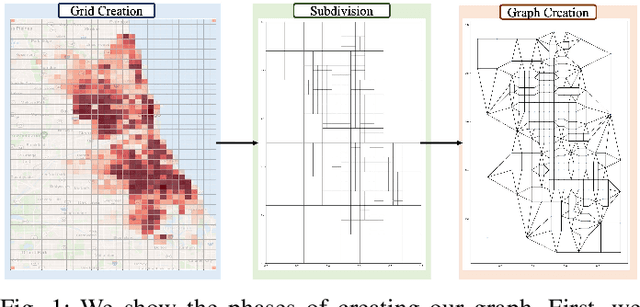

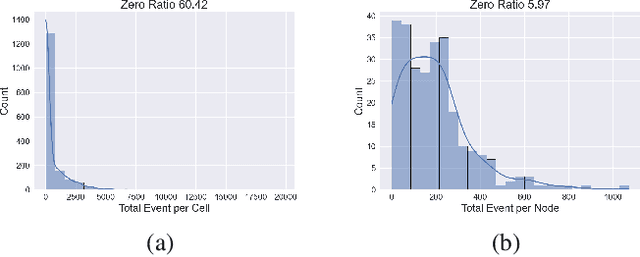

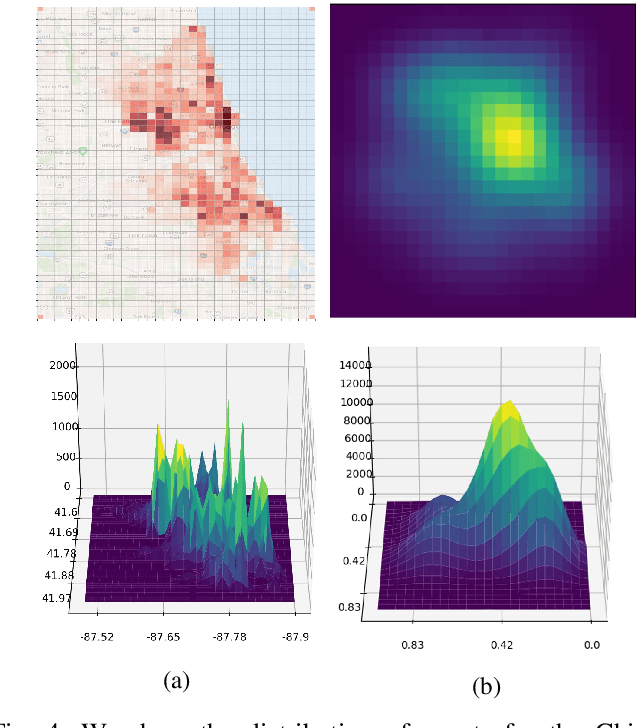

Existing approaches to the crime prediction problem fail to capture fine-grained detail because they assign probability values to large regions. This paper introduces a new architecture that combines graph convolutional networks (GCNs) with multivariate Gaussian distributions to perform high-resolution forecasting, and the architecture applies to any spatiotemporal data. We tackle the sparsity problem at high resolution by leveraging the flexible structure of GCNs and providing a subdivision algorithm. We build our model with Graph Convolutional Gated Recurrent Units (Graph-ConvGRU) to learn spatial, temporal, and categorical relations. At each node of the graph, we learn a multivariate probability distribution from the features extracted by the GCNs. We perform experiments on real-life and synthetic datasets, and our model obtains the best validation and test scores among the baseline models, with significant improvements. We show that our model is not only generative but also precise.
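The sketch below shows one propagation step of the standard GCN rule of Kipf and Welling, H' = ReLU(D^{-1/2}(A + I)D^{-1/2} H W). It illustrates how a graph convolution lets a sparse cell borrow signal from its neighbors. This is a plain-Python sketch on an assumed three-cell grid graph. It is not the paper's Graph-ConvGRU, which adds gated recurrence and a per-node multivariate Gaussian output. The function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product (kept dependency-free for the sketch)."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def normalized_adjacency(adj: Matrix) -> Matrix:
    """D^{-1/2} (A + I) D^{-1/2}: add self-loops, then normalize symmetrically
    by node degree so high-degree nodes do not dominate the aggregation."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def gcn_layer(adj: Matrix, h: Matrix, w: Matrix) -> Matrix:
    """One graph-convolution step: ReLU(A_norm @ H @ W)."""
    z = matmul(matmul(normalized_adjacency(adj), h), w)
    return [[max(0.0, v) for v in row] for row in z]


# Three grid cells in a row (0 - 1 - 2); only cell 0 has a recorded event.
adj = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
h = [[1.0], [0.0], [0.0]]  # one feature per node
w = [[1.0]]                # identity weight, for readability
out = gcn_layer(adj, h, w)
print([round(row[0], 3) for row in out])  # [0.5, 0.408, 0.0]
```

After one step, cell 1 receives part of cell 0's signal even though it had no event of its own. Stacking layers spreads information further across the graph.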

Spatio-temporal Weather Forecasting and Attention Mechanism on Convolutional LSTMs

Feb 01, 2021 · Selim Furkan Tekin, Oguzhan Karaahmetoglu, Fatih Ilhan, Ismail Balaban, Suleyman Serdar Kozat

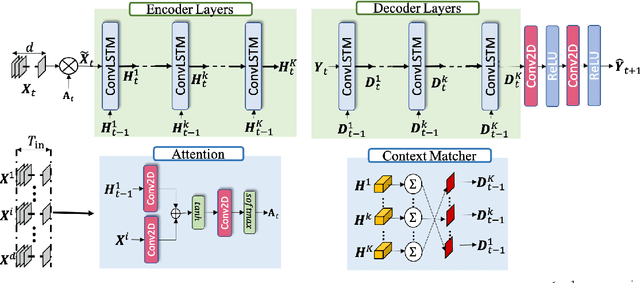

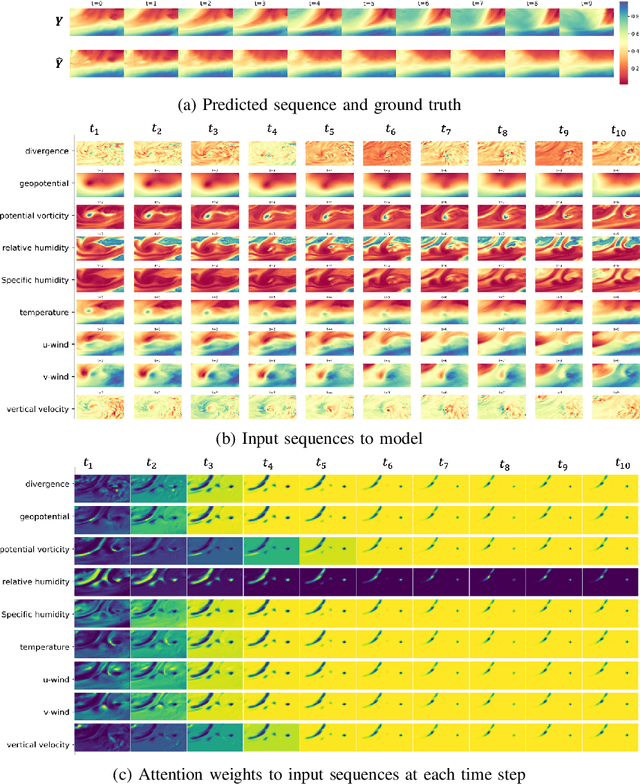

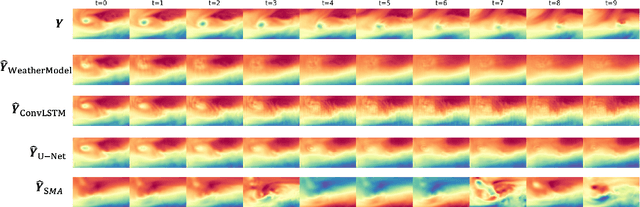

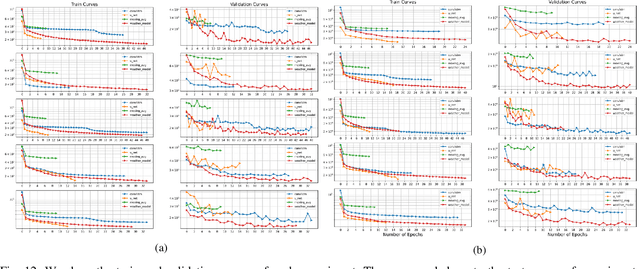

Numerical weather forecasting with high-resolution physical models consumes hours of computation on supercomputers. Applying deep learning and machine learning methods to forecasting has opened up new solutions in this area. In this paper, we forecast high-resolution numeric weather data using both input weather data and observations with a novel deep learning architecture. We formulate the problem as spatio-temporal prediction. Our model is composed of Convolutional Long Short-Term Memory (ConvLSTM) and Convolutional Neural Network (CNN) units in an encoder-decoder structure. We enhance short- and long-term performance and interpretability with an attention mechanism and a context matcher mechanism. We perform experiments on a large-scale, real-life benchmark numerical weather dataset, ERA5 hourly data on pressure levels, and forecast temperature. The results show significant improvements in capturing both spatial and temporal correlations, with attention matrices focusing on different parts of the input series. Our model obtains the best validation and test scores among the baseline models, including the ConvLSTM forecasting network and U-Net. We provide qualitative and quantitative results and show that our model forecasts 10 time steps at a 3-hour frequency (a 30-hour horizon) with an average error of 2 degrees. Our code and data are publicly available.
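The sketch below illustrates attention over an input series with generic scaled dot-product attention across encoder time steps. It assumes that each ConvLSTM feature map has been flattened into a vector and that the decoder supplies a query vector. The paper's exact attention formulation and its context matcher are not reproduced here, and `temporal_attention` is a hypothetical name.

```python
import math
from typing import List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_attention(
    query: Sequence[float], encoder_states: Sequence[Sequence[float]]
) -> Tuple[List[float], List[float]]:
    """Scaled dot-product attention over encoder time steps.

    Returns (weights, context): one weight per input time step, and the
    weighted sum of encoder states that a decoder step would consume."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, h)) / scale for h in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [sum(w * h[d] for w, h in zip(weights, encoder_states)) for d in range(dim)]
    return weights, context


# Three past time steps, each a flattened (toy) 2-d hidden state.
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
weights, context = temporal_attention([1.0, 0.0], states)
print([round(w, 3) for w in weights])  # [0.401, 0.198, 0.401]
```

The weights form a distribution over past time steps. Plotting them per forecast step gives the kind of attention matrix the abstract uses for interpretability.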
