Adaptive strategy in differential evolution via explicit exploitation and exploration controls

Feb 03, 2020 · Sheng Xin Zhang, Wing Shing Chan, Kit Sang Tang, Shao Yong Zheng

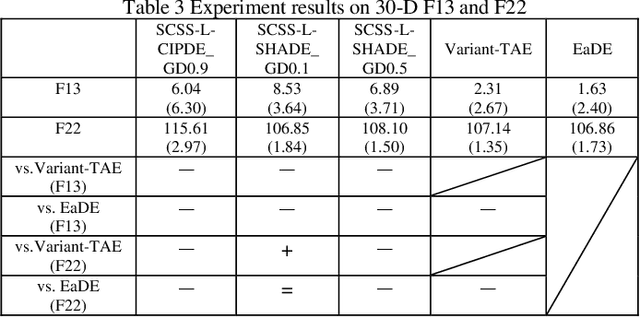

When introducing new strategies alongside an existing one, two key issues should be addressed. One is to distribute computational resources efficiently so that the appropriate strategy dominates. The other is to remedy or even eliminate the drawbacks of inappropriate strategies. Adaptation is a popular and efficient method for strategy adjustment and has been widely studied in the literature. Existing methods commonly trial multiple strategies and then reward the better-performing ones with more resources based on their previous performance. As a result, they may not address these two key issues efficiently. On the one hand, they rely on trial and error, so inappropriate strategies consume resources. On the other hand, since multiple strategies are involved in the trial, the inappropriate strategies could mislead the search. In this paper, we propose an adaptive differential evolution (DE) with explicit exploitation and exploration controls (Explicit adaptation DE, EaDE), which is the first attempt to use offline knowledge to separate multiple strategies and so exempt the optimization from trial and error. EaDE divides the evolution process into several SCSS (selective-candidate with similarity selection) generations and adaptive generations. Exploitation and exploration needs are learned in the SCSS generations by a relatively balanced strategy. In the adaptive generations, one of two alternative strategies, an exploitative one or an explorative one, is employed to meet these needs. Experimental studies on 28 benchmark functions confirm the effectiveness of the proposed method.

Multi-Layer Competitive-Cooperative Framework for Performance Enhancement of Differential Evolution

Feb 07, 2018 · Sheng Xin Zhang, Li Ming Zheng, Kit Sang Tang, Shao Yong Zheng, Wing Shing Chan

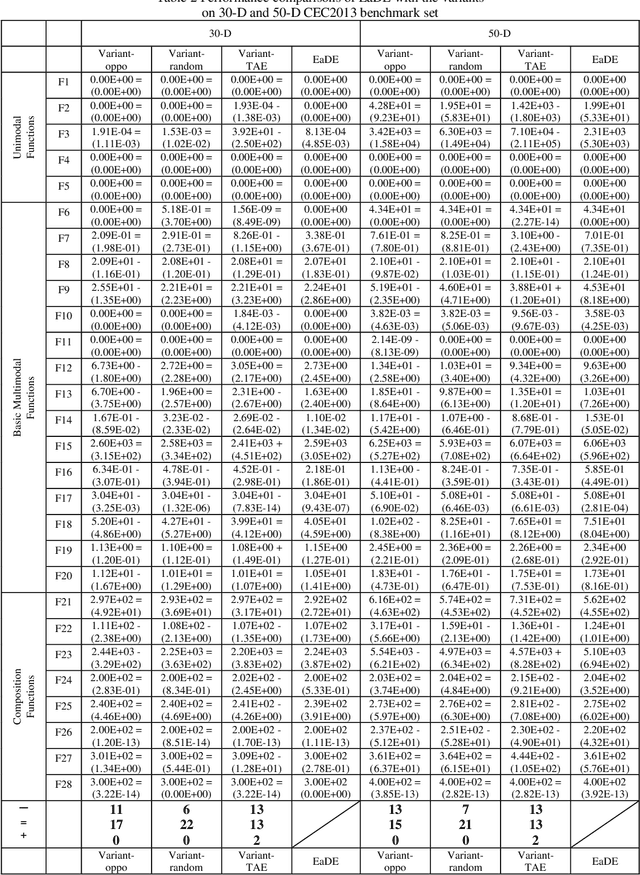

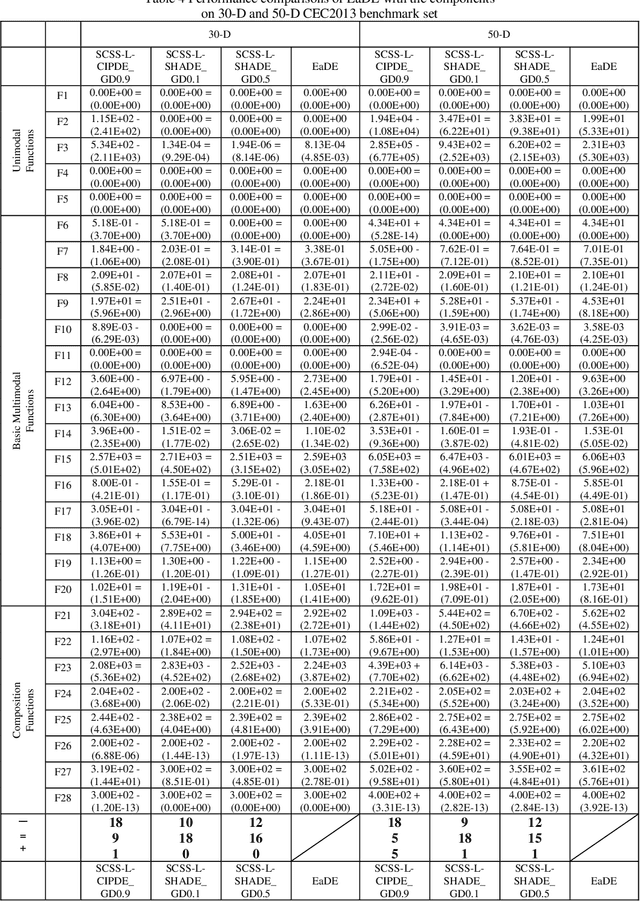

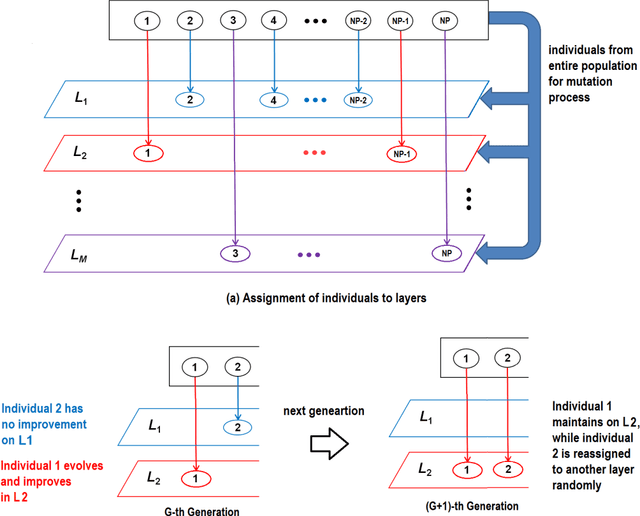

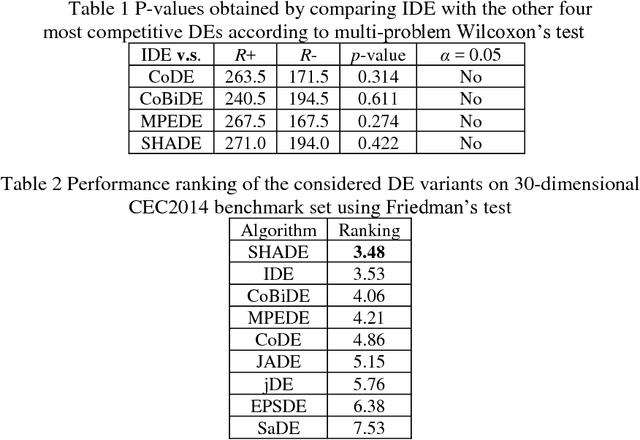

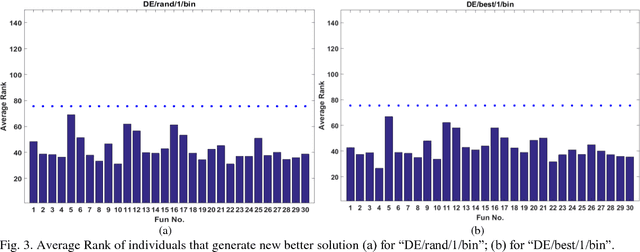

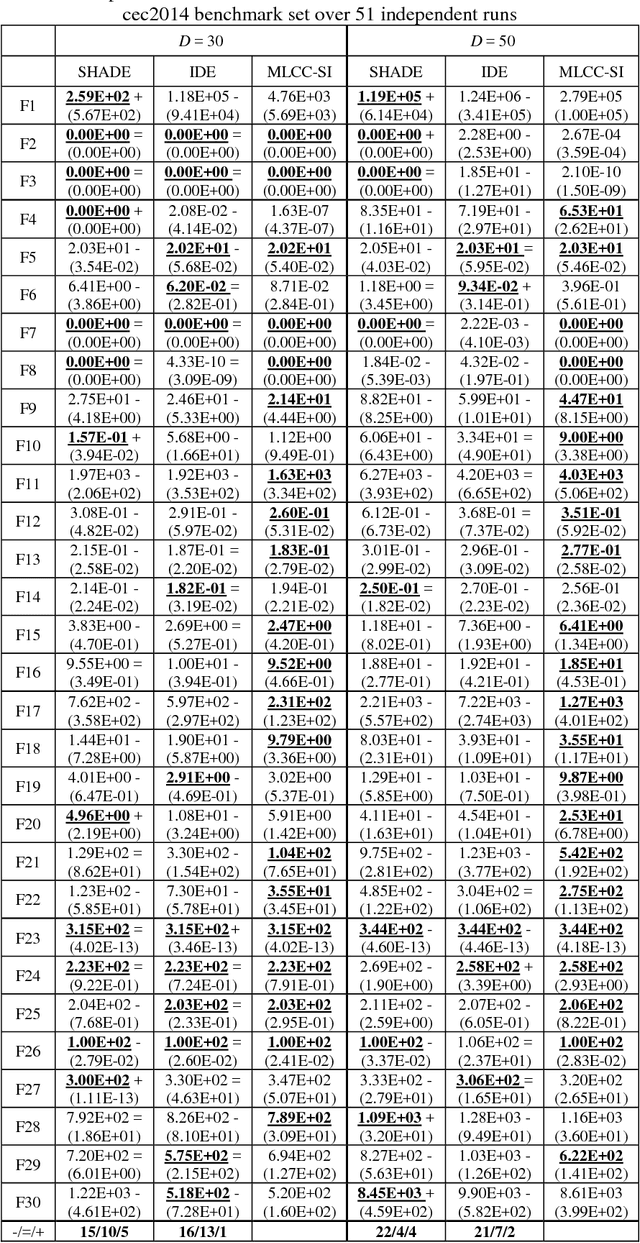

Differential Evolution (DE) is one of the most powerful optimizers in the evolutionary algorithm (EA) family. In recent years, many DE variants have been proposed to enhance performance. However, when these variants are compared with each other, significant differences in performance are seldom observed. To meet the challenge of achieving a more significant improvement, this paper proposes a multi-layer competitive-cooperative (MLCC) framework to combine the advantages of multiple DEs. Existing multi-method strategies commonly use a multi-population structure, which divides the entire population into several subpopulations and evolves individuals only within their corresponding subgroups. MLCC instead implements a parallel structure in which the entire population is simultaneously monitored by multiple DEs assigned to multiple layers. Each individual can store, utilize and update its evolution information in different layers by using a novel individual preference based layer selecting (IPLS) mechanism and a computational resource allocation bias (RAB) mechanism. In IPLS, individuals connect only to one favorite layer, while in RAB, high-quality solutions are evolved by considering all the layers. In this way, the multiple layers work in a competitive and cooperative manner. The proposed MLCC framework has been implemented on several highly competitive DEs. Experimental studies show that MLCC variants significantly outperform the baseline DEs as well as several state-of-the-art and up-to-date DEs on the CEC benchmark functions.
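For readers unfamiliar with the baseline optimizer that these frameworks build on, the following is a minimal sketch of the classic DE/rand/1/bin scheme. It is not MLCC and not any of the specific DE variants used in the paper. The function names (`sphere`, `de_rand_1_bin`), the parameter defaults (`f=0.5`, `cr=0.9`, population size 20) and the bound-clamping step are assumptions chosen for illustration only.

```python
import random


def sphere(x):
    """Toy objective (assumed for illustration): sum of squares, minimum at 0."""
    return sum(v * v for v in x)


def de_rand_1_bin(objective, dim, bounds, pop_size=20, f=0.5, cr=0.9,
                  generations=100, seed=0):
    """Minimize `objective` with classic DE/rand/1/bin (illustrative sketch)."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Random initial population inside the box [lo, hi]^dim.
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    fitness = [objective(ind) for ind in pop]

    for _ in range(generations):
        new_pop, new_fitness = [], []
        for i in range(pop_size):
            # Three distinct donors, all different from the target i.
            r1, r2, r3 = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees one mutated coordinate
            trial = []
            for j in range(dim):
                if rng.random() < cr or j == j_rand:
                    # rand/1 mutation: base vector plus scaled difference.
                    v = pop[r1][j] + f * (pop[r2][j] - pop[r3][j])
                    v = min(max(v, lo), hi)  # clamp to bounds (an assumption)
                else:
                    v = pop[i][j]  # binomial crossover keeps the parent gene
                trial.append(v)
            trial_fit = objective(trial)
            # Greedy one-to-one selection between parent and trial.
            if trial_fit <= fitness[i]:
                new_pop.append(trial)
                new_fitness.append(trial_fit)
            else:
                new_pop.append(pop[i])
                new_fitness.append(fitness[i])
        pop, fitness = new_pop, new_fitness

    best = min(range(pop_size), key=fitness.__getitem__)
    return pop[best], fitness[best]


best_x, best_f = de_rand_1_bin(sphere, dim=3, bounds=(-5.0, 5.0), generations=50)
```

Because selection is greedy, the best objective value in the population never gets worse from one generation to the next. A multi-method framework in the spirit of MLCC would run several such DE variants over a shared population, which this single-strategy sketch does not attempt.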

Selective-Candidate Framework with Similarity Selection Rule for Evolutionary Optimization

Jan 04, 2018 · Sheng Xin Zhang, Wing Shing Chan, Zi Kang Peng, Shao Yong Zheng, Kit Sang Tang

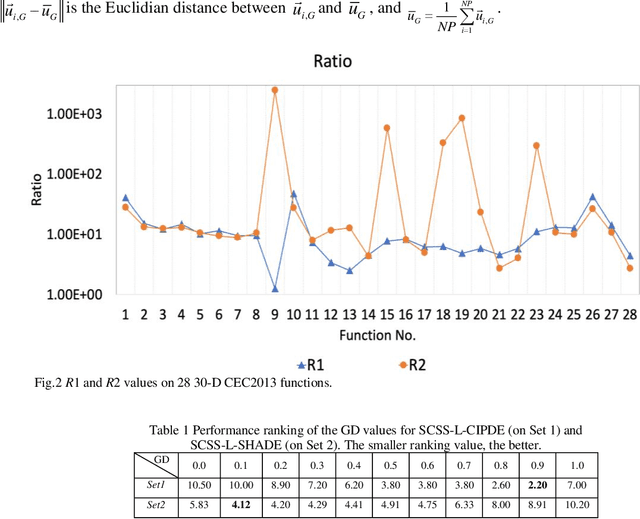

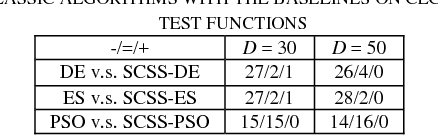

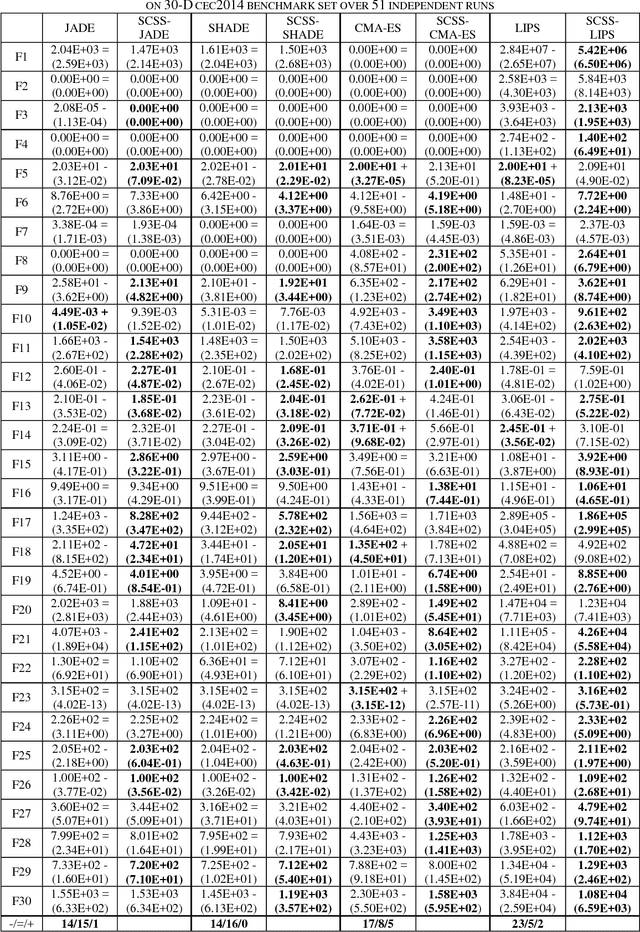

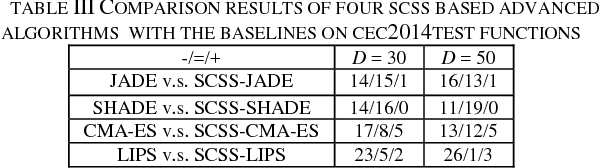

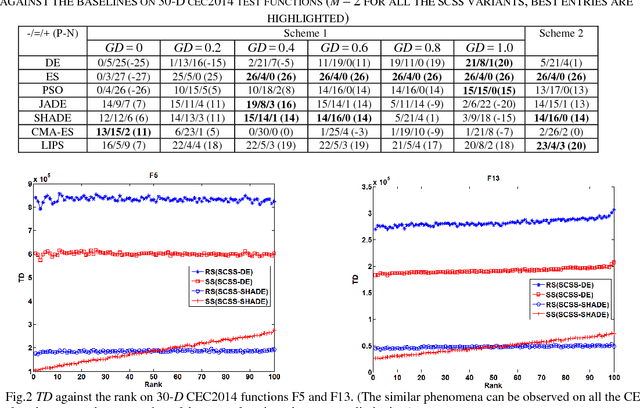

This paper proposes to resolve limitations of the traditional one-reproduction (OR) framework, which produces only one candidate in a single reproduction procedure. A selective-candidate framework with similarity selection rule (SCSS) is suggested to make a selective direction of search possible. In the SCSS framework, M (M > 1) candidates are generated from each current solution by independently conducting the reproduction procedure M times. The winner is then determined by employing a similarity selection rule. To maintain balanced exploitation and exploration capabilities, an efficient similarity selection rule based on the Euclidean distances between each of the M candidates and the corresponding current solution is proposed. The SCSS framework can be easily applied to any evolutionary algorithm or swarm intelligence method. Experiments conducted with 60 benchmark functions show the superiority of SCSS over OR in three classic, four state-of-the-art and four up-to-date algorithms.
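The candidate-generation and distance-based selection steps described above can be sketched roughly as follows. The abstract does not state how the similarity rule decides between nearer and farther candidates, so the `prefer_nearest` flag is an assumption made for illustration, as are the helper names (`generate_candidates`, `select_by_similarity`, `gaussian_step`) and the Gaussian perturbation standing in for a real reproduction operator.

```python
import math
import random


def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def gaussian_step(parent, rng, sigma=0.5):
    """Hypothetical reproduction operator: perturb each coordinate."""
    return [x + rng.gauss(0.0, sigma) for x in parent]


def generate_candidates(parent, reproduce, m, rng):
    """Run the reproduction procedure m times independently (m > 1)."""
    return [reproduce(parent, rng) for _ in range(m)]


def select_by_similarity(parent, candidates, prefer_nearest):
    """Pick one candidate by its distance to the current solution.

    prefer_nearest=True returns the candidate closest to the parent
    (a more exploitative choice); False returns the farthest one
    (a more explorative choice). How to set this flag per individual
    is an assumption here, not taken from the abstract.
    """
    def distance(c):
        return euclidean(c, parent)

    if prefer_nearest:
        return min(candidates, key=distance)
    return max(candidates, key=distance)


rng = random.Random(0)
parent = [1.0, -2.0, 0.5]
candidates = generate_candidates(parent, gaussian_step, m=4, rng=rng)
nearest = select_by_similarity(parent, candidates, prefer_nearest=True)
farthest = select_by_similarity(parent, candidates, prefer_nearest=False)
```

In a full algorithm the selected candidate would then compete with the current solution in the usual survivor selection step, so the sketch only changes which candidate is put forward, not how it is accepted.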
