Unsupervised Audio-Visual Segmentation with Modality Alignment

Mar 21, 2024 · Swapnil Bhosale, Haosen Yang, Diptesh Kanojia, Jiankang Deng, Xiatian Zhu

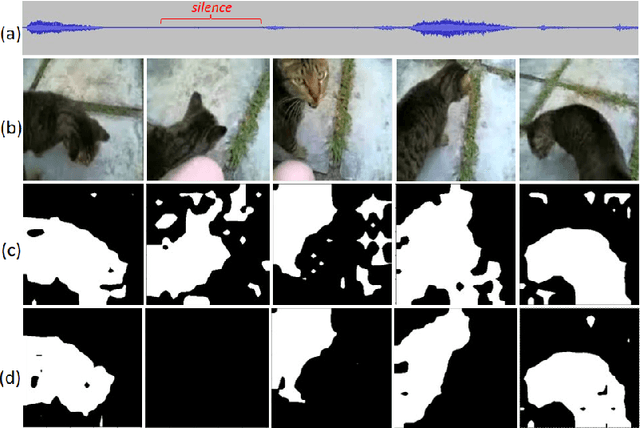

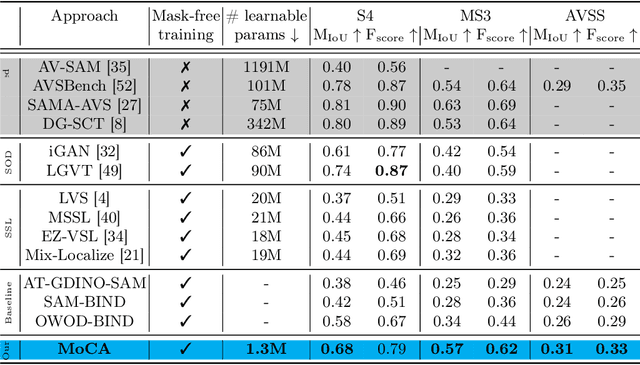

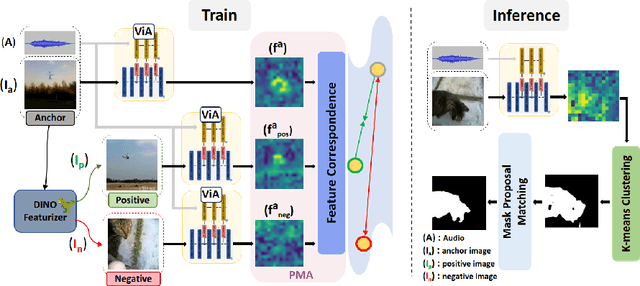

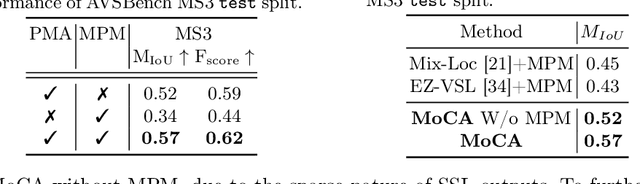

Audio-Visual Segmentation (AVS) aims to identify, at the pixel level, the object in a visual scene that produces a given sound. Current AVS methods rely on costly fine-grained annotations of mask-audio pairs, which limits their scalability. To address this, we introduce unsupervised AVS, which eliminates the need for such expensive annotation. To tackle this more challenging problem, we propose an unsupervised learning method, named Modality Correspondence Alignment (MoCA), which integrates off-the-shelf foundation models such as DINO, SAM, and ImageBind. This approach leverages their complementary knowledge and optimizes their joint use for multi-modal association. First, we estimate positive and negative image pairs in the feature space. For pixel-level association, we introduce an audio-visual adapter and a novel pixel matching aggregation strategy within the image-level contrastive learning framework. This allows a flexible connection between object appearance and audio signal at the pixel level, with tolerance to imaging variations such as translation and rotation. Extensive experiments on the AVSBench (single- and multi-object splits) and AVSS datasets demonstrate that MoCA outperforms strong baseline methods and approaches its supervised counterparts, particularly in complex scenarios with multiple auditory objects. Notably, in terms of mIoU, MoCA achieves substantial improvements over the baselines on both the AVSBench (S4: +17.24%; MS3: +67.64%) and AVSS (+19.23%) audio-visual segmentation benchmarks.
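
As a rough illustration of the mIoU figures quoted above, the sketch below computes intersection-over-union between binary segmentation masks and averages it over samples. It is a minimal sketch assuming 0/1 masks stored as nested lists; the function names are hypothetical, and the benchmarks' official evaluation protocol (for example, how empty masks or per-video averaging are handled) may differ.

```python
from typing import List, Sequence

# Hypothetical mask type: 1 = sounding-object pixel, 0 = background.
Mask = Sequence[Sequence[int]]


def mask_iou(pred: Mask, gt: Mask) -> float:
    """Intersection-over-union of two binary masks with the same height and width."""
    inter = union = 0
    for row_p, row_g in zip(pred, gt):
        for p, g in zip(row_p, row_g):
            inter += p & g  # pixel predicted AND annotated as sounding
            union += p | g  # pixel predicted OR annotated as sounding
    # Convention assumed here: two empty masks count as a perfect match.
    return 1.0 if union == 0 else inter / union


def mean_iou(preds: List[Mask], gts: List[Mask]) -> float:
    """mIoU taken as the average of per-sample IoU scores."""
    if len(preds) != len(gts) or not preds:
        raise ValueError("need equally many, non-empty prediction and ground-truth masks")
    return sum(mask_iou(p, g) for p, g in zip(preds, gts)) / len(preds)


pred = [[1, 1, 0],
        [0, 1, 0]]
gt = [[1, 0, 0],
      [0, 1, 1]]
print(mask_iou(pred, gt))                # 2 shared pixels / 4 union pixels = 0.5
print(mean_iou([pred, gt], [gt, gt]))    # (0.5 + 1.0) / 2 = 0.75
```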

Sarcasm in Sight and Sound: Benchmarking and Expansion to Improve Multimodal Sarcasm Detection

Sep 29, 2023 · Swapnil Bhosale, Abhra Chaudhuri, Alex Lee Robert Williams, Divyank Tiwari, Anjan Dutta, Xiatian Zhu, Pushpak Bhattacharyya, Diptesh Kanojia

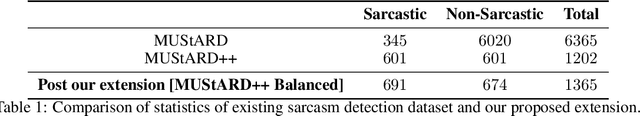

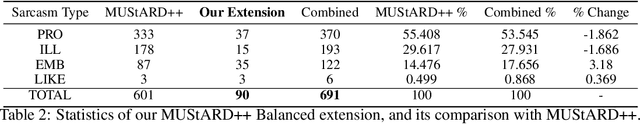

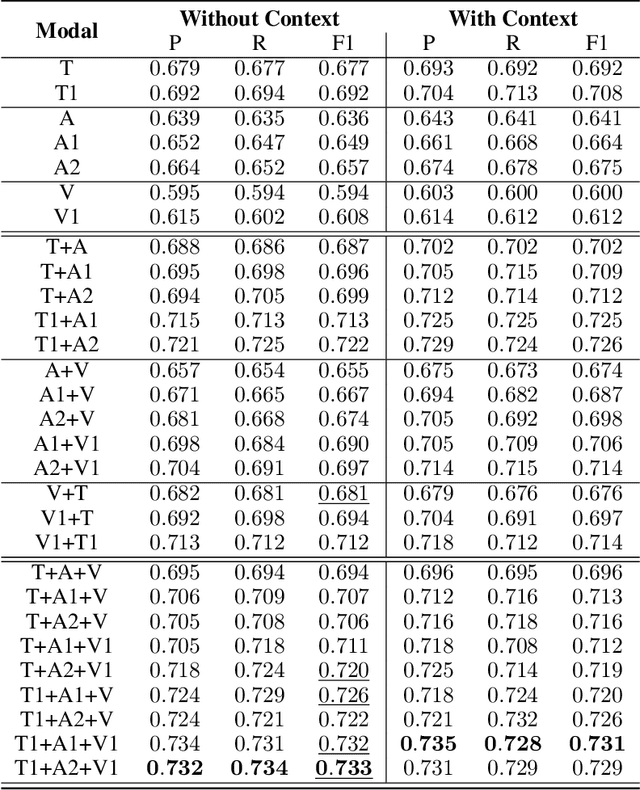

The introduction of the MUStARD dataset, and its emotion recognition extension MUStARD++, established sarcasm as a multi-modal phenomenon: it is expressed not only in natural language text, but also through manner of speech (such as tonality and intonation) and visual cues (facial expressions). In this work, we perform a rigorous benchmarking of the MUStARD++ dataset using state-of-the-art language, speech, and visual encoders to fully exploit its multi-modal richness, achieving a 2% improvement in macro-F1 over the existing benchmark. Additionally, to address the imbalance in the 'sarcasm type' category of MUStARD++, we propose an extension, which we call *MUStARD++ Balanced*, and benchmark it with instances from the extension split across both the train and test sets, achieving a further 2.4% macro-F1 boost. The new clips were taken from a novel source, the TV show House MD, which adds to the diversity of the dataset, and were manually annotated by multiple annotators with substantial inter-annotator agreement in terms of Cohen's kappa and Krippendorff's alpha. Our code, extended data, and SOTA benchmark models are publicly available.
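
Cohen's kappa, one of the agreement measures cited above, corrects the raw agreement between two annotators for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the chance agreement implied by each annotator's label frequencies. A minimal sketch for two annotators follows; the function name and the label values in the example are hypothetical and not drawn from MUStARD++.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two annotators labelling the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(a)
    # Observed agreement: fraction of items both annotators labelled identically.
    p_o = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement: product of the two annotators' marginal label frequencies.
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if p_e == 1.0:
        # Degenerate case (both annotators always use one and the same label);
        # returning 1.0 is a convention assumed here.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


ann_1 = ["sarcastic", "sarcastic", "non-sarcastic", "non-sarcastic"]
ann_2 = ["sarcastic", "non-sarcastic", "non-sarcastic", "non-sarcastic"]
print(cohens_kappa(ann_1, ann_2))  # p_o = 0.75, p_e = 0.5 -> 0.5
```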

Leveraging Foundation models for Unsupervised Audio-Visual Segmentation

Sep 13, 2023 · Swapnil Bhosale, Haosen Yang, Diptesh Kanojia, Xiatian Zhu

Audio-Visual Segmentation (AVS) aims to precisely outline audible objects in a visual scene at the pixel level. Existing AVS methods require fine-grained annotations of audio-mask pairs for supervised learning. This limits their scalability, since acquiring such cross-modality pixel-level labels is time-consuming and tedious. To overcome this obstacle, in this work we introduce unsupervised audio-visual segmentation, which requires neither task-specific data annotation nor model training. To tackle this newly proposed problem, we formulate a novel Cross-Modality Semantic Filtering (CMSF) approach that accurately associates the underlying audio-mask pairs by leveraging off-the-shelf multi-modal foundation models (e.g., detection [1], open-world segmentation [2], and multi-modal alignment [3]). Guiding proposal generation by either audio or visual cues, we design two training-free variants: AT-GDINO-SAM and OWOD-BIND. Extensive experiments on the AVS-Bench dataset show that our unsupervised approach performs well compared with prior supervised counterparts across complex scenarios with multiple auditory objects. In particular, in situations where existing supervised AVS methods struggle with overlapping foreground objects, our models still accurately segment the overlapped auditory objects. Our code will be publicly released.
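
The general idea of associating audio with mask proposals through a shared multi-modal embedding space can be sketched as a similarity filter. This sketch is hypothetical: it assumes each mask proposal and the audio clip have already been embedded into a common space (as a multi-modal alignment model would provide), and the function names, threshold, and toy vectors are illustrative assumptions rather than the authors' CMSF implementation.

```python
import math
from typing import List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def filter_proposals(
    audio_emb: Sequence[float],
    proposal_embs: List[Sequence[float]],
    threshold: float,
) -> List[Tuple[int, float]]:
    """Keep (index, similarity) of proposals aligned with the audio, best first.

    Hypothetical filtering rule: a proposal is kept when the cosine similarity
    between its embedding and the audio embedding reaches `threshold`.
    """
    scored = [(i, cosine(audio_emb, emb)) for i, emb in enumerate(proposal_embs)]
    kept = [(i, s) for i, s in scored if s >= threshold]
    return sorted(kept, key=lambda item: item[1], reverse=True)


audio = [1.0, 0.0]
proposals = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(filter_proposals(audio, proposals, threshold=0.5))  # keeps proposals 0 and 2, best first
```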

DiffSED: Sound Event Detection with Denoising Diffusion

Aug 16, 2023 · Swapnil Bhosale, Sauradip Nag, Diptesh Kanojia, Jiankang Deng, Xiatian Zhu

Sound Event Detection (SED) aims to predict the temporal boundaries and class labels of all events of interest in an unconstrained audio sample. Whether they take the split-and-classify (i.e., frame-level) strategy or the more principled event-level modeling approach, all existing methods treat SED as a discriminative learning problem. In this work, we reformulate SED from a generative learning perspective. Specifically, we generate sound event temporal boundaries from noisy proposals through a denoising diffusion process, conditioned on a target audio sample. During training, our model learns to reverse the noising process by converting noisy latent queries into their ground-truth versions within a Transformer decoder framework. This enables the model to generate accurate event boundaries even from noisy queries during inference. Extensive experiments on the Urban-SED and EPIC-Sounds datasets demonstrate that our model significantly outperforms existing alternatives, with more than 40% faster convergence in training.
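
To make the denoising-diffusion formulation concrete, the sketch below applies the standard DDPM forward (noising) process, x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps with eps ~ N(0, 1), to normalized (onset, offset) pairs; a denoiser would then be trained to recover x_0 from x_t. This is an illustration under assumptions: the abstract describes noising latent queries inside a Transformer decoder, so the noised quantity, schedule, and scaling used in DiffSED may differ, and the linear beta schedule and function names here are hypothetical.

```python
import math
import random
from typing import List, Tuple

# Hypothetical representation: (onset, offset), normalized by clip duration.
Boundary = Tuple[float, float]


def linear_alpha_bars(num_steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Cumulative products alpha_bar_t of a linear beta schedule (DDPM-style)."""
    alpha_bars, prod = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / max(num_steps - 1, 1)
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return alpha_bars


def noise_boundaries(
    x0: List[Boundary], t: int, alpha_bars: List[float], rng: random.Random
) -> List[Boundary]:
    """Sample x_t ~ q(x_t | x_0) independently for every onset and offset."""
    signal, noise = math.sqrt(alpha_bars[t]), math.sqrt(1.0 - alpha_bars[t])
    return [
        (signal * onset + noise * rng.gauss(0.0, 1.0),
         signal * offset + noise * rng.gauss(0.0, 1.0))
        for onset, offset in x0
    ]


alpha_bars = linear_alpha_bars(1000)
rng = random.Random(0)
gt_events = [(0.10, 0.35), (0.60, 0.90)]
print(noise_boundaries(gt_events, t=10, alpha_bars=alpha_bars, rng=rng))   # close to gt_events
print(noise_boundaries(gt_events, t=999, alpha_bars=alpha_bars, rng=rng))  # almost pure noise
```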

Text-to-Audio Grounding Based Novel Metric for Evaluating Audio Caption Similarity

Oct 03, 2022 · Swapnil Bhosale, Rupayan Chakraborty, Sunil Kumar Kopparapu

Automatic Audio Captioning (AAC) refers to the task of translating an audio sample into natural language (NL) text that describes the audio events, the sources of the events, and their relationships. Unlike NL text generation tasks, which are evaluated with metrics such as BLEU, ROUGE, and METEOR that rely on lexical semantics, AAC evaluation additionally requires the ability to match NL text (phrases) that correspond to similar sounds. Current metrics used to evaluate AAC tasks lack an understanding of the perceived properties of sound represented by text. In this paper, we propose a novel metric based on Text-to-Audio Grounding (TAG), which is useful for evaluating cross-modal tasks such as AAC. Experiments on a publicly available AAC dataset show that our evaluation metric performs better than existing metrics used in the NL text and image captioning literature.

Automatic Audio Captioning using Attention weighted Event based Embeddings

Jan 28, 2022 · Swapnil Bhosale, Rupayan Chakraborty, Sunil Kumar Kopparapu

Automatic Audio Captioning (AAC) refers to the task of translating audio into natural language text that describes the audio events, the sources of the events, and their relationships. The limited number of samples in current AAC datasets has led to a trend of incorporating transfer learning with Audio Event Detection (AED) as a parent task. In this direction, we propose an encoder-decoder architecture with lightweight (i.e., fewer learnable parameters) Bi-LSTM recurrent layers for AAC, and compare the performance of two state-of-the-art pre-trained AED models as embedding extractors. Our results show that an efficient AED-based embedding extractor, combined with temporal attention and augmentation techniques, is able to surpass prior work that uses computationally intensive architectures. Further, we provide evidence that the non-uniform attention-weighted encoding generated as part of our model helps the decoder focus on specific sections of the audio while generating each token.
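
The role of temporal attention described above, letting the decoder weight different parts of the audio when generating each token, can be illustrated with attention pooling over frame embeddings. This is a minimal sketch under assumptions: the abstract does not specify the attention form, so the plain dot-product scoring, the `query` vector standing in for the decoder state, and the function names are all hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(
    frames: List[Sequence[float]], query: Sequence[float]
) -> Tuple[List[float], List[float]]:
    """Weight each frame embedding by softmax(query . frame).

    Returns (context vector, attention weights); the weights are non-uniform
    whenever the query aligns better with some frames than with others.
    """
    scores = [sum(q * f for q, f in zip(query, frame)) for frame in frames]
    weights = softmax(scores)
    dim = len(frames[0])
    context = [sum(w * frame[d] for w, frame in zip(weights, frames)) for d in range(dim)]
    return context, weights


frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy per-frame audio embeddings
context, weights = attention_pool(frames, query=[2.0, 0.0])
print(weights)  # frames 0 and 2 share the largest weight; frame 1 gets little
```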

Automatic Speaker Independent Dysarthric Speech Intelligibility Assessment System

Mar 10, 2021 · Ayush Tripathi, Swapnil Bhosale, Sunil Kumar Kopparapu

Dysarthria is a condition that hampers an individual's ability to control the muscles that play a major role in speech delivery. The loss of fine control over the muscles that move the lips, vocal cords, tongue, and diaphragm results in abnormal speech delivery. The severity of dysarthria can be assessed by analyzing the intelligibility of an individual's speech. Continuous intelligibility assessment not only helps speech language pathologists study the impact of medication but also allows them to plan personalized therapy. It helps clinicians immensely if the intelligibility assessment system is reliable, automatic, and simple for (a) patients to undergo and (b) clinicians to interpret. The lack of available dysarthric data has led to the development of speaker-dependent automatic intelligibility assessment systems, which require patients to speak a large number of utterances. In this paper, (a) we propose a cost minimization procedure to select an optimal (small) number of utterances that need to be spoken by the dysarthric patient, (b) we propose four different speaker-independent intelligibility assessment systems that require the patient to speak only a small number of words, and (c) the resulting assessment score is close to the perceptual score that the Speech Language Pathologist (SLP) can relate to. Requiring only a small number of utterances from the patient, together with a score the SLP can relate to, benefits both the dysarthric patient and the clinician from a usability perspective.

Semi Supervised Learning For Few-shot Audio Classification By Episodic Triplet Mining

Feb 16, 2021 · Swapnil Bhosale, Rupayan Chakraborty, Sunil Kumar Kopparapu

Few-shot learning aims to generalize to unseen classes that appear during testing but are unavailable during training. Prototypical networks perform few-shot metric learning by constructing a class prototype as the mean vector of the embedded support points within a class. The performance of prototypical networks in extreme few-shot scenarios (such as one-shot) degrades drastically, mainly because variations within the clusters are disregarded when constructing prototypes. In this paper, we propose to replace the typical prototypical loss function with an Episodic Triplet Mining (ETM) technique. Conventional triplet selection leads to overfitting because all possible combinations are used during training. To overcome this overfitting, we incorporate episodic training for mining semi-hard positive and semi-hard negative triplets. We also propose an adaptation that makes use of unlabeled training samples for better modeling. Experiments on two different audio processing tasks, namely speaker recognition and audio event detection, show improved performance, and hence the efficacy of ETM over the prototypical loss function and other meta-learning frameworks. Further, we show improved performance when unlabeled training samples are used.
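
Two ingredients from this abstract, the prototype as the mean of embedded support points and the mining of semi-hard triplets, can be sketched as follows. The semi-hard negative rule used here is the common FaceNet-style condition d(a, p) < d(a, n) < d(a, p) + margin; whether ETM uses exactly this rule, and how it defines semi-hard positives, is an assumption, and all function names are hypothetical. In episodic training, such mining would be repeated within each sampled episode rather than over all combinations in the training set.

```python
import math
from typing import Dict, List, Optional, Sequence

Vector = Sequence[float]


def euclidean(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def prototypes(support: Dict[str, List[Vector]]) -> Dict[str, List[float]]:
    """Class prototype = mean vector of that class's embedded support points."""
    protos = {}
    for label, points in support.items():
        dim = len(points[0])
        protos[label] = [sum(p[d] for p in points) / len(points) for d in range(dim)]
    return protos


def semi_hard_negative(
    anchor: Vector, positive: Vector, negatives: List[Vector], margin: float
) -> Optional[Vector]:
    """Closest negative with d(a, p) < d(a, n) < d(a, p) + margin, or None."""
    d_ap = euclidean(anchor, positive)
    candidates = [n for n in negatives if d_ap < euclidean(anchor, n) < d_ap + margin]
    if not candidates:
        return None
    return min(candidates, key=lambda n: euclidean(anchor, n))


def triplet_loss(anchor: Vector, positive: Vector, negative: Vector, margin: float) -> float:
    """Standard hinge triplet loss: max(0, d(a, p) - d(a, n) + margin)."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


support = {"dog": [[0.0, 0.0], [2.0, 2.0]], "siren": [[5.0, 5.0]]}
print(prototypes(support))  # {'dog': [1.0, 1.0], 'siren': [5.0, 5.0]}

anchor, positive = [0.0, 0.0], [1.0, 0.0]
negatives = [[0.5, 0.0], [1.5, 0.0], [3.0, 0.0]]  # hard, semi-hard, easy
neg = semi_hard_negative(anchor, positive, negatives, margin=1.0)
print(neg, triplet_loss(anchor, positive, neg, margin=1.0))  # [1.5, 0.0] 0.5
```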
