Thermodynamic-RAM Technology Stack

Mar 29, 2017M. Alexander Nugent, Timothy W. Molter

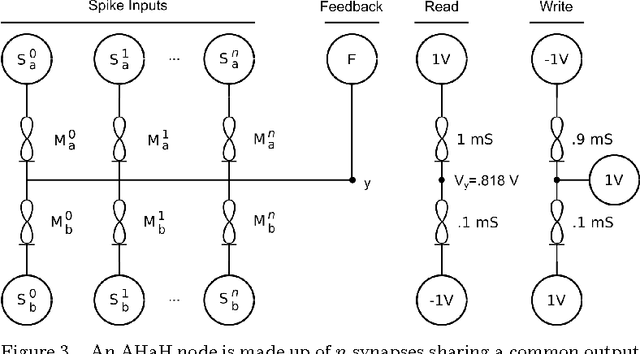

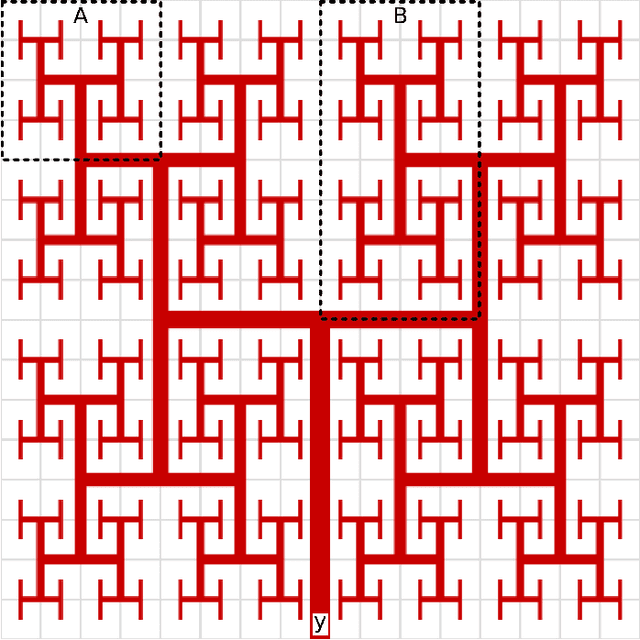

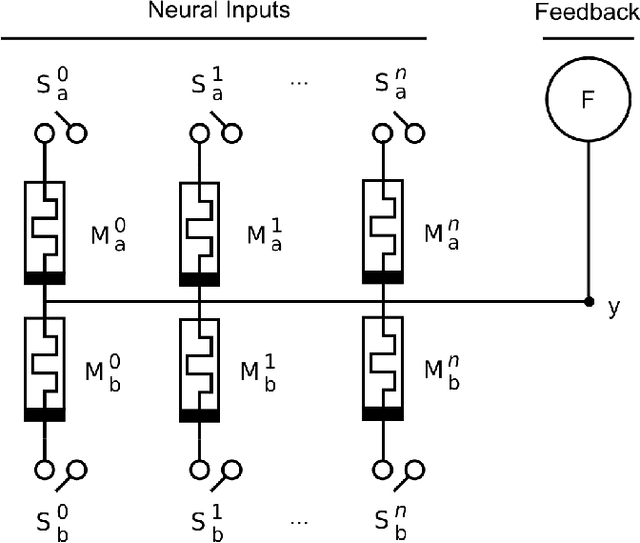

We introduce a technology stack or specification describing the multiple levels of abstraction and specialization needed to implement a neuromorphic processor (NPU) based on the previously-described concept of AHaH Computing and integrate it into today's digital computing systems. The general purpose NPU implementation described here is called Thermodynamic-RAM (kT-RAM) and is just one of many possible architectures, each with varying advantages and trade offs. Bringing us closer to brain-like neural computation, kT-RAM will provide a general-purpose adaptive hardware resource to existing computing platforms enabling fast and low-power machine learning capabilities that are currently hampered by the separation of memory and processing, a.k.a the von Neumann bottleneck. Because understanding such a processor based on non-traditional principles can be difficult, by presenting the various levels of the stack from the bottom up, layer by layer, explaining kT-RAM becomes a much easier task. The levels of the Thermodynamic-RAM technology stack include the memristor, synapse, AHaH node, kT-RAM, instruction set, sparse spike encoding, kT-RAM emulator, and SENSE server.

Machine Learning with Memristors via Thermodynamic RAM

Aug 14, 2016Timothy W. Molter, M. Alexander Nugent

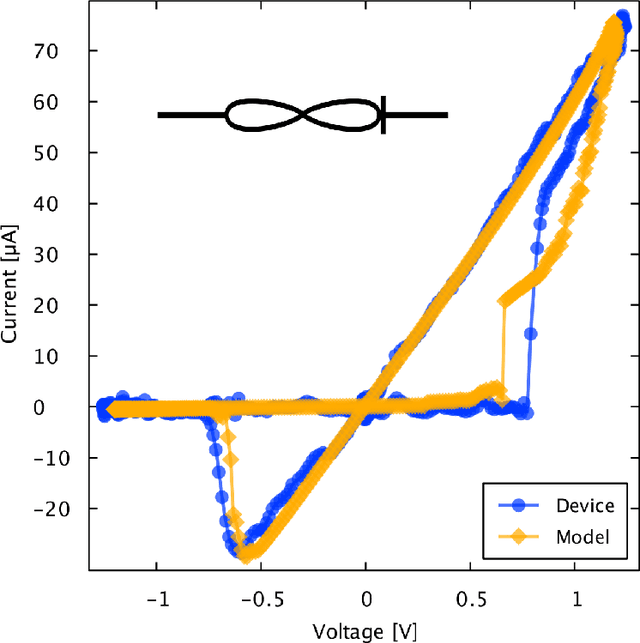

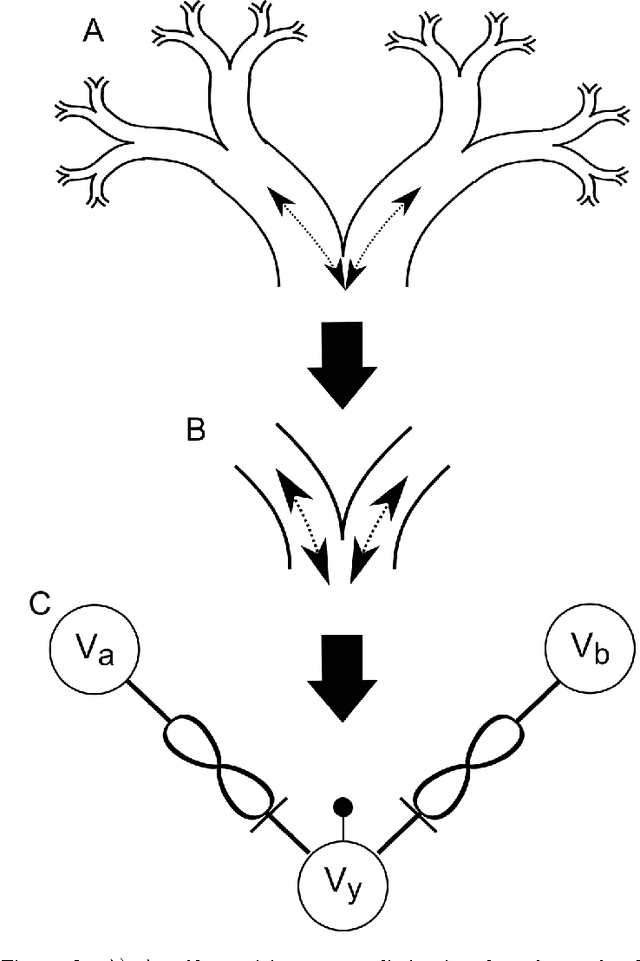

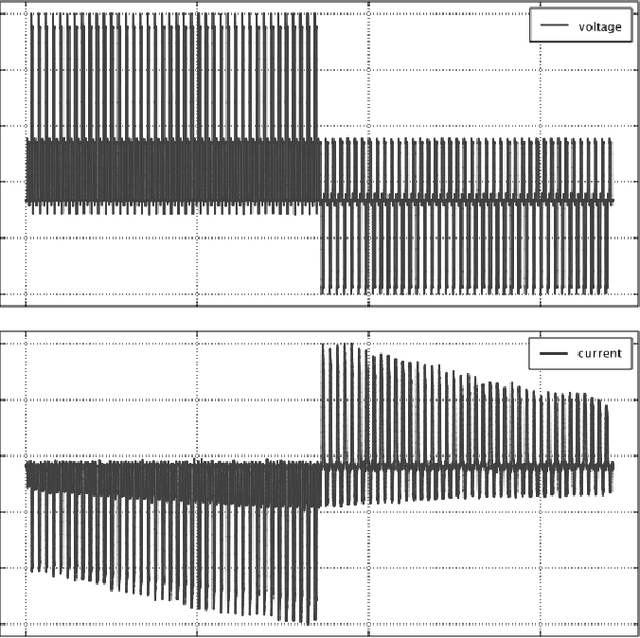

Thermodynamic RAM (kT-RAM) is a neuromemristive co-processor design based on the theory of AHaH Computing and implemented via CMOS and memristors. The co-processor is a 2-D array of differential memristor pairs (synapses) that can be selectively coupled together (neurons) via the digital bit addressing of the underlying CMOS RAM circuitry. The chip is designed to plug into existing digital computers and be interacted with via a simple instruction set. Anti-Hebbian and Hebbian (AHaH) computing forms the theoretical framework from which a nature-inspired type of computing architecture is built where, unlike von Neumann architectures, memory and processor are physically combined for synaptic operations. Through exploitation of AHaH attractor states, memristor-based circuits converge to attractor basins that represents machine learning solutions such as unsupervised feature learning, supervised classification and anomaly detection. Because kT-RAM eliminates the need to shuttle bits back and forth between memory and processor and can operate at very low voltage levels, it can significantly surpass CPU, GPU, and FPGA performance for synaptic integration and learning operations. Here, we present a memristor technology developed for use in kT-RAM, in particular bi-directional incremental adaptation of conductance via short low-voltage 1.0 V, 1.0 microsecond pulses.

Cortical Processing with Thermodynamic-RAM

Aug 14, 2014M. Alexander Nugent, Timothy W. Molter

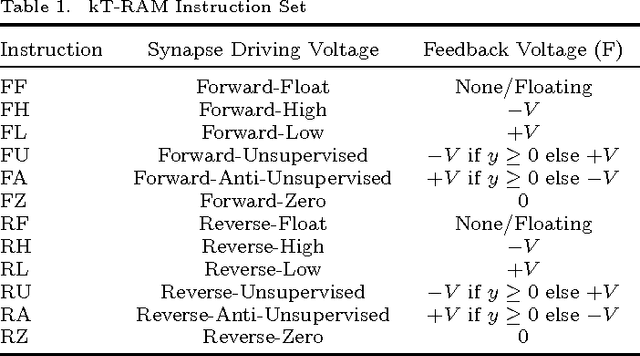

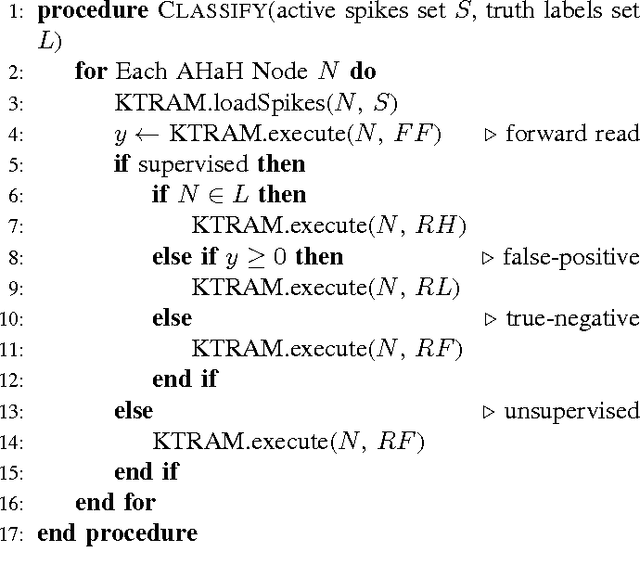

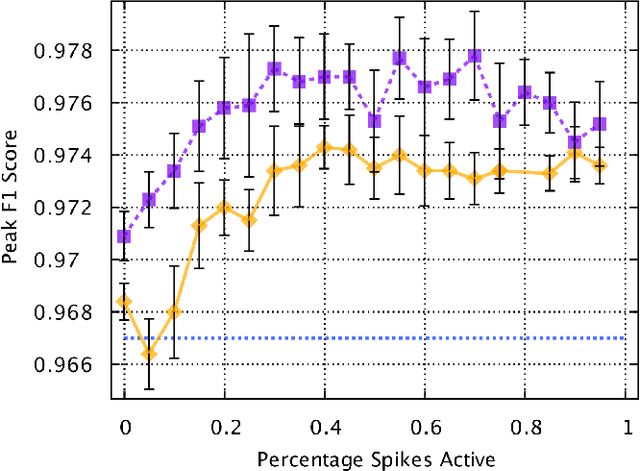

AHaH computing forms a theoretical framework from which a biologically-inspired type of computing architecture can be built where, unlike von Neumann systems, memory and processor are physically combined. In this paper we report on an incremental step beyond the theoretical framework of AHaH computing toward the development of a memristor-based physical neural processing unit (NPU), which we call Thermodynamic-RAM (kT-RAM). While the power consumption and speed dominance of such an NPU over von Neumann architectures for machine learning applications is well appreciated, Thermodynamic-RAM offers several advantages over other hardware approaches to adaptation and learning. Benefits include general-purpose use, a simple yet flexible instruction set and easy integration into existing digital platforms. We present a high level design of kT-RAM and a formal definition of its instruction set. We report the completion of a kT-RAM emulator and the successful port of all previous machine learning benchmark applications including unsupervised clustering, supervised and unsupervised classification, complex signal prediction, unsupervised robotic actuation and combinatorial optimization. Lastly, we extend a previous MNIST hand written digits benchmark application, to show that an extra step of reading the synaptic states of AHaH nodes during the train phase (healing) alone results in plasticity that improves the classifier's performance, bumping our best F1 score up to 99.5%.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge