Principles of Explanation in Human-AI Systems

Feb 09, 2021

Shane T. Mueller, Elizabeth S. Veinott, Robert R. Hoffman, Gary Klein, Lamia Alam, Tauseef Mamun, William J. Clancey

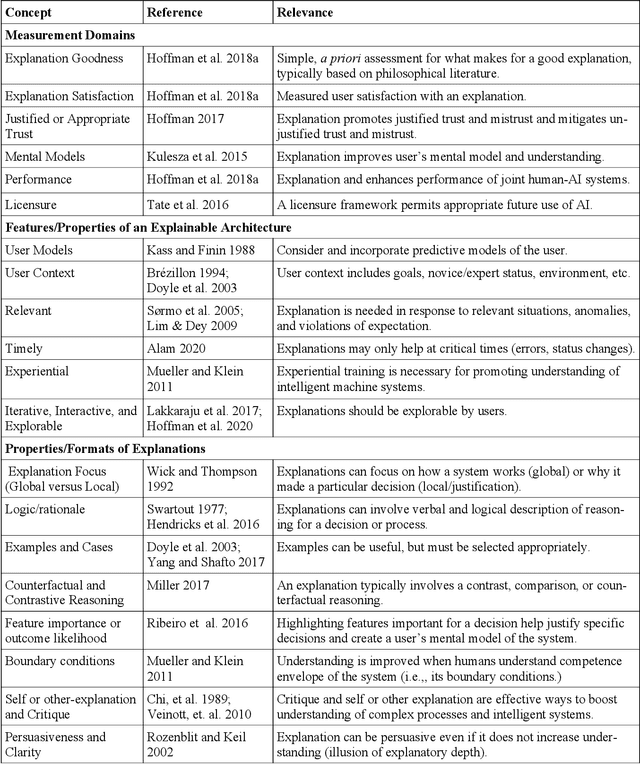

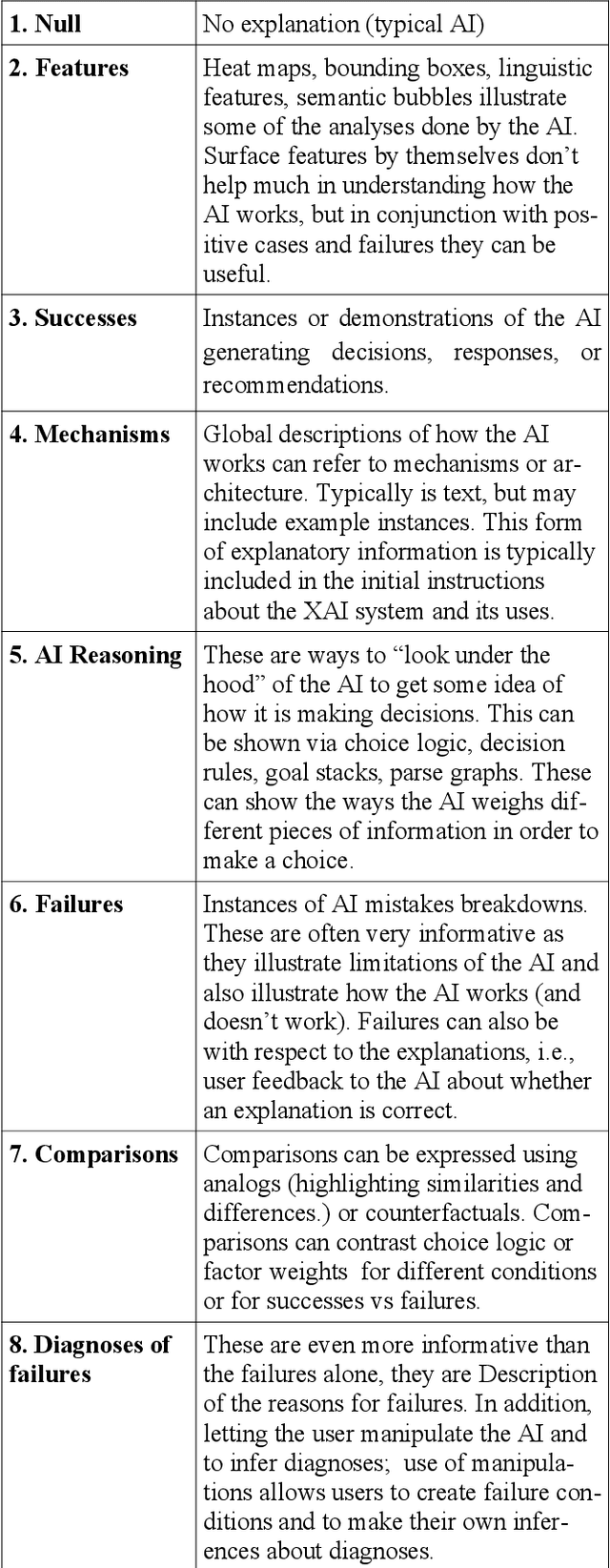

Explainable Artificial Intelligence (XAI) has re-emerged in response to the development of modern AI and ML systems. These systems are complex and sometimes biased, but they nevertheless make decisions that impact our lives. XAI systems are frequently algorithm-focused, starting and ending with an algorithm that implements a basic, untested idea about explainability. These systems are often not tested to determine whether the algorithm helps users accomplish any goals, and so their explainability remains unproven. We propose an alternative: to start with human-focused principles for the design, testing, and implementation of XAI systems, and to implement algorithms that serve that purpose. In this paper, we review some of the basic concepts that have been used for user-centered XAI systems over the past 40 years of research. Based on these, we describe the "Self-Explanation Scorecard", which can help developers understand how they can empower users by enabling self-explanation. Finally, we present a set of empirically grounded, user-centered design principles that may guide developers to create successful explainable systems.

Explaining AI as an Exploratory Process: The Peircean Abduction Model

Oct 01, 2020

Robert R. Hoffman, William J. Clancey, Shane T. Mueller

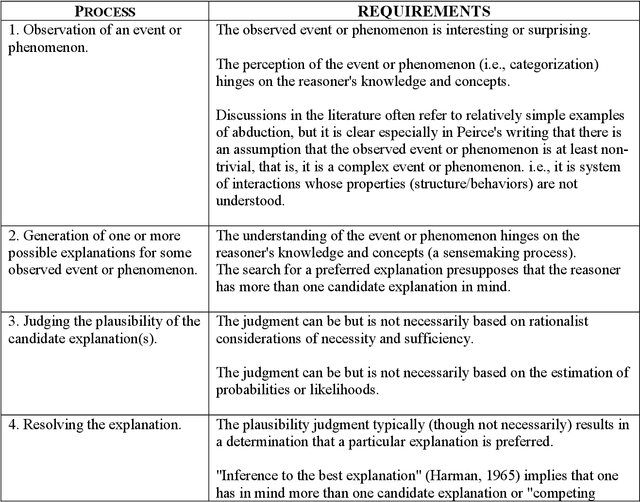

Current discussions of "Explainable AI" (XAI) give little consideration to the role of abduction in explanatory reasoning (see Mueller et al., 2018). It might be worthwhile to pursue this, in order to develop intelligent systems that allow for the observation and analysis of abductive reasoning and the assessment of abductive reasoning as a learnable skill. Abductive inference has been defined in many ways. For example, it has been defined as the achievement of insight. Most often, abduction is taken as a single, punctuated act of syllogistic reasoning, like making a deductive or inductive inference from given premises. In contrast, the originator of the concept of abduction, the American scientist/philosopher Charles Sanders Peirce, regarded abduction as an exploratory activity. In this regard, Peirce's insights about reasoning align with conclusions from modern psychological research. Since abduction is often defined as "inferring the best explanation," the challenge of implementing abductive reasoning and the challenge of automating the explanation process are closely linked. We explore these linkages in this report. This analysis provides a theoretical framework for understanding what XAI researchers are already doing, explains why some XAI projects are succeeding (or might succeed), and leads to design advice.
