Annotation-Efficient Polyp Segmentation via Active Learning

Mar 21, 2024Duojun Huang, Xinyu Xiong, De-Jun Fan, Feng Gao, Xiao-Jian Wu, Guanbin Li

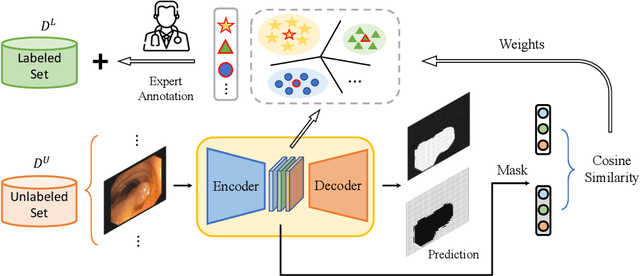

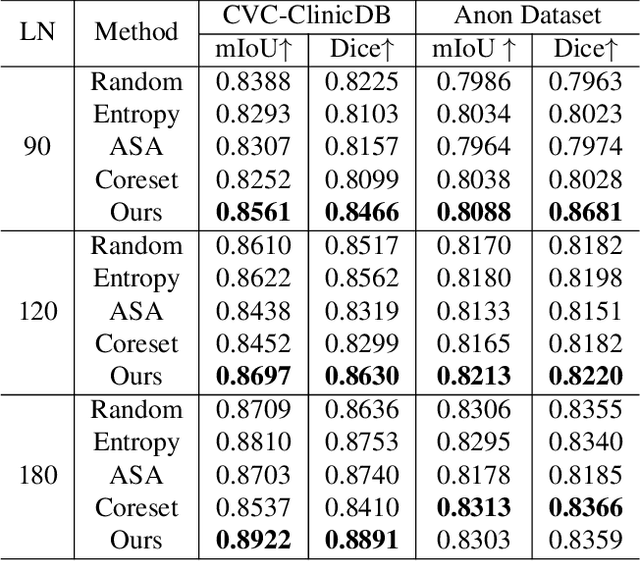

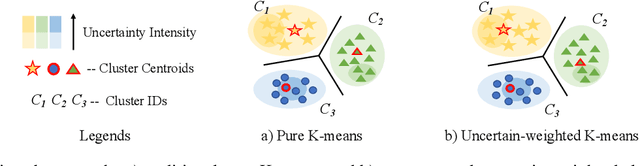

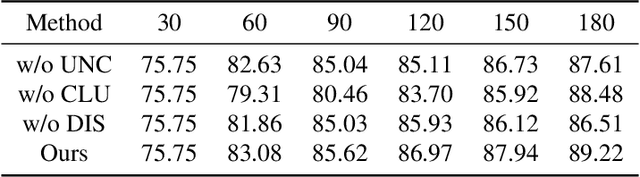

Deep learning-based techniques have proven effective in polyp segmentation tasks when provided with sufficient pixel-wise labeled data. However, the high cost of manual annotation has created a bottleneck for model generalization. To minimize annotation costs, we propose a deep active learning framework for annotation-efficient polyp segmentation. In practice, we measure the uncertainty of each sample by examining the similarity between features masked by the prediction map of the polyp and the background area. Since the segmentation model tends to perform weak in samples with indistinguishable features of foreground and background areas, uncertainty sampling facilitates the fitting of under-learning data. Furthermore, clustering image-level features weighted by uncertainty identify samples that are both uncertain and representative. To enhance the selectivity of the active selection strategy, we propose a novel unsupervised feature discrepancy learning mechanism. The selection strategy and feature optimization work in tandem to achieve optimal performance with a limited annotation budget. Extensive experimental results have demonstrated that our proposed method achieved state-of-the-art performance compared to other competitors on both a public dataset and a large-scale in-house dataset.

Semi- and Weakly-Supervised Learning for Mammogram Mass Segmentation with Limited Annotations

Mar 14, 2024Xinyu Xiong, Churan Wang, Wenxue Li, Guanbin Li

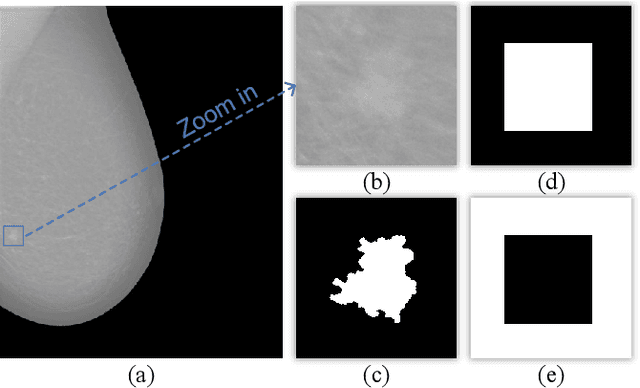

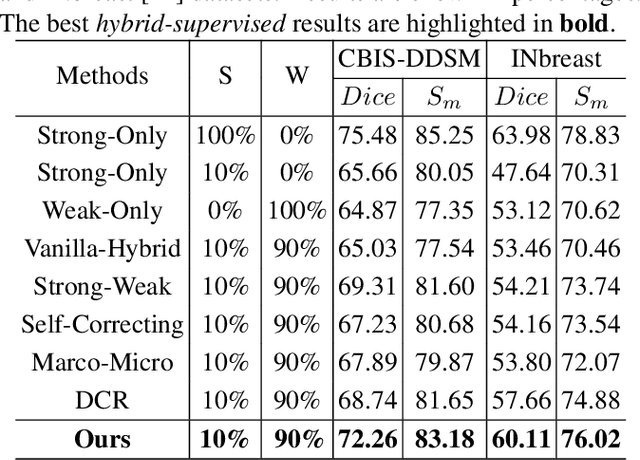

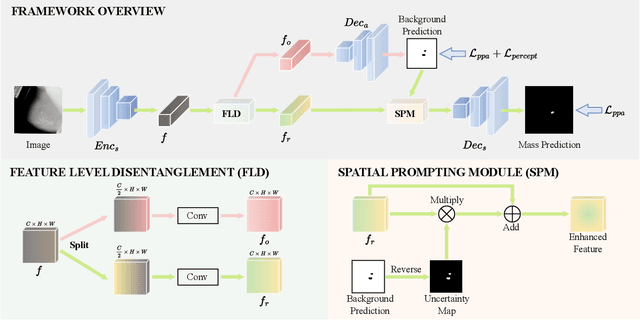

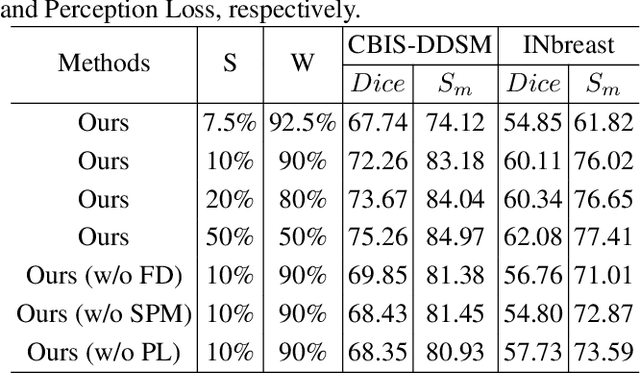

Accurate identification of breast masses is crucial in diagnosing breast cancer; however, it can be challenging due to their small size and being camouflaged in surrounding normal glands. Worse still, it is also expensive in clinical practice to obtain adequate pixel-wise annotations for training deep neural networks. To overcome these two difficulties with one stone, we propose a semi- and weakly-supervised learning framework for mass segmentation that utilizes limited strongly-labeled samples and sufficient weakly-labeled samples to achieve satisfactory performance. The framework consists of an auxiliary branch to exclude lesion-irrelevant background areas, a segmentation branch for final prediction, and a spatial prompting module to integrate the complementary information of the two branches. We further disentangle encoded obscure features into lesion-related and others to boost performance. Experiments on CBIS-DDSM and INbreast datasets demonstrate the effectiveness of our method.

Long-term Wind Power Forecasting with Hierarchical Spatial-Temporal Transformer

May 30, 2023Yang Zhang, Lingbo Liu, Xinyu Xiong, Guanbin Li, Guoli Wang, Liang Lin

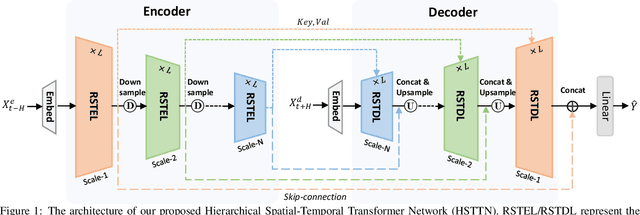

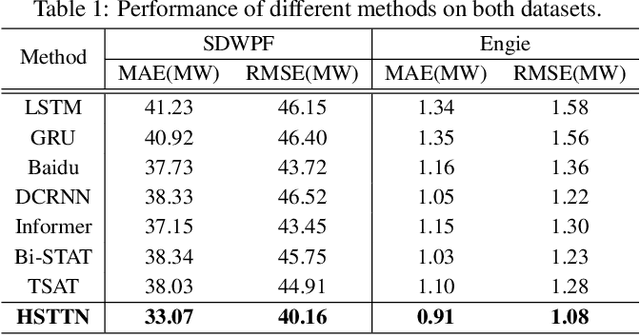

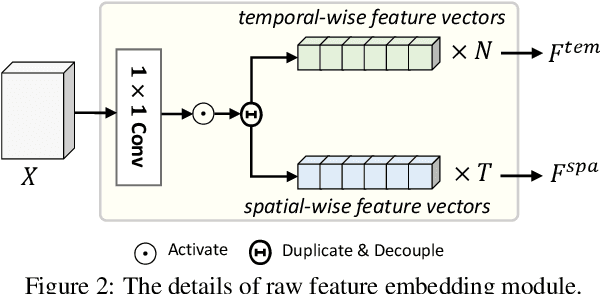

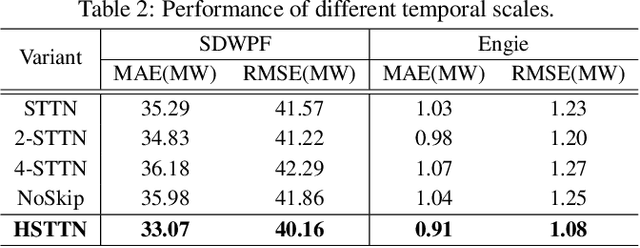

Wind power is attracting increasing attention around the world due to its renewable, pollution-free, and other advantages. However, safely and stably integrating the high permeability intermittent power energy into electric power systems remains challenging. Accurate wind power forecasting (WPF) can effectively reduce power fluctuations in power system operations. Existing methods are mainly designed for short-term predictions and lack effective spatial-temporal feature augmentation. In this work, we propose a novel end-to-end wind power forecasting model named Hierarchical Spatial-Temporal Transformer Network (HSTTN) to address the long-term WPF problems. Specifically, we construct an hourglass-shaped encoder-decoder framework with skip-connections to jointly model representations aggregated in hierarchical temporal scales, which benefits long-term forecasting. Based on this framework, we capture the inter-scale long-range temporal dependencies and global spatial correlations with two parallel Transformer skeletons and strengthen the intra-scale connections with downsampling and upsampling operations. Moreover, the complementary information from spatial and temporal features is fused and propagated in each other via Contextual Fusion Blocks (CFBs) to promote the prediction further. Extensive experimental results on two large-scale real-world datasets demonstrate the superior performance of our HSTTN over existing solutions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge