Sensing-Resistance-Oriented Beamforming for Privacy Protection from ISAC Devices

Apr 08, 2024 · Teng Ma, Yue Xiao, Xia Lei, Ming Xiao

With the evolution of integrated sensing and communication (ISAC) technology, a growing number of devices go beyond conventional communication functions and gain sensing abilities. Future networks are therefore expected to encounter new privacy concerns related to sensing, such as the exposure of position information to unintended receivers. In contrast to traditional privacy-preserving schemes that aim to prevent eavesdropping, this contribution conceives a novel beamforming design oriented toward sensing resistance (SR). Specifically, we aim to guarantee the communication quality while masking the real direction of the SR transmitter during communication. To evaluate the SR performance, a metric termed the angular-domain peak-to-average ratio (ADPAR) is first defined and analyzed. Then, we resort to the null-space technique to conceal the real direction, thereby converting the optimization problem into a more tractable form. Moreover, semidefinite relaxation together with index optimization is used to obtain the optimal beamformer. Finally, simulation results demonstrate the feasibility of the proposed SR-oriented beamforming design for privacy protection from ISAC receivers.
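As a rough illustration of the two building blocks named in this abstract, the sketch below projects a beamforming weight vector onto the null space of one steering vector and then computes a peak-to-average ratio of the resulting beampattern over an angle grid. The abstract does not define ADPAR or state which subspace is nulled, so the uniform linear array, the half-wavelength spacing, the angle grid, and every function name here are assumptions made for illustration only, not the authors' formulation.

```python
import cmath
import math

def steering(n_ant, theta):
    """Steering vector of a half-wavelength-spaced uniform linear array (assumed geometry)."""
    return [cmath.exp(1j * math.pi * k * math.sin(theta)) for k in range(n_ant)]

def inner(a, b):
    """Hermitian inner product a^H b."""
    return sum(x.conjugate() * y for x, y in zip(a, b))

def project_to_null_space(w, a):
    """Remove from w its component along a, so that a^H w_out = 0."""
    coeff = inner(a, w) / inner(a, a)
    return [wi - coeff * ai for wi, ai in zip(w, a)]

def angular_par(w, n_grid=181):
    """Peak-to-average ratio of |a(theta)^H w|^2 over a uniform grid on [-90°, 90°].

    This is a generic peak-to-average ratio, not necessarily the paper's ADPAR.
    """
    n_ant = len(w)
    thetas = [-math.pi / 2 + math.pi * i / (n_grid - 1) for i in range(n_grid)]
    power = [abs(inner(steering(n_ant, t), w)) ** 2 for t in thetas]
    return max(power) / (sum(power) / len(power))

n_ant = 8
theta_hidden = math.radians(20)              # hypothetical direction to conceal
w0 = steering(n_ant, math.radians(-30))      # conventional beam toward -30°
w = project_to_null_space(w0, steering(n_ant, theta_hidden))

# Power radiated toward the concealed direction after projection (numerically zero).
leak = abs(inner(steering(n_ant, theta_hidden), w)) ** 2
par_before = angular_par(w0)
par_after = angular_par(w)
```

A lower peak-to-average ratio means a flatter angular spectrum, which is one plausible reason such a metric could indicate how hard a direction is to estimate. The optimization in the paper (semidefinite relaxation with index optimization) is not reproduced here.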

Deep Reinforcement Learning Empowered Activity-Aware Dynamic Health Monitoring Systems

Jan 19, 2024 · Ziqiang Ye, Yulan Gao, Yue Xiao, Zehui Xiong, Dusit Niyato

In smart healthcare, health monitoring utilizes diverse tools and technologies to analyze patients' real-time biosignal data, enabling immediate actions and interventions. Existing monitoring approaches were designed on the premise that medical devices track several health metrics concurrently, tailored to their designated functional scope. This means that they report all relevant health values within that scope, which can result in excess resource use and the gathering of extraneous data due to monitoring irrelevant health metrics. In this context, we propose the Dynamic Activity-Aware Health Monitoring strategy (DActAHM), a novel framework based on Deep Reinforcement Learning (DRL) and the SlowFast model that strikes a balance between monitoring performance and cost efficiency by tailoring monitoring to users' activities. Specifically, with the SlowFast model, DActAHM efficiently identifies individual activities and captures these results for enhanced processing. Subsequently, DActAHM refines health metric monitoring in response to the identified activity by incorporating a DRL framework. Extensive experiments comparing DActAHM against three state-of-the-art approaches demonstrate that it achieves a 27.3% higher gain than the best-performing baseline, which fixes monitoring actions over time.

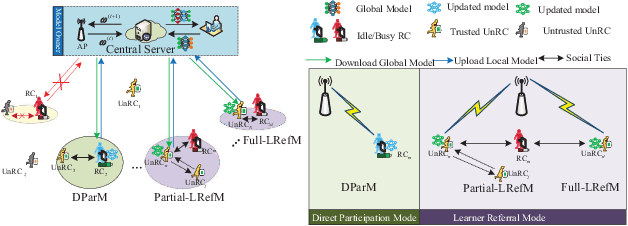

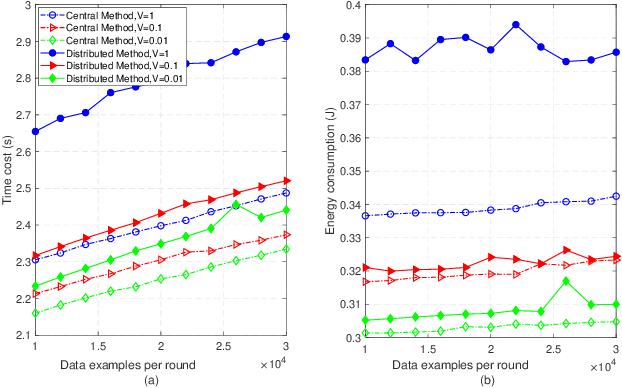

Learner Referral for Cost-Effective Federated Learning Over Hierarchical IoT Networks

Jul 19, 2023 · Yulan Gao, Ziqiang Ye, Yue Xiao, Wei Xiang

Federated learning (FL), which addresses data privacy concerns by training parameters locally on resource-constrained clients in a distributed manner, has garnered significant attention. Nonetheless, FL is not applicable when not all clients within the coverage of the FL server are registered with the FL network. To bridge this gap, this paper proposes joint learner referral aided federated client selection (LRef-FedCS), along with communications and computing resource scheduling, and local model accuracy optimization (LMAO) methods. These methods are designed to minimize the cost incurred by the worst-case participant and ensure the long-term fairness of FL in hierarchical Internet of Things (HieIoT) networks. Utilizing the Lyapunov optimization technique, we reformulate the original problem into a stepwise joint optimization problem (JOP). Subsequently, to tackle the mixed-integer non-convex JOP, we address LRef-FedCS and LMAO separately and iteratively, through a centralized method and the self-adaptive global best harmony search (SGHS) algorithm, respectively. To enhance scalability, we further propose a distributed LRef-FedCS approach based on a matching game to replace the centralized method. Numerical simulations and experimental results on the MNIST/CIFAR-10 datasets demonstrate that the proposed LRef-FedCS approach achieves a good balance between pursuing high global accuracy and reducing cost.

Zero-Energy Reconfigurable Intelligent Surfaces (zeRIS)

May 12, 2023 · Dimitrios Tyrovolas, Sotiris A. Tegos, Vasilis K. Papanikolaou, Yue Xiao, Prodromos-Vasileios Mekikis, Panagiotis D. Diamantoulakis, Sotiris Ioannidis, Christos K. Liaskos, George K. Karagiannidis

A primary objective of the forthcoming sixth generation (6G) of wireless networking is to support demanding applications, while ensuring energy efficiency. Programmable wireless environments (PWEs) have emerged as a promising solution, leveraging reconfigurable intelligent surfaces (RISs), to control wireless propagation and deliver exceptional quality-of-service. In this paper, we analyze the performance of a network supported by zero-energy RISs (zeRISs), which harvest energy for their operation and contribute to the realization of PWEs. Specifically, we investigate joint energy-data rate outage probability and the energy efficiency of a zeRIS-assisted communication system by employing three harvest-and-reflect (HaR) methods, i) power splitting, ii) time switching, and iii) element splitting. Furthermore, we consider two zeRIS deployment strategies, namely BS-side zeRIS and UE-side zeRIS. Simulation results validate the provided analysis and examine which HaR method performs better depending on the zeRIS placement. Finally, valuable insights and conclusions for the performance of zeRIS-assisted wireless networks are drawn from the presented results.

Design of Reconfigurable Intelligent Surface-Aided Cross-Media Communications

Nov 05, 2022 · Mingming Wu, Yue Xiao, Yulan Gao, Ming Xiao

A novel reconfigurable intelligent surface (RIS)-aided hybrid reflection/transmitter design is proposed for achieving information exchange in cross-media communications. In pursuit of a balance between energy efficiency and low-cost implementation, a cloud-management transmission protocol is adopted in the integrated multi-media system. Specifically, the messages of devices using heterogeneous propagation media are first transmitted to the medium-matched access point (AP) with the aid of RIS-based dual-hop transmission. After intermediate frequency conversion, the AP uploads the received signals to the cloud for further demodulation and decoding. Based on time division multiple access (TDMA), the cloud is able to distinguish the downlink data destined for different devices and transforms them into the input of the RIS controller via the dedicated control channel. Thereby, the RIS can passively reflect the incident carrier back to the original receiver with the exchanged information during the preallocated slots, following the idea of an index modulation-based transmitter. Moreover, an iterative optimization algorithm is used to jointly optimize the RIS phase, transmit rate, and time allocation in the delay-constrained cross-media communication model. Our simulation results demonstrate that the proposed RIS-based scheme achieves higher end-to-end throughput than the AP-based transmission, equal time allocation, random phase adjustment, and discrete phase adjustment benchmarks.

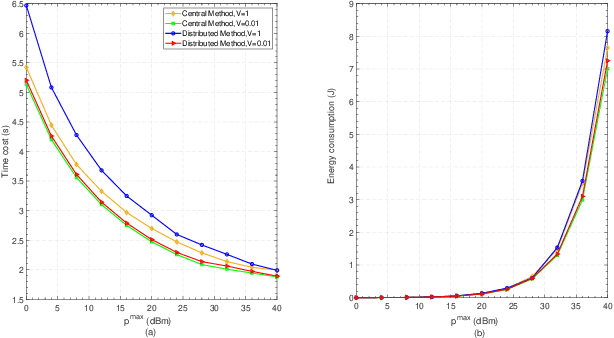

Multi-Resource Allocation for On-Device Distributed Federated Learning Systems

Nov 01, 2022 · Yulan Gao, Ziqiang Ye, Han Yu, Zehui Xiong, Yue Xiao, Dusit Niyato

This work proposes a distributed multi-resource allocation scheme for minimizing the weighted sum of latency and energy consumption in an on-device distributed federated learning (FL) system. Each mobile device in the system engages in the model training process within the specified area and allocates its computation and communication resources for deriving and uploading parameters, respectively, to minimize the system objective subject to the computation/communication budget and a target latency requirement. In particular, mobile devices are connected via wireless TCP/IP architectures. Exploiting the structure of the optimization problem, the problem can be decomposed into two convex sub-problems. Drawing on the Lagrangian dual and harmony search techniques, we characterize the globally optimal solution by the closed-form solutions to all sub-problems, which give qualitative insights into the multi-resource tradeoff. Numerical simulations are used to validate the analysis and assess the performance of the proposed algorithm.
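To make the idea of a weighted latency-energy objective with a closed-form minimizer concrete, the following sketch uses a cost model that is common in the mobile computing literature: computing latency `cycles / f` and computing energy `kappa * cycles * f**2` for a CPU frequency `f`. The abstract does not give the paper's system model, so this model, the parameter values, and the function names are assumptions. The sketch only illustrates why such a sub-problem can be convex and solvable in closed form.

```python
def weighted_cost(f, cycles, kappa, w):
    """Weighted sum of computing latency and energy at CPU frequency f (Hz)."""
    latency = cycles / f                 # seconds
    energy = kappa * cycles * f ** 2     # joules (assumed dynamic-power model)
    return w * latency + (1.0 - w) * energy

def best_frequency(kappa, w, f_max):
    """Closed-form minimizer of weighted_cost over 0 < f <= f_max, for 0 < w < 1.

    Setting the derivative -w*C/f^2 + 2*(1-w)*kappa*C*f to zero gives
    f^3 = w / (2*(1-w)*kappa); the cost is convex in f, so clipping
    the stationary point to f_max keeps it optimal.
    """
    f_star = (w / (2.0 * (1.0 - w) * kappa)) ** (1.0 / 3.0)
    return min(f_star, f_max)

# Hypothetical numbers chosen only to exercise the formula.
cycles = 2e9      # CPU cycles needed for one local training round
kappa = 1e-27     # effective switched-capacitance coefficient
w = 0.5           # weight on latency; (1 - w) is the weight on energy
f_max = 3e9       # maximum CPU frequency in Hz

f_opt = best_frequency(kappa, w, f_max)
cost_opt = weighted_cost(f_opt, cycles, kappa, w)
```

Note that the workload `cycles` cancels out of the stationary point, so in this simplified model the best frequency depends only on the weight and the hardware coefficient.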

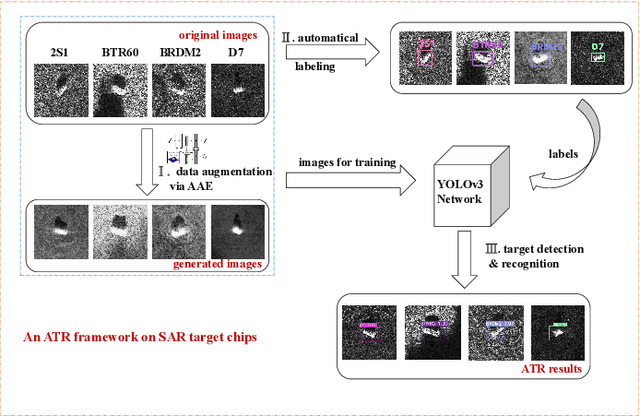

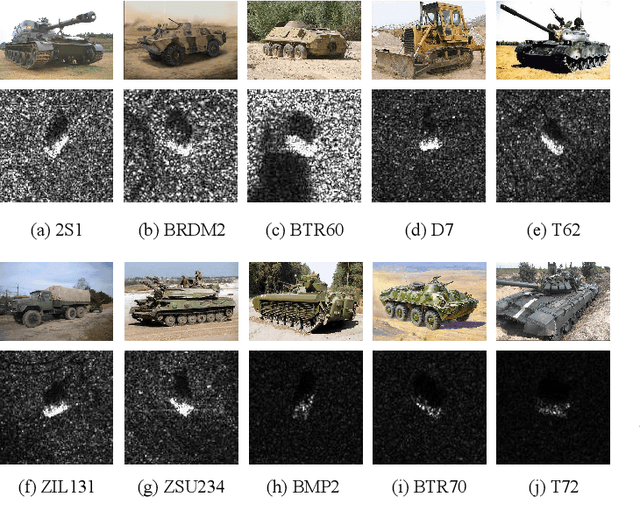

Learning Efficient Representations for Enhanced Object Detection on Large-scene SAR Images

Jan 22, 2022 · Siyan Li, Yue Xiao, Yuhang Zhang, Lei Chu, Robert C. Qiu

Detecting and recognizing targets in complex large-scene Synthetic Aperture Radar (SAR) images is a challenging problem. Recently developed deep learning algorithms can automatically learn the intrinsic features of SAR images, but still have much room for improvement on large-scene SAR images with limited data. In this paper, based on learning representations and multi-scale features of SAR images, we propose an efficient and robust deep-learning-based target detection method. Specifically, by leveraging an adversarial autoencoder (AAE), which explicitly influences the distribution of the investigated data, the raw SAR dataset is augmented into an enhanced version with greater quantity and diversity. In addition, an auto-labeling scheme is proposed to improve labeling efficiency. Finally, by jointly training on small target chips and large-scene images, an integrated YOLO network combined with non-maximum suppression on sub-images is used to realize multi-target detection in high-resolution images. Numerical experimental results on the MSTAR dataset show that our method can realize target detection and recognition on large-scene images accurately and efficiently. Its superior anti-noise performance is also confirmed by experiments.
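The non-maximum suppression step mentioned in this abstract can be sketched as the standard greedy procedure below. It assumes that detections from the sub-images have already been shifted into full-scene coordinates as `(x1, y1, x2, y2)` boxes. The threshold value and the function names are illustrative and not taken from the paper.

```python
def iou(a, b):
    """Intersection over union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the highest-scoring box, drop boxes overlapping a kept one.

    Returns the indices of the kept boxes, ordered by decreasing score.
    """
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep

# Two overlapping detections of one target plus a separate target.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)
```

Here the second box overlaps the first with an IoU of about 0.68, so it is suppressed and `kept` holds the indices of the first and third boxes.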
