Planning Paths through Occlusions in Urban Environments

Dec 29, 2022 · Yutao Han, Youya Xia, Guo-Jun Qi, Mark Campbell

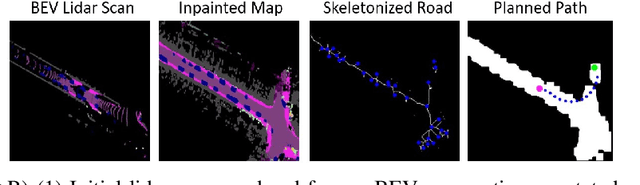

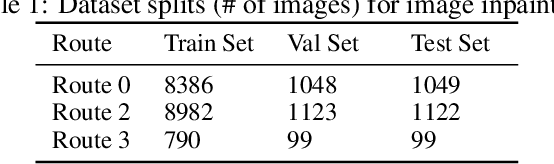

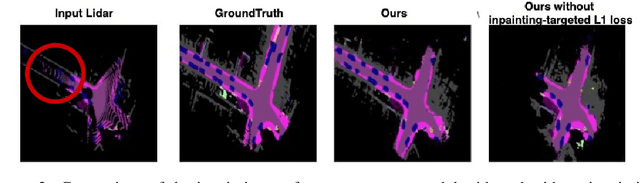

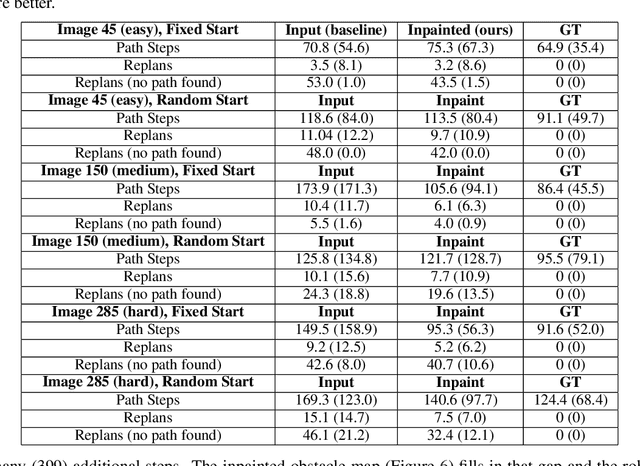

This paper presents a novel framework for planning in unknown and occluded urban spaces. We specifically focus on turns and intersections, where occlusions significantly impact navigability. Our approach uses an inpainting model to fill in a sparse, occluded, semantic lidar point cloud and plans dynamically feasible paths for a vehicle to traverse through the open and inpainted spaces. We demonstrate our approach using a car's lidar data with real-time occlusions, and show that inpainting occluded areas lets us plan longer paths with more turn options than planning without inpainting. In addition, our approach follows paths derived from a planner with no occlusions (called the ground truth) more closely than other state-of-the-art approaches.

Planning Paths Through Unknown Space by Imagining What Lies Therein

Nov 14, 2020 · Yutao Han, Jacopo Banfi, Mark Campbell

This paper presents a novel framework for planning paths in maps containing unknown spaces, such as those caused by occlusions. Our approach takes as input a semantically annotated point cloud and leverages an image inpainting neural network to generate a reasonable model of unknown space as free or occupied. Our validation campaign shows that it is possible to greatly increase the performance of standard pathfinding algorithms that adopt the general optimistic assumption of treating unknown space as free.
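To make the "optimistic assumption" concrete, the following is a minimal sketch and not the authors' implementation. It assumes a small 2D occupancy grid instead of a semantic point cloud, a plain breadth-first search as the pathfinder, and a hand-written `predicted` grid standing in for the output of an inpainting network. All names (`FREE`, `OCCUPIED`, `UNKNOWN`, `shortest_path_length`, `apply_prediction`) are hypothetical.

```python
from collections import deque

# Hypothetical cell labels for a toy occupancy grid.
FREE, OCCUPIED, UNKNOWN = 0, 1, 2


def shortest_path_length(grid, start, goal, unknown_as_free=True):
    """Breadth-first search on a 4-connected grid.

    Returns the number of steps from start to goal, or None if unreachable.
    With unknown_as_free=True, UNKNOWN cells are treated as traversable
    (the optimistic assumption).
    """
    rows, cols = len(grid), len(grid[0])

    def passable(cell):
        value = grid[cell[0]][cell[1]]
        return value == FREE or (unknown_as_free and value == UNKNOWN)

    if not (passable(start) and passable(goal)):
        return None

    queue = deque([(start, 0)])
    visited = {start}
    while queue:
        (r, c), dist = queue.popleft()
        if (r, c) == goal:
            return dist
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (r + dr, c + dc)
            in_bounds = 0 <= nxt[0] < rows and 0 <= nxt[1] < cols
            if in_bounds and nxt not in visited and passable(nxt):
                visited.add(nxt)
                queue.append((nxt, dist + 1))
    return None


def apply_prediction(grid, predicted):
    """Replace UNKNOWN cells with predicted labels.

    `predicted` is an assumed stand-in for an inpainting model's output.
    """
    return [
        [predicted[r][c] if value == UNKNOWN else value
         for c, value in enumerate(row)]
        for r, row in enumerate(grid)
    ]


observed = [
    [FREE, UNKNOWN, FREE],
    [FREE, OCCUPIED, FREE],
    [FREE, FREE, FREE],
]
# Assumed prediction: the occluded cell is actually occupied.
predicted = [
    [FREE, OCCUPIED, FREE],
    [FREE, OCCUPIED, FREE],
    [FREE, FREE, FREE],
]

optimistic = shortest_path_length(observed, (0, 0), (0, 2))
informed = shortest_path_length(apply_prediction(observed, predicted), (0, 0), (0, 2))
```

In this toy case the optimistic planner commits to a 2-step route through the occluded cell, while the planner that uses the predicted map takes the 6-step detour up front. If the occluded cell really is occupied, the optimistic route would have to be abandoned and replanned during execution.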

DeepSemanticHPPC: Hypothesis-based Planning over Uncertain Semantic Point Clouds

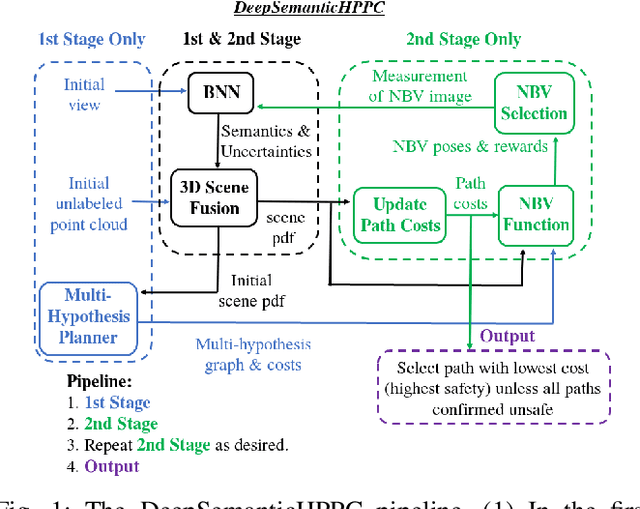

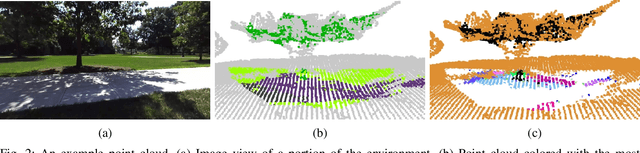

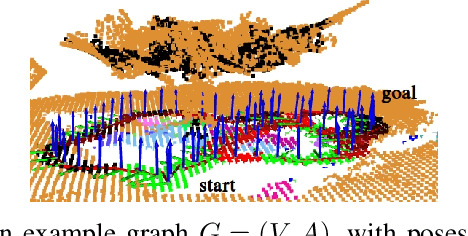

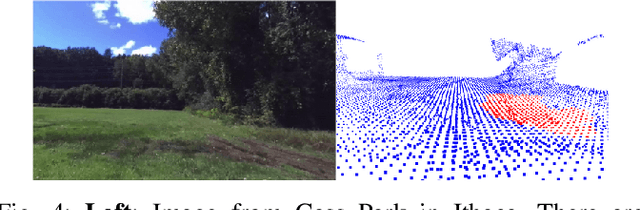

Mar 06, 2020 · Yutao Han, Hubert Lin, Jacopo Banfi, Kavita Bala, Mark Campbell

Planning in unstructured environments is challenging: it relies on sensing, perception, scene reconstruction, and reasoning about various uncertainties. We propose DeepSemanticHPPC, a novel uncertainty-aware hypothesis-based planner for unstructured environments. Our algorithmic pipeline consists of: a deep Bayesian neural network that segments surfaces with uncertainty estimates; a flexible point cloud scene representation; a next-best-view planner that minimizes the uncertainty of scene semantics using sparse visual measurements; and a hypothesis-based path planner that proposes multiple kinematically feasible paths with evolving safety confidences given next-best-view measurements. Our pipeline iteratively decreases semantic uncertainty along planned paths, filtering out unsafe paths with high confidence. We show that our framework plans safe paths in real-world environments where existing path planners typically fail.
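The idea of keeping several path hypotheses and discarding the unsafe ones can be illustrated with a toy sketch. This is not the DeepSemanticHPPC algorithm: it assumes each path point carries an independent probability of lying on a traversable surface, scores a path by the product of those probabilities, and drops paths below a threshold. The function names, the path names, the probabilities, and the `min_safety` value are all hypothetical.

```python
import math


def path_safety(point_safe_probs):
    """Probability that every point on the path is safe.

    Assumes the per-point probabilities are independent (a simplification).
    """
    return math.prod(point_safe_probs)


def filter_paths(paths, min_safety=0.9):
    """Keep only path hypotheses whose safety score meets the threshold."""
    scored = {name: path_safety(probs) for name, probs in paths.items()}
    return {name: score for name, score in scored.items() if score >= min_safety}


# Hypothetical per-point "traversable" probabilities for two candidate paths.
candidate_paths = {
    "left": [0.99, 0.98, 0.99],
    "right": [0.99, 0.60, 0.99],  # one highly uncertain point
}

safe_paths = filter_paths(candidate_paths)
```

Under these assumptions only the `left` hypothesis survives. A new measurement that raises the uncertain point's probability on `right` would change its score on the next iteration, which loosely mirrors the "evolving safety confidences" described above.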

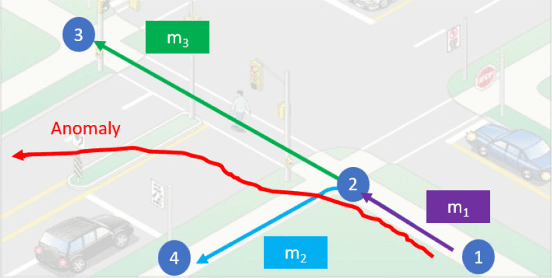

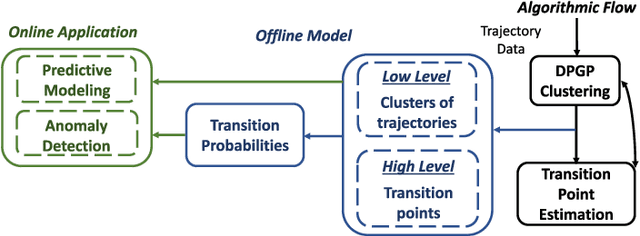

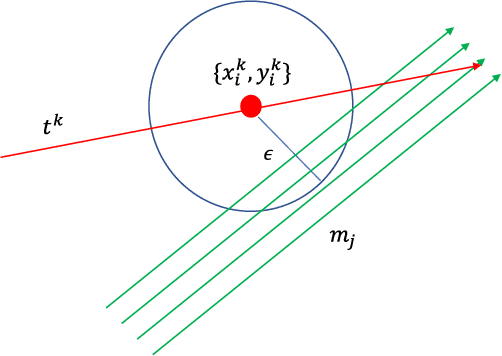

Pedestrian Motion Model Using Non-Parametric Trajectory Clustering and Discrete Transition Points

Jan 28, 2020 · Yutao Han, Rina Tse, Mark Campbell

This paper presents a pedestrian motion model that includes both low-level trajectory patterns and high-level discrete transitions. The inclusion of both levels creates a more general predictive model, allowing for more meaningful prediction and reasoning about pedestrian trajectories than the current state of the art. The model uses an iterative clustering algorithm with (1) Dirichlet Process Gaussian Processes to cluster trajectories into continuous motion patterns and (2) hypothesis testing to identify discrete transitions in the data, called transition points. The model iteratively splits full trajectories into sub-trajectory clusters based on transition points, where pedestrians make discrete decisions. State transition probabilities are then learned over the transition points and trajectory clusters. The model is used for online prediction of motion and detection of anomalous trajectories. The proposed model is validated on the Duke MTMC dataset to demonstrate identification of low-level trajectory clusters and high-level transitions, and the ability to predict pedestrian motion and detect anomalies online with high accuracy.
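The step where state transition probabilities are learned over clusters can be sketched as follows. The abstract does not say how the probabilities are estimated, so this sketch assumes simple maximum-likelihood counting over sequences of cluster labels, and flags a sequence as anomalous when its likelihood under the learned transitions is zero. The cluster labels (`"A"`, `"B"`, ...) and function names are hypothetical, and the clustering itself (Dirichlet Process Gaussian Processes, hypothesis testing) is not shown.

```python
from collections import Counter, defaultdict


def estimate_transition_probabilities(sequences):
    """Estimate P(next cluster | current cluster) by counting transitions."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for current, nxt in zip(seq, seq[1:]):
            counts[current][nxt] += 1

    probs = {}
    for current, next_counts in counts.items():
        total = sum(next_counts.values())
        probs[current] = {nxt: n / total for nxt, n in next_counts.items()}
    return probs


def sequence_likelihood(seq, probs):
    """Likelihood of a cluster sequence; unseen transitions get probability 0."""
    likelihood = 1.0
    for current, nxt in zip(seq, seq[1:]):
        likelihood *= probs.get(current, {}).get(nxt, 0.0)
    return likelihood


# Hypothetical training data: each list is one pedestrian's sequence of
# sub-trajectory cluster labels, split at transition points.
training_sequences = [
    ["A", "B", "C"],
    ["A", "B", "D"],
    ["A", "C"],
    ["A", "B", "C"],
]

transition_probs = estimate_transition_probabilities(training_sequences)
seen_likelihood = sequence_likelihood(["A", "B", "D"], transition_probs)
unseen_likelihood = sequence_likelihood(["A", "D"], transition_probs)
```

With this toy data, `transition_probs["A"]` assigns 0.75 to `"B"` and 0.25 to `"C"`, so the most likely next cluster after `"A"` is `"B"`. The sequence `["A", "D"]` never occurs in training and receives likelihood 0, which a downstream check could treat as anomalous.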

* 9 pages, 9 figures, published in IEEE Robotics and Automation Letters (RA-L). Video attachment at: https://www.youtube.com/watch?v=6z5NYIcXV_M&
