Learning to bag with a simulation-free reinforcement learning framework for robots

Oct 22, 2023Francisco Munguia-Galeano, Jihong Zhu, Juan David Hernández, Ze Ji

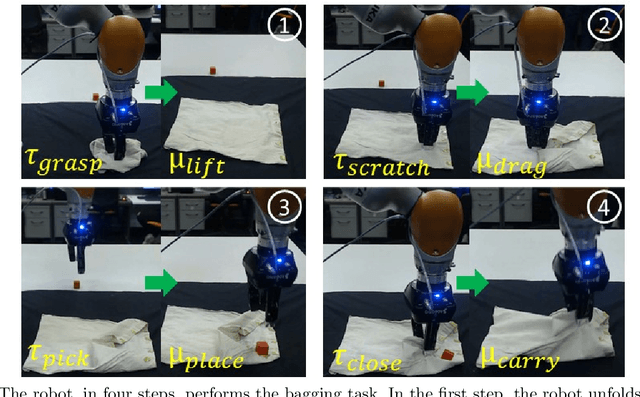

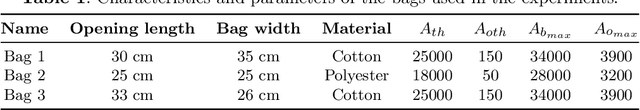

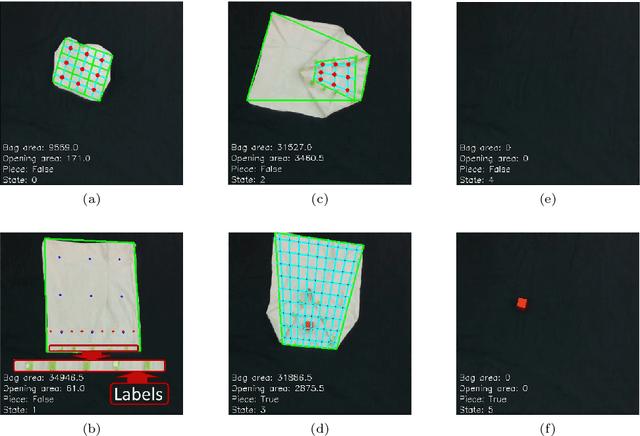

Bagging is an essential skill that humans perform in their daily activities. However, deformable objects, such as bags, are complex for robots to manipulate. This paper presents an efficient learning-based framework that enables robots to learn bagging. The novelty of this framework is its ability to perform bagging without relying on simulations. The learning process is accomplished through a reinforcement learning algorithm introduced in this work, designed to find the best grasping points of the bag based on a set of compact state representations. The framework utilizes a set of primitive actions and represents the task in five states. In our experiments, the framework reaches a 60 % and 80 % of success rate after around three hours of training in the real world when starting the bagging task from folded and unfolded, respectively. Finally, we test the trained model with two more bags of different sizes to evaluate its generalizability.

Deep Reinforcement Learning with Explicit Context Representation

Oct 15, 2023Francisco Munguia-Galeano, Ah-Hwee Tan, Ze Ji

Reinforcement learning (RL) has shown an outstanding capability for solving complex computational problems. However, most RL algorithms lack an explicit method that would allow learning from contextual information. Humans use context to identify patterns and relations among elements in the environment, along with how to avoid making wrong actions. On the other hand, what may seem like an obviously wrong decision from a human perspective could take hundreds of steps for an RL agent to learn to avoid. This paper proposes a framework for discrete environments called Iota explicit context representation (IECR). The framework involves representing each state using contextual key frames (CKFs), which can then be used to extract a function that represents the affordances of the state; in addition, two loss functions are introduced with respect to the affordances of the state. The novelty of the IECR framework lies in its capacity to extract contextual information from the environment and learn from the CKFs' representation. We validate the framework by developing four new algorithms that learn using context: Iota deep Q-network (IDQN), Iota double deep Q-network (IDDQN), Iota dueling deep Q-network (IDuDQN), and Iota dueling double deep Q-network (IDDDQN). Furthermore, we evaluate the framework and the new algorithms in five discrete environments. We show that all the algorithms, which use contextual information, converge in around 40,000 training steps of the neural networks, significantly outperforming their state-of-the-art equivalents.

Rethink Baseline of Integrated Gradients from the Perspective of Shapley Value

Oct 10, 2023Shuyang Liu, Zixuan Chen, Ge Shi, Ji Wang, Changjie Fan, Yu Xiong, Runze Wu Yujing Hu, Ze Ji, Yang Gao

Numerous approaches have attempted to interpret deep neural networks (DNNs) by attributing the prediction of DNN to its input features. One of the well-studied attribution methods is Integrated Gradients (IG). Specifically, the choice of baselines for IG is a critical consideration for generating meaningful and unbiased explanations for model predictions in different scenarios. However, current practice of exploiting a single baseline fails to fulfill this ambition, thus demanding multiple baselines. Fortunately, the inherent connection between IG and Aumann-Shapley Value forms a unique perspective to rethink the design of baselines. Under certain hypothesis, we theoretically analyse that a set of baseline aligns with the coalitions in Shapley Value. Thus, we propose a novel baseline construction method called Shapley Integrated Gradients (SIG) that searches for a set of baselines by proportional sampling to partly simulate the computation path of Shapley Value. Simulations on GridWorld show that SIG approximates the proportion of Shapley Values. Furthermore, experiments conducted on various image tasks demonstrate that compared to IG using other baseline methods, SIG exhibits an improved estimation of feature's contribution, offers more consistent explanations across diverse applications, and is generic to distinct data types or instances with insignificant computational overhead.

CasIL: Cognizing and Imitating Skills via a Dual Cognition-Action Architecture

Sep 28, 2023Zixuan Chen, Ze Ji, Shuyang Liu, Jing Huo, Yiyu Chen, Yang Gao

Enabling robots to effectively imitate expert skills in longhorizon tasks such as locomotion, manipulation, and more, poses a long-standing challenge. Existing imitation learning (IL) approaches for robots still grapple with sub-optimal performance in complex tasks. In this paper, we consider how this challenge can be addressed within the human cognitive priors. Heuristically, we extend the usual notion of action to a dual Cognition (high-level)-Action (low-level) architecture by introducing intuitive human cognitive priors, and propose a novel skill IL framework through human-robot interaction, called Cognition-Action-based Skill Imitation Learning (CasIL), for the robotic agent to effectively cognize and imitate the critical skills from raw visual demonstrations. CasIL enables both cognition and action imitation, while high-level skill cognition explicitly guides low-level primitive actions, providing robustness and reliability to the entire skill IL process. We evaluated our method on MuJoCo and RLBench benchmarks, as well as on the obstacle avoidance and point-goal navigation tasks for quadrupedal robot locomotion. Experimental results show that our CasIL consistently achieves competitive and robust skill imitation capability compared to other counterparts in a variety of long-horizon robotic tasks.

Recent Advances of Deep Robotic Affordance Learning: A Reinforcement Learning Perspective

Mar 10, 2023Xintong Yang, Ze Ji, Jing Wu, Yu-kun Lai

As a popular concept proposed in the field of psychology, affordance has been regarded as one of the important abilities that enable humans to understand and interact with the environment. Briefly, it captures the possibilities and effects of the actions of an agent applied to a specific object or, more generally, a part of the environment. This paper provides a short review of the recent developments of deep robotic affordance learning (DRAL), which aims to develop data-driven methods that use the concept of affordance to aid in robotic tasks. We first classify these papers from a reinforcement learning (RL) perspective, and draw connections between RL and affordances. The technical details of each category are discussed and their limitations identified. We further summarise them and identify future challenges from the aspects of observations, actions, affordance representation, data-collection and real-world deployment. A final remark is given at the end to propose a promising future direction of the RL-based affordance definition to include the predictions of arbitrary action consequences.

Abstract Demonstrations and Adaptive Exploration for Efficient and Stable Multi-step Sparse Reward Reinforcement Learning

Jul 19, 2022Xintong Yang, Ze Ji, Jing Wu, Yu-kun Lai

Although Deep Reinforcement Learning (DRL) has been popular in many disciplines including robotics, state-of-the-art DRL algorithms still struggle to learn long-horizon, multi-step and sparse reward tasks, such as stacking several blocks given only a task-completion reward signal. To improve learning efficiency for such tasks, this paper proposes a DRL exploration technique, termed A^2, which integrates two components inspired by human experiences: Abstract demonstrations and Adaptive exploration. A^2 starts by decomposing a complex task into subtasks, and then provides the correct orders of subtasks to learn. During training, the agent explores the environment adaptively, acting more deterministically for well-mastered subtasks and more stochastically for ill-learnt subtasks. Ablation and comparative experiments are conducted on several grid-world tasks and three robotic manipulation tasks. We demonstrate that A^2 can aid popular DRL algorithms (DQN, DDPG, and SAC) to learn more efficiently and stably in these environments.

An Open-Source Multi-Goal Reinforcement Learning Environment for Robotic Manipulation with Pybullet

May 12, 2021Xintong Yang, Ze Ji, Jing Wu, Yu-Kun Lai

This work re-implements the OpenAI Gym multi-goal robotic manipulation environment, originally based on the commercial Mujoco engine, onto the open-source Pybullet engine. By comparing the performances of the Hindsight Experience Replay-aided Deep Deterministic Policy Gradient agent on both environments, we demonstrate our successful re-implementation of the original environment. Besides, we provide users with new APIs to access a joint control mode, image observations and goals with customisable camera and a built-in on-hand camera. We further design a set of multi-step, multi-goal, long-horizon and sparse reward robotic manipulation tasks, aiming to inspire new goal-conditioned reinforcement learning algorithms for such challenges. We use a simple, human-prior-based curriculum learning method to benchmark the multi-step manipulation tasks. Discussions about future research opportunities regarding this kind of tasks are also provided.

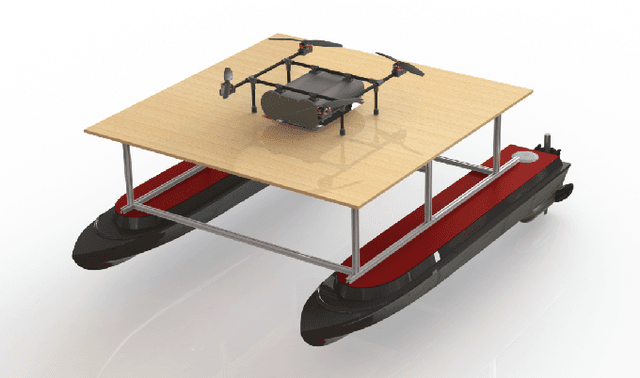

Design, Integration and Sea Trials of 3D Printed Unmanned Aerial Vehicle and Unmanned Surface Vehicle for Cooperative Missions

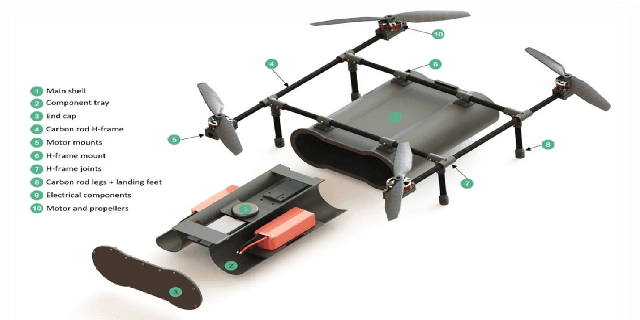

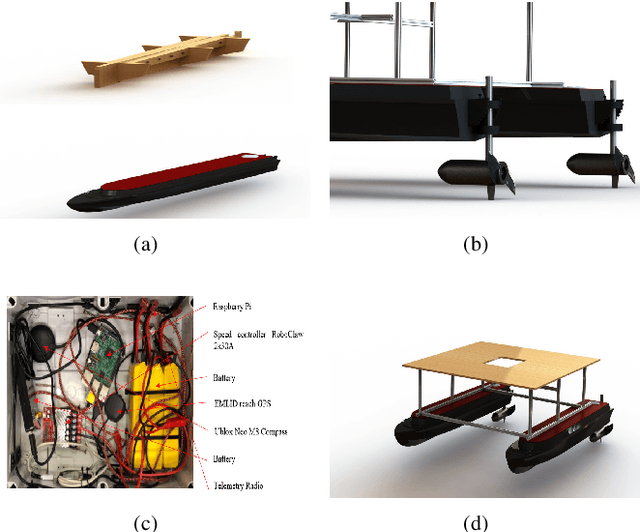

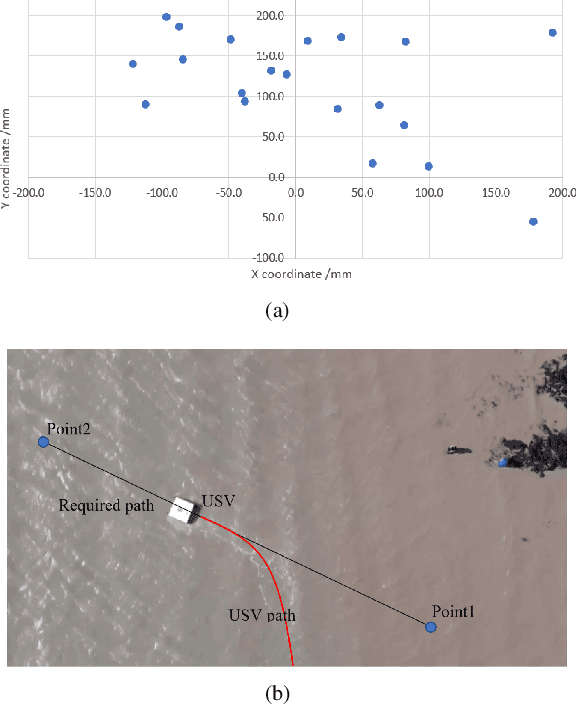

Feb 23, 2021Hanlin Niu, Ze Ji, Pietro Liguori, Hujun Yin, Joaquin Carrasco

In recent years, Unmanned Surface Vehicles (USV) have been extensively deployed for maritime applications. However, USV has a limited detection range with sensor installed at the same elevation with the targets. In this research, we propose a cooperative Unmanned Aerial Vehicle - Unmanned Surface Vehicle (UAV-USV) platform to improve the detection range of USV. A floatable and waterproof UAV is designed and 3D printed, which allows it to land on the sea. A catamaran USV and landing platform are also developed. To land UAV on the USV precisely in various lighting conditions, IR beacon detector and IR beacon are implemented on the UAV and USV, respectively. Finally, a two-phase UAV precise landing method, USV control algorithm and USV path following algorithm are proposed and tested.

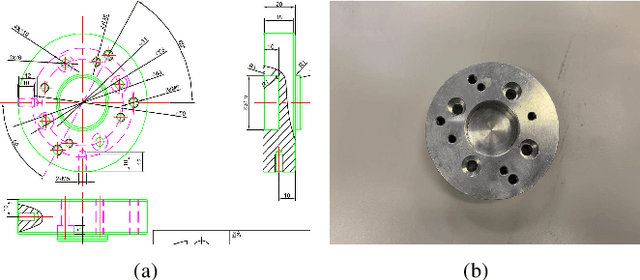

3D Vision-guided Pick-and-Place Using Kuka LBR iiwa Robot

Feb 23, 2021Hanlin Niu, Ze Ji, Zihang Zhu, Hujun Yin, Joaquin Carrasco

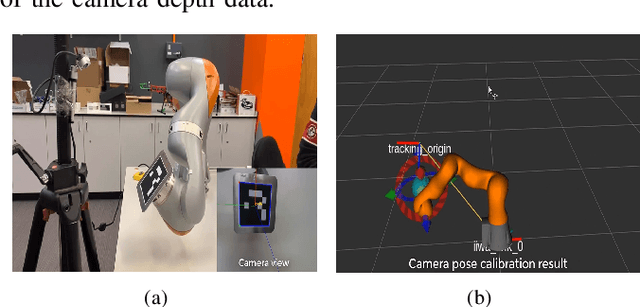

This paper presents the development of a control system for vision-guided pick-and-place tasks using a robot arm equipped with a 3D camera. The main steps include camera intrinsic and extrinsic calibration, hand-eye calibration, initial object pose registration, objects pose alignment algorithm, and pick-and-place execution. The proposed system allows the robot be able to to pick and place object with limited times of registering a new object and the developed software can be applied for new object scenario quickly. The integrated system was tested using the hardware combination of kuka iiwa, Robotiq grippers (two finger gripper and three finger gripper) and 3D cameras (Intel realsense D415 camera, Intel realsense D435 camera, Microsoft Kinect V2). The whole system can also be modified for the combination of other robotic arm, gripper and 3D camera.

Accelerated Sim-to-Real Deep Reinforcement Learning: Learning Collision Avoidance from Human Player

Feb 23, 2021Hanlin Niu, Ze Ji, Farshad Arvin, Barry Lennox, Hujun Yin, Joaquin Carrasco

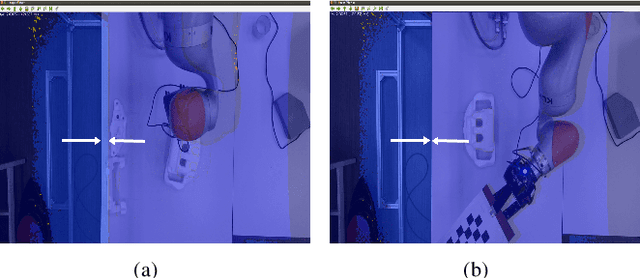

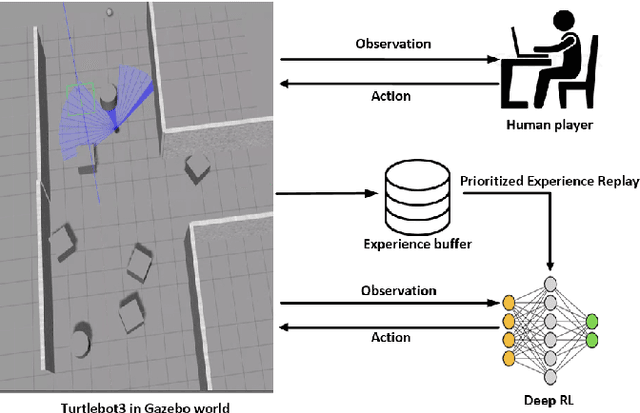

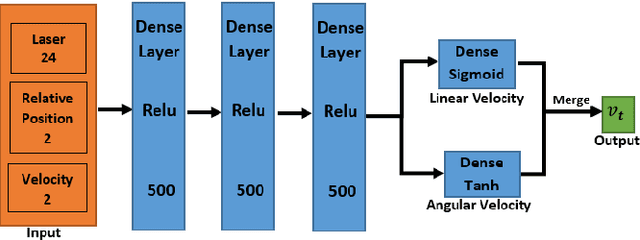

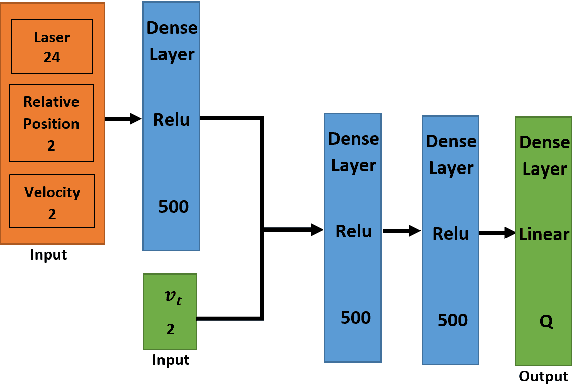

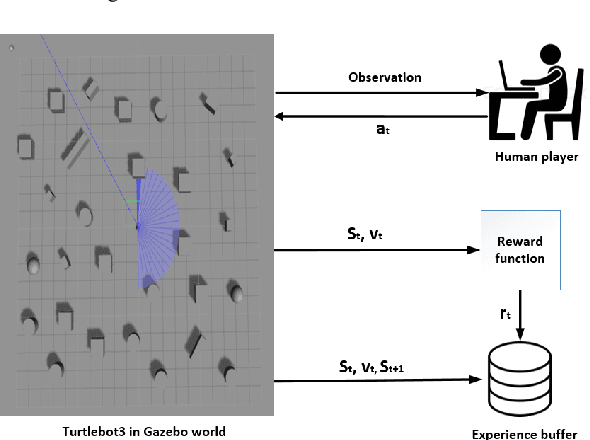

This paper presents a sensor-level mapless collision avoidance algorithm for use in mobile robots that map raw sensor data to linear and angular velocities and navigate in an unknown environment without a map. An efficient training strategy is proposed to allow a robot to learn from both human experience data and self-exploratory data. A game format simulation framework is designed to allow the human player to tele-operate the mobile robot to a goal and human action is also scored using the reward function. Both human player data and self-playing data are sampled using prioritized experience replay algorithm. The proposed algorithm and training strategy have been evaluated in two different experimental configurations: \textit{Environment 1}, a simulated cluttered environment, and \textit{Environment 2}, a simulated corridor environment, to investigate the performance. It was demonstrated that the proposed method achieved the same level of reward using only 16\% of the training steps required by the standard Deep Deterministic Policy Gradient (DDPG) method in Environment 1 and 20\% of that in Environment 2. In the evaluation of 20 random missions, the proposed method achieved no collision in less than 2~h and 2.5~h of training time in the two Gazebo environments respectively. The method also generated smoother trajectories than DDPG. The proposed method has also been implemented on a real robot in the real-world environment for performance evaluation. We can confirm that the trained model with the simulation software can be directly applied into the real-world scenario without further fine-tuning, further demonstrating its higher robustness than DDPG. The video and code are available: https://youtu.be/BmwxevgsdGc https://github.com/hanlinniu/turtlebot3_ddpg_collision_avoidance

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge