Mutual information is copula entropy

Aug 06, 2008 · Jian Ma, Zengqi Sun

We prove that mutual information is equivalent to negative copula entropy and, based on this result, propose a method for estimating mutual information.
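The identity stated in the abstract suggests a simple estimation recipe: map each variable to its ranks (the empirical copula), estimate the entropy of the resulting copula density, and negate it. The following is a minimal sketch under stated assumptions, not necessarily the estimator used in the paper: the histogram entropy estimate, the `bins` parameter, and all function names are illustrative choices.

```python
import math


def rank_transform(xs):
    """Map a sample to pseudo-observations in (0, 1) using its ranks."""
    n = len(xs)
    order = sorted(range(n), key=lambda i: xs[i])
    u = [0.0] * n
    for rank, i in enumerate(order, start=1):
        u[i] = rank / (n + 1)
    return u


def copula_entropy_2d(x, y, bins=10):
    """Histogram estimate of the entropy of the empirical copula density.

    Assumption: a plain equal-width histogram on the unit square stands in
    for whatever entropy estimator one prefers.
    """
    u, v = rank_transform(x), rank_transform(y)
    n = len(u)
    counts = [[0] * bins for _ in range(bins)]
    for a, b in zip(u, v):
        row = min(int(a * bins), bins - 1)
        col = min(int(b * bins), bins - 1)
        counts[row][col] += 1
    cell_area = 1.0 / (bins * bins)
    h = 0.0
    for row in counts:
        for c in row:
            if c:
                p = c / n
                # Density in the cell is p / cell_area; entropy is -sum p * log(density).
                h -= p * math.log(p / cell_area)
    return h


def mutual_information(x, y, bins=10):
    """Mutual information estimated as the negative copula entropy (in nats)."""
    return -copula_entropy_2d(x, y, bins)
```

Because only ranks enter the estimate, it is unchanged by strictly increasing transformations of either variable, which reflects the fact that the copula captures dependence separately from the marginal distributions.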

Dependence Structure Estimation via Copula

Apr 28, 2008 · Jian Ma, Zengqi Sun

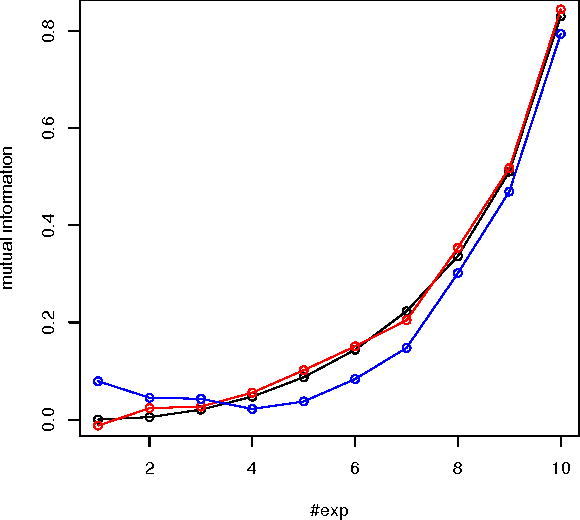

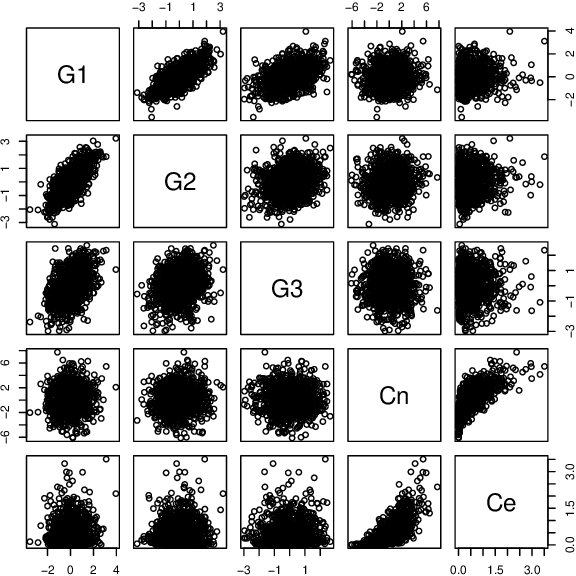

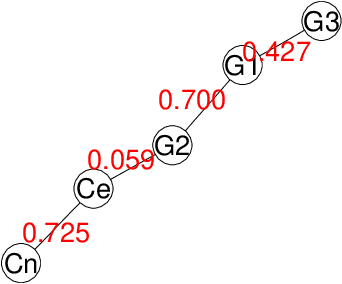

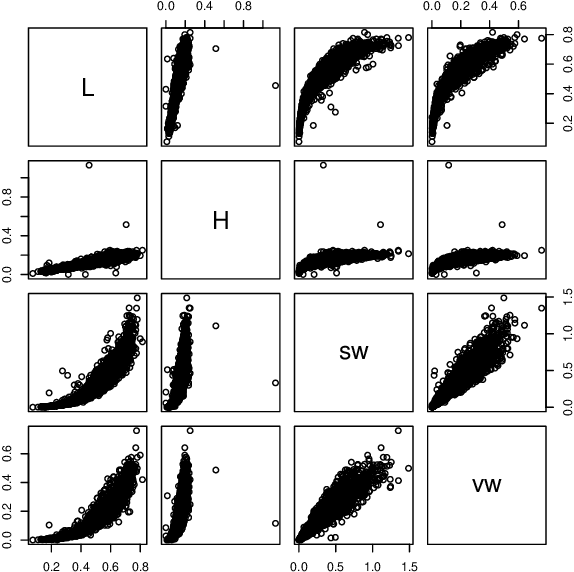

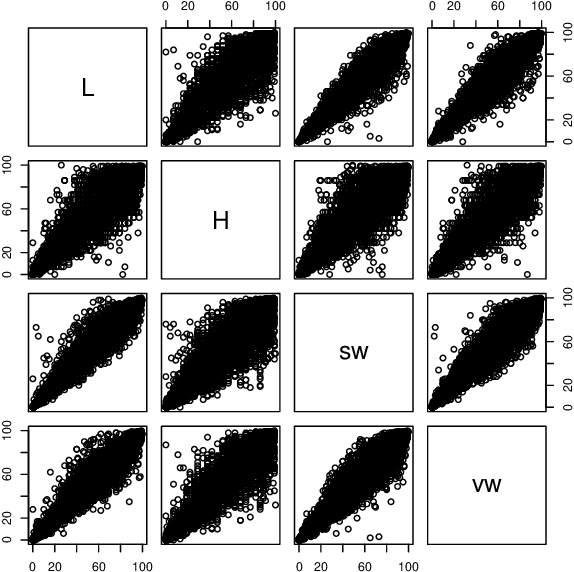

We propose a new framework for dependence structure learning via copulas. Copula theory is a statistical theory of dependence and measures of association. Graphical models are treated as a special case of copula families, called product copulas. In this paper, we present a nonparametric algorithm for copula estimation. We then propose a Chow-Liu-like method, based on a copula-derived dependence measure, that estimates a maximum spanning product copula using only bivariate dependence relations. One advantage of the framework is that learning with the empirical copula focuses only on the dependence relations among random variables and requires no knowledge of the properties of the individual variables. Another advantage is that the copula is a universal model of dependence, so the framework can be generalized to handle a wide range of complex dependence relations. Experiments on both simulated data and real application data demonstrate the effectiveness of the proposed method.
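The Chow-Liu-like step described above can be sketched as: compute rank-based pseudo-observations for each variable, score every pair of variables with a dependence measure that depends only on the copula, and keep the maximum spanning tree over those scores. This is a hedged illustration rather than the paper's algorithm. The use of absolute Spearman's rho as the pairwise measure, Kruskal's algorithm for the tree, and all function names are assumptions made for the example.

```python
import math


def pseudo_obs(xs):
    """Rank-based pseudo-observations in (0, 1): the empirical copula margins."""
    n = len(xs)
    order = sorted(range(n), key=lambda i: xs[i])
    u = [0.0] * n
    for rank, i in enumerate(order, start=1):
        u[i] = rank / (n + 1)
    return u


def spearman_abs(u, v):
    """Absolute Spearman's rho: Pearson correlation of the pseudo-observations."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    var_u = sum((a - mu) ** 2 for a in u)
    var_v = sum((b - mv) ** 2 for b in v)
    return abs(cov) / math.sqrt(var_u * var_v)


def max_spanning_tree(columns):
    """Return the edges (i, j), i < j, of a maximum spanning tree.

    `columns` is a list of equally long samples, one per variable.
    Edge weights are pairwise dependence scores; Kruskal's algorithm
    with a union-find structure selects the tree.
    """
    d = len(columns)
    us = [pseudo_obs(c) for c in columns]
    edges = sorted(
        ((spearman_abs(us[i], us[j]), i, j)
         for i in range(d) for j in range(i + 1, d)),
        reverse=True,
    )
    parent = list(range(d))

    def find(a):
        # Follow parent links to the root, compressing the path on the way.
        while parent[a] != a:
            parent[a] = parent[parent[a]]
            a = parent[a]
        return a

    tree = []
    for _, i, j in edges:
        ri, rj = find(i), find(j)
        if ri != rj:  # adding the edge does not create a cycle
            parent[ri] = rj
            tree.append((i, j))
    return tree
```

Since the scores are computed from ranks alone, the recovered tree does not depend on the marginal distributions of the individual variables, which is the property the abstract highlights. Any other copula-based pairwise measure, such as an estimate of mutual information, could replace `spearman_abs` without changing the rest of the sketch.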

Copula Component Analysis

Mar 20, 2007 · Jian Ma, Zengqi Sun

A framework named Copula Component Analysis (CCA) for blind source separation is proposed as a generalization of Independent Component Analysis (ICA). Unlike ICA, which assumes that the sources are independent, CCA allows the underlying components to be dependent, with a dependence structure represented by a copula. By incorporating the dependence structure, more accurate estimation is possible in principle when the independence assumption is violated. A two-phase inference method for CCA is introduced, based on the notion of multidimensional ICA.
