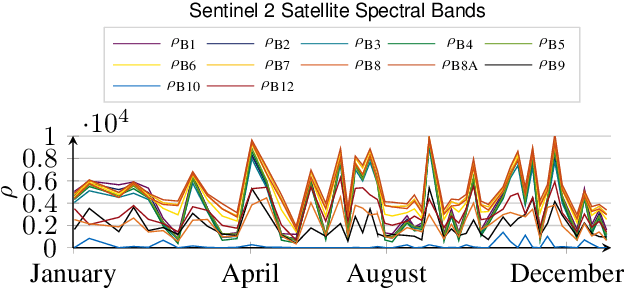

Weakly supervised covariance matrices alignment through Stiefel matrices estimation for MEG applications

Jan 24, 2024Antoine Collas, Rémi Flamary, Alexandre Gramfort

This paper introduces a novel domain adaptation technique for time series data, called Mixing model Stiefel Adaptation (MSA), specifically addressing the challenge of limited labeled signals in the target dataset. Leveraging a domain-dependent mixing model and the optimal transport domain adaptation assumption, we exploit abundant unlabeled data in the target domain to ensure effective prediction by establishing pairwise correspondence with equivalent signal variances between domains. Theoretical foundations are laid for identifying crucial Stiefel matrices, essential for recovering underlying signal variances from a Riemannian representation of observed signal covariances. We propose an integrated cost function that simultaneously learns these matrices, pairwise domain relationships, and a predictor, classifier, or regressor, depending on the task. Applied to neuroscience problems, MSA outperforms recent methods in brain-age regression with task variations using magnetoencephalography (MEG) signals from the Cam-CAN dataset.

The Fisher-Rao geometry of CES distributions

Oct 02, 2023Florent Bouchard, Arnaud Breloy, Antoine Collas, Alexandre Renaux, Guillaume Ginolhac

When dealing with a parametric statistical model, a Riemannian manifold can naturally appear by endowing the parameter space with the Fisher information metric. The geometry induced on the parameters by this metric is then referred to as the Fisher-Rao information geometry. Interestingly, this yields a point of view that allows for leveragingmany tools from differential geometry. After a brief introduction about these concepts, we will present some practical uses of these geometric tools in the framework of elliptical distributions. This second part of the exposition is divided into three main axes: Riemannian optimization for covariance matrix estimation, Intrinsic Cram\'er-Rao bounds, and classification using Riemannian distances.

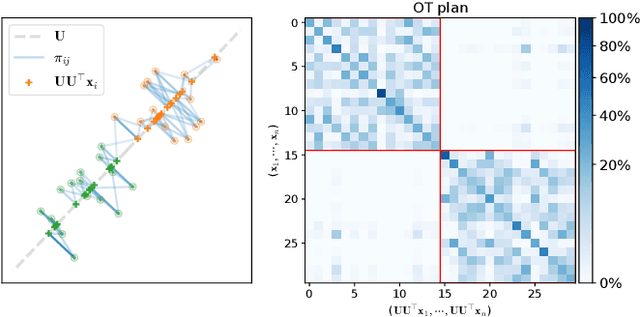

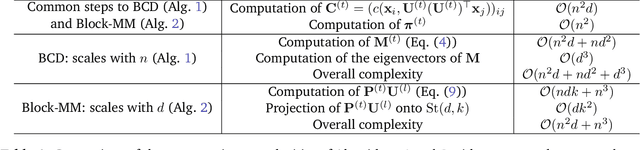

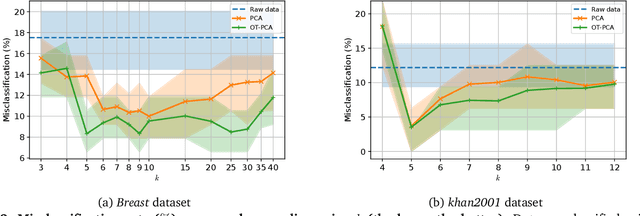

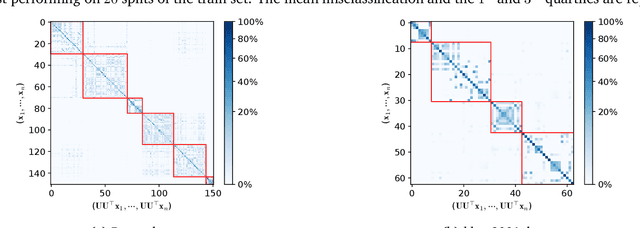

Entropic Wasserstein Component Analysis

Mar 09, 2023Antoine Collas, Titouan Vayer, Rémi Flamary, Arnaud Breloy

Dimension reduction (DR) methods provide systematic approaches for analyzing high-dimensional data. A key requirement for DR is to incorporate global dependencies among original and embedded samples while preserving clusters in the embedding space. To achieve this, we combine the principles of optimal transport (OT) and principal component analysis (PCA). Our method seeks the best linear subspace that minimizes reconstruction error using entropic OT, which naturally encodes the neighborhood information of the samples. From an algorithmic standpoint, we propose an efficient block-majorization-minimization solver over the Stiefel manifold. Our experimental results demonstrate that our approach can effectively preserve high-dimensional clusters, leading to more interpretable and effective embeddings. Python code of the algorithms and experiments is available online.

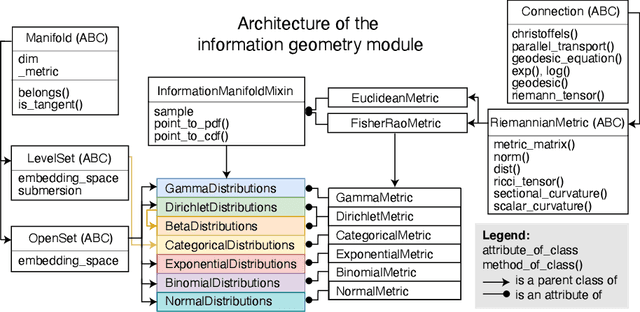

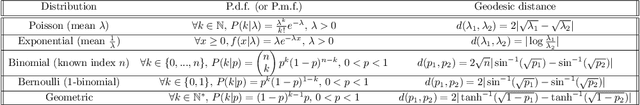

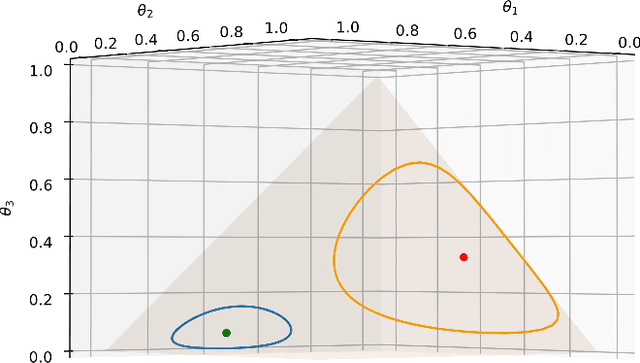

Parametric information geometry with the package Geomstats

Nov 21, 2022Alice Le Brigant, Jules Deschamps, Antoine Collas, Nina Miolane

We introduce the information geometry module of the Python package Geomstats. The module first implements Fisher-Rao Riemannian manifolds of widely used parametric families of probability distributions, such as normal, gamma, beta, Dirichlet distributions, and more. The module further gives the Fisher-Rao Riemannian geometry of any parametric family of distributions of interest, given a parameterized probability density function as input. The implemented Riemannian geometry tools allow users to compare, average, interpolate between distributions inside a given family. Importantly, such capabilities open the door to statistics and machine learning on probability distributions. We present the object-oriented implementation of the module along with illustrative examples and show how it can be used to perform learning on manifolds of parametric probability distributions.

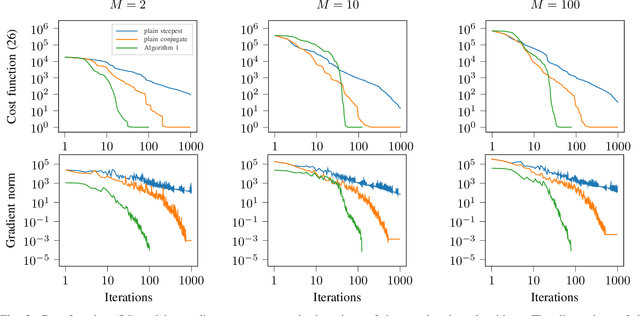

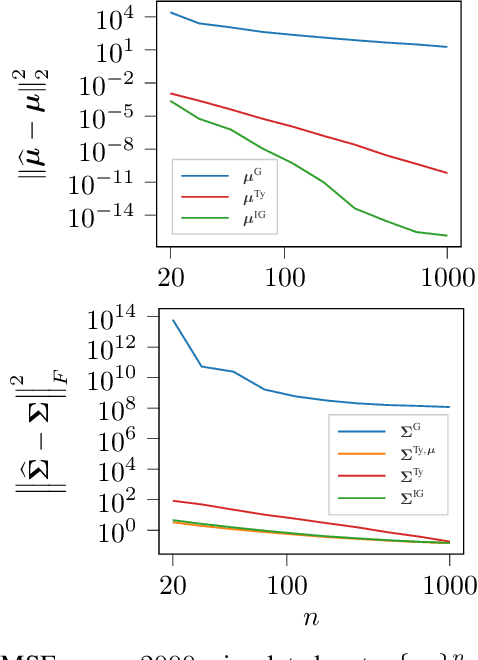

Riemannian optimization for non-centered mixture of scaled Gaussian distributions

Sep 07, 2022Antoine Collas, Arnaud Breloy, Chengfang Ren, Guillaume Ginolhac, Jean-Philippe Ovarlez

This paper studies the statistical model of the non-centered mixture of scaled Gaussian distributions (NC-MSG). Using the Fisher-Rao information geometry associated to this distribution, we derive a Riemannian gradient descent algorithm. This algorithm is leveraged for two minimization problems. The first one is the minimization of a regularized negative log- likelihood (NLL). The latter makes the trade-off between a white Gaussian distribution and the NC-MSG. Conditions on the regularization are given so that the existence of a minimum to this problem is guaranteed without assumptions on the samples. Then, the Kullback-Leibler (KL) divergence between two NC-MSG is derived. This divergence enables us to define a minimization problem to compute centers of mass of several NC-MSGs. The proposed Riemannian gradient descent algorithm is leveraged to solve this second minimization problem. Numerical experiments show the good performance and the speed of the Riemannian gradient descent on the two problems. Finally, a Nearest centroid classifier is implemented leveraging the KL divergence and its associated center of mass. Applied on the large scale dataset Breizhcrops, this classifier shows good accuracies as well as robustness to rigid transformations of the test set.

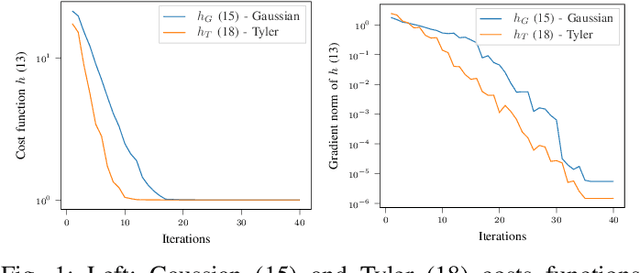

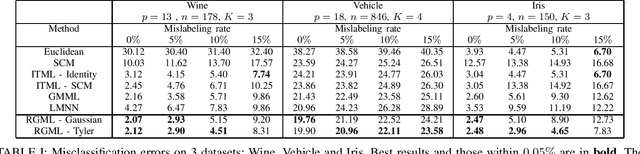

Robust Geometric Metric Learning

Feb 23, 2022Antoine Collas, Arnaud Breloy, Guillaume Ginolhac, Chengfang Ren, Jean-Philippe Ovarlez

This paper proposes new algorithms for the metric learning problem. We start by noticing that several classical metric learning formulations from the literature can be viewed as modified covariance matrix estimation problems. Leveraging this point of view, a general approach, called Robust Geometric Metric Learning (RGML), is then studied. This method aims at simultaneously estimating the covariance matrix of each class while shrinking them towards their (unknown) barycenter. We focus on two specific costs functions: one associated with the Gaussian likelihood (RGML Gaussian), and one with Tyler's M -estimator (RGML Tyler). In both, the barycenter is defined with the Riemannian distance, which enjoys nice properties of geodesic convexity and affine invariance. The optimization is performed using the Riemannian geometry of symmetric positive definite matrices and its submanifold of unit determinant. Finally, the performance of RGML is asserted on real datasets. Strong performance is exhibited while being robust to mislabeled data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge