Improving End-to-End Speech Translation by Imitation-Based Knowledge Distillation with Synthetic Transcripts

Jul 17, 2023 · Rebekka Hubert, Artem Sokolov, Stefan Riezler

End-to-end automatic speech translation (AST) relies on data that combines audio inputs with text translation outputs. Previous work used existing large parallel corpora of transcriptions and translations in a knowledge distillation (KD) setup to distill a neural machine translation (NMT) model into an AST student model. While KD allows using larger pretrained models, the reliance of previous KD approaches on manual audio transcripts in the data pipeline restricts the applicability of this framework to AST. We present an imitation learning approach where a teacher NMT system corrects the errors of an AST student without relying on manual transcripts. We show that the NMT teacher can recover from errors in automatic transcriptions and is able to correct erroneous translations of the AST student, leading to improvements of about 4 BLEU points over the standard AST end-to-end baseline on both the English-German CoVoST-2 and MuST-C datasets. Code and data are publicly available at https://github.com/HubReb/imitkd_ast/releases/tag/v1.1.

* IWSLT 2023, corrected version

Make Every Example Count: On Stability and Utility of Self-Influence for Learning from Noisy NLP Datasets

Feb 27, 2023 · Irina Bejan, Artem Sokolov, Katja Filippova

Increasingly larger datasets have become a standard ingredient to advancing the state of the art in NLP. However, data quality might have already become the bottleneck to unlock further gains. Given the diversity and the sizes of modern datasets, standard data filtering is not straightforward to apply, because of the multifacetedness of the harmful data and elusiveness of filtering rules that would generalize across multiple tasks. We study the fitness of task-agnostic self-influence scores of training examples for data cleaning, analyze their efficacy in capturing naturally occurring outliers, and investigate to what extent self-influence based data cleaning can improve downstream performance in machine translation, question answering and text classification, building on recent approaches to self-influence calculation and automated curriculum learning.

Large Raw Emotional Dataset with Aggregation Mechanism

Dec 23, 2022 · Vladimir Kondratenko, Artem Sokolov, Nikolay Karpov, Oleg Kutuzov, Nikita Savushkin, Fyodor Minkin

We present a new data set for speech emotion recognition (SER) tasks called Dusha. The corpus contains approximately 350 hours of data: more than 300,000 audio recordings of Russian speech with their transcripts. This makes it the biggest open bi-modal data collection for SER to date. It is annotated using a crowd-sourcing platform and includes two subsets: acted and real-life. The acted subset has a more balanced class distribution than the real-life subset, which consists of audio podcasts. The former is therefore suitable for model pre-training, while the latter is intended for fine-tuning, model testing, and validation. This paper describes the pre-processing routine, the annotation, and an experiment with a baseline model that demonstrates metrics obtainable with the Dusha data set.

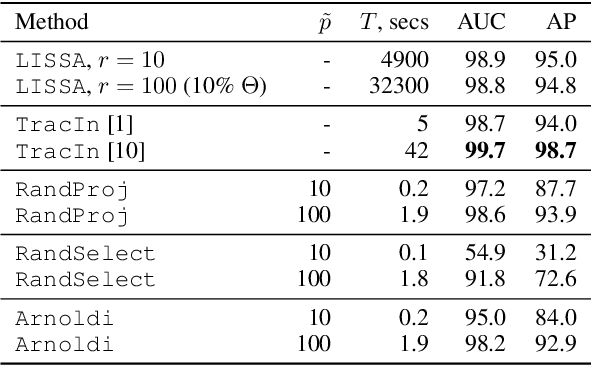

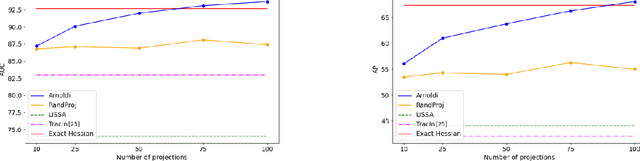

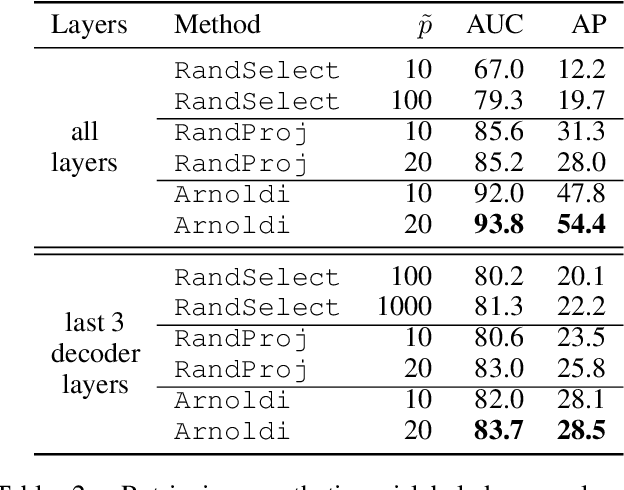

Scaling Up Influence Functions

Dec 06, 2021 · Andrea Schioppa, Polina Zablotskaia, David Vilar, Artem Sokolov

We address efficient calculation of influence functions for tracking predictions back to the training data. We propose and analyze a new approach to speeding up the inverse Hessian calculation based on Arnoldi iteration. With this improvement, we achieve, to the best of our knowledge, the first successful implementation of influence functions that scales to full-size (language and vision) Transformer models with several hundreds of millions of parameters. We evaluate our approach on image classification and sequence-to-sequence tasks with tens to a hundred million training examples. Our code will be available at https://github.com/google-research/jax-influence.

Bandits Don't Follow Rules: Balancing Multi-Facet Machine Translation with Multi-Armed Bandits

Oct 13, 2021 · Julia Kreutzer, David Vilar, Artem Sokolov

Training data for machine translation (MT) is often sourced from a multitude of large corpora that are multi-faceted in nature, e.g. containing contents from multiple domains or different levels of quality or complexity. Naturally, these facets do not occur with equal frequency, nor are they equally important for the test scenario at hand. In this work, we propose to optimize this balance jointly with MT model parameters to relieve system developers from manual schedule design. A multi-armed bandit is trained to dynamically choose between facets in a way that is most beneficial for the MT system. We evaluate it on three different multi-facet applications: balancing translationese and natural training data, or data from multiple domains or multiple language pairs. We find that bandit learning leads to competitive MT systems across tasks, and our analysis provides insights into its learned strategies and the underlying data sets.
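The idea of a bandit choosing which facet to sample from can be illustrated with a small sketch. The abstract does not name a specific bandit algorithm, so the EXP3 update below is an assumption, and the class `Exp3`, the facet names, and the fixed reward values are all hypothetical. In an actual MT setup the reward would come from a training signal such as a change in validation loss, not from a lookup table.

```python
import math
import random


class Exp3:
    """Minimal EXP3 bandit (an assumed choice; rewards must lie in [0, 1])."""

    def __init__(self, n_arms, gamma=0.1, seed=0):
        self.n_arms = n_arms
        self.gamma = gamma                    # exploration rate
        self.weights = [1.0] * n_arms
        self.rng = random.Random(seed)

    def probabilities(self):
        # Mix the weight-proportional distribution with uniform exploration.
        total = sum(self.weights)
        return [
            (1 - self.gamma) * w / total + self.gamma / self.n_arms
            for w in self.weights
        ]

    def choose(self):
        probs = self.probabilities()
        arm = self.rng.choices(range(self.n_arms), weights=probs, k=1)[0]
        return arm, probs[arm]

    def update(self, arm, prob, reward):
        # Importance-weighted reward estimate for the arm that was played.
        estimate = reward / prob
        self.weights[arm] *= math.exp(self.gamma * estimate / self.n_arms)


# Hypothetical facets with made-up, fixed rewards standing in for a
# training signal.
facets = ["translationese", "natural", "mixed"]
toy_reward = [0.2, 0.8, 0.5]

bandit = Exp3(n_arms=len(facets))
for _ in range(3000):
    arm, prob = bandit.choose()
    # In real training: draw a batch from facets[arm], take a gradient
    # step on the MT model, and measure the resulting reward.
    bandit.update(arm, prob, toy_reward[arm])

final_probs = bandit.probabilities()
best_facet = facets[max(range(len(facets)), key=lambda i: final_probs[i])]
print(best_facet, [round(p, 3) for p in final_probs])
```

Because each arm keeps at least `gamma / n_arms` probability, low-reward facets are still sampled occasionally, which matters when the usefulness of a facet changes during training.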

Don't Search for a Search Method -- Simple Heuristics Suffice for Adversarial Text Attacks

Oct 04, 2021 · Nathaniel Berger, Stefan Riezler, Artem Sokolov, Sebastian Ebert

Recently more attention has been given to adversarial attacks on neural networks for natural language processing (NLP). A central research topic has been the investigation of search algorithms and search constraints, accompanied by benchmark algorithms and tasks. We implement an algorithm inspired by zeroth order optimization-based attacks and compare with the benchmark results in the TextAttack framework. Surprisingly, we find that optimization-based methods do not yield any improvement in a constrained setup and slightly benefit from approximate gradient information only in unconstrained setups where search spaces are larger. In contrast, simple heuristics exploiting nearest neighbors without querying the target function yield substantial success rates in constrained setups, and nearly full success rate in unconstrained setups, at an order of magnitude fewer queries. We conclude from these results that current TextAttack benchmark tasks are too easy and constraints are too strict, preventing meaningful research on black-box adversarial text attacks.

Fixing exposure bias with imitation learning needs powerful oracles

Sep 17, 2021 · Luca Hormann, Artem Sokolov

We apply imitation learning (IL) to tackle the NMT exposure bias problem with error-correcting oracles, and evaluate an SMT lattice-based oracle which, despite its excellent performance in an unconstrained oracle translation task, turned out to be too pruned and idiosyncratic to serve as the oracle for IL.

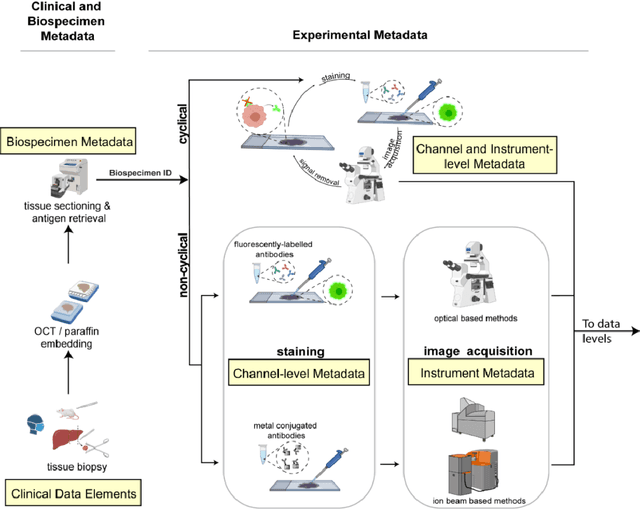

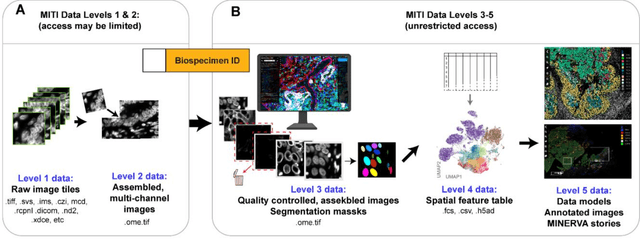

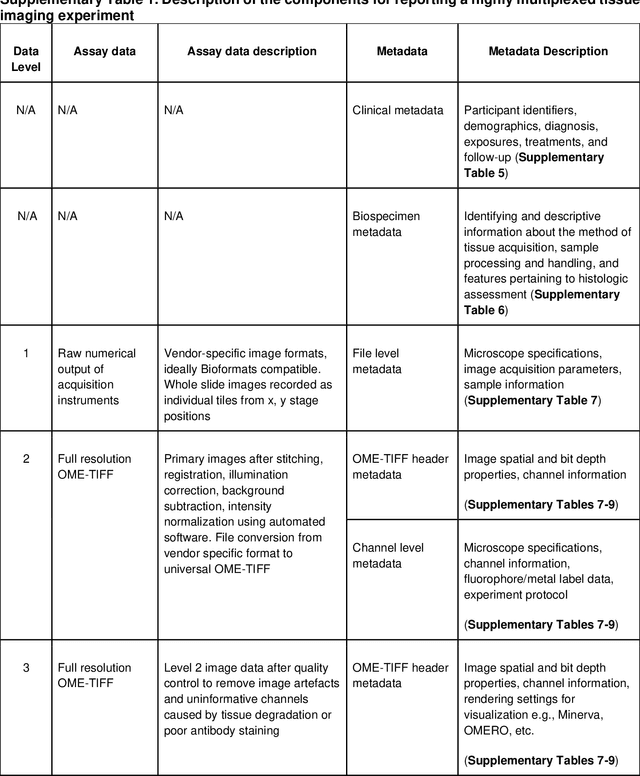

MITI Minimum Information guidelines for highly multiplexed tissue images

Aug 21, 2021 · Denis Schapiro, Clarence Yapp, Artem Sokolov, Sheila M. Reynolds, Yu-An Chen, Damir Sudar, Yubin Xie, Jeremy Muhlich, Raquel Arias-Camison, Milen Nikolov, Madison Tyler, Jia-Ren Lin, Erik A. Burlingame, Sarah Arena, Human Tumor Atlas Network, Young H. Chang, Samouil L Farhi, Vésteinn Thorsson, Nithya Venkatamohan, Julia L. Drewes, Dana Pe'er, David A. Gutman, Markus D. Herrmann, Nils Gehlenborg, Peter Bankhead, Joseph T. Roland, John M. Herndon, Michael P. Snyder, Michael Angelo, Garry Nolan, Jason Swedlow, Nikolaus Schultz, Daniel T. Merrick, Sarah A. Mazzilli, Ethan Cerami, Scott J. Rodig, Sandro Santagata, Peter K. Sorger

The imminent release of atlases combining highly multiplexed tissue imaging with single cell sequencing and other omics data from human tissues and tumors creates an urgent need for data and metadata standards compliant with emerging and traditional approaches to histology. We describe the development of a Minimum Information about highly multiplexed Tissue Imaging (MITI) standard that draws on best practices from genomics and microscopy of cultured cells and model organisms.

On the Impact of Word Error Rate on Acoustic-Linguistic Speech Emotion Recognition: An Update for the Deep Learning Era

Apr 20, 2021 · Shahin Amiriparian, Artem Sokolov, Ilhan Aslan, Lukas Christ, Maurice Gerczuk, Tobias Hübner, Dmitry Lamanov, Manuel Milling, Sandra Ottl, Ilya Poduremennykh, Evgeniy Shuranov, Björn W. Schuller

Text encodings from automatic speech recognition (ASR) transcripts and audio representations have long shown promise in speech emotion recognition (SER). Yet, it is challenging to explain the effect of each information stream on the SER systems. Further clarification is also required on the impact of ASR's word error rate (WER) on linguistic emotion recognition, both per se and in the context of fusion with acoustic information, in the age of deep ASR systems. In order to tackle the above issues, we create transcripts from the original speech by applying three modern ASR systems, including an end-to-end model trained with recurrent neural network-transducer loss, a model with connectionist temporal classification loss, and a wav2vec framework for self-supervised learning. Afterwards, we use pre-trained textual models to extract text representations from the ASR outputs and the gold standard. For extraction and learning of acoustic speech features, we utilise openSMILE, openXBoW, DeepSpectrum, and auDeep. Finally, we conduct decision-level fusion on both information streams -- acoustics and linguistics. Using the best development configuration, we achieve state-of-the-art unweighted average recall values of 73.6% and 73.8% on the speaker-independent development and test partitions of IEMOCAP, respectively.
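For readers unfamiliar with the metric reported above, unweighted average recall (UAR) is the mean of the per-class recalls, so each emotion class counts equally regardless of how many samples it has. The sketch below is a plain-Python illustration; the function name and the toy labels are hypothetical and are not taken from IEMOCAP or from the paper's code.

```python
from collections import defaultdict


def unweighted_average_recall(y_true, y_pred):
    """Mean of per-class recalls; every class has equal weight."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred in zip(y_true, y_pred):
        total[truth] += 1
        if truth == pred:
            correct[truth] += 1
    recalls = [correct[label] / total[label] for label in total]
    return sum(recalls) / len(recalls)


# Toy, imbalanced labels (hypothetical): four "ang" samples, two "hap".
y_true = ["ang", "ang", "ang", "ang", "hap", "hap"]
y_pred = ["ang", "ang", "ang", "hap", "hap", "ang"]

uar = unweighted_average_recall(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"UAR = {uar:.3f}, accuracy = {accuracy:.3f}")
```

Here recall is 3/4 for "ang" and 1/2 for "hap", giving a UAR of 0.625, while plain accuracy is 4/6. The gap between the two grows with class imbalance, which is why UAR is commonly reported for emotion recognition.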

Real-time Streaming Wave-U-Net with Temporal Convolutions for Multichannel Speech Enhancement

Apr 05, 2021 · Vasiliy Kuzmin, Fyodor Kravchenko, Artem Sokolov, Jie Geng

In this paper, we describe our participation in Task 1 of the ConferencingSpeech2021 challenge. The goal of this task was to develop a solution for real-time multi-channel speech enhancement. We propose a novel system for streaming speech enhancement. We employ a Wave-U-Net architecture with temporal convolutions in the encoder and decoder. We incorporate self-attention in the decoder to apply an attention mask retrieved from the skip connection to features from the down-blocks. We explore history cache mechanisms that work like hidden states in recurrent networks and implement them in the proposed solution. This allows us to run inference with a chunk length of 40 ms and a Real-Time Factor of 0.4 with the same precision.
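The relationship between chunk length and Real-Time Factor (RTF) can be made concrete with a little arithmetic. The sketch assumes the usual definition of RTF as processing time divided by the duration of the audio processed; the abstract does not spell out its definition, and the function names are hypothetical.

```python
def real_time_factor(processing_ms: float, audio_ms: float) -> float:
    """RTF under the common definition: compute time / audio duration."""
    return processing_ms / audio_ms


def processing_time_ms(chunk_ms: float, rtf: float) -> float:
    """Compute time spent per chunk at a given RTF."""
    return chunk_ms * rtf


# Figures quoted in the abstract: 40 ms chunks at RTF 0.4.
per_chunk_ms = processing_time_ms(chunk_ms=40.0, rtf=0.4)
print(f"about {per_chunk_ms:.1f} ms of compute per 40 ms chunk")

# An RTF below 1 means the system keeps up with the incoming stream.
keeps_up = real_time_factor(per_chunk_ms, 40.0) < 1.0
print("real-time capable:", keeps_up)
```

Under this definition, the quoted figures correspond to roughly 16 ms of compute for every 40 ms of incoming audio.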
