Profiling Visual Dynamic Complexity Using a Bio-Robotic Approach

May 20, 2021 · Qinbing Fu, Tian Liu, Xuelong Sun, Huatian Wang, Jigen Peng, Shigang Yue, Cheng Hu

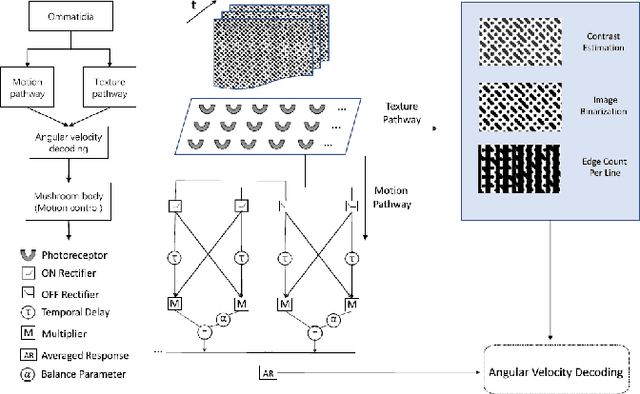

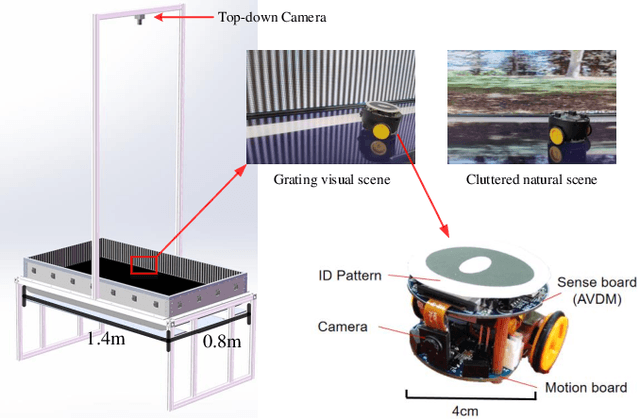

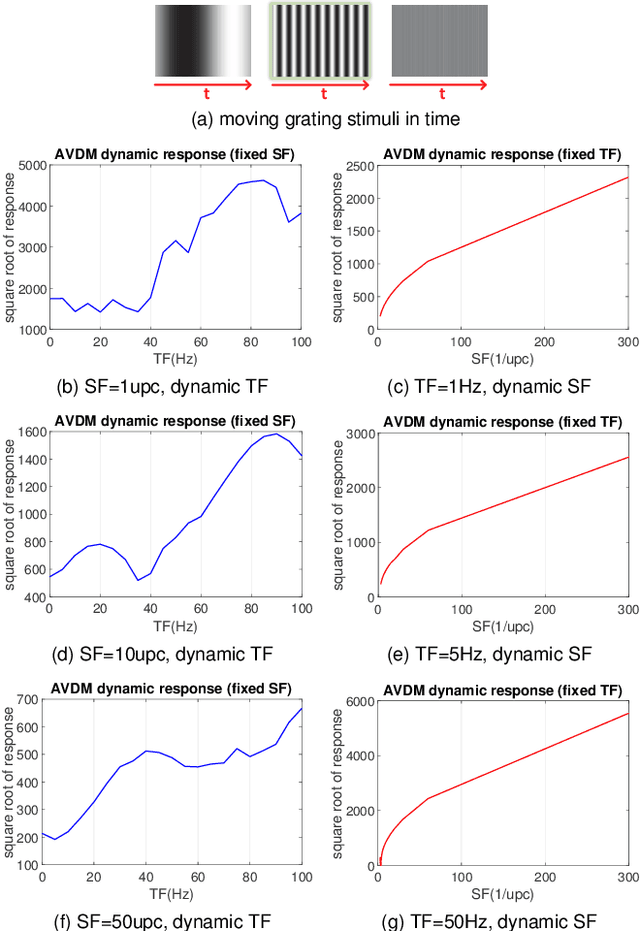

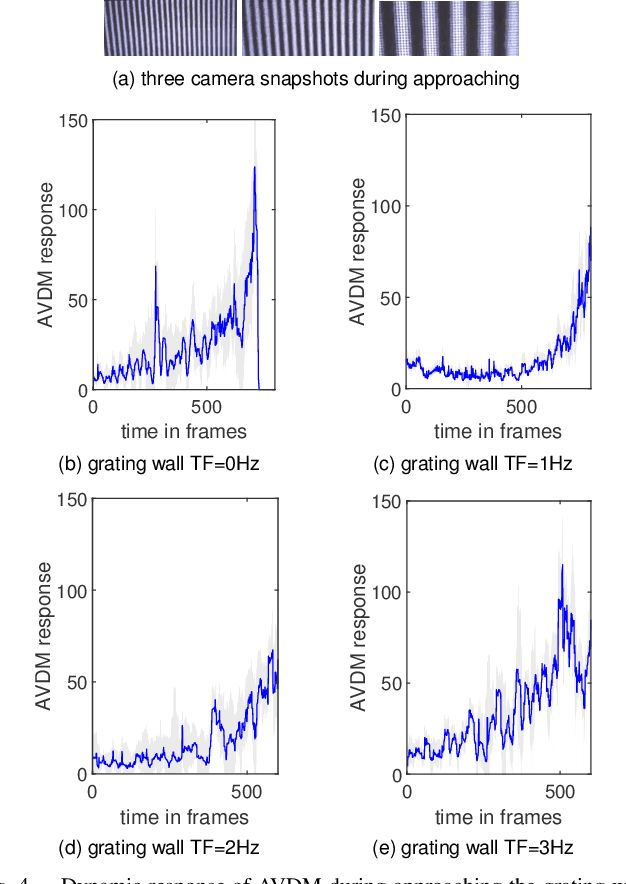

Visual dynamic complexity is a ubiquitous, hidden attribute of the visual world that every dynamic vision system faces. However, it is implicit and intractable, and has never been quantitatively described because of the difficulty of defining temporal features correlated with spatial image complexity. To fill this gap, we propose a novel bio-robotic approach to profiling visual dynamic complexity, which can be used as a new metric. Here we apply a state-of-the-art brain-inspired motion detection neural network model to explicitly profile such complexity associated with the spatial-temporal frequency (SF-TF) of a visual scene. This model is implemented for the first time in an autonomous micro-mobile robot that navigates freely in an arena with visual walls displaying moving sine-wave gratings or cluttered natural scenes. The neural dynamic response can make reasonable predictions of surrounding complexity since it can be mapped monotonically to the varying SF-TF of the visual scene. The experiments show that this approach adapts flexibly to different visual scenes when profiling dynamic complexity. We also use this metric as a predictor to investigate the performance boundary of another collision detection visual system operating in a changing environment with increasing dynamic complexity. This research demonstrates a new paradigm of using a biologically plausible visual processing scheme to estimate the dynamic complexity of a visual scene from both spatial and temporal perspectives, which could be beneficial for predicting input complexity when evaluating dynamic vision systems.
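To make the SF-TF parameterisation concrete, here is a minimal sketch of a drifting sine-wave grating. It is not taken from the paper: the function name `grating_row`, the units (cycles per pixel, cycles per second) and the `contrast` parameter are assumptions chosen for illustration.

```python
import math

def grating_row(width, spatial_freq, temporal_freq, t, contrast=1.0):
    """Return one row of luminance values in [0, 1] for a drifting grating.

    Hypothetical units: spatial_freq in cycles per pixel,
    temporal_freq in cycles per second, t in seconds.
    """
    return [
        0.5 + 0.5 * contrast
        * math.sin(2 * math.pi * (spatial_freq * x - temporal_freq * t))
        for x in range(width)
    ]

# One row of a grating with a 4-pixel period, sampled at t = 0.
row = grating_row(8, spatial_freq=0.25, temporal_freq=1.0, t=0.0)
```

Raising `spatial_freq` packs more stripes into the row, and raising `temporal_freq` makes the pattern drift faster; these are the two axes along which the abstract describes scene complexity varying.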

Attention and Prediction Guided Motion Detection for Low-Contrast Small Moving Targets

May 08, 2021 · Hongxin Wang, Jiannan Zhao, Huatian Wang, Cheng Hu, Jigen Peng, Shigang Yue

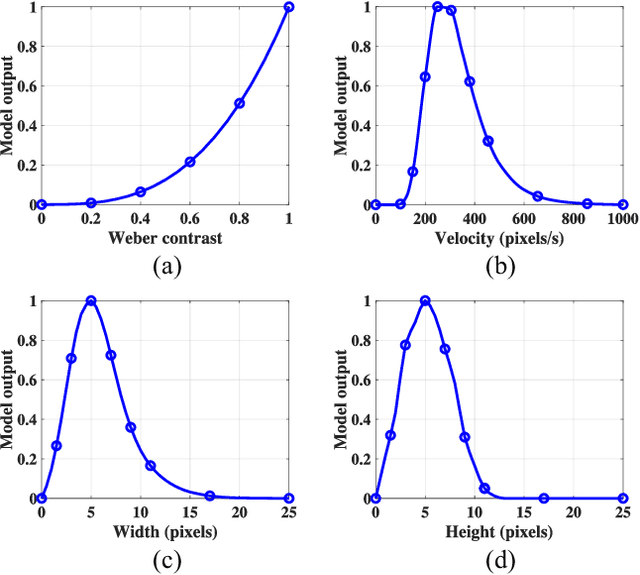

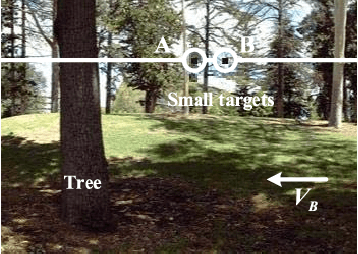

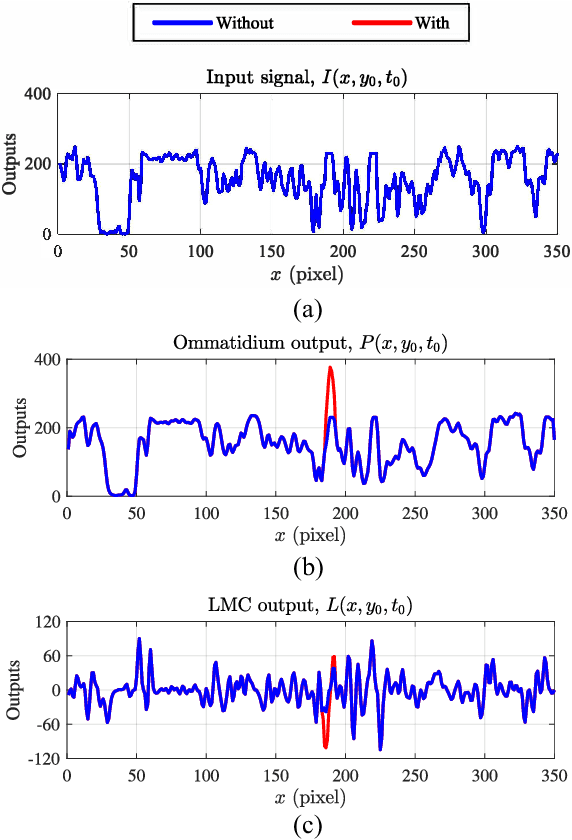

Small target motion detection within complex natural environments is an extremely challenging task for autonomous robots. Surprisingly, the visual systems of insects have evolved to be highly efficient in detecting mates and tracking prey, even though targets are as small as a few pixels in their visual fields. The excellent sensitivity to small target motion relies on a class of specialized neurons called small target motion detectors (STMDs). However, existing STMD-based models are heavily dependent on visual contrast and perform poorly in complex natural environments where small targets generally exhibit extremely low contrast against neighbouring backgrounds. In this paper, we develop an attention and prediction guided visual system to overcome this limitation. The developed visual system comprises three main subsystems, namely, an attention module, an STMD-based neural network, and a prediction module. The attention module searches for potential small targets in the predicted areas of the input image and enhances their contrast against the complex background. The STMD-based neural network receives the contrast-enhanced image and discriminates small moving targets from background false positives. The prediction module foresees future positions of the detected targets and generates a prediction map for the attention module. The three subsystems are connected in a recurrent architecture, allowing information to be processed sequentially to activate specific areas for small target detection. Extensive experiments on synthetic and real-world datasets demonstrate the effectiveness and superiority of the proposed visual system for detecting small, low-contrast moving targets in complex natural environments.

Does Time-Delay Feedback Matter to Small Target Motion Detection Against Complex Dynamic Environments?

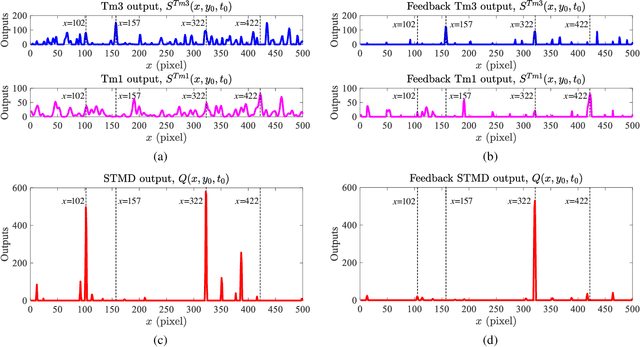

Dec 29, 2019 · Hongxin Wang, Huatian Wang, Jiannan Zhao, Cheng Hu, Jigen Peng, Shigang Yue

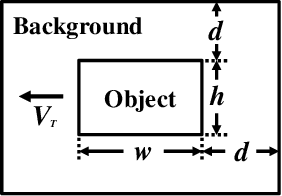

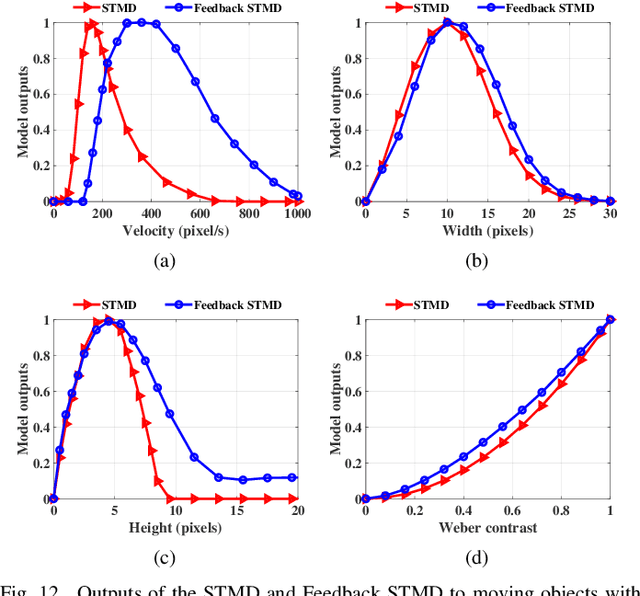

Discriminating small moving objects in complex visual environments is a significant challenge for autonomous micro robots that are generally limited in computational power. Relying on well-evolved visual systems, flying insects can effortlessly detect mates and track prey in rapid pursuits, despite target sizes as small as a few pixels in the visual field. Such exquisite sensitivity to small target motion is known to be supported by a class of specialized neurons named small target motion detectors (STMDs). Existing STMD-based models normally consist of four sequentially arranged neural layers interconnected through feedforward loops to extract motion information about small targets from raw visual inputs. However, the feedback loop, another important regulatory circuit for motion perception, has not been investigated in the STMD pathway, and its functional role in small target motion detection is not clear. In this paper, we assume the existence of the feedback and propose an STMD-based visual system with a feedback connection (Feedback STMD), where the system output is temporally delayed and then fed back to lower layers to mediate neural responses. We compare the properties of the visual system with and without the time-delay feedback loop, and discuss its effect on small target motion detection. The experimental results suggest that the Feedback STMD prefers fast-moving small targets, while significantly suppressing background features moving at lower velocities.
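The idea of delaying the output and feeding it back can be sketched in a few lines. This is only an illustration of a generic time-delay feedback loop on a 1-D signal, not the Feedback STMD model itself: the subtractive form, the rectification, and the parameters `delay` (in time steps, at least 1) and `gain` are all assumptions.

```python
from collections import deque

def feedback_response(inputs, delay=2, gain=0.5):
    """Apply a subtractive, time-delayed feedback loop to a 1-D signal.

    Hypothetical form: y[t] = max(0, x[t] - gain * y[t - delay]),
    with y treated as 0 before the first sample. Requires delay >= 1.
    """
    # Holds the outputs of the last `delay` steps, oldest first.
    history = deque([0.0] * delay, maxlen=delay)
    outputs = []
    for x in inputs:
        delayed = history[0]              # output from `delay` steps ago
        y = max(0.0, x - gain * delayed)  # rectified, feedback-suppressed response
        outputs.append(y)
        history.append(y)                 # the oldest entry drops off automatically
    return outputs

# A sustained input is damped once its own delayed output arrives.
print(feedback_response([1.0, 1.0, 1.0, 1.0]))  # [1.0, 1.0, 0.5, 0.5]
```

In this toy version a stimulus that persists at the same location is partly cancelled by its own delayed response, while a brief stimulus passes through before any feedback arrives.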

ColCOS$\Phi$: A Multiple Pheromone Communication System for Swarm Robotics and Social Insects Research

Jun 05, 2019 · Xuelong Sun, Tian Liu, Cheng Hu, Qinbing Fu, Shigang Yue

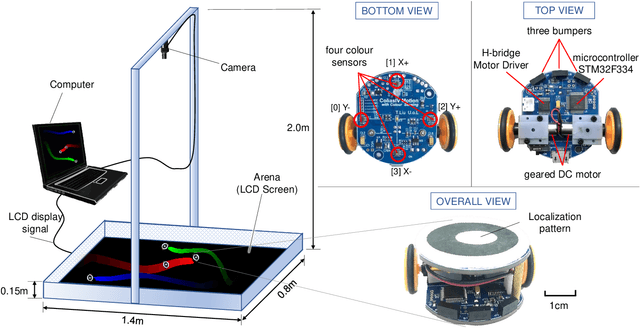

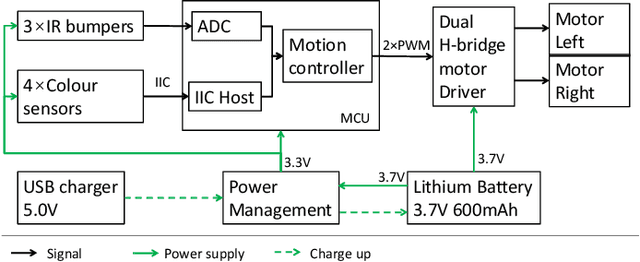

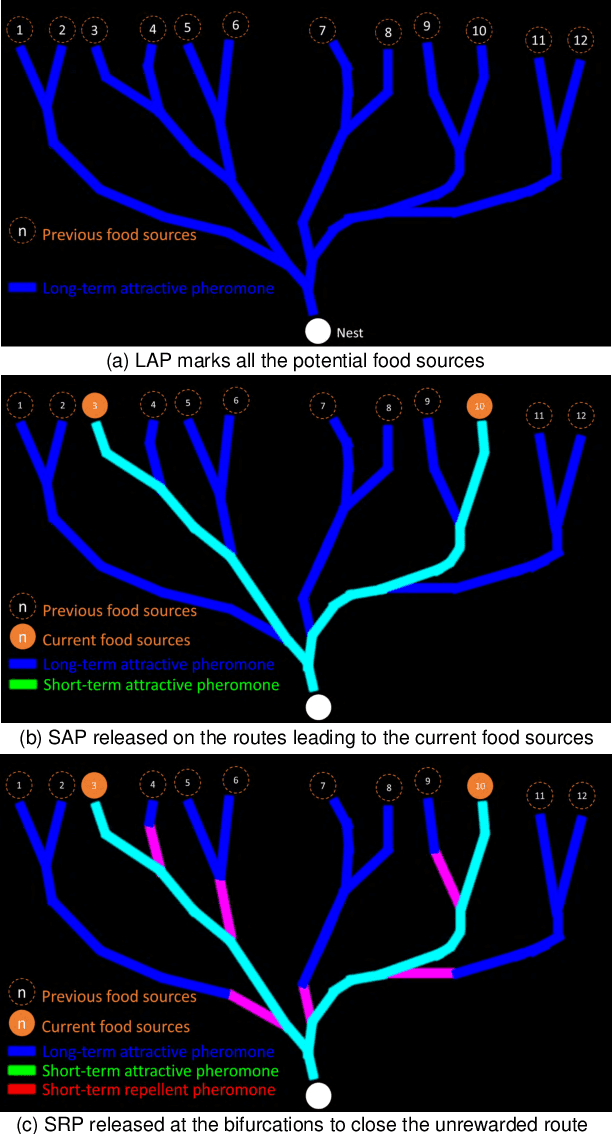

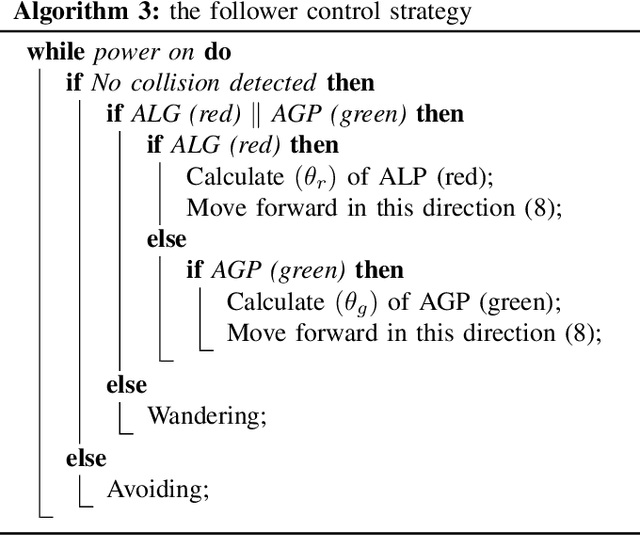

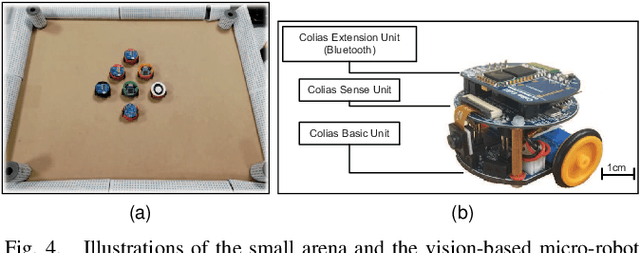

In the last few decades we have witnessed how the pheromones of social insects have become a rich source of inspiration for swarm robotics. By utilising virtual pheromones in physical swarm robot systems to coordinate individuals and realise direct and indirect inter-robot communication, as social insects do, stigmergic behaviour has emerged. However, many studies take only a single pheromone into account when solving swarm problems, which is not the case in real insects. In the real social insect world, diverse behaviours, complex collective performances and flexible transitions from one state to another are guided by different kinds of pheromones and their interactions. It is therefore an interesting question whether multiple-pheromone-based strategies can inspire swarm robotics research and, conversely, how the performance of swarm robots controlled by multiple pheromones can help explain the behaviours of social insects. To provide a reliable system for multiple pheromone studies, in this paper we propose and realise a multiple pheromone communication system called ColCOS$\Phi$. This system consists of a virtual pheromone sub-system, in which the multiple pheromones are represented by a colour image displayed on a screen, and a micro-robot platform designed for swarm robotics applications. Two case studies are undertaken to verify the effectiveness of this system: one is multiple-pheromone-based ant foraging and the other is the interaction of aggregation and alarm pheromones. The experimental results demonstrate the feasibility of ColCOS$\Phi$ and its great potential in directing swarm robotics and social insects research.

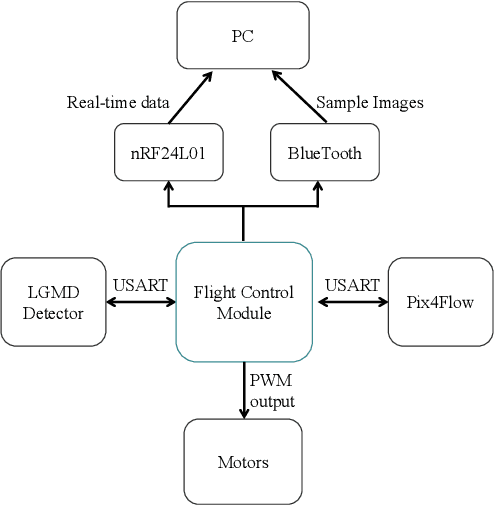

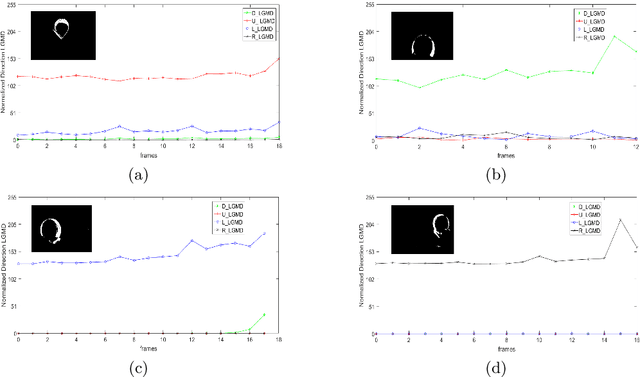

An LGMD Based Competitive Collision Avoidance Strategy for UAV

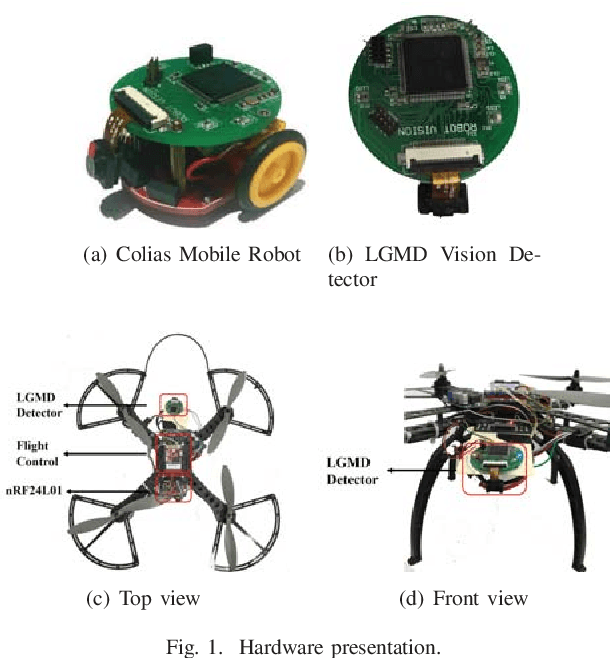

Apr 15, 2019 · Jiannan Zhao, Xingzao Ma, Qinbing Fu, Cheng Hu, Shigang Yue

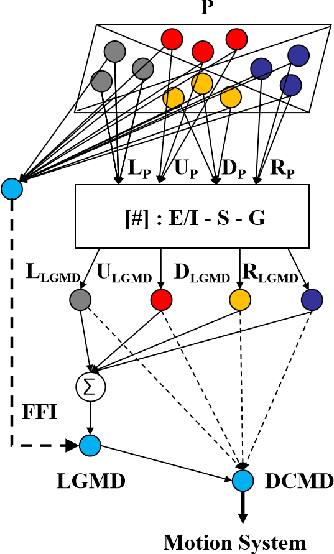

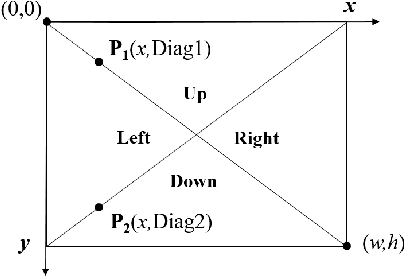

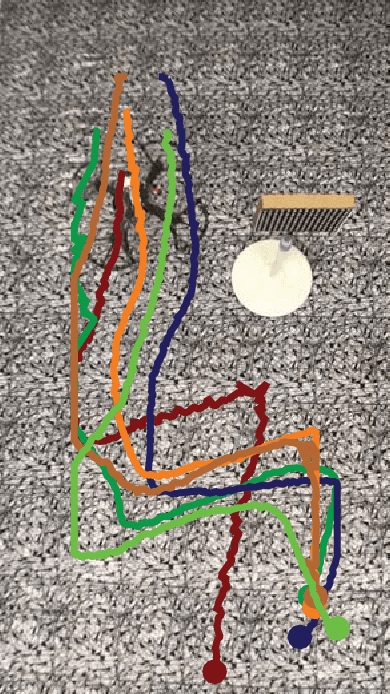

Building a reliable and efficient collision avoidance system for unmanned aerial vehicles (UAVs) is still a challenging problem. This research takes inspiration from locusts, which can fly in dense swarms for hundreds of miles without collision. In the locust's brain, a visual pathway of LGMD-DCMD (lobula giant movement detector and descending contra-lateral motion detector) has been identified as a collision perception system guiding fast collision avoidance, which makes it ideal for designing artificial vision systems. However, very few works have investigated its potential in real-world UAV applications. In this paper, we present an LGMD-based competitive collision avoidance method for UAV indoor navigation. In contrast to previous works, we divide the UAV's field of view into four subfields, each handled by an LGMD neuron. Four individual competitive LGMDs (C-LGMD) therefore compete to guide the directional collision avoidance of the UAV. With more degrees of freedom than ground robots and vehicles, the UAV can escape from collision along four cardinal directions (e.g. an object approaching from the left side triggers a rightward shift of the UAV). Our proposed method has been validated by both simulations and real-time quadcopter arena experiments.
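The competition between subfields can be illustrated with a short sketch. It assumes each subfield's LGMD has already produced a scalar activation; the subfield names, the winner-take-all rule and the `threshold` value are hypothetical and not taken from the paper.

```python
# Escape is directed away from the subfield that signals the threat.
OPPOSITE = {"left": "right", "right": "left", "up": "down", "down": "up"}

def escape_direction(activations, threshold=0.7):
    """Pick an escape direction from four per-subfield LGMD activations.

    `activations` maps a hypothetical subfield name to a scalar response.
    Returns None when no subfield exceeds the (assumed) threshold.
    """
    winner = max(activations, key=activations.get)  # strongest subfield wins
    if activations[winner] < threshold:
        return None                                 # no imminent collision
    return OPPOSITE[winner]

# An object looming on the left triggers a rightward shift.
print(escape_direction({"left": 0.9, "right": 0.2, "up": 0.1, "down": 0.3}))  # right
```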

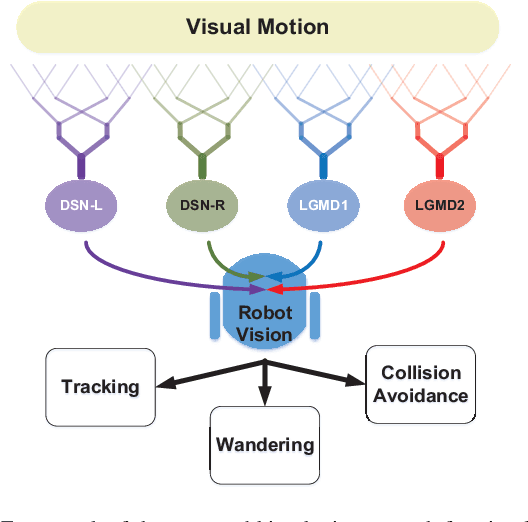

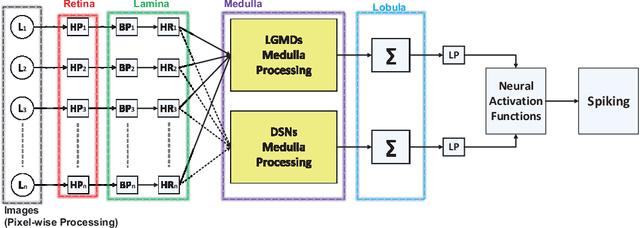

Synthetic Neural Vision System Design for Motion Pattern Recognition in Dynamic Robot Scenes

Apr 15, 2019 · Qinbing Fu, Cheng Hu, Pengcheng Liu, Shigang Yue

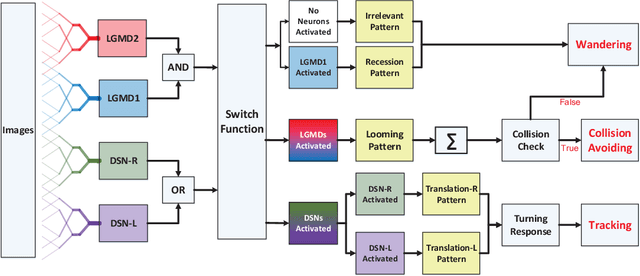

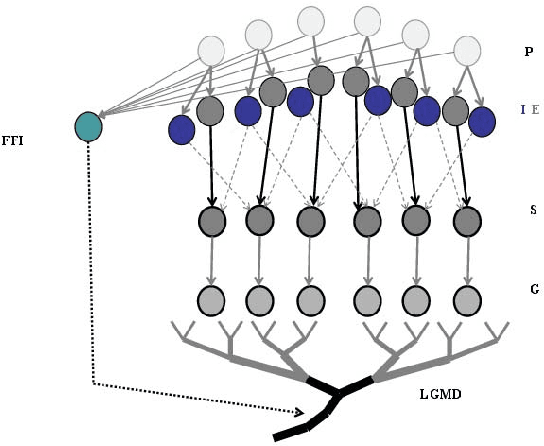

Insects have tiny brains but complicated visual systems for motion perception. A handful of insect visual neurons have been computationally modeled and successfully applied in robotics. How different neurons collaborate in motion perception remains an open question. In this paper, we propose a novel embedded vision system for autonomous micro-robots to recognize motion patterns in dynamic robot scenes. Here, the basic motion patterns are categorized into looming (proximity), recession, translation, and other irrelevant movements. The presented system is a synthetic neural network comprising two complementary sub-systems with four spiking neurons: the lobula giant movement detectors (LGMD1 and LGMD2) in locusts for sensing looming and recession, and the direction selective neurons (DSN-R and DSN-L) in flies for translational motion extraction. Images are transformed into spikes via spatiotemporal computations, which feed a switch function and decision-making mechanisms that invoke the proper robot behavior (collision avoidance, tracking or wandering) in dynamic robot scenes. Our robot experiments demonstrated two main contributions: (1) This neural vision system is effective at recognizing the basic motion patterns and triggering timely and proper robot behaviors in dynamic scenes. (2) The arena tests with multiple robots demonstrated its effectiveness in recognizing more abundant motion features for collision detection, which is a great improvement over former studies.

Towards Computational Models and Applications of Insect Visual Systems for Motion Perception: A Review

Apr 03, 2019 · Qinbing Fu, Hongxin Wang, Cheng Hu, Shigang Yue

Motion perception is a critical capability that determines many aspects of insects' lives, including avoiding predators and foraging. A good number of motion detectors have been identified in insects' visual pathways. Computational modelling of these motion detectors has not only provided effective solutions for artificial intelligence, but also benefited the understanding of complicated biological visual systems. These biological mechanisms, shaped by millions of years of evolutionary development, can form solid modules for constructing dynamic vision systems for future intelligent machines. This article reviews the computational motion perception models in the literature that originate from biological research on insects' visual systems. These motion perception models or neural networks comprise the looming-sensitive neuronal models of lobula giant movement detectors (LGMDs) in locusts, the translation-sensitive neural systems of direction selective neurons (DSNs) in fruit flies, bees and locusts, and the small target motion detectors (STMDs) in dragonflies and hoverflies. We also review the applications of these models to robots and vehicles. Through these modelling studies, we summarise the methodologies that generate different direction and size selectivity in motion perception. Finally, we discuss the integration of multiple systems and the hardware realisation of these bio-inspired motion perception models.

A Bio-inspired Collision Detector for Small Quadcopter

Jan 14, 2018 · Jiannan Zhao, Cheng Hu, Chun Zhang, Zhihua Wang, Shigang Yue

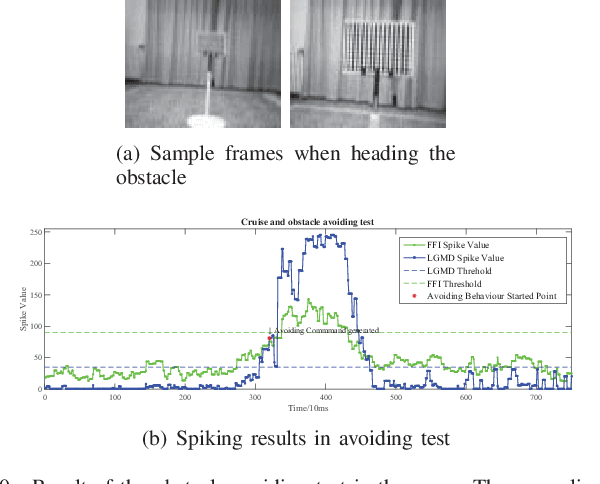

Sense-and-avoid capability enables insects to fly versatilely and robustly in dynamic, complex environments. Their biological principles are so practical and efficient that they have inspired humans to imitate them in flying machines. In this paper, we study a novel bio-inspired collision detector and its application on a quadcopter. The detector is inspired by LGMD neurons in locusts and implemented on an STM32F407 MCU. Compared to other collision detection methods applied on quadcopters, we focus on enhancing collision selectivity in a bio-inspired way that can considerably increase computing efficiency during an obstacle detection task, even in complex dynamic environments. We designed the quadcopter's response to imminent collisions and tested this bio-inspired system in an indoor arena. The experimental results demonstrated that the LGMD collision detector is feasible as a vision module for the quadcopter's collision avoidance task.

Collision Selective Visual Neural Network Inspired by LGMD2 Neurons in Juvenile Locusts

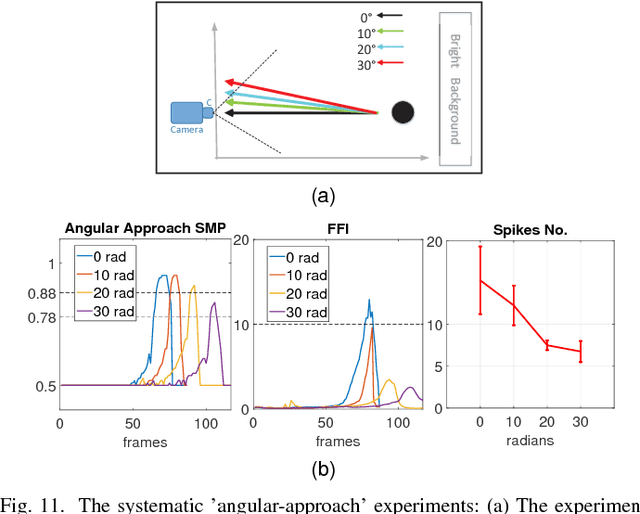

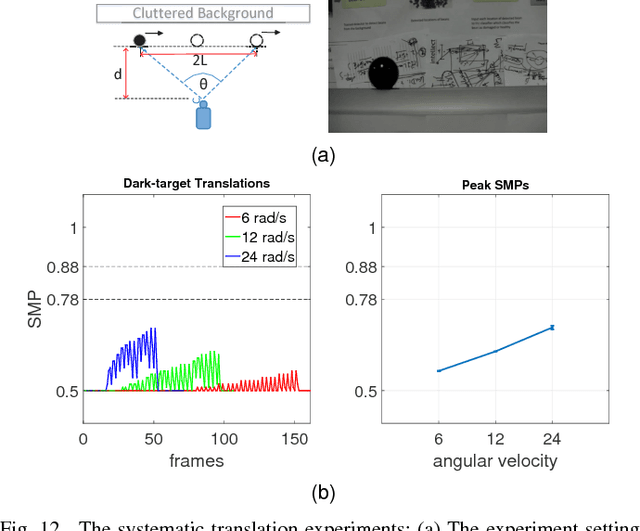

Dec 22, 2017 · Qinbing Fu, Cheng Hu, Shigang Yue

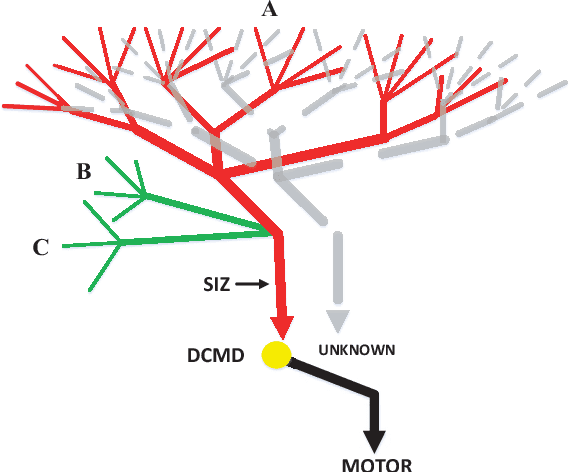

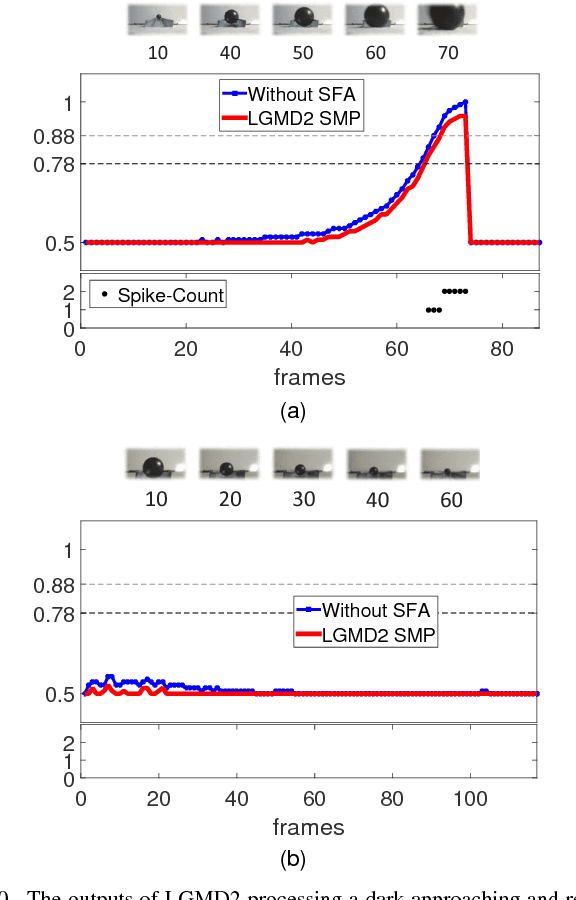

For autonomous robots in dynamic environments shared with humans, it is vital to detect impending collisions quickly and robustly. Biological visual systems, evolved over millions of years, may provide efficient solutions for collision detection in complex environments. In the visual brain of locusts, two Lobula Giant Movement Detectors, LGMD1 and LGMD2, have been identified, which respond vigorously to looming objects with high firing rates. Compared to LGMD1, LGMD2 matures early in juvenile locusts and is specifically selective to dark objects moving in depth against a bright background, while not responding to light objects embedded in a dark background, a situation similar to the one ground vehicles and robots are faced with. However, little work has been done on modeling LGMD2, let alone on its potential in robotics and other vision-based applications. In this article, we propose a novel way of modeling the LGMD2 neuron, with biased ON and OFF pathways splitting visual streams into parallel channels that encode brightness increments and decrements separately to achieve its selectivity. Moreover, we apply a biophysical mechanism of spike frequency adaptation to shape the looming selectivity in such a collision-detecting neuron model. The proposed visual neural network has been tested with systematic experiments, challenged against synthetic and real physical stimuli, as well as image streams from the sensor of a miniature robot. The results demonstrated that this framework is able to selectively detect looming dark objects embedded in bright backgrounds, which makes it ideal for ground mobile platforms. The robotic experiments also showed its robustness in collision detection: it performed well for near-range navigation in an arena with many obstacles. Its enhanced collision selectivity to dark approaching objects versus receding and translating ones has also been verified via systematic experiments.
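Splitting a visual stream into ON and OFF channels can be sketched as half-wave rectification of the frame-to-frame luminance change. This is an illustrative simplification rather than the paper's network: the function name `split_on_off` and the weights `on_weight` and `off_weight` are assumptions, with the OFF channel weighted more heavily here to mimic a preference for darkening.

```python
def split_on_off(prev_frame, curr_frame, on_weight=0.2, off_weight=1.0):
    """Split per-pixel luminance change into biased ON and OFF channels.

    Frames are flat lists of luminance values. ON carries brightness
    increments, OFF carries decrements; both are non-negative. The weights
    are hypothetical and only illustrate a bias towards the OFF pathway.
    """
    on, off = [], []
    for p, c in zip(prev_frame, curr_frame):
        diff = c - p
        on.append(on_weight * max(0.0, diff))     # brightening only
        off.append(off_weight * max(0.0, -diff))  # darkening only
    return on, off

# Pixel 0 brightens, pixel 1 darkens, pixel 2 is unchanged.
on, off = split_on_off([0.5, 0.5, 0.5], [1.0, 0.0, 0.5])
```

With these assumed weights, equal-sized brightening and darkening steps produce a five times larger response in the OFF channel, which is one simple way a model could favour dark objects against bright backgrounds.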
