Precoding and Beamforming Design for Intelligent Reconfigurable Surface-Aided Hybrid Secure Spatial Modulation

Feb 15, 2023 · Feng Shu, Lili Yang, Yan Wang, Xuehui Wang, Weiping Shi, Chong Shen, Jiangzhou Wang

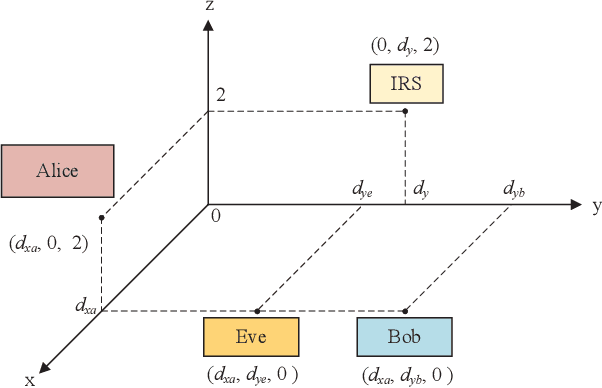

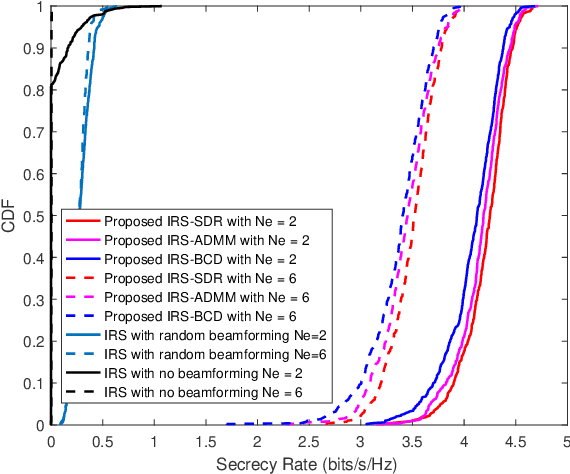

An intelligent reflecting surface (IRS) is an emerging wireless communication technology composed of a large number of low-cost passive elements with reconfigurable parameters. It reflects signals with a controllable phase shift and can therefore build a programmable communication environment. In this paper, to avoid the high hardware cost and energy consumption of spatial modulation (SM), an IRS-aided hybrid secure SM (SSM) system with a hybrid precoder is proposed. To improve the security performance, we formulate an optimization problem that maximizes the secrecy rate (SR) by jointly optimizing the beamforming at the IRS and the hybrid precoding at the transmitter. Because the SR has no closed-form expression, an approximate SR (ASR) expression is derived as the objective function. To improve the SR performance, three IRS beamforming methods are proposed: IRS alternating direction method of multipliers (IRS-ADMM), IRS block coordinate ascent (IRS-BCA) and IRS semi-definite relaxation (IRS-SDR). For the hybrid precoding design, an approximated secrecy rate-successive convex approximation (ASR-SCA) method and a cut-off rate-gradient ascent (COR-GA) method are proposed. Simulation results demonstrate that the proposed IRS-SDR and IRS-ADMM beamformers harvest substantial SR performance gains over IRS-BCA. In particular, the proposed IRS-ADMM and IRS-BCA have low complexity at the expense of a small performance loss compared with IRS-SDR. For hybrid precoding, the proposed ASR-SCA performs better than COR-GA in the high transmit power region.

Yuan 1.0: Large-Scale Pre-trained Language Model in Zero-Shot and Few-Shot Learning

Oct 12, 2021 · Shaohua Wu, Xudong Zhao, Tong Yu, Rongguo Zhang, Chong Shen, Hongli Liu, Feng Li, Hong Zhu, Jiangang Luo, Liang Xu, Xuanwei Zhang

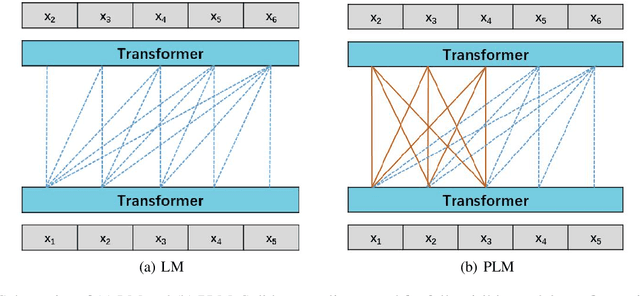

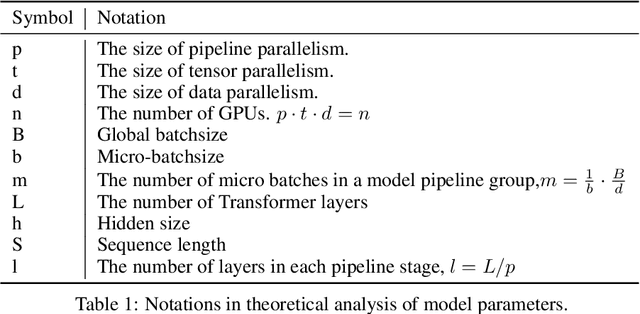

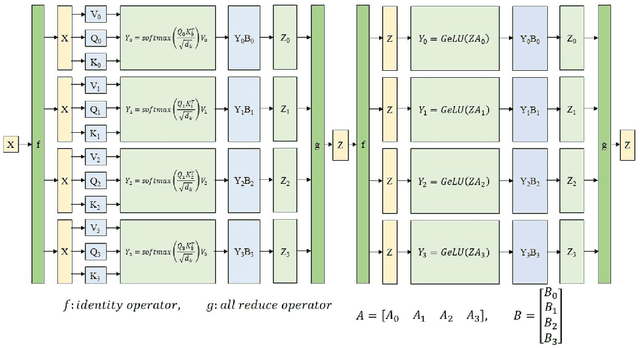

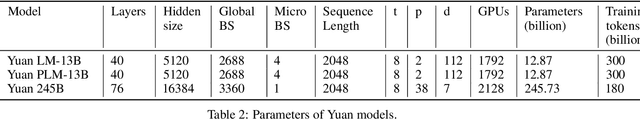

Recent work such as GPT-3 has demonstrated excellent Zero-Shot and Few-Shot learning performance on many natural language processing (NLP) tasks by scaling up model size, dataset size and the amount of computation. However, training a model like GPT-3 requires a huge amount of computational resources, which makes it challenging for researchers. In this work, we propose a method that incorporates large-scale distributed training performance into model architecture design. With this method, Yuan 1.0, the current largest singleton language model with 245B parameters, achieves excellent performance on thousands of GPUs during training, and state-of-the-art results on NLP tasks. A data processing method is designed to efficiently filter massive amounts of raw data. The current largest high-quality Chinese corpus, with 5 TB of high-quality text, is built based on this method. In addition, a calibration and label expansion method is proposed to improve the Zero-Shot and Few-Shot performance, and steady improvement is observed in the accuracy of various tasks. Yuan 1.0 shows strong natural language generation capability, and the generated articles are difficult to distinguish from human-written ones.
