Beamforming Inferring by Conditional WGAN-GP for Holographic Antenna Arrays

May 01, 2024 · Fenghao Zhu, Xinquan Wang, Chongwen Huang, Ahmed Alhammadi, Hui Chen, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

Beamforming with large holographic antenna arrays is one of the key enablers of next-generation wireless systems because it can significantly improve spectral efficiency. However, deploying large antenna arrays implies high algorithmic complexity and resource overhead at both the receiver and the transmitter. To address this issue, advanced technologies such as artificial intelligence have been developed to reduce beamforming overhead. Intuitively, if near-optimal beamforming can be implemented using only a tiny subset of the full channel information, the overhead for channel estimation and beamforming would be reduced significantly compared with traditional beamforming methods, which usually need full channel information and the inversion of large-dimensional matrices. In light of this idea, we propose a novel scheme that uses a Wasserstein generative adversarial network with gradient penalty (WGAN-GP) to infer the full beamforming matrices from very little channel information. Simulation results confirm that it achieves performance comparable to the weighted minimum mean-square error algorithm while reducing the overhead by over 50%.
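
For readers unfamiliar with the gradient penalty, the standard WGAN-GP critic loss can be written in conditional form as below. This is the generic textbook objective, not necessarily the exact loss used in the paper; the symbols $x$ (a reference beamforming matrix), $\tilde{x}$ (a generated one), $c$ (the partial channel information used as the condition), and $\lambda$ (the penalty weight) are naming assumptions made here for illustration.

$$
L_D = \mathbb{E}_{\tilde{x}}\big[D(\tilde{x} \mid c)\big] - \mathbb{E}_{x}\big[D(x \mid c)\big] + \lambda\, \mathbb{E}_{\hat{x}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x} \mid c) \rVert_2 - 1\big)^2\Big], \qquad \hat{x} = \epsilon x + (1-\epsilon)\tilde{x}, \quad \epsilon \sim \mathcal{U}[0,1]
$$

The last term pushes the critic's gradient norm toward 1 on points interpolated between real and generated samples, which is how WGAN-GP enforces the Lipschitz constraint without weight clipping.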

Robust Continuous-Time Beam Tracking with Liquid Neural Network

May 01, 2024 · Fenghao Zhu, Xinquan Wang, Chongwen Huang, Richeng Jin, Qianqian Yang, Ahmed Alhammadi, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

Millimeter-wave (mmWave) technology is increasingly recognized as a pivotal technology for sixth-generation communication networks due to the large amount of spectrum available at high frequencies. However, the huge overhead associated with beam training poses a significant challenge in mmWave communications, particularly in urban environments with high background noise. To reduce this overhead, we propose a novel solution for robust continuous-time beam tracking with a liquid neural network, which dynamically adjusts the narrow mmWave beams to ensure real-time beam alignment with mobile users. Through extensive simulations, we validate the effectiveness of our proposed method and demonstrate its superiority over existing state-of-the-art deep-learning-based approaches. Specifically, our scheme achieves up to 46.9% higher normalized spectral efficiency than the baselines when the user is moving at 5 m/s, demonstrating the potential of liquid neural networks to enhance mmWave mobile communication performance.
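
To illustrate what "continuous-time" means here, the following is a minimal sketch of one liquid time-constant (LTC) cell update using the fused semi-implicit Euler step from the LTC literature. It is not the paper's network: the weights are random and untrained, and the names `ltc_fused_step`, `run_ltc`, `tau`, and `A` are hypothetical choices for this example. The point it shows is that the step size `dt` is an input, so observations may arrive at irregular times.

```python
import math
import random


def ltc_fused_step(x, u, w_in, w_rec, bias, tau, A, dt):
    """One fused Euler step of dx/dt = -(1/tau + f) * x + f * A."""
    n = len(x)
    new_x = []
    for i in range(n):
        # Pre-activation from the current input and the recurrent state.
        pre = bias[i]
        pre += sum(w_in[i][k] * u[k] for k in range(len(u)))
        pre += sum(w_rec[i][j] * x[j] for j in range(n))
        f = 1.0 / (1.0 + math.exp(-pre))  # sigmoid gate in (0, 1)
        # Semi-implicit update; stable for any dt >= 0.
        num = x[i] + dt * f * A[i]
        den = 1.0 + dt * (1.0 / tau[i] + f)
        new_x.append(num / den)
    return new_x


def run_ltc(times, inputs_seq, n_hidden=4, seed=0):
    """Run an untrained LTC cell over irregularly sampled inputs."""
    rng = random.Random(seed)
    n_in = len(inputs_seq[0])
    w_in = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    w_rec = [[rng.uniform(-1, 1) for _ in range(n_hidden)] for _ in range(n_hidden)]
    bias = [0.0] * n_hidden
    tau = [1.0] * n_hidden   # assumed time constants
    A = [1.0] * n_hidden     # assumed bias (reversal) values
    x = [0.0] * n_hidden
    states = []
    prev_t = times[0]
    for t, u in zip(times, inputs_seq):
        dt = t - prev_t      # gap since the previous observation
        x = ltc_fused_step(x, u, w_in, w_rec, bias, tau, A, dt)
        states.append(x)
        prev_t = t
    return states


if __name__ == "__main__":
    ts = [0.0, 0.1, 0.35, 0.4, 1.0]           # irregular time stamps
    obs = [[math.sin(t)] for t in ts]         # toy scalar observation
    for t, s in zip(ts, run_ltc(ts, obs)):
        print(t, [round(v, 4) for v in s])
```

In a beam tracker, a trained readout layer would map the hidden state to a beam index or angle; that part is omitted here.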

Device-Free 3D Drone Localization in RIS-Assisted mmWave MIMO Networks

Apr 23, 2024 · Jiguang He, Charles Vanwynsberghe, Hui Chen, Chongwen Huang, Aymen Fakhreddine

In this paper, we investigate the potential of reconfigurable intelligent surfaces (RISs) in facilitating passive/device-free three-dimensional (3D) drone localization within existing cellular infrastructure operating at millimeter-wave (mmWave) frequencies and employing multiple antennas at the transceivers. The developed localization system operates in the bi-static mode without requiring direct communication between the drone and the base station. We analyze the theoretical performance limits via Fisher information analysis and Cramér-Rao lower bounds (CRLBs). Furthermore, we develop a low-complexity yet effective drone localization algorithm based on coordinate gradient descent and examine the impact of factors such as radar cross section (RCS) of the drone and training overhead on system performance. It is demonstrated that integrating RIS yields significant benefits over its RIS-free counterpart, as evidenced by both theoretical analyses and numerical simulations.
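
As a reminder of how a CRLB bounds localization error, the generic relation for any unbiased estimator $\hat{\mathbf{p}}$ of the drone position $\mathbf{p} \in \mathbb{R}^3$ is given below. The symbol $\mathbf{J}(\mathbf{p})$ for the position Fisher information matrix is notation assumed here; the paper's specific Fisher information expressions are not reproduced.

$$
\mathbb{E}\big[(\hat{\mathbf{p}} - \mathbf{p})(\hat{\mathbf{p}} - \mathbf{p})^{\mathsf{T}}\big] \succeq \mathbf{J}^{-1}(\mathbf{p}), \qquad \sqrt{\mathbb{E}\big[\lVert \hat{\mathbf{p}} - \mathbf{p} \rVert_2^2\big]} \;\geq\; \sqrt{\operatorname{tr}\big(\mathbf{J}^{-1}(\mathbf{p})\big)}
$$

The right-hand quantity is often called the position error bound, and it is the natural benchmark against which a practical algorithm such as coordinate gradient descent is compared.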

Fluid Antenna Relay Assisted Communication Systems Through Antenna Location Optimization

Mar 31, 2024 · Ruopeng Xu, Yixuan Chen, Jiawen Kang, Minrui Xu, Zhaohui Yang, Chongwen Huang, Niyato Dusit

In this paper, we investigate the problem of resource allocation for a fluid antenna relay (FAR) system with antenna location optimization. In the considered model, each user transmits information to a base station (BS) with the help of the FAR. The antenna location of the FAR is flexible and can be adapted to the dynamic location distribution of the users. We formulate a sum rate maximization problem that jointly optimizes the antenna location and bandwidth allocation subject to minimum rate requirements, a total bandwidth budget, and feasible antenna region constraints. To solve this problem, we obtain the optimal bandwidth in closed form. Based on the optimal bandwidth, the original problem is reduced to an antenna location optimization problem, and an alternating algorithm is proposed. Simulation results verify the effectiveness of the proposed algorithm and show that the sum rate can be increased by up to 125% compared to conventional schemes.

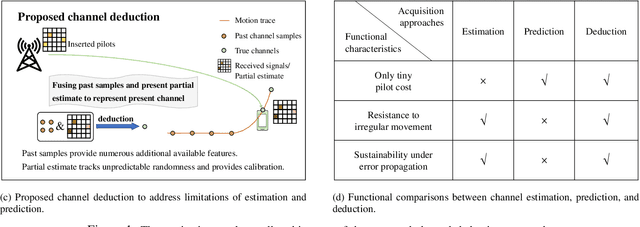

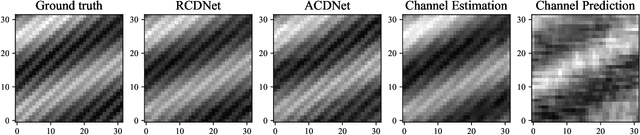

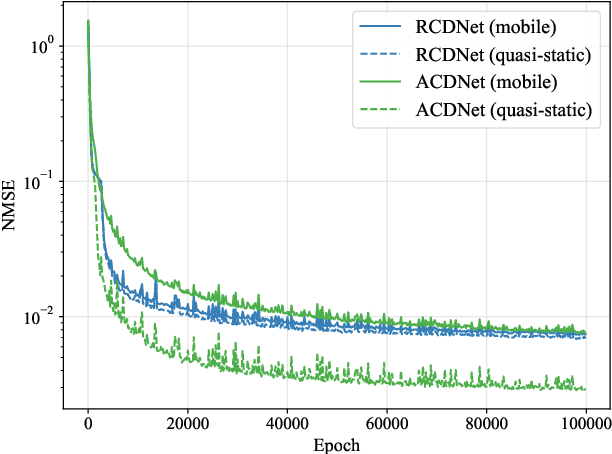

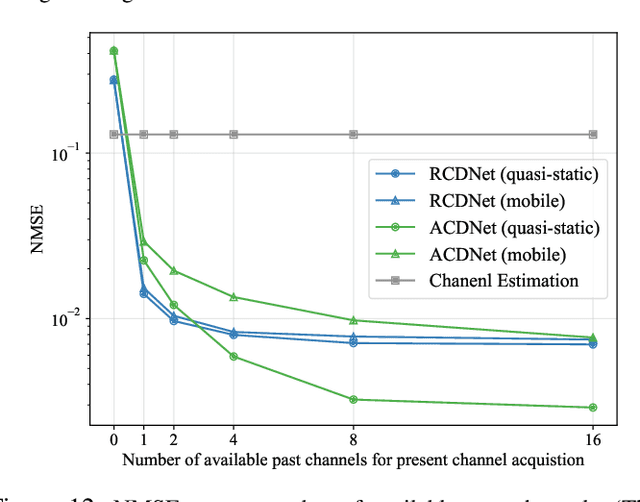

Channel Deduction: A New Learning Framework to Acquire Channel from Outdated Samples and Coarse Estimate

Mar 28, 2024 · Zirui Chen, Zhaoyang Zhang, Zhaohui Yang, Chongwen Huang, Mérouane Debbah

How can the pilot overhead required for channel estimation be reduced? How can dynamic channel changes and error propagation in channel prediction be handled? To jointly address these two critical issues in next-generation transceiver design, in this paper, we propose a novel framework named channel deduction for high-dimensional channel acquisition in multiple-input multiple-output (MIMO)-orthogonal frequency division multiplexing (OFDM) systems. Specifically, it makes use of the outdated channel information of past time slots, performs coarse estimation of the current channel with a relatively small number of pilots, and then fuses these two sources of information to obtain a complete representation of the present channel. The rationale is to align the current channel representation with both the latent channel features within the past samples and the coarse estimate of the current channel at the pilots, which, in a sense, behaves as a complementary combination of estimation and prediction and thus reduces the overall overhead. To fully exploit the highly nonlinear correlations in the time, space, and frequency domains, we resort to learning-based implementation approaches. By using the highly efficient complex-domain multilayer perceptron (MLP)-mixer for cross space-frequency domain representation and either recurrence-based or attention-based mechanisms for the past-present interaction, we design two different channel deduction neural networks (CDNets). We provide a general procedure of data collection, training, and deployment to standardize the application of CDNets. Comprehensive experimental evaluations of accuracy, robustness, and efficiency demonstrate the superiority of the proposed approach, which reduces the pilot overhead by up to 88.9% compared to state-of-the-art estimation approaches and enables continuous operation even under unknown user movement and error propagation.

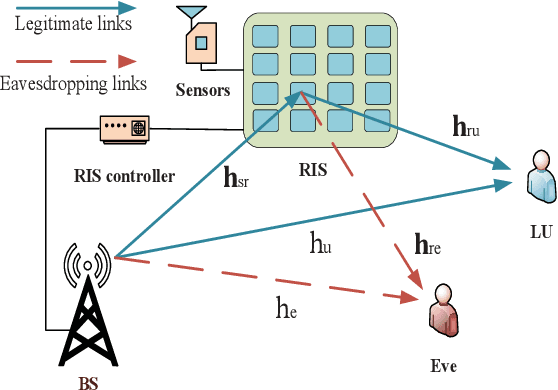

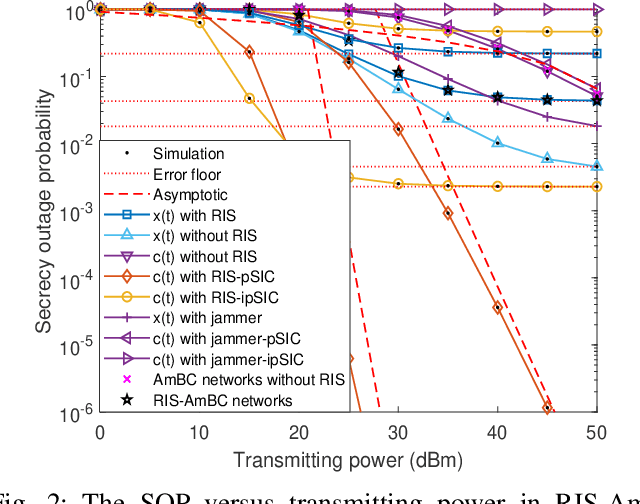

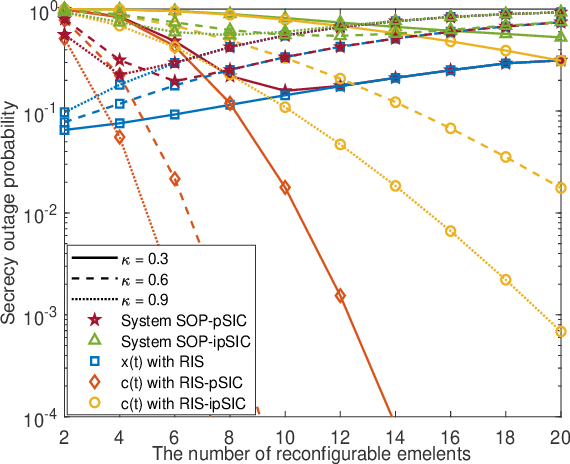

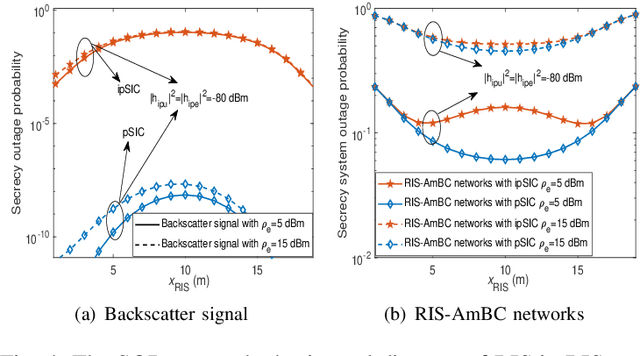

Secrecy Performance Analysis of RIS Assisted Ambient Backscatter Communication Networks

Mar 17, 2024 · Yingjie Pei, Xinwei Yue, Chongwen Huang, Zhiping Lu

Reconfigurable intelligent surface (RIS) and ambient backscatter communication (AmBC) have been envisioned as two promising technologies due to their high transmission reliability and energy efficiency. This paper investigates the secrecy performance of RIS-assisted AmBC networks. New closed-form and asymptotic expressions of the secrecy outage probability for RIS-AmBC networks are derived by taking into account both imperfect successive interference cancellation (ipSIC) and perfect SIC (pSIC) cases. On top of these, the secrecy diversity order of the legitimate user is obtained in the high signal-to-noise ratio region; it equals zero for ipSIC and is proportional to the number of RIS elements for pSIC. The secrecy throughput and energy efficiency are further examined to evaluate the secure effectiveness of RIS-AmBC networks. Numerical results are provided to verify the accuracy of the theoretical analyses and show that: i) The secrecy outage behavior of RIS-AmBC networks exceeds that of conventional AmBC networks; ii) Due to the mutual interference between direct and backscattering links, the number of RIS elements has an optimal value that minimises the secrecy system outage probability; and iii) Secrecy throughput and energy efficiency are strongly influenced by the reflecting coefficient and the eavesdropper's wiretapping ability.
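
For context, the two metrics named in this abstract are conventionally defined as follows. These are the standard physical-layer-security definitions, not the paper's closed-form results; $\gamma_B$ and $\gamma_E$ (the received SNRs or SINRs at the legitimate user and the eavesdropper), $R_s$ (the target secrecy rate), and $\rho$ (the transmit SNR) are symbols assumed here.

$$
C_s = \big[\log_2(1+\gamma_B) - \log_2(1+\gamma_E)\big]^{+}, \qquad P_{\text{out}} = \Pr\big(C_s < R_s\big), \qquad d = -\lim_{\rho \to \infty} \frac{\log P_{\text{out}}^{\infty}(\rho)}{\log \rho}
$$

A secrecy diversity order of zero, as reported for the ipSIC case, means the outage probability hits an error floor and stops decreasing as the SNR grows.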

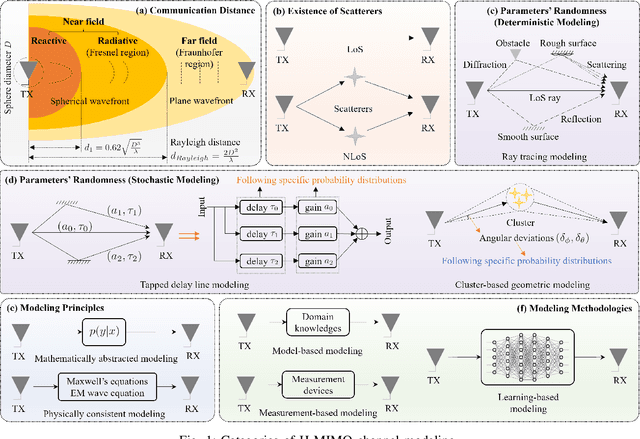

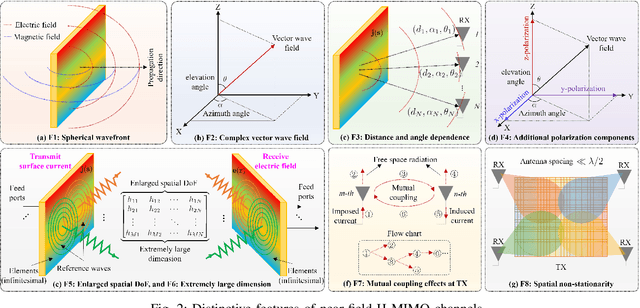

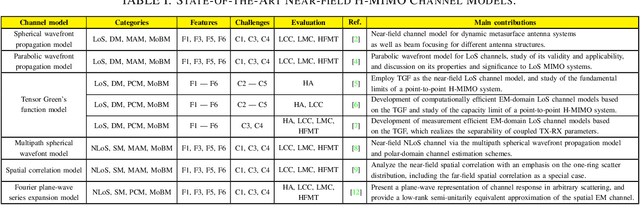

Near-Field Channel Modeling for Holographic MIMO Communications

Mar 16, 2024 · Tierui Gong, Li Wei, Chongwen Huang, George C. Alexandropoulos, Mérouane Debbah, Chau Yuen

Empowered by the latest progress in innovative metamaterials/metasurfaces and advanced antenna technologies, holographic multiple-input multiple-output (H-MIMO) emerges as a promising technology to fulfill the extreme goals of sixth-generation (6G) wireless networks. The antenna arrays used in H-MIMO comprise massive (possibly extremely large) numbers of antenna elements, spaced less than half a wavelength apart and integrated into a compact space, realizing an almost continuous aperture. Thanks to the expected low cost, size, weight, and power consumption, such apertures are expected to be widely fabricated for near-field communications. In addition, the physical features of H-MIMO enable manipulations directly in the electromagnetic (EM) wave domain and spatial multiplexing. To fully leverage this potential, near-field H-MIMO channel modeling, especially from the EM perspective, is of paramount significance. In this article, we overview near-field H-MIMO channel models, elaborating on the various modeling categories and their respective features, as well as their challenges and evaluation criteria. We also present EM-domain channel models that address the inherent computational and measurement complexities. Finally, the article concludes with a set of future research directions on the topic.

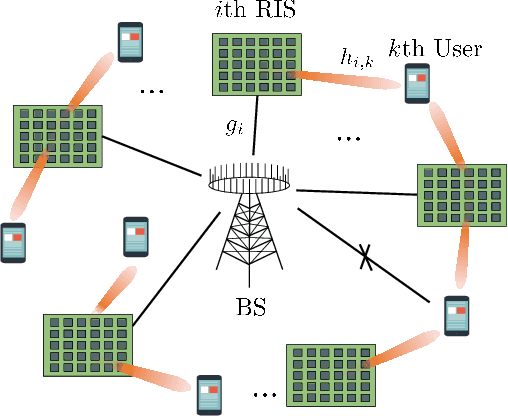

Low-Complexity Beam Training for Multi-RIS-Assisted Multi-User Communications

Mar 14, 2024 · Yuan Xu, Chongwen Huang, Wei Li, Zhaohui Yang, Xiaoming Chen, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

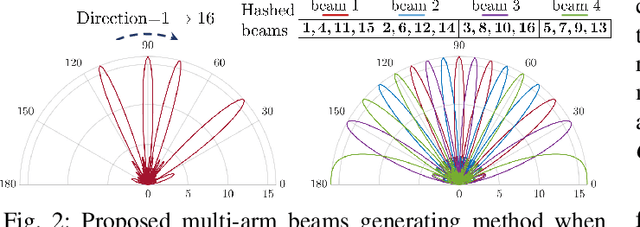

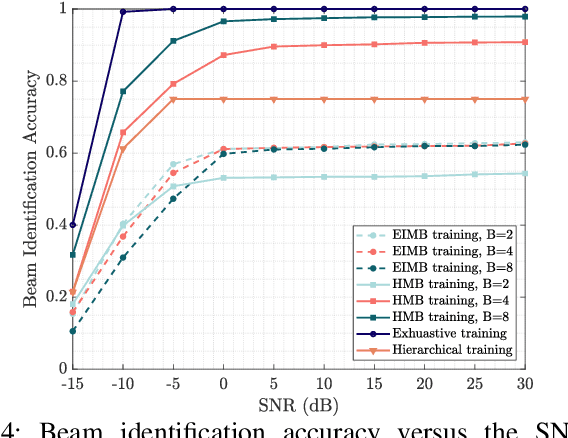

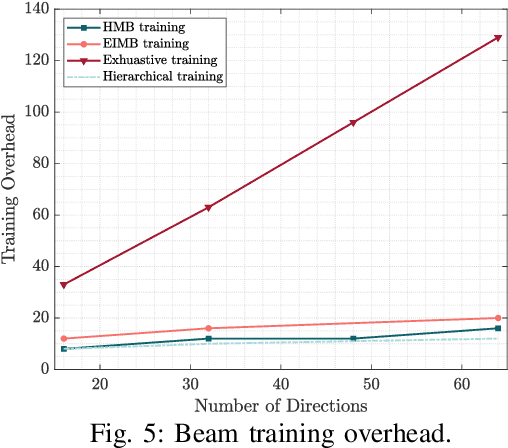

In this paper, we investigate the beam training problem in a multi-user millimeter wave (mmWave) communication system, where multiple reconfigurable intelligent surfaces (RISs) are deployed to improve the coverage and the achievable rate. However, existing beam training techniques in mmWave systems suffer from high complexity (i.e., exponential order) and low identification accuracy. To address these problems, we propose a novel hashing multi-arm beam (HMB) training scheme that reduces the training complexity to logarithmic order while maintaining high accuracy. Specifically, we first design a generation mechanism for HMB codebooks. Then, we propose a demultiplexing algorithm based on soft decisions to distinguish signals from different RIS reflective links. Finally, we use a multi-round voting mechanism to align the beams. Simulation results show that the proposed HMB training scheme enables simultaneous training for multiple RISs and multiple users, and reduces the beam training overhead to the logarithmic level. Moreover, the results show that the proposed scheme improves the identification accuracy by at least 20% compared to existing beam training techniques.
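
The hash-then-vote idea can be sketched with a toy model. The code below is a heavily simplified illustration, not the paper's HMB scheme: it assumes a single noise-free path, one user, no RIS, and hard rather than soft decisions, and every name in it (`hashed_beam_training`, `n_codewords`, and so on) is hypothetical. Each round randomly hashes all candidate directions into a few multi-arm codewords, measures one power value per codeword, and votes for every direction covered by the strongest codeword.

```python
import random


def hashed_beam_training(true_idx, n_dirs=256, n_codewords=8, n_rounds=6, seed=0):
    """Toy hash-and-vote beam search over n_dirs candidate directions."""
    rng = random.Random(seed)
    votes = [0] * n_dirs
    for _ in range(n_rounds):
        # Random hash for this round: shuffle the directions and deal them
        # out evenly, so each codeword has n_dirs / n_codewords arms.
        perm = list(range(n_dirs))
        rng.shuffle(perm)
        codeword_of = [0] * n_dirs
        for pos, d in enumerate(perm):
            codeword_of[d] = pos % n_codewords
        # Noise-free measurement: only the codeword with an arm on the
        # true direction receives power.
        power = [0.0] * n_codewords
        power[codeword_of[true_idx]] = 1.0
        best = max(range(n_codewords), key=power.__getitem__)
        # Hard decision: every direction in the strongest codeword gets a vote.
        for d in range(n_dirs):
            if codeword_of[d] == best:
                votes[d] += 1
    estimate = max(range(n_dirs), key=votes.__getitem__)
    return estimate, votes


if __name__ == "__main__":
    est, votes = hashed_beam_training(true_idx=37)
    print("estimated direction:", est)
    print("votes for true direction:", votes[37])
    print("measurements used:", 8 * 6, "vs exhaustive:", 256)
```

With these assumed defaults the search uses 48 measurements instead of 256. A wrong direction can tie with the true one only if it lands in the same codeword in every round, which becomes unlikely as the number of rounds grows.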

Coverage and Rate Analysis for Integrated Sensing and Communication Networks

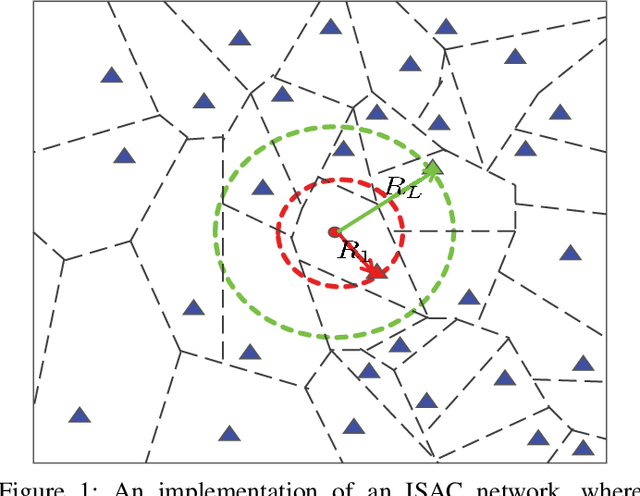

Mar 13, 2024 · Xu Gan, Chongwen Huang, Zhaohui Yang, Xiaoming Chen, Jiguang He, Zhaoyang Zhang, Chau Yuen, Yong Liang Guan, Mérouane Debbah

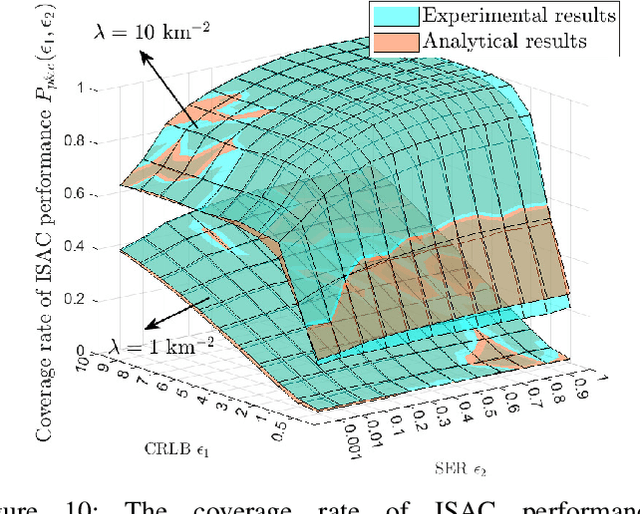

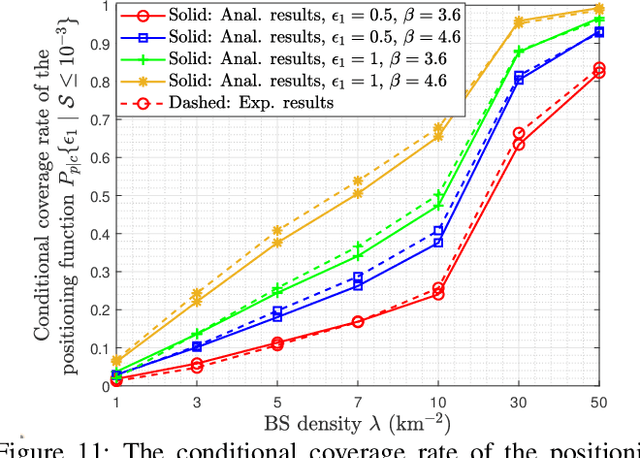

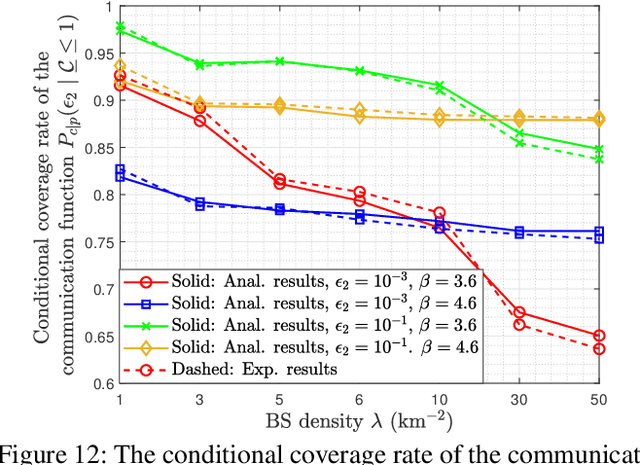

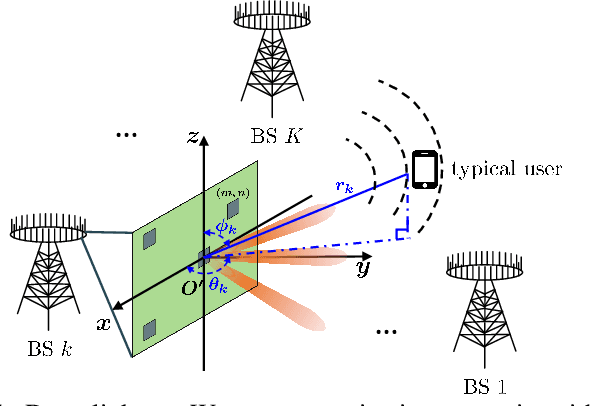

Integrated sensing and communication (ISAC) is increasingly recognized as a pivotal technology for next-generation cellular networks, offering mutual benefits in both sensing and communication capabilities. This advancement necessitates a re-examination of the fundamental limits within networks where these two functions coexist via shared spectrum and infrastructures. However, traditional stochastic geometry-based performance analyses are confined to either communication or sensing networks separately. This paper bridges this gap by introducing a generalized stochastic geometry framework in ISAC networks. Based on this framework, we define and calculate the coverage and ergodic rate of sensing and communication performance under resource constraints. Then, we shed light on the fundamental limits of ISAC networks by presenting theoretical results for the coverage rate of the unified performance, taking into account the coupling effects of dual functions in coexistence networks. Further, we obtain the analytical formulations for evaluating the ergodic sensing rate constrained by the maximum communication rate, and the ergodic communication rate constrained by the maximum sensing rate. Extensive numerical results validate the accuracy of all theoretical derivations, and also indicate that denser networks significantly enhance ISAC coverage. Specifically, increasing the base station density from $1$ $\text{km}^{-2}$ to $10$ $\text{km}^{-2}$ can boost the ISAC coverage rate from $1.4\%$ to $39.8\%$. Further, results also reveal that with the increase of the constrained sensing rate, the ergodic communication rate improves significantly, but the reverse is not obvious.

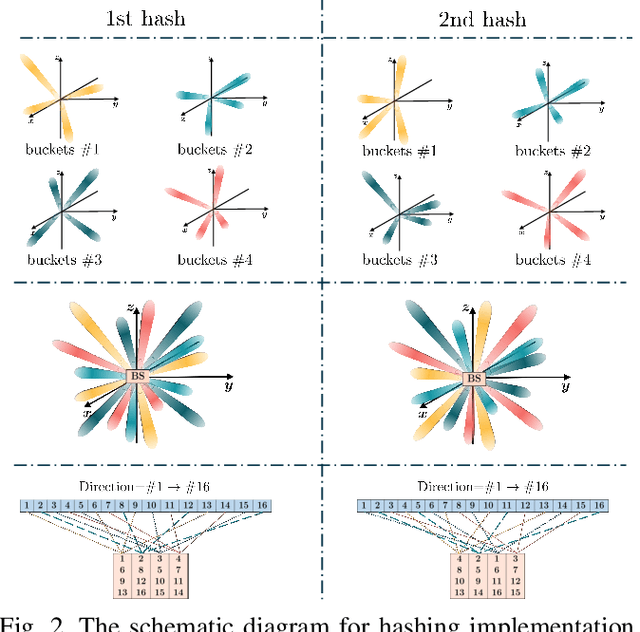

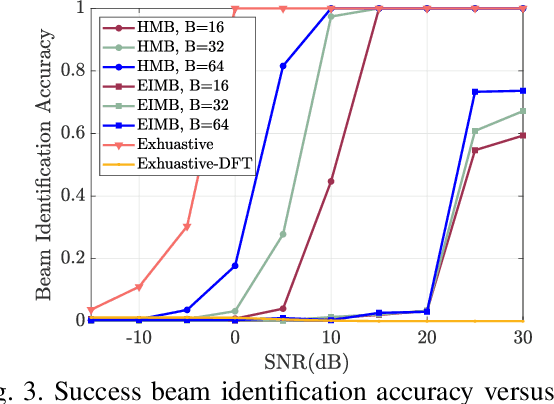

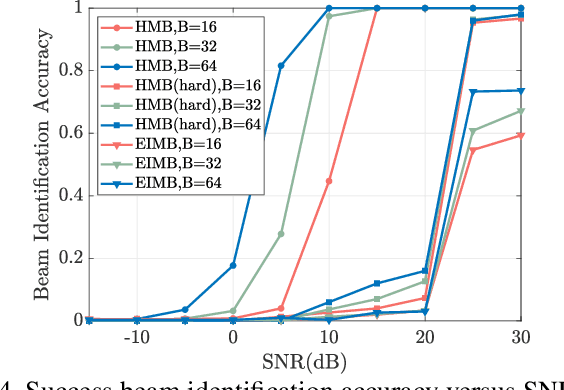

Hashing Beam Training for Near-Field Communications

Mar 10, 2024 · Yuan Xu, Wei Li, Chongwen Huang, Chen Zhu, Zhaohui Yang, Jun Yang, Jiguang He, Zhaoyang Zhang, Mérouane Debbah

In this paper, we investigate the millimeter-wave (mmWave) near-field beam training problem of finding the correct beam direction. To address the high complexity and low identification accuracy of existing beam training techniques, we propose an efficient hashing multi-arm beam (HMB) training scheme for the near-field scenario. Specifically, we first design a set of sparse bases based on the polar-domain sparsity of the near-field channel. Then, random hash functions are chosen to construct the near-field multi-arm beam training codebook. Each multi-arm beam codeword is scanned in a time slot until all the predefined codewords have been traversed. Finally, soft decision and voting methods are applied to distinguish the signals from different base stations and obtain correctly aligned beams. Simulation results show that our proposed near-field HMB training method reduces the beam training overhead to the logarithmic level and achieves 96.4% of the identification accuracy of exhaustive beam training. Moreover, we also verify its applicability in the far-field scenario.
