Robust Continuous-Time Beam Tracking with Liquid Neural Network

May 01, 2024 · Fenghao Zhu, Xinquan Wang, Chongwen Huang, Richeng Jin, Qianqian Yang, Ahmed Alhammadi, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

Millimeter-wave (mmWave) technology is increasingly recognized as a pivotal technology for sixth-generation communication networks due to the large amount of available spectrum at high frequencies. However, the huge overhead associated with beam training poses a significant challenge in mmWave communications, particularly in urban environments with high background noise. To reduce this overhead, we propose a novel solution for robust continuous-time beam tracking with a liquid neural network, which dynamically adjusts the narrow mmWave beams to ensure real-time beam alignment with mobile users. Through extensive simulations, we validate the effectiveness of our proposed method and demonstrate its superiority over existing state-of-the-art deep-learning-based approaches. Specifically, our scheme achieves up to 46.9% higher normalized spectral efficiency than the baselines when the user is moving at 5 m/s, demonstrating the potential of liquid neural networks to enhance mmWave mobile communication performance.

Beamforming Inferring by Conditional WGAN-GP for Holographic Antenna Arrays

May 01, 2024 · Fenghao Zhu, Xinquan Wang, Chongwen Huang, Ahmed Alhammadi, Hui Chen, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

Beamforming with large holographic antenna arrays is one of the key enablers for the next generation of wireless systems, as it can significantly improve spectral efficiency. However, deploying large antenna arrays implies high algorithm complexity and resource overhead at both the receiver and the transmitter. To address this issue, advanced technologies such as artificial intelligence have been developed to reduce beamforming overhead. Intuitively, if near-optimal beamforming can be implemented using only a tiny subset of the full channel information, the overhead for channel estimation and beamforming would be reduced significantly compared with traditional beamforming methods, which usually need full channel information and the inversion of large-dimensional matrices. In light of this idea, we propose a novel scheme that utilizes a Wasserstein generative adversarial network with gradient penalty to infer the full beamforming matrices from very little channel information. Simulation results confirm that it achieves performance comparable to that of the weighted minimum mean-square error algorithm, while reducing the overhead by over 50%.
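For readers unfamiliar with the gradient-penalty formulation, the standard conditional WGAN-GP objectives can be written as follows. This is the generic textbook form, not necessarily the exact loss used in the paper; the symbols $W$ (a reference beamforming matrix), $c$ (the partial channel information used as the condition), $z$ (latent noise), $G$, $D$, and $\lambda$ are notational assumptions introduced here for illustration.

$$
L_D = \mathbb{E}_{z}\big[D(G(z, c) \mid c)\big] - \mathbb{E}_{W}\big[D(W \mid c)\big] + \lambda\, \mathbb{E}_{\hat{W}}\Big[\big(\lVert \nabla_{\hat{W}} D(\hat{W} \mid c) \rVert_2 - 1\big)^2\Big],
\qquad
L_G = -\,\mathbb{E}_{z}\big[D(G(z, c) \mid c)\big],
$$

where $\hat{W} = \epsilon W + (1 - \epsilon)\, G(z, c)$ with $\epsilon \sim \mathcal{U}[0, 1]$ is a random interpolation between a real and a generated sample. The penalty term pushes the critic's gradient norm toward 1 along these interpolations, which softly enforces the 1-Lipschitz constraint required by the Wasserstein distance.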

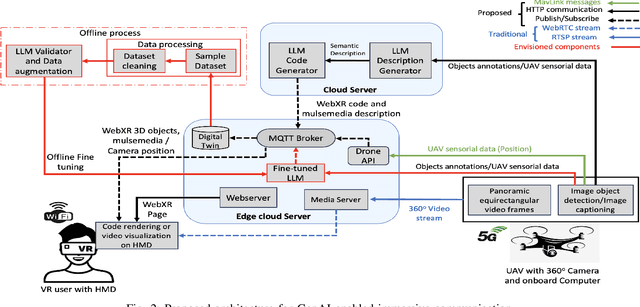

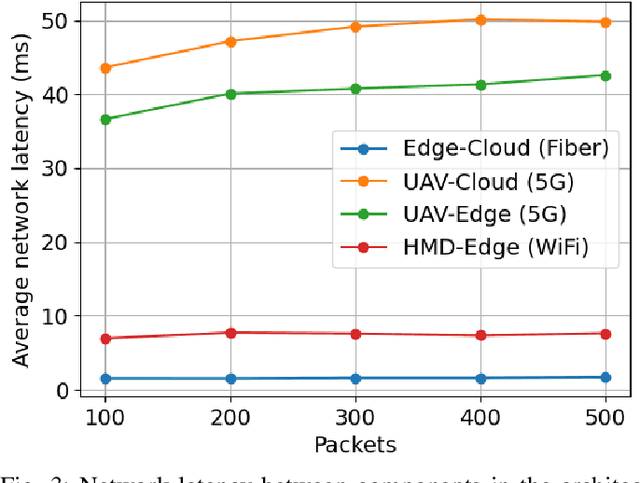

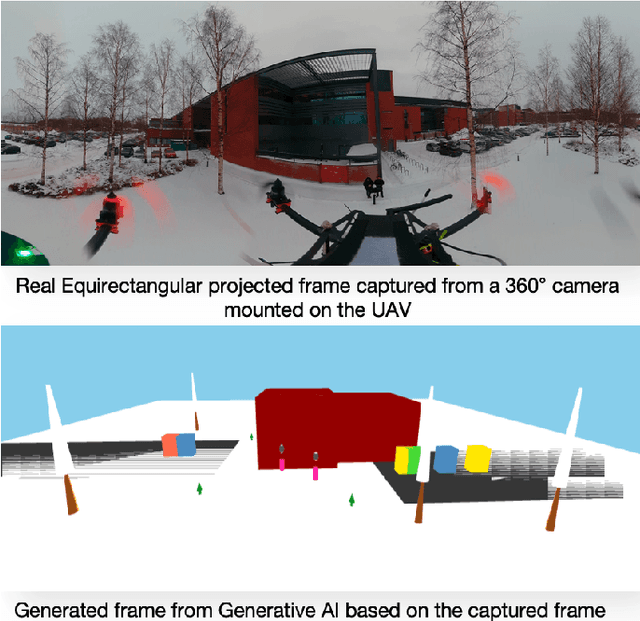

Generative AI for Immersive Communication: The Next Frontier in Internet-of-Senses Through 6G

Apr 02, 2024 · Nassim Sehad, Lina Bariah, Wassim Hamidouche, Hamed Hellaoui, Riku Jäntti, Mérouane Debbah

Over the past two decades, the Internet-of-Things (IoT) has been a transformative concept, and as we approach 2030, a new paradigm known as the Internet of Senses (IoS) is emerging. Unlike conventional Virtual Reality (VR), IoS seeks to provide multi-sensory experiences, acknowledging that in our physical reality, our perception extends far beyond just sight and sound; it encompasses a range of senses. This article explores existing technologies driving immersive multi-sensory media, delving into their capabilities and potential applications. This exploration includes a comparative analysis between conventional immersive media streaming and a proposed use case that leverages semantic communication empowered by generative Artificial Intelligence (AI). The focal point of this analysis is the substantial reduction in bandwidth consumption by 99.93% in the proposed scheme. Through this comparison, we aim to underscore the practical applications of generative AI for immersive media while addressing the challenges and outlining future trajectories.

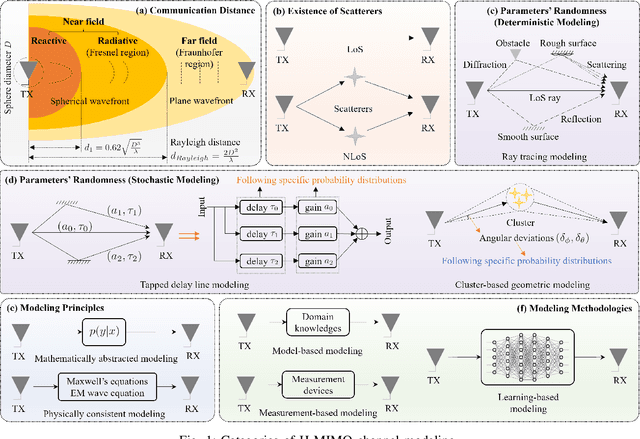

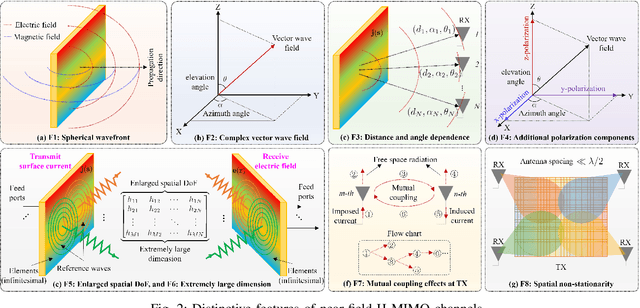

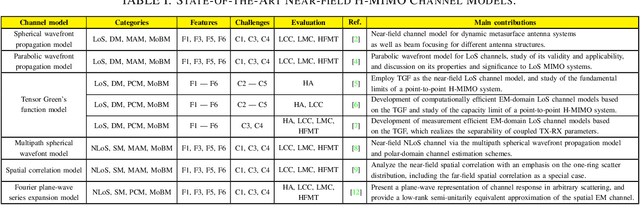

Near-Field Channel Modeling for Holographic MIMO Communications

Mar 16, 2024 · Tierui Gong, Li Wei, Chongwen Huang, George C. Alexandropoulos, Mérouane Debbah, Chau Yuen

Empowered by the latest progress on innovative metamaterials/metasurfaces and advanced antenna technologies, holographic multiple-input multiple-output (H-MIMO) emerges as a promising technology to fulfill the extreme goals of the sixth-generation (6G) wireless networks. The antenna arrays utilized in H-MIMO comprise massive (possibly to an extreme extent) numbers of antenna elements, densely spaced at less than half a wavelength and integrated into a compact space, realizing an almost continuous aperture. Thanks to the expected low cost, size, weight, and power consumption, such apertures are expected to be largely fabricated for near-field communications. In addition, the physical features of H-MIMO enable manipulations directly on the electromagnetic (EM) wave domain and spatial multiplexing. To fully leverage this potential, near-field H-MIMO channel modeling, especially from the EM perspective, is of paramount significance. In this article, we overview near-field H-MIMO channel models, elaborating on the various modeling categories and respective features, as well as their challenges and evaluation criteria. We also present EM-domain channel models that address the inherent computational and measurement complexities. Finally, the article concludes with a set of future research directions on the topic.

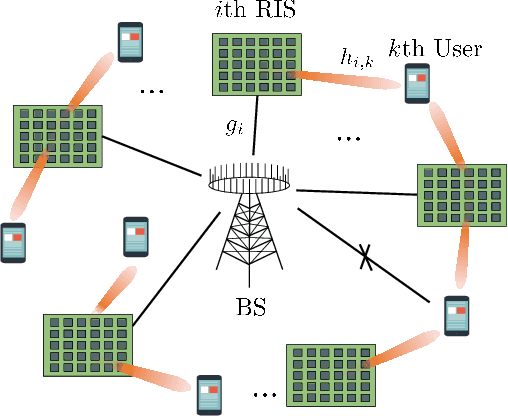

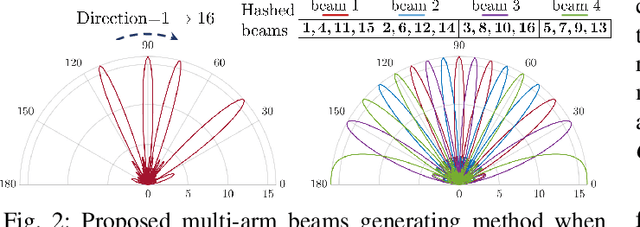

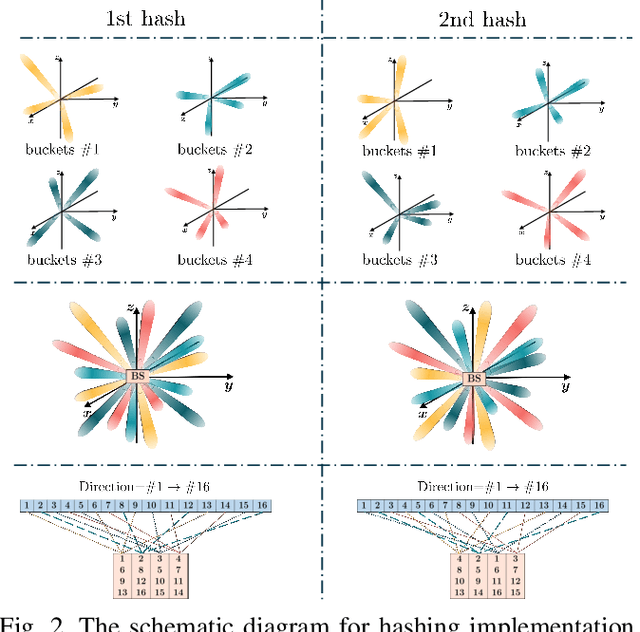

Low-Complexity Beam Training for Multi-RIS-Assisted Multi-User Communications

Mar 14, 2024 · Yuan Xu, Chongwen Huang, Wei Li, Zhaohui Yang, Xiaoming Chen, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

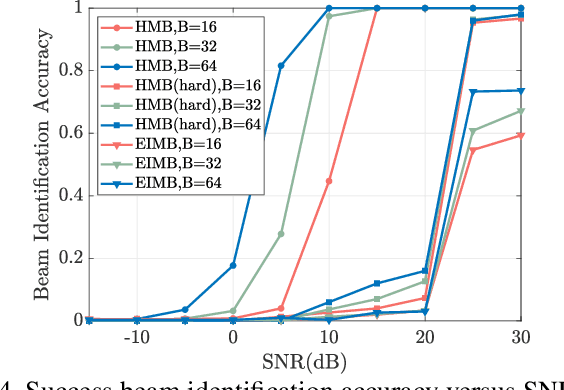

In this paper, we investigate the beam training problem in a multi-user millimeter wave (mmWave) communication system, where multiple reconfigurable intelligent surfaces (RISs) are deployed to improve the coverage and the achievable rate. However, existing beam training techniques in mmWave systems suffer from high complexity (i.e., exponential order) and low identification accuracy. To address these problems, we propose a novel hashing multi-arm beam (HMB) training scheme that reduces the training complexity to logarithmic order while maintaining high accuracy. Specifically, we first design a generation mechanism for HMB codebooks. Then, we propose a demultiplexing algorithm based on soft decision to distinguish signals from different RIS reflective links. Finally, we utilize a multi-round voting mechanism to align the beams. Simulation results show that the proposed HMB training scheme enables simultaneous training for multiple RISs and multiple users, and reduces the beam training overhead to the logarithmic level. Moreover, they show that our proposed scheme improves the identification accuracy by at least 20% compared to existing beam training techniques.
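The general idea of hashing directions into multi-arm codewords and voting across rounds can be illustrated with a toy sketch. This is not the authors' HMB algorithm: it assumes a single user, a single dominant path, noise-free power measurements, and a random balanced partition standing in for the hash function. The function name `hashed_beam_search` and all of its parameters are hypothetical.

```python
import random


def hashed_beam_search(num_dirs, codewords_per_round, num_rounds, true_dir, rng):
    """Toy hashed multi-arm beam search (illustrative assumption, not the paper's scheme).

    Each round randomly partitions the candidate directions into
    `codewords_per_round` multi-arm codewords. Scanning a codeword returns
    power 1.0 if it covers the true direction and 0.0 otherwise (noise-free
    idealization). Every direction accumulates the power of the codeword
    that covered it, and the highest-scoring direction wins.
    """
    scores = [0.0] * num_dirs
    measurements = 0
    for _ in range(num_rounds):
        # Random balanced "hash": shuffle the directions, then deal them out.
        order = list(range(num_dirs))
        rng.shuffle(order)
        codewords = [order[k::codewords_per_round] for k in range(codewords_per_round)]
        for codeword in codewords:
            power = 1.0 if true_dir in codeword else 0.0  # idealized measurement
            measurements += 1
            for direction in codeword:
                scores[direction] += power  # soft vote weighted by measured power
    best = max(range(num_dirs), key=scores.__getitem__)
    return best, measurements


if __name__ == "__main__":
    rng = random.Random(0)
    estimate, used = hashed_beam_search(256, 4, 12, true_dir=137, rng=rng)
    print(f"estimated direction: {estimate}, measurements used: {used} (exhaustive: 256)")
```

In this toy setting the true direction collects a full score in every round, while any other direction matches it only if it lands in the same codeword every time, which becomes exponentially unlikely as rounds are added. That is why the number of rounds, and hence the number of measurements, can grow roughly logarithmically with the number of candidate directions.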

Coverage and Rate Analysis for Integrated Sensing and Communication Networks

Mar 13, 2024 · Xu Gan, Chongwen Huang, Zhaohui Yang, Xiaoming Chen, Jiguang He, Zhaoyang Zhang, Chau Yuen, Yong Liang Guan, Mérouane Debbah

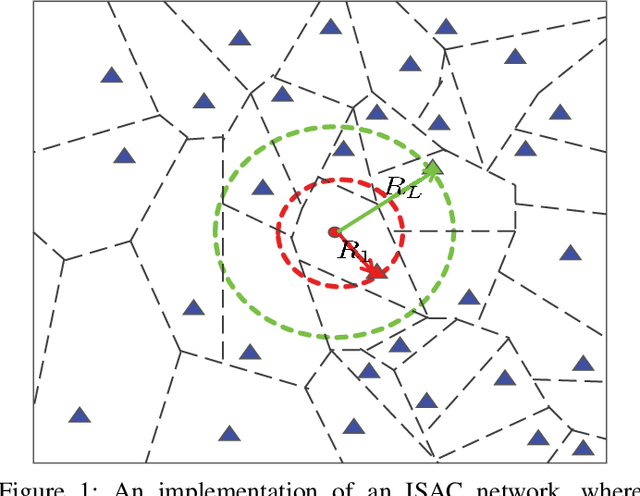

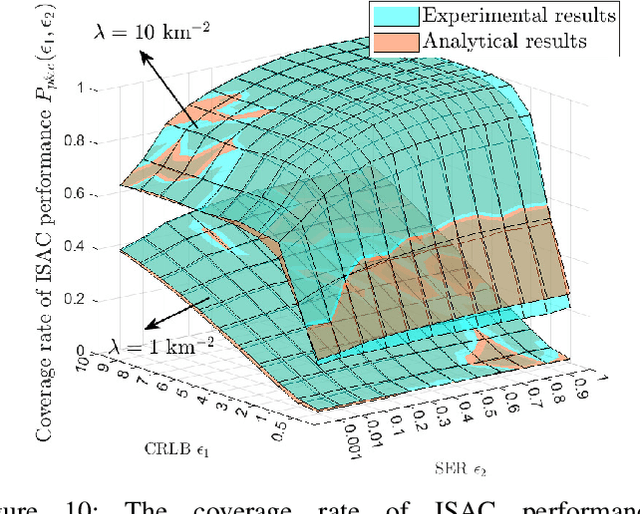

Integrated sensing and communication (ISAC) is increasingly recognized as a pivotal technology for next-generation cellular networks, offering mutual benefits in both sensing and communication capabilities. This advancement necessitates a re-examination of the fundamental limits within networks where these two functions coexist via shared spectrum and infrastructures. However, traditional stochastic geometry-based performance analyses are confined to either communication or sensing networks separately. This paper bridges this gap by introducing a generalized stochastic geometry framework in ISAC networks. Based on this framework, we define and calculate the coverage and ergodic rate of sensing and communication performance under resource constraints. Then, we shed light on the fundamental limits of ISAC networks by presenting theoretical results for the coverage rate of the unified performance, taking into account the coupling effects of dual functions in coexistence networks. Further, we obtain the analytical formulations for evaluating the ergodic sensing rate constrained by the maximum communication rate, and the ergodic communication rate constrained by the maximum sensing rate. Extensive numerical results validate the accuracy of all theoretical derivations, and also indicate that denser networks significantly enhance ISAC coverage. Specifically, increasing the base station density from $1$ $\text{km}^{-2}$ to $10$ $\text{km}^{-2}$ can boost the ISAC coverage rate from $1.4\%$ to $39.8\%$. Further, results also reveal that with the increase of the constrained sensing rate, the ergodic communication rate improves significantly, but the reverse is not obvious.

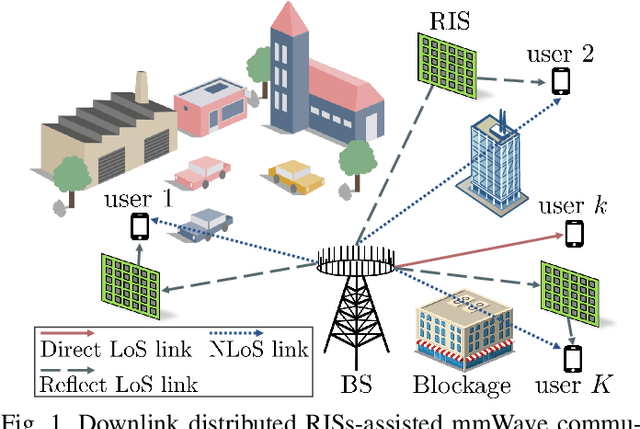

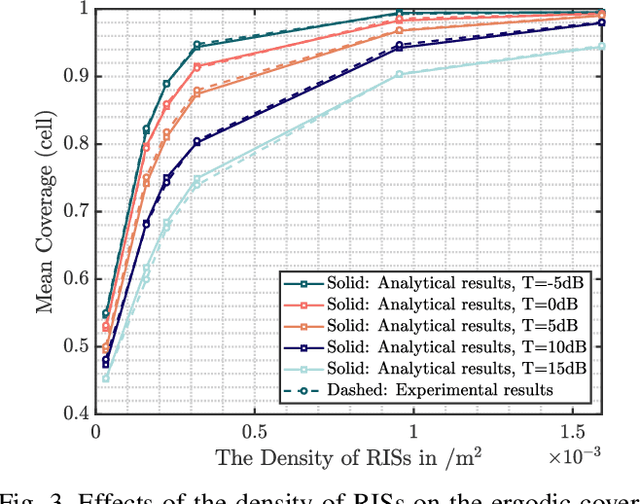

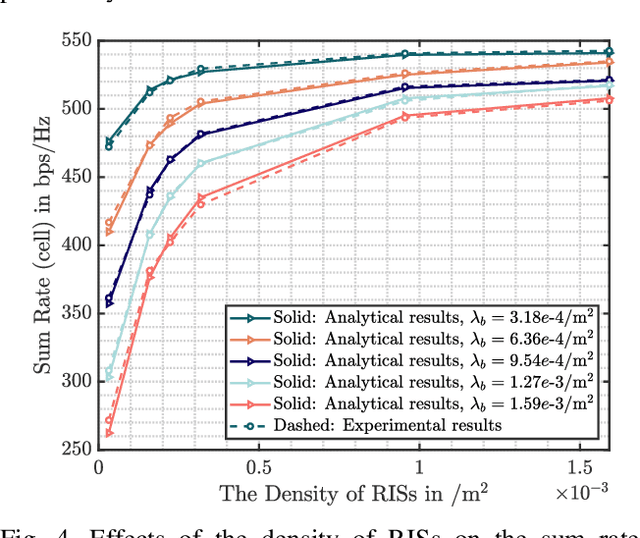

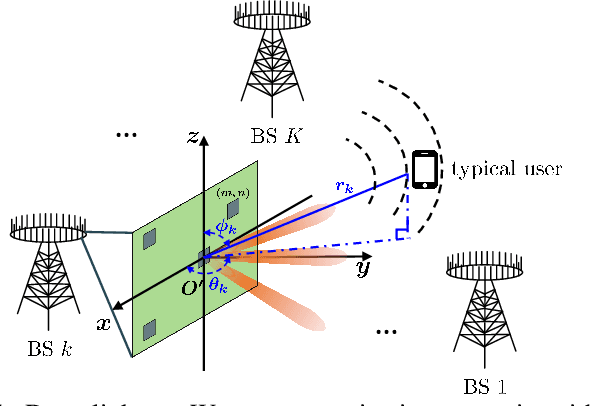

Stochastic Geometry Analysis for Distributed RISs-Assisted mmWave Communications

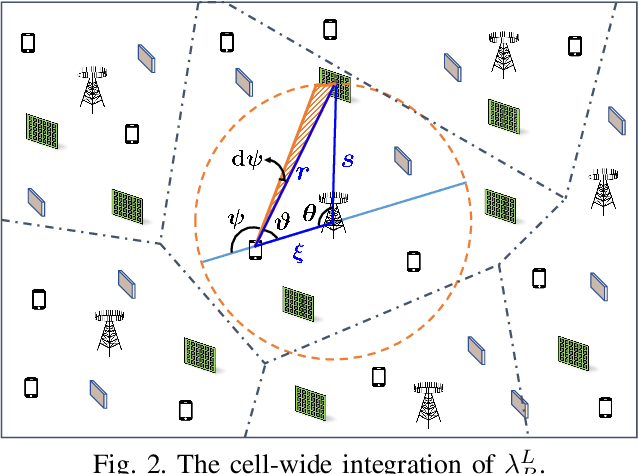

Mar 10, 2024 · Yuan Xu, Wei Li, Chongwen Huang, Yongxu Zhu, Zhaohui Yang, Jun Yang, Jiguang He, Zhaoyang Zhang, Mérouane Debbah

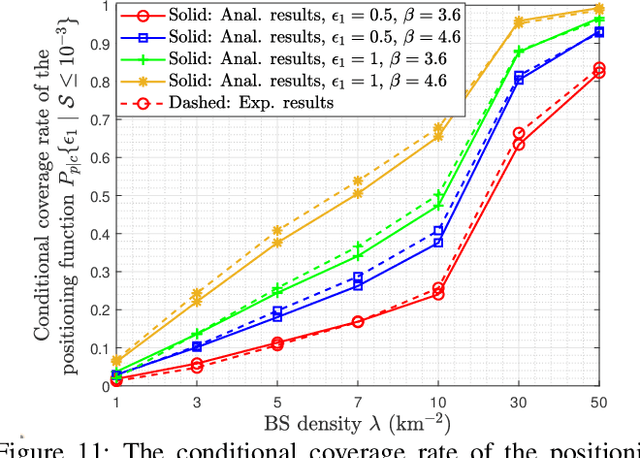

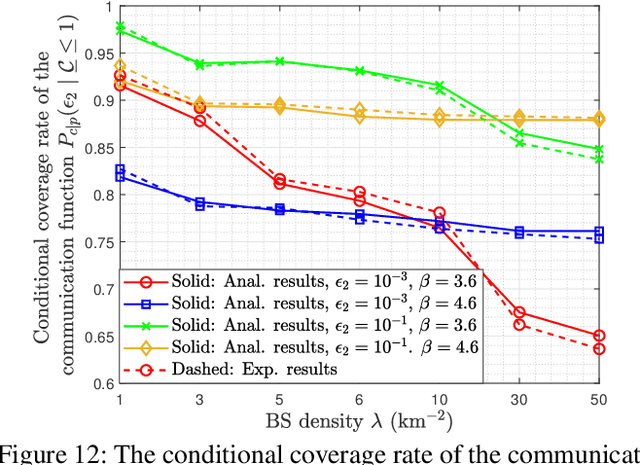

Millimeter wave (mmWave) has attracted considerable attention due to its wide bandwidth and high frequency. However, it is highly susceptible to blockages, resulting in significant degradation of the coverage and the sum rate. A promising approach is deploying distributed reconfigurable intelligent surfaces (RISs), which can establish extra communication links. In this paper, we investigate the impact of distributed RISs on the coverage probability and the sum rate in mmWave wireless communication systems. Specifically, we first introduce the system model, which includes the blockage, the RIS and the user distribution models, leveraging the Poisson point process. Then, we define the association criterion and derive the conditional coverage probabilities for the two cases of direct association and reflective association through RISs. Finally, we combine the two cases using Campbell's theorem and the total probability theorem to obtain the closed-form expressions for the ergodic coverage probability and the sum rate. Simulation results validate the effectiveness of the proposed analytical approach, demonstrating that the deployment of distributed RISs significantly improves the ergodic coverage probability by 45.4% and the sum rate by over 1.5 times.
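As background on the Poisson point process (PPP) underlying this kind of analysis, the following sketch samples a homogeneous PPP in a disk and checks the well-known void probability $\exp(-\lambda \pi r^2)$, i.e., the probability that no node lies within distance $r$ of the origin. It is a generic illustration under stated assumptions, not the paper's system model: the function names, the density of 1 node per unit area, and the window radius are all hypothetical choices.

```python
import math
import random


def sample_poisson(mean, rng):
    """Draw a Poisson(mean) count by counting unit-rate exponential arrivals in [0, mean]."""
    count = 0
    t = rng.expovariate(1.0)
    while t <= mean:
        count += 1
        t += rng.expovariate(1.0)
    return count


def sample_ppp_disk(density, radius, rng):
    """Sample a homogeneous PPP of the given density inside a disk centered at the origin."""
    n = sample_poisson(density * math.pi * radius ** 2, rng)
    points = []
    for _ in range(n):
        # The sqrt makes the points uniform over the disk area rather than the radius.
        r = radius * math.sqrt(rng.random())
        theta = 2.0 * math.pi * rng.random()
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


def empirical_void_probability(density, r, window_radius, trials, rng):
    """Estimate P(no point within distance r of the origin) by Monte Carlo."""
    empty = 0
    for _ in range(trials):
        points = sample_ppp_disk(density, window_radius, rng)
        if all(math.hypot(x, y) > r for x, y in points):
            empty += 1
    return empty / trials


if __name__ == "__main__":
    rng = random.Random(0)
    density, r = 1.0, 0.5
    estimate = empirical_void_probability(density, r, window_radius=2.0, trials=4000, rng=rng)
    theory = math.exp(-density * math.pi * r ** 2)
    print(f"empirical: {estimate:.3f}, theoretical: {theory:.3f}")
```

Closed-form coverage expressions in stochastic geometry are typically built from such distance distributions, for example the distribution of the distance to the nearest base station or RIS, combined with a blockage and path-loss model.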

Hashing Beam Training for Near-Field Communications

Mar 10, 2024 · Yuan Xu, Wei Li, Chongwen Huang, Chen Zhu, Zhaohui Yang, Jun Yang, Jiguang He, Zhaoyang Zhang, Mérouane Debbah

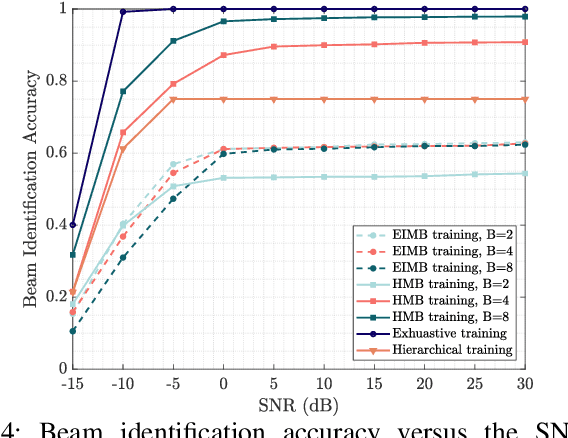

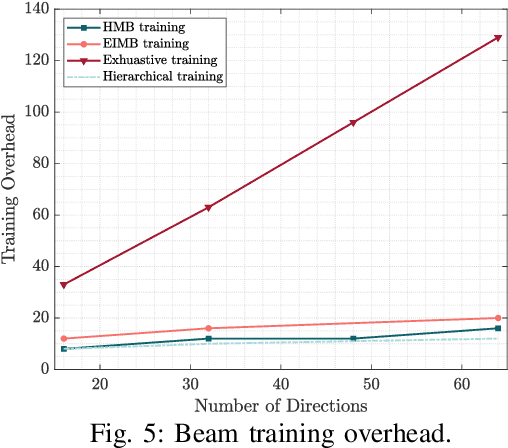

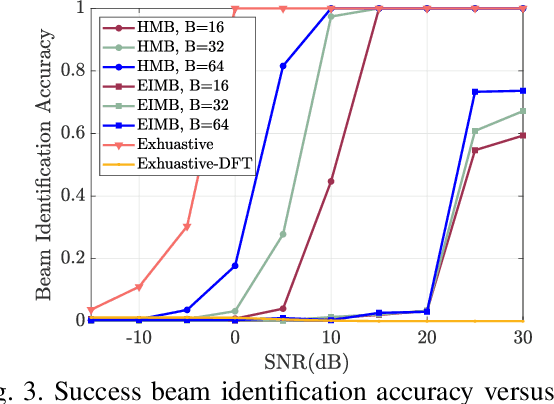

In this paper, we investigate the millimeter-wave (mmWave) near-field beam training problem to find the correct beam direction. To address the high complexity and low identification accuracy of existing beam training techniques, we propose an efficient hashing multi-arm beam (HMB) training scheme for the near-field scenario. Specifically, we first design a set of sparse bases based on the polar-domain sparsity of the near-field channel. Then, random hash functions are chosen to construct the near-field multi-arm beam training codebook. Each multi-arm beam codeword is scanned in a time slot until all the predefined codewords are traversed. Finally, the soft decision and voting methods are applied to distinguish the signals from different base stations and obtain correctly aligned beams. Simulation results show that our proposed near-field HMB training method can reduce the beam training overhead to the logarithmic level, and achieve 96.4% identification accuracy of exhaustive beam training. Moreover, we also verify its applicability in the far-field scenario.

Stacked Intelligent Metasurface Enabled LEO Satellite Communications Relying on Statistical CSI

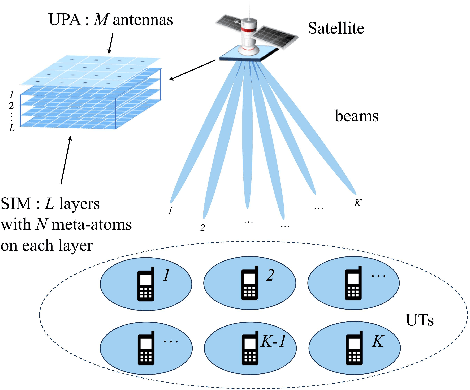

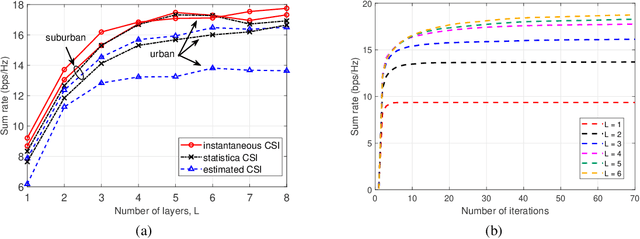

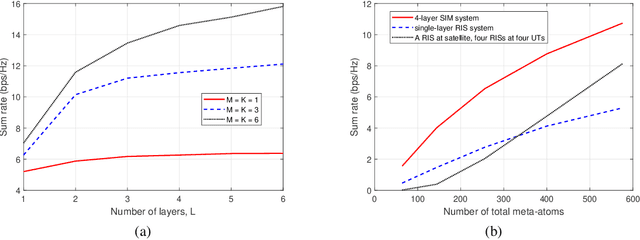

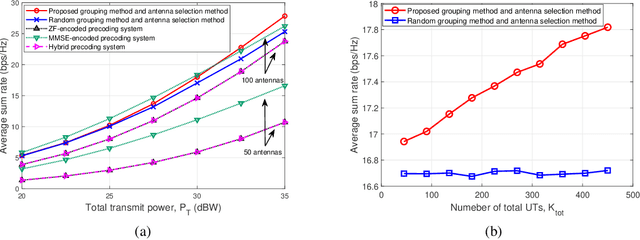

Mar 09, 2024 · Shining Lin, Jiancheng An, Lu Gan, Mérouane Debbah, Chau Yuen

Low earth orbit (LEO) satellite communication systems have gained increasing attention as a crucial supplement to terrestrial wireless networks due to their extensive coverage area. This letter presents a novel system design for LEO satellite systems by leveraging stacked intelligent metasurface (SIM) technology. Specifically, the lightweight and energy-efficient SIM is mounted on a satellite to achieve multiuser beamforming directly in the electromagnetic wave domain, which substantially reduces the processing delay and computational load of the satellite compared to the traditional digital beamforming scheme. To overcome the challenges of obtaining instantaneous channel state information (CSI) at the transmitter and maximize the system's performance, a joint power allocation and SIM phase shift optimization problem for maximizing the ergodic sum rate is formulated based on statistical CSI, and an alternating optimization (AO) algorithm is customized to solve it efficiently. Additionally, a user grouping method based on channel correlation and an antenna selection algorithm are proposed to further improve the system performance. Simulation results demonstrate the effectiveness of the proposed SIM-based LEO satellite system design and statistical CSI-based AO algorithm.

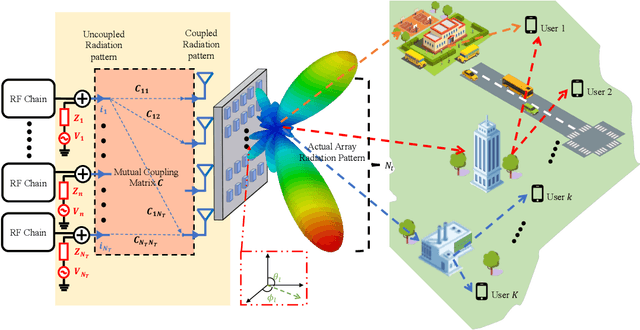

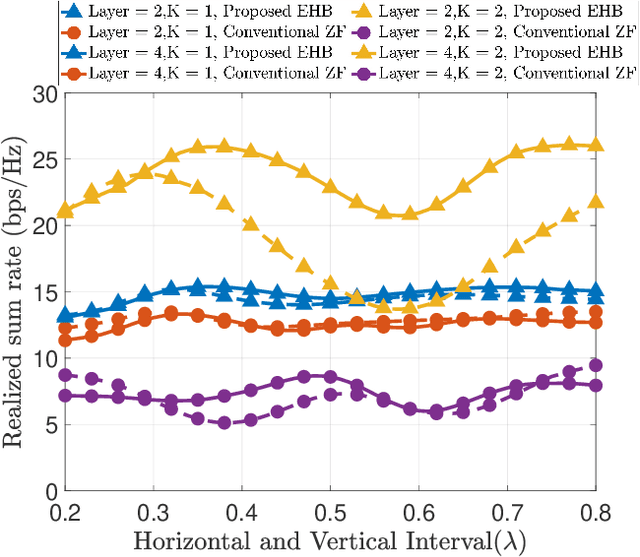

Electromagnetic Hybrid Beamforming for Holographic Communications

Mar 09, 2024 · Ran Ji, Chongwen Huang, Xiaoming Chen, Wei E. I. Sha, Linglong Dai, Jiguang He, Zhaoyang Zhang, Chau Yuen, Mérouane Debbah

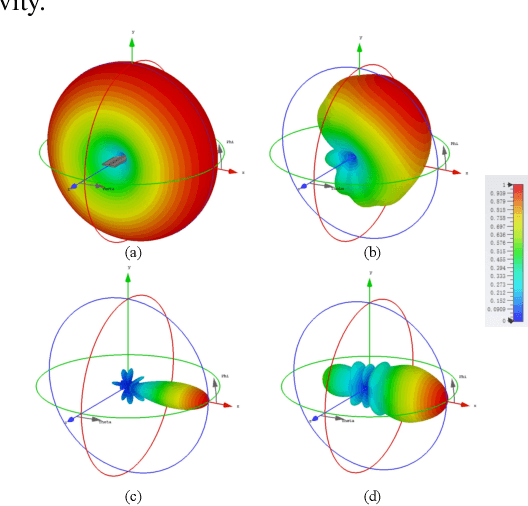

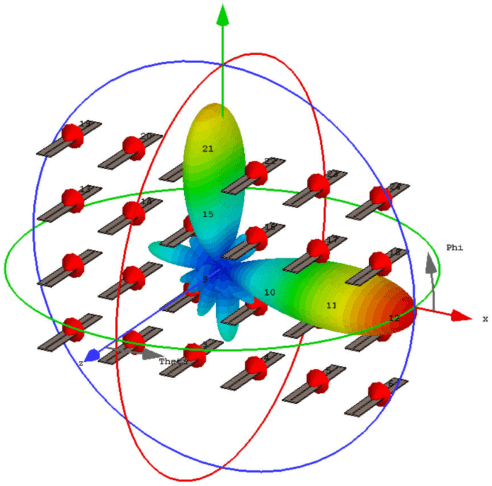

It is well known that there is inherent radiation pattern distortion for the commercial base station antenna array, which usually needs three antenna sectors to cover the whole space. To eliminate pattern distortion and further enhance beamforming performance, we propose an electromagnetic hybrid beamforming (EHB) scheme based on a three-dimensional (3D) superdirective holographic antenna array. Specifically, EHB consists of antenna excitation current vectors (analog beamforming) and digital precoding matrices, where the implementation of analog beamforming involves the real-time adjustment of the radiation pattern to adapt it to the dynamic wireless environment. Meanwhile, the digital beamforming is optimized based on the channel characteristics of analog beamforming to further improve the achievable rate of communication systems. An electromagnetic channel model incorporating array radiation patterns and the mutual coupling effect is also developed to evaluate the benefits of our proposed scheme. Simulation results demonstrate that our proposed EHB scheme with a 3D holographic array achieves a relatively flat superdirective beamforming gain and allows for programmable focusing directions throughout the entire spatial domain. Furthermore, they also verify that the proposed scheme achieves a sum rate gain of over 150% compared to traditional beamforming algorithms.
